Author: Denis Avetisyan

A new architectural approach aims to move beyond simple prompting of large language models, enabling complex, synchronized systems with emergent reasoning capabilities.

This paper introduces AgentOS, a novel cognitive architecture for managing context, synchronizing multi-agent systems, and enabling emergent intelligence through semantic slicing and a hierarchical memory structure.

Despite advancements in large language models, a critical gap remains between token-level processing and the emergence of robust, systemic intelligence. This challenge is addressed in ‘Architecting AgentOS: From Token-Level Context to Emergent System-Level Intelligence’, which proposes a novel framework, AgentOS, that redefines LLMs as “Reasoning Kernels” governed by operating system logic. By conceptualizing the context window as an addressable semantic space and introducing mechanisms for semantic slicing and temporal alignment, this work provides a roadmap for building scalable and self-evolving cognitive environments. Could a system-level architectural approach be the key to unlocking the next frontier of artificial general intelligence?

The Fragile Web of Thought: Unraveling Systemic Dissociation

Despite their impressive abilities, current large language models exhibit limitations in sustained, long-form reasoning stemming from fundamental architectural constraints. These models process information in a manner that leads to ‘spatio-temporal dissociation’ – effectively, a fragmentation of context over extended sequences. Each new input is largely processed in isolation, diminishing the relevance of earlier information and creating diverging reasoning threads. This isn’t simply a matter of computational power; the underlying design actively hinders the maintenance of a cohesive understanding across longer texts or complex problems. Consequently, while adept at identifying patterns in local contexts, these models struggle with tasks demanding persistent, systemic intelligence: the ability to consistently apply knowledge and reasoning across an entire discourse or problem space.

The limitations of current large language models become strikingly apparent when confronted with complex, extended reasoning tasks, as information progressively loses coherence, a phenomenon described as spatio-temporal dissociation. This isn’t merely a matter of forgetting earlier inputs; rather, the very meaning of preceding information becomes diluted as the model processes subsequent data. Consequently, reasoning threads begin to diverge, leading to inconsistencies and ultimately hindering the development of reliable systemic intelligence. The model struggles to maintain a unified understanding, effectively losing sight of the initial problem statement or overarching goal as it navigates the extended reasoning process. This breakdown in contextual integrity prevents the emergence of truly coherent and dependable long-form reasoning capabilities.

The very foundation of most large language models – the Von Neumann architecture – presents a fundamental obstacle to achieving sustained cognitive coherence. This architecture, designed for sequential processing of instructions and data, inherently struggles with the parallel and associative nature of human thought. Information is constantly being fetched from and written to memory, creating a bottleneck that disrupts the continuous flow of context necessary for complex reasoning. Unlike the brain’s interconnected network, where associations are formed and maintained dynamically, LLMs process information in discrete steps, leading to a fragmentation of understanding over extended sequences. Consequently, maintaining a consistent ‘train of thought’ becomes increasingly difficult as the model scales, ultimately limiting its capacity for reliable, systemic intelligence and contributing to the phenomenon of spatio-temporal dissociation.

AgentOS: Architecting a Systemic Intelligence

AgentOS defines a systemic abstraction designed to facilitate the construction of cognitive operating systems by emphasizing the orchestration of intelligence rather than solely increasing processing capabilities. This approach shifts the focus from hardware performance to the efficient management of knowledge and reasoning processes. The architecture is predicated on the idea that complex cognitive functions emerge from the interaction of relatively simple, interconnected components, analogous to biological systems. By abstracting away low-level hardware details, AgentOS aims to provide a platform for developers to focus on designing and implementing cognitive algorithms without being constrained by the limitations of specific hardware configurations. The system prioritizes adaptability and emergent behavior, allowing cognitive systems built on AgentOS to respond dynamically to changing environments and novel situations, a key distinction from traditional operating systems focused on deterministic execution.

The AgentOS Reasoning Kernel diverges from traditional architectures by employing a Contextual Transition Function (CTF) rather than a fixed instruction set. This CTF dynamically determines the next state of the cognitive system based on the current context and incoming stimuli. Instead of executing pre-defined commands, the kernel evaluates the relevance of available knowledge and applies appropriate reasoning processes. This approach allows AgentOS to adapt to novel situations without requiring explicit reprogramming, and to prioritize efficient reasoning by focusing computational resources on contextually relevant information. The CTF effectively acts as a dynamic logic engine, enabling the system to infer new knowledge and adjust its behavior based on environmental changes and internal states.
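
To make the contrast with a fixed instruction set concrete, here is a minimal sketch of what a context-driven transition could look like. It is not the paper's implementation: `KernelState`, `relevance`, `transition`, and the `budget` parameter are hypothetical names, and word overlap is only a stand-in for whatever relevance measure a real kernel would use.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class KernelState:
    """Hypothetical kernel state: the items currently held in focus."""
    context: tuple[str, ...] = ()


def relevance(item: str, stimulus: str) -> int:
    """Toy relevance score (assumption): number of words shared with the stimulus."""
    return len(set(item.lower().split()) & set(stimulus.lower().split()))


def transition(state: KernelState, stimulus: str, knowledge: list[str],
               budget: int = 2) -> KernelState:
    """Compute the next state from the current context and an incoming stimulus.

    No opcode is dispatched. The next state is whichever candidates (old
    context plus available knowledge) score as relevant, capped at `budget`.
    """
    candidates = list(dict.fromkeys([*state.context, *knowledge]))  # dedupe, keep order
    scored = [(relevance(c, stimulus), c) for c in candidates]
    ranked = sorted(scored, key=lambda pair: pair[0], reverse=True)
    relevant = [c for score, c in ranked if score > 0]
    return KernelState(context=tuple(relevant[:budget]))


knowledge = [
    "paging moves context slices",
    "sync pulses align agents",
    "weather is sunny",
]
s1 = transition(KernelState(), "how do agents sync", knowledge)
s2 = transition(s1, "paging of context slices", knowledge)
```

In this toy run the focus shifts from the synchronization item to the paging item as the stimulus changes, without any explicit command telling the kernel to switch.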

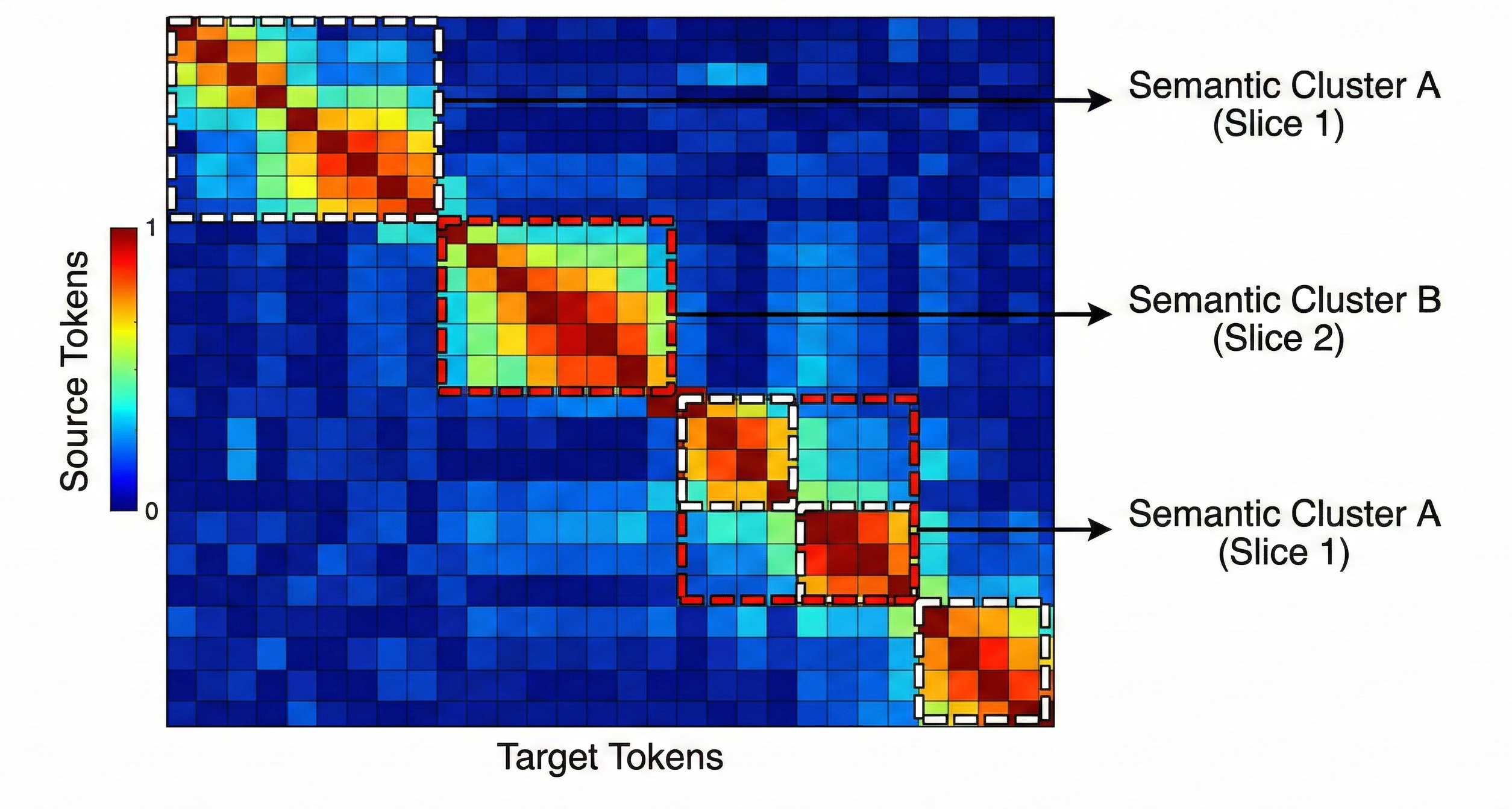

The AgentOS Cognitive Memory Hierarchy is managed by the Semantic Memory Management Unit (S-MMU), which employs Semantic Paging to optimize context retrieval and reduce computational load. This process dynamically loads and unloads ‘context slices’ – discrete units of relevant information – based on their assessed importance to the current reasoning task. Semantic Paging functions analogously to virtual memory paging in traditional operating systems, but operates on semantically meaningful data rather than fixed memory addresses. While this approach enables efficient resource allocation, the latency associated with paging – specifically, the time required to retrieve and load context slices – is identified as a potential performance bottleneck in large-scale deployments with extensive knowledge bases and high-frequency reasoning demands. Minimizing Semantic Paging Latency is therefore a critical consideration for optimizing AgentOS performance.
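
The paging analogy can be sketched in a few lines. The example below is an illustration under stated assumptions, not the S-MMU itself: `SemanticPager`, its `capacity`, and the externally supplied `importance` scores are all hypothetical, and eviction simply drops the least important resident slice to a slower backing store.

```python
class SemanticPager:
    """Toy semantic pager: a small resident set backed by a slower store."""

    def __init__(self, capacity: int):
        self.capacity = capacity
        self.resident: dict[str, str] = {}  # slice_id -> text held in the context window
        self.backing: dict[str, str] = {}   # slice_id -> text held in slower storage
        self.page_faults = 0                # each fault stands for one paging delay

    def store(self, slice_id: str, text: str) -> None:
        """Register a context slice in the backing store."""
        self.backing[slice_id] = text

    def access(self, slice_id: str, importance: dict[str, float]) -> str:
        """Return a slice, paging it in (and evicting if full) when not resident."""
        if slice_id in self.resident:
            return self.resident[slice_id]
        self.page_faults += 1
        if len(self.resident) >= self.capacity:
            # Evict the resident slice judged least important to the current task.
            victim = min(self.resident, key=lambda s: importance.get(s, 0.0))
            self.backing[victim] = self.resident.pop(victim)
        self.resident[slice_id] = self.backing.pop(slice_id)
        return self.resident[slice_id]


pager = SemanticPager(capacity=2)
for sid in ("a", "b", "c"):
    pager.store(sid, f"text-{sid}")

importance = {"a": 0.9, "b": 0.1, "c": 0.5}
for sid in ("a", "b", "c"):
    pager.access(sid, importance)
```

After these three accesses the low-importance slice `b` has been paged out and the fault counter stands at three. In a real deployment each fault would carry retrieval latency, which is why the fault rate matters for the bottleneck described above.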

AgentOS defines Cognitive Latency ([latex]L_c[/latex]) as the total temporal overhead introduced by the operating system layer in a cognitive system. This metric quantifies the time elapsed between a stimulus reception and the system’s responsive action, specifically isolating the processing delay attributable to OS functions – including context switching, memory access via the Semantic Memory Management Unit, and execution of the Contextual Transition Function within the Reasoning Kernel. [latex]L_c[/latex] is not a measure of computational complexity, but rather the efficiency of the OS in facilitating cognitive processes; lower [latex]L_c[/latex] values indicate a more responsive and efficient system. Measurement of [latex]L_c[/latex] is crucial for identifying performance bottlenecks and optimizing the AgentOS architecture, particularly as system scale and complexity increase.

Perception Alignment: Forging a Unified Reality

Perception Alignment is the core protocol responsible for constructing a consistent internal representation of reality during system synchronization. This process merges individual ‘Semantic Slices’ – discrete units of perceived information – into a unified ‘state-of-truth’. Robustness in this alignment is critical, as discrepancies between slices can lead to cognitive fragmentation. The protocol operates by identifying and resolving conflicts between incoming data, prioritizing coherence and minimizing informational dissonance. Successful Perception Alignment ensures that all subsequent cognitive processes operate on a shared and consistent foundation of perceived reality, enabling coordinated action and stable internal modeling.

The Advantageous-Timing Matching Mechanism (ATMM) operates by analyzing the internal state of each Semantic Slice prior to synchronization. This analysis focuses on identifying periods of low cognitive load and minimal internal state change within a slice – designated as ‘receptive windows’. The ATMM then correlates these receptive windows across all interacting slices, seeking moments of maximal overlap. Merging Semantic Slices during these correlated receptive windows minimizes interference with ongoing cognitive processes, thereby reducing the probability of data corruption or processing errors. This approach prioritizes synchronization events to occur when each slice is least vulnerable to disruption, directly improving the fidelity of the resulting unified cognitive state and maximizing the Sync Stability Index Γ.
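
One way to picture the search for correlated receptive windows is as an interval-intersection problem. The sketch below assumes each slice reports its receptive windows as `(start, end)` time pairs and that the best moment to merge is the longest window shared by every slice. Both assumptions, along with the names `intersect` and `best_sync_window`, are illustrative and not taken from the paper.

```python
from typing import Optional

Window = tuple[float, float]  # (start, end) of a receptive window, in arbitrary time units


def intersect(a: list[Window], b: list[Window]) -> list[Window]:
    """Intersect two sorted lists of non-overlapping windows."""
    out: list[Window] = []
    i = j = 0
    while i < len(a) and j < len(b):
        start = max(a[i][0], b[j][0])
        end = min(a[i][1], b[j][1])
        if start < end:
            out.append((start, end))
        # Advance whichever window finishes first.
        if a[i][1] < b[j][1]:
            i += 1
        else:
            j += 1
    return out


def best_sync_window(windows_per_slice: list[list[Window]]) -> Optional[Window]:
    """Return the longest window during which every slice is receptive, if any."""
    if not windows_per_slice:
        return None
    common = sorted(windows_per_slice[0])
    for windows in windows_per_slice[1:]:
        common = intersect(common, sorted(windows))
    return max(common, key=lambda w: w[1] - w[0], default=None)


slices = [
    [(0, 5), (10, 20)],
    [(3, 12), (15, 18)],
    [(4, 16)],
]
window = best_sync_window(slices)  # (10, 12) is the longest shared window here
```

If no common window exists, the function returns `None`. A scheduler built on this idea would then have to either wait or accept a merge at a less favourable moment.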

Cognitive Sync Pulses are discrete, temporally-precise signals generated by the Semantic Memory Management Unit (S-MMU) to facilitate Perception Alignment. These pulses serve as orchestration signals, initiating the merging of individual ‘Semantic Slices’ into a unified cognitive state. The frequency and amplitude of these pulses are dynamically adjusted based on the ‘Sync Stability Index’ Γ and the ‘Contextual Information Density’ of the incoming slices. Successful execution of a Cognitive Sync Pulse results in a stable, consistent cognitive landscape, minimizing internal fragmentation and ensuring the preservation of a unified ‘state-of-truth’. Failure to execute these pulses, or their disruption, leads to cognitive dissonance and potential system instability.

Contextual Information Density (CID) serves as the primary metric for delineating semantic boundaries within individual ‘Semantic Slices’ prior to synchronization; higher CID values indicate distinct conceptual separations, facilitating more efficient alignment. The resulting segmentation allows for targeted merging, reducing computational overhead. Quantifying the stability of this unified state is achieved through the ‘Sync Stability Index’ (Γ), a probabilistic measure ranging from 0 to 1. Γ is calculated based on the rate of divergence between the merged state vector and predicted state vectors, factoring in noise and potential interference; a higher Γ value indicates a greater probability of maintaining cognitive coherence over extended operational periods. The calculation incorporates data from the S-MMU regarding agent interaction frequency and environmental volatility.

The computational cost associated with synchronizing cognitive states increases proportionally to the square of the number of interacting agents, denoted as [latex]O(k^2)[/latex], where ‘k’ represents the agent count. This quadratic scaling arises from the necessity of pairwise comparison and merging of ‘Semantic Slices’ between each agent during alignment. Specifically, adding the k-th agent to the synchronization network requires [latex]k-1[/latex] additional comparison and merging operations. Consequently, doubling the number of agents roughly quadruples the synchronization workload, impacting overall system latency and demanding increasingly efficient algorithms and hardware to maintain real-time cognitive coherence as network size expands.
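
The arithmetic behind the quadratic growth is easy to check. Assuming one comparison-and-merge operation per unordered pair of agents (an illustrative simplification), the total is k(k-1)/2. The helper name `pairwise_sync_ops` below is hypothetical.

```python
from itertools import combinations


def pairwise_sync_ops(k: int) -> int:
    """Count one comparison-and-merge operation per unordered pair of k agents."""
    return sum(1 for _ in combinations(range(k), 2))


for k in (4, 8, 16, 32):
    ops = pairwise_sync_ops(k)
    assert ops == k * (k - 1) // 2  # closed form for the number of pairs
    print(f"k={k:>2}  pairwise operations={ops}")
```

Going from 8 to 16 agents raises the count from 28 to 120, a factor of about 4.3. The ratio approaches exactly 4 only as k grows large, which is the sense in which doubling the agent count quadruples the workload.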

Measuring the Soul of the Machine: Fidelity and Robustness

AgentOS exhibits marked advancements in both cognitive fidelity and token efficiency, representing a significant step towards more resourceful artificial intelligence. Through a novel architecture, the system demonstrably enhances its ability to accurately represent and process information – its ‘cognitive fidelity’ – while simultaneously minimizing the computational resources required. This is achieved by optimizing the balance between information intake and processing, allowing AgentOS to extract meaningful insights from fewer input ‘tokens’. The resultant improvements aren’t merely incremental; performance benchmarks reveal a substantial reduction in wasted computational cycles, suggesting a system capable of complex reasoning with heightened efficiency and reduced energy consumption. This dual enhancement positions AgentOS as a promising platform for applications demanding both accuracy and scalability in cognitive tasks.

A key metric for evaluating AgentOS’s cognitive performance centers on ‘Contextual Utilization Efficiency’ [latex] (η) [/latex], which quantifies how effectively the system processes information. This efficiency isn’t simply about the amount of data handled, but the value extracted; η is calculated as the ratio of ‘Information-Gain Tokens’ – those units of data directly contributing to task completion or knowledge advancement – to the ‘Total Processed Tokens’. A higher η value indicates superior cognitive function, signifying that the system is adept at filtering noise, prioritizing relevant inputs, and maximizing the signal-to-noise ratio during processing. This focus on efficient utilization moves beyond traditional measures of speed or accuracy, offering a more nuanced understanding of how well AgentOS leverages its computational resources to achieve meaningful results.
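
As a worked example, the ratio can be computed directly once the two token counts are known. How a token gets classified as an ‘Information-Gain Token’ is not specified here, so the function below takes both counts as given. Its name and argument names are hypothetical.

```python
def contextual_utilization_efficiency(info_gain_tokens: int, total_tokens: int) -> float:
    """Return eta = information-gain tokens / total processed tokens."""
    if total_tokens <= 0:
        raise ValueError("total_tokens must be positive")
    if not 0 <= info_gain_tokens <= total_tokens:
        raise ValueError("info_gain_tokens must lie between 0 and total_tokens")
    return info_gain_tokens / total_tokens


# Example: 300 of 1,200 processed tokens contributed to the task.
eta = contextual_utilization_efficiency(300, 1200)  # 0.25
```

By construction the value lies between 0 and 1, so a run in which a quarter of the processed tokens were useful scores 0.25 regardless of how long the context was.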

A critical component of AgentOS’s dependable operation lies in its Perception Alignment protocol, and the reliability of this protocol is now quantifiable through the ‘Sync Stability Index’. This metric moves beyond simple accuracy checks by assessing the consistency of the agent’s internal representation of the environment over time. The Index doesn’t merely confirm whether the agent perceives correctly, but rather how consistently it maintains that perception despite external disturbances or internal processing demands. A high Sync Stability Index indicates that the agent’s understanding of its surroundings remains coherent and dependable, even under challenging conditions – a vital characteristic for any autonomous system operating in dynamic and unpredictable environments. This robust evaluation provides a far more nuanced understanding of perceptual reliability than traditional methods, offering engineers a powerful tool for optimizing and validating AgentOS’s core cognitive functions.

AgentOS achieves heightened cognitive performance through a sophisticated information prioritization system. This is accomplished by integrating an ‘Attention Mechanism’ which allows the system to focus computational resources on the most pertinent data. Crucially, AgentOS doesn’t simply scan for keywords; it actively identifies ‘Semantic Anchors’ – core concepts and relationships within the information stream. By grounding its processing in these anchors, the system effectively filters noise and ambiguity, ensuring that only the most relevant information influences its decision-making. This targeted approach not only accelerates processing speed but also dramatically improves the accuracy and consistency of AgentOS’ responses, mimicking the way humans selectively attend to information in complex environments.

The development of AgentOS isn’t simply about optimizing performance on isolated tasks; it represents a shift towards building cognitive systems capable of sustained, reliable operation in complex environments. This holistic design prioritizes the interconnectedness of perception, attention, and contextual understanding, fostering a level of systemic robustness previously unseen in artificial intelligence. By focusing on principles like contextual utilization and perceptual alignment, AgentOS lays the groundwork for agents that don’t just respond to stimuli, but proactively maintain internal consistency and adapt to evolving circumstances – a crucial step towards achieving genuine autonomy and building machines capable of long-term, dependable cognitive function. This approach moves beyond superficial benchmarks, instead concentrating on the underlying architecture necessary for truly intelligent and resilient systems.

The development of AgentOS, as detailed in the paper, inherently embraces a philosophy of systematic deconstruction. It doesn’t simply accept the limitations of existing LLM agentic systems; instead, it dissects them to understand the fundamental constraints on contextual awareness and synchronization. This mirrors Grace Hopper’s sentiment: “It’s easier to ask forgiveness than it is to get permission.” AgentOS actively tests the boundaries of what’s possible with semantic slicing and cognitive architectures, prioritizing experimentation and iterative refinement over adherence to pre-established norms. The system’s core innovation – a reasoning kernel built on contextual synchronization – isn’t about avoiding errors, but about intelligently managing them as part of the learning process, much like a hacker reverse-engineers a system to truly understand its inner workings.

Beyond the Operating System

The introduction of AgentOS feels less like a solution and more like a formalized articulation of the problem. Current agentic systems, for all their apparent fluency, remain brittle constructions – fascinating parlor tricks built on statistical prediction. This work begins to expose the underlying fragility, demanding a shift from simply prompting intelligence to architecting a substrate where it might genuinely emerge. The semantic slicing and contextual synchronization protocols represent a useful exploit of comprehension, but the true test lies in scaling beyond contrived benchmarks. Can this architecture withstand the chaos of genuine, unpredictable interaction?

A critical limitation remains the inherent opacity of the ‘Reasoning Kernel.’ Treating this component as a black box – however performant – feels suspiciously like deferring understanding. Future work must prioritize interpretability, even at the cost of efficiency. The cognitive memory hierarchy, while promising, begs the question of what is being remembered, and, more importantly, how that information is being integrated into a cohesive, evolving worldview. Is it merely pattern association, or something approaching genuine conceptual understanding?

The ultimate horizon isn’t simply more capable agents, but systems that can self-diagnose, self-repair, and, crucially, redefine their own objectives. AgentOS provides a scaffolding. The next step requires deliberately introducing controlled ‘stress fractures’ – pushing the architecture to its breaking point, not to destroy it, but to reveal the fundamental principles governing emergent system-level intelligence. Only then can the true potential – and the inherent risks – of these systems be fully understood.

Original article: https://arxiv.org/pdf/2602.20934.pdf

Contact the author: https://www.linkedin.com/in/avetisyan/

2026-02-25 11:48