Author: Denis Avetisyan

A new reinforcement learning framework enables humanoid robots to acquire versatile motor skills by combining reference guidance with goal-conditioned learning.

This work presents a multi-task approach to decouple motion quality from specific demonstrations, enhancing generalization in complex robotic systems.

Achieving both robust motor skills and adaptable behavior remains a central challenge in humanoid robotics. This is addressed in ‘Generalizing from References using a Multi-Task Reference and Goal-Driven RL Framework’, which introduces a novel reinforcement learning approach that bridges the gap between reference-guided imitation and goal-conditioned generalization. By co-optimizing these objectives within a shared policy, the framework learns structured, human-like movements while retaining the flexibility to adapt to novel situations without relying on explicit trajectory tracking. Could this unified approach unlock more natural and versatile control strategies for complex robotic systems operating in dynamic environments?

The Inevitable Complexity of Control

Achieving stable and nuanced control of humanoid robots presents a significant engineering challenge, largely due to the inherent complexities of both the robot’s mechanics and the unpredictable nature of real-world environments. Traditional control strategies, often relying on pre-programmed sequences or meticulously tuned feedback loops, falter when confronted with uneven terrain, unexpected disturbances, or even slight variations in the robot’s own physical parameters. These methods struggle to account for the numerous interacting forces and moments required for balanced locomotion and manipulation, leading to jerky movements, instability, and ultimately, an inability to reliably perform tasks in dynamic settings. The high dimensionality of the control problem – managing dozens of joints simultaneously – further exacerbates these difficulties, demanding approaches capable of adapting to unforeseen circumstances and maintaining equilibrium amidst constant change.

The sheer complexity of coordinating a humanoid robot’s numerous joints and actuators renders manual programming of even basic movements exceptionally difficult. Attempting to define every nuance of a gait, for example, would require painstakingly crafting a vast and brittle set of instructions, incapable of adapting to uneven terrain or unexpected disturbances. Consequently, researchers are increasingly turning to learning-based methods, particularly deep reinforcement learning, to allow robots to discover effective control strategies through trial and error. This approach bypasses the need for explicit programming, instead framing the control problem as a sequential decision-making process where the robot learns to maximize a reward signal by interacting with its environment – effectively teaching itself to move, balance, and manipulate objects without precise, pre-defined instructions.

A significant obstacle to deploying reinforcement learning on complex humanoid robots lies in its inherent inefficiency and susceptibility to instability. Traditional, or ‘naive’, reinforcement learning algorithms typically require an enormous number of interactions with the environment – countless trials and errors – to learn even relatively simple behaviors. This poses a major challenge for physical robots, as real-world interactions are time-consuming, potentially damaging to the hardware, and expensive to collect. Furthermore, the learning process can be highly sensitive to the choice of hyperparameters and environmental conditions, leading to unpredictable and often unstable policies that fail to generalize beyond the specific training scenario. This combination of poor sample efficiency and instability significantly hinders the practical application of [latex]RL[/latex] for controlling the complex dynamics of humanoid robots in dynamic, real-world environments.

The Allure of Imitation: A Temporary Stay of Execution

Motion imitation represents a significant approach to reinforcement learning by leveraging expert demonstrations, termed “reference motion,” to establish a robust initial policy. This technique bypasses the need for random exploration at the start of training, substantially accelerating the learning process. By directly learning from successful trajectories, the agent quickly acquires a functional, albeit potentially inflexible, policy. The quality of the reference motion directly impacts the speed and ultimate performance of the learned policy; high-quality demonstrations provide a strong foundation for further refinement through reinforcement learning algorithms. This contrasts with traditional methods where the agent must discover effective strategies through trial and error, often requiring substantial computational resources and time.

Reference-Guided Reinforcement Learning (RL) methods address complex control tasks by reformulating them as imitation problems. Approaches like DeepMimic leverage demonstrations – provided as reference trajectories – to guide the learning process. Instead of directly maximizing a reward function, the policy is trained to minimize the difference between its actions and those observed in the reference motion. This is typically achieved through regression or behavioral cloning techniques, where the policy learns to map states to actions exhibited by the expert demonstrator. The benefit lies in providing a strong initial policy, accelerating learning and circumventing the exploration challenges inherent in traditional RL, though performance is inherently limited by the quality and diversity of the provided demonstrations.

Motion tracking, a core technique in learning from demonstration, involves training policies to replicate provided trajectories as closely as possible. This is typically achieved through minimizing the difference between the agent’s actions and those demonstrated in the reference data, often using metrics like mean squared error. While effective for quickly establishing a functional initial policy and ensuring accurate reproduction of known behaviors, strict adherence to demonstrated motion can hinder adaptability in novel situations or environments. The policy may struggle to generalize beyond the observed data, lacking the flexibility to recover from perturbations or optimize performance in scenarios not explicitly covered in the demonstration set.

Scaling the Echo: Beyond Simple Replication

Gradient Matching Techniques (GMT) and Scalable Online Neural Imitation with Curriculum (SONIC) address limitations in applying Reference-Guided Reinforcement Learning (RGRL) to extensive and heterogeneous motion capture datasets. Traditional RGRL methods struggle with the computational cost and instability inherent in large-scale data. GMT improves sample efficiency by matching gradients between the policy and expert demonstrations, reducing the variance in policy updates. SONIC further enhances scalability through a curriculum learning approach, starting with simpler motions and progressively increasing complexity. Both techniques demonstrably improve the robustness of learned policies across varied environments and motion types, and increase their ability to transfer to unseen scenarios by effectively leveraging the diversity present in larger datasets.

Adversarial Imitation Learning and Hybrid Imitation Learning methods address limitations of traditional imitation learning by moving beyond pixel-perfect trajectory replication. Adversarial approaches introduce a discriminator network that distinguishes between the agent’s actions and those demonstrated in the expert data, training the agent to generate actions that are indistinguishable, thereby focusing on behavioral similarity rather than exact path reproduction. Hybrid methods combine imitation learning with reinforcement learning, using the expert data to initialize or guide the RL agent, which then further refines the policy through interaction with the environment. This combination allows the agent to leverage the benefits of both approaches – the efficiency of imitation and the adaptability of reinforcement learning – resulting in policies that generalize more effectively to unseen scenarios and exhibit improved robustness to variations in initial conditions or environmental factors.

Distillation, in the context of imitation learning, addresses limitations of directly deploying tracking-based controllers by transferring their learned behavior to a policy network independent of the original reference motion. This process typically involves training a student policy network to mimic the actions predicted by a pre-trained teacher controller, which does rely on reference trajectories. The teacher network effectively acts as a source of supervisory signals, allowing the student policy to learn a robust control strategy without requiring the same input dependencies. This decoupling improves deployment potential by enabling the learned policy to operate in previously unseen environments or with altered reference conditions, as it is no longer strictly bound to replicating specific trajectories but rather generalizing the underlying control principles.

![Our method achieves a high success rate for both walk-climb and walk-jump skills even with variations in initial conditions, as demonstrated by performance near the nominal configuration (orange) and under randomized initialization (purple) within a [latex]2.3m[/latex] box.](https://arxiv.org/html/2602.20375v1/figures/success_rate_jump.png)

Towards Versatile Agents: The Illusion of True Skill

Recent advances in robotics are increasingly focused on developing agents capable of performing diverse tasks, and a key enabler of this versatility is multi-task learning. This approach skillfully integrates reference-guided reinforcement learning with goal-conditioned reinforcement learning, allowing robots to acquire multiple skills concurrently rather than mastering them in isolation. By leveraging both guidance – learning from demonstrations or pre-defined references – and the ability to adapt based on specified goals, robots can generalize more effectively across a range of challenges. This simultaneous learning not only accelerates the acquisition of new skills but also fosters the development of underlying representations that facilitate transfer learning and improve overall performance in complex, dynamic environments. The result is an agent capable of adapting to novel situations and seamlessly transitioning between tasks, paving the way for more robust and adaptable robotic systems.

Initial learning in complex robotic systems often benefits from a technique called behavioral shaping, where demonstrations provide a crucial starting point for skill acquisition. Rather than beginning from random actions, the system learns by observing examples of desired behavior, effectively bootstrapping the learning process. This approach significantly accelerates progress and improves stability, particularly in environments with sparse rewards. However, relying solely on demonstrations limits the system’s ability to surpass the demonstrated performance or adapt to novel situations. Consequently, reinforcement learning is integrated to refine the initially learned policy, allowing the agent to explore beyond the demonstrated examples and discover more efficient or robust solutions. This synergy between demonstration-guided learning and reinforcement learning unlocks new capabilities and allows the robot to not only replicate existing skills but also to improve upon them and generalize to previously unseen scenarios.

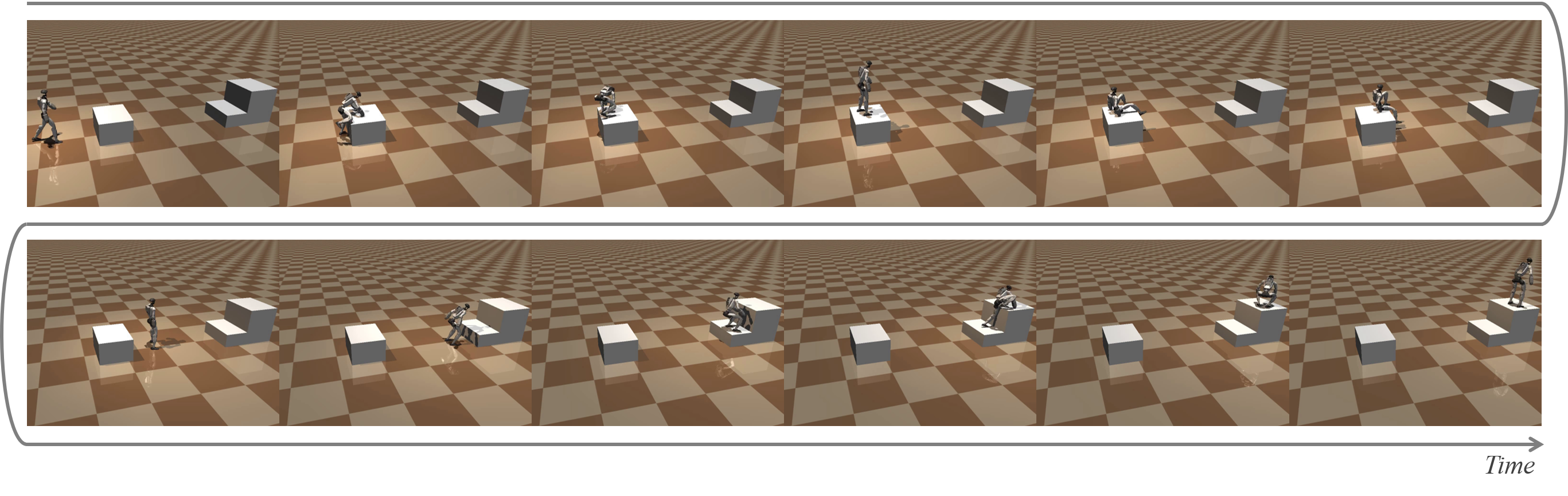

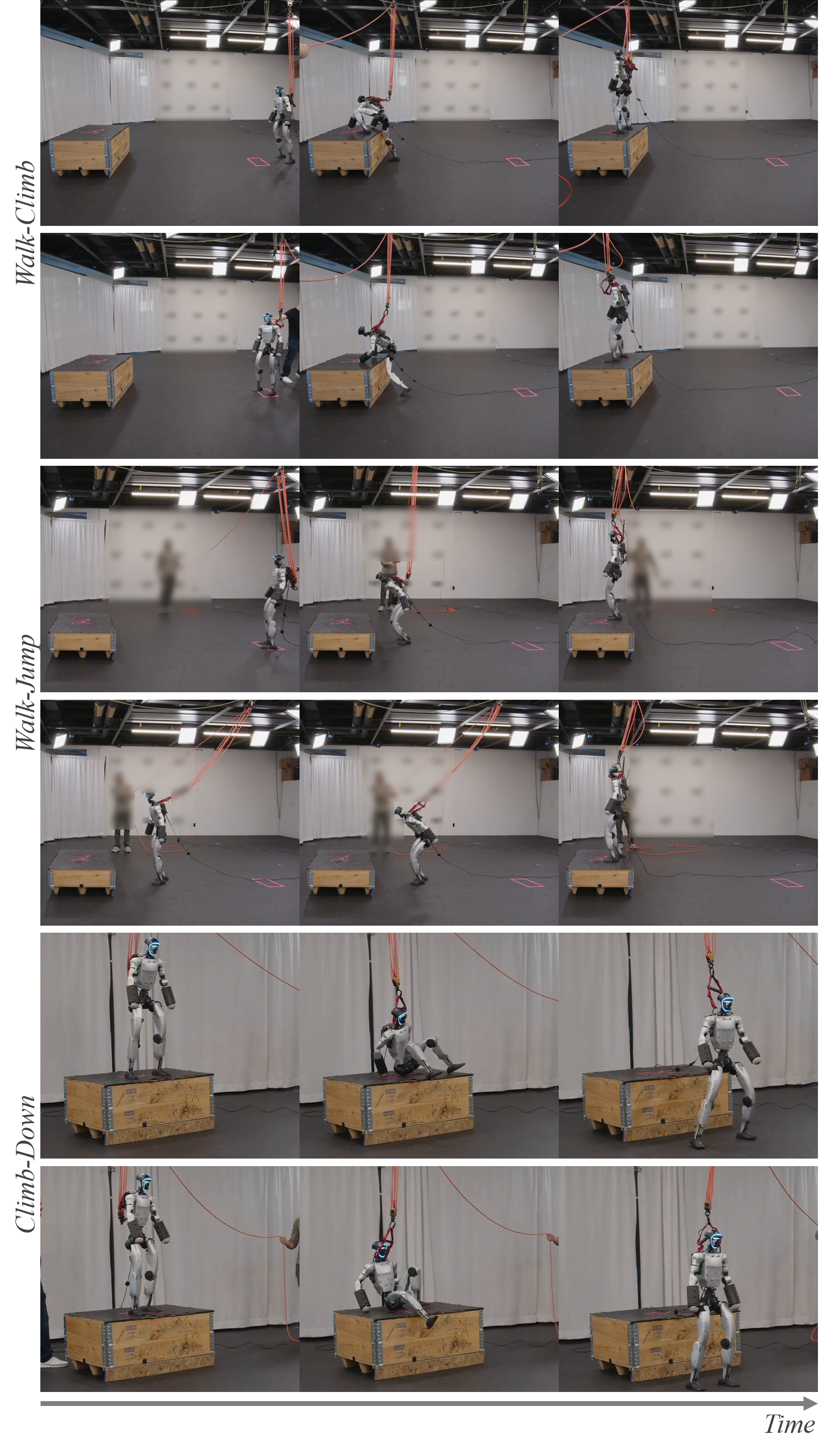

The challenge of transferring robotic skills learned in simulation to the complexities of the real world is being addressed by frameworks like ZEST, which strategically combines curriculum learning and domain randomization. This approach doesn’t simply train a robot on varied simulations; it carefully sequences the learning process with curricula, such as the Assistive Wrench Curriculum, that gradually increase task difficulty. By simultaneously exposing the robot to a wide range of randomized environments – altering factors like friction, lighting, and object textures – ZEST fosters robustness to real-world perturbations. Consequently, this framework has demonstrated significantly higher success rates in complex locomotion tasks – including walk-climb, walk-jump, and climb-down sequences – when compared to traditional methods, suggesting a pathway toward more adaptable and reliable robotic agents.

The Future of Embodied Intelligence: A Mirage of Autonomy

The advancement of embodied intelligence is no longer confined to controlled laboratory settings; instead, researchers are deliberately subjecting humanoid robots to increasingly complex and dynamic physical challenges, notably tasks inspired by the human discipline of Parkour. This deliberate push towards extreme locomotion – involving running, jumping, climbing, and navigating obstacles – serves as a critical benchmark for evaluating progress in areas like dynamic balance, real-time perception, and robust control algorithms. Successfully executing Parkour-like maneuvers demands a confluence of sophisticated capabilities, forcing robots to adapt to unpredictable terrain, maintain stability during high-impact landings, and plan trajectories on the fly. Consequently, performance on these demanding tasks provides a rigorous test of a robot’s ability to generalize learned behaviors to novel situations, effectively pushing the boundaries of what’s possible in autonomous physical systems.

Advancements in embodied intelligence are increasingly reliant on the synergistic effect of larger datasets, thoughtfully designed learning sequences, and techniques that enable robots to perform well in previously unseen situations. Recent frameworks demonstrate this potential, achieving notably low errors in both root orientation and joint positioning – indicators of stable balance and fluid, lifelike movement. These improvements aren’t merely incremental; they represent a significant leap towards more capable autonomous agents, exceeding the performance of existing systems and paving the way for robots that can navigate complex environments and adapt to unforeseen challenges with greater reliability and grace. This focus on robust generalization suggests a future where robots move beyond pre-programmed tasks and exhibit a true capacity for independent problem-solving.

The pursuit of embodied intelligence culminates in the development of truly autonomous agents – systems capable of navigating and responding to the complexities of real-world environments without explicit programming for every eventuality. These agents aren’t simply pre-programmed to execute tasks; they are engineered to learn and adapt, leveraging sensory input to understand their surroundings and modify behavior accordingly. This necessitates advancements beyond mere task completion; it demands robust generalization capabilities, allowing these systems to confront unforeseen circumstances and solve novel problems – essentially, exhibiting a form of cognitive flexibility previously exclusive to biological intelligence. Ultimately, the creation of such agents promises a future where robots and artificial intelligence become seamless, proactive collaborators, capable of addressing challenges across diverse fields, from disaster relief and healthcare to exploration and complex manufacturing.

The pursuit of robust motor skills in humanoid robotics, as demonstrated in this work, echoes a fundamental truth about complex systems. One might observe that attempting to dictate precise behavior is often less fruitful than cultivating an environment where adaptability can flourish. As Paul Erdős famously stated, “A mathematician knows how to solve any problem, but he doesn’t always know which problems are worth solving.” Similarly, this research doesn’t seek to solve the problem of robot locomotion with a single, rigid solution, but rather to create a framework where the robot can learn to solve a variety of problems, decoupling motion quality from the limitations of any specific demonstration. The multi-task learning approach acknowledges that a system isn’t a machine, it’s a garden-neglect the ability to generalize, and technical debt quickly accumulates in the form of brittle, demonstration-specific behaviors.

What Lies Ahead?

This work, in its attempt to disentangle demonstration quality from goal achievement, highlights a perennial truth: every solved problem merely reveals a more subtle one. The framework achieves a degree of generalization, yet the very notion of ‘generalization’ feels increasingly like a comforting illusion. Scalability is simply the word used to justify complexity, and each added task introduces new failure modes, unforeseen interactions. The system learns to mimic robustness, but true resilience-the ability to gracefully degrade in the face of the genuinely novel-remains elusive.

The decoupling of motion quality and goals is a promising direction, but it also begs the question of what constitutes ‘quality’ in the first place. Is it smoothness, efficiency, or simply the absence of catastrophic errors? Optimization, after all, is a temporary truce with entropy. Everything optimized will someday lose flexibility, becoming brittle in the face of changing conditions. The pursuit of perfect control-a flawless execution of a predefined plan-may be a fundamentally misguided endeavor.

The perfect architecture is a myth, a necessary fiction to maintain the appearance of progress. The next step isn’t to build a more comprehensive framework, but to cultivate systems that can adapt, evolve, and even learn to fail intelligently. Perhaps the future of robotics lies not in controlling movement, but in choreographing controlled instability, allowing systems to discover solutions beyond the bounds of explicit programming.

Original article: https://arxiv.org/pdf/2602.20375.pdf

Contact the author: https://www.linkedin.com/in/avetisyan/

See also:

- Last Furry: Survival redeem codes and how to use them (April 2026)

- Honor of Kings April 2026 Free Skins Event: How to Get Legend and Rare Skins for Free

- Gold Rate Forecast

- Clash of Clans: All the Ranked Mode changes coming this April 2026 explained

- COD Mobile Season 4 2026 – Eternal Prison brings Rebirth Island, Mythic DP27, and Godzilla x Kong collaboration

- Gear Defenders redeem codes and how to use them (April 2026)

- Silver Rate Forecast

- The Mummy 2026 Ending Explained: What Really Happened To Katie

- Brawl Stars April 2026 Brawl Talk: Three New Brawlers, Adidas Collab, Game Modes, Bling Rework, Skins, Buffies, and more

- Laura Henshaw issues blunt clap back after she is slammed for breastfeeding newborn son on camera

2026-02-26 02:59