Teaching Robots to Feel: Smarter Manipulation Through Language and Touch

![The system integrates visual observation, language instruction, and force feedback to dynamically adjust impedance parameters [latex]\mathcal{K}, \mathcal{D}[/latex], enabling a variable impedance controller to execute adaptable and safe contact-rich manipulation.](https://arxiv.org/html/2601.15541v1/figs/overview.png)

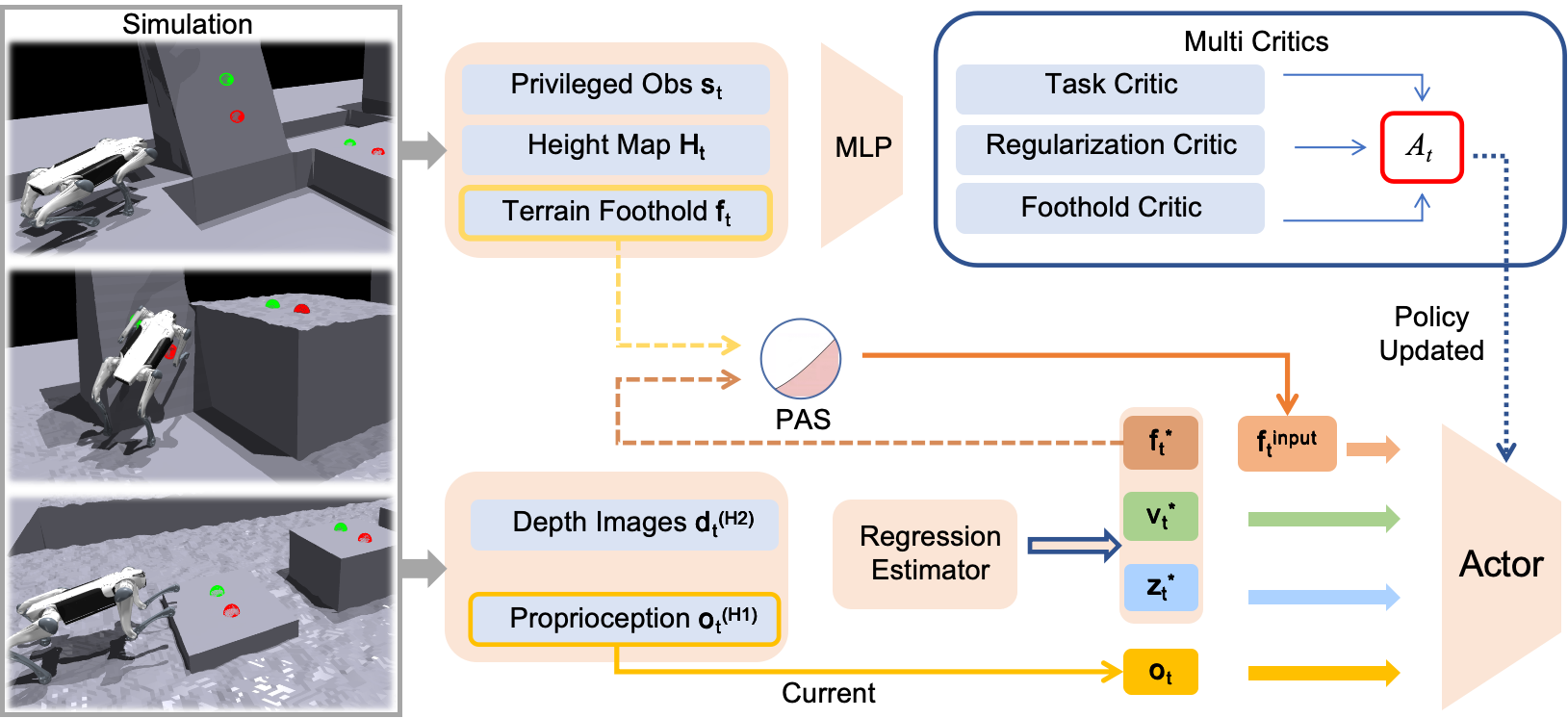

Researchers have developed a new approach that combines visual understanding, language guidance, and adaptable force control to enable robots to perform complex manipulation tasks with greater safety and precision.
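
To make the idea of a variable impedance controller concrete, here is a minimal sketch of the textbook impedance law, in which the commanded force is a spring-damper response to the tracking error. It is not the researchers' implementation: the class and function names, the diagonal gains, and the force-threshold rule in `soften_on_contact` are illustrative assumptions that stand in for the model that adjusts the impedance parameters from vision, language, and force feedback.

```python
import math
from dataclasses import dataclass
from typing import List, Sequence


@dataclass
class ImpedanceGains:
    """Diagonal stiffness K (N/m) and damping D (N*s/m), one value per axis."""
    stiffness: Sequence[float]
    damping: Sequence[float]


def impedance_force(gains: ImpedanceGains,
                    x_des: Sequence[float], x: Sequence[float],
                    v_des: Sequence[float], v: Sequence[float]) -> List[float]:
    """Per-axis impedance law: F = K * (x_des - x) + D * (v_des - v)."""
    return [
        k * (xd - xi) + d * (vd - vi)
        for k, d, xd, xi, vd, vi in zip(
            gains.stiffness, gains.damping, x_des, x, v_des, v)
    ]


def soften_on_contact(gains: ImpedanceGains,
                      measured_force: Sequence[float],
                      threshold: float = 10.0,
                      scale: float = 0.5) -> ImpedanceGains:
    """Hypothetical hand-written rule (an assumption, not the learned model):
    on any axis where the measured contact force magnitude exceeds
    `threshold`, multiply K by `scale` and D by sqrt(scale), which keeps
    the damping ratio D / (2 * sqrt(K * m)) unchanged for a fixed mass m."""
    new_k, new_d = [], []
    for k, d, f in zip(gains.stiffness, gains.damping, measured_force):
        if abs(f) > threshold:
            new_k.append(k * scale)
            new_d.append(d * math.sqrt(scale))
        else:
            new_k.append(k)
            new_d.append(d)
    return ImpedanceGains(new_k, new_d)


if __name__ == "__main__":
    gains = ImpedanceGains(stiffness=[400.0, 400.0, 400.0],
                           damping=[40.0, 40.0, 40.0])
    # 1 cm position error along x, robot at rest.
    force = impedance_force(gains,
                            x_des=[0.50, 0.0, 0.2], x=[0.49, 0.0, 0.2],
                            v_des=[0.0, 0.0, 0.0], v=[0.0, 0.0, 0.0])
    print("commanded force:", force)
    # A 15 N contact force along z makes that axis more compliant.
    print(soften_on_contact(gains, measured_force=[0.0, 0.0, 15.0], scale=0.25))
```

Lower stiffness makes the arm yield on contact, while higher stiffness tracks the target more tightly; a variable impedance controller changes that trade-off during the task rather than fixing it in advance.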
