Beyond Words: How Protein Models Differ From Natural Language

New research reveals key distinctions in how transformer-based models process proteins versus human language, impacting model efficiency and performance.

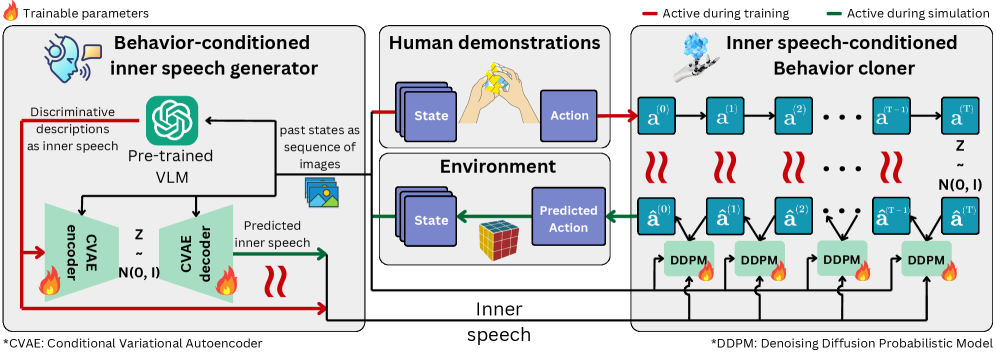

Researchers are exploring how modeling the internal monologue humans use to guide actions can dramatically improve the realism, diversity, and controllability of artificial intelligence agents.

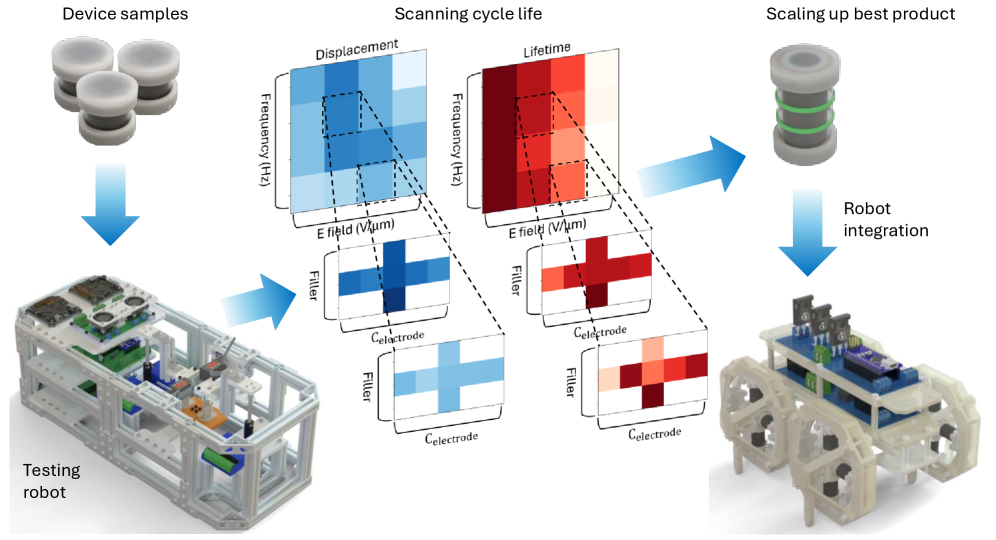

A new robotic platform dramatically accelerates the development of resilient soft actuators, paving the way for more robust and adaptable robotic systems.

A new approach to software design prioritizes formal verification and runtime safety for the next generation of AI-powered applications.

Artists are increasingly using machine learning not just as a tool for creation, but as a medium for critical inquiry into its societal implications.

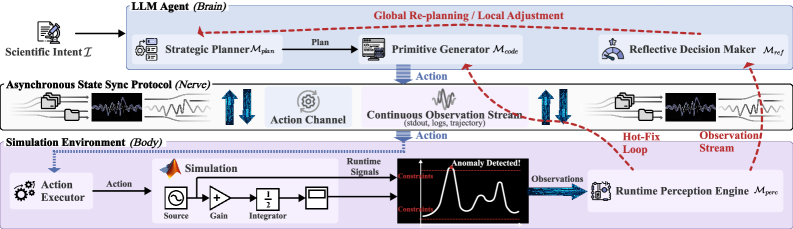

Researchers are developing AI systems that don’t just analyze data, but actively perform experiments within simulated environments to accelerate scientific breakthroughs.

![The study demonstrates that simulation-to-real transfer performance, while initially promising, inevitably degrades as increasingly complex robotic tasks are attempted, highlighting the persistent challenges of bridging the reality gap despite advancements in [latex]Sim2Real[/latex] methodologies.](https://arxiv.org/html/2602.21119v1/x2.png)

New research demonstrates a robotic platform where teams of agents learn to work together and against each other in real-world scenarios.

![The study characterizes transformer dynamics through a physical testbed (a multi-oscillator system predicting Lorenz chaotic time series) and quantifies modality preference using dynamical SHAP values, [latex]\phi(Y) - \phi(X)[/latex], represented as directional arrows ranging from -90° to 90° to indicate the relative contribution of each input modality. Analysis of prediction accuracy, visualized through embedding spaces at low ([latex]\beta_{self}, \beta_{cross} = (10^{-4}, 10^{-4})[/latex]) and high ([latex]\beta_{self}, \beta_{cross} = (10^{0}, 10^{0})[/latex]) attention levels, specifically for time-series data between t=50 and t=70, reveals how attention mechanisms influence the model’s reliance on different input modalities and the resulting prediction error.](https://arxiv.org/html/2602.20624v1/figures/fig6_0111.jpg)

New research reveals that distortions in the internal dynamics of artificial intelligence systems can lead to disproportionate reliance on certain types of data, causing AI to exhibit predictable biases when processing information from multiple sources.

A new analysis reveals that the automation of delivery services isn’t about replacing human workers, but rather shifting and obscuring the labor required to keep these robots running.

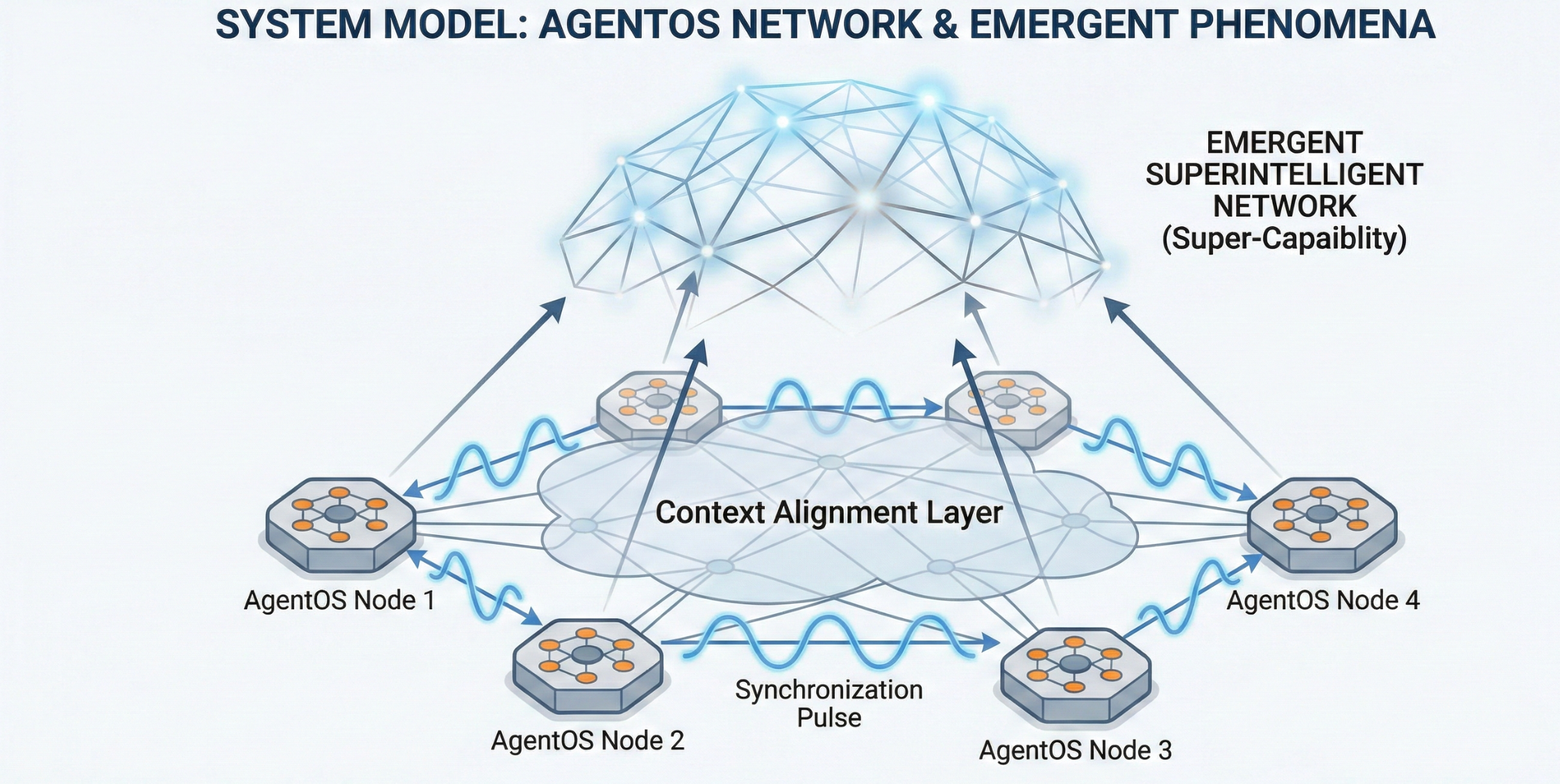

A new architectural approach aims to move beyond simple prompting of large language models, enabling complex, synchronized systems with emergent reasoning capabilities.