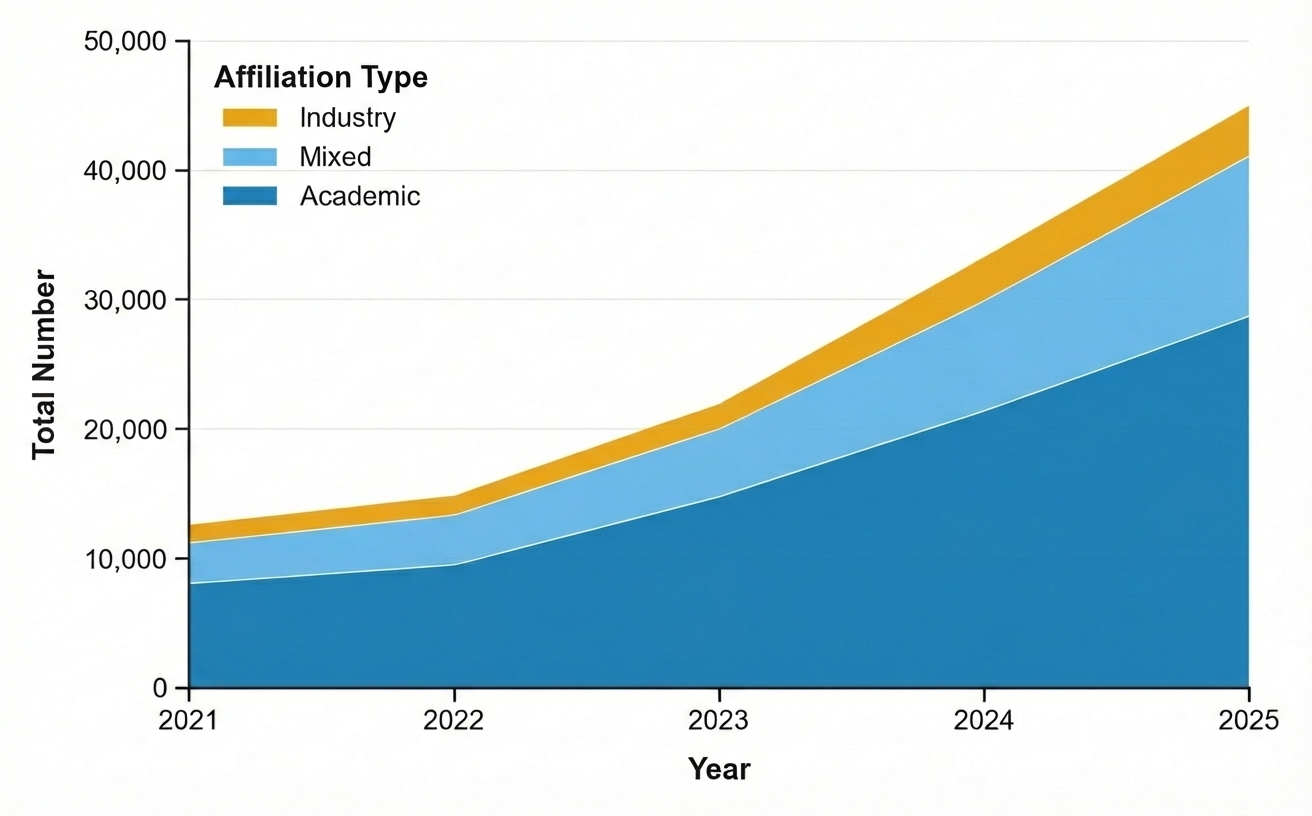

Robots That Truly Listen: Building Conversational AI for the Real World

![The system feeds streaming egocentric vision and audio into a real-time multimodal language model, which generates spoken dialogue and proactively issues low-latency function calls, such as directing gaze to specific people, objects, or areas. These calls dynamically update the perceptual context and drive active perception through external tools such as [latex]Look\_at\_Person[/latex], [latex]Look\_at\_Object[/latex], and [latex]Use\_Vision[/latex].](https://arxiv.org/html/2602.04157v1/figs/main_fig.png)
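To make the function-calling idea in the figure concrete, here is a minimal sketch of how tool calls emitted by a language model might be routed to gaze and vision handlers that update a shared perceptual context. Only the three tool names come from the figure. The `PerceptualContext` class, the handler signatures, and the `dispatch` function are hypothetical and are not taken from the paper's implementation.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class PerceptualContext:
    """Hypothetical record of what the robot is currently attending to."""
    gaze_target: str = "none"
    log: List[str] = field(default_factory=list)


# Hypothetical handlers: each takes the context and one string argument.
def look_at_person(ctx: PerceptualContext, name: str) -> str:
    ctx.gaze_target = f"person:{name}"
    return f"now looking at {name}"


def look_at_object(ctx: PerceptualContext, label: str) -> str:
    ctx.gaze_target = f"object:{label}"
    return f"now looking at {label}"


def use_vision(ctx: PerceptualContext, question: str) -> str:
    # A real system would query a vision model here; this only echoes the request.
    return f"vision query about {ctx.gaze_target}: {question}"


# Tool names as they appear in the figure caption.
TOOLS: Dict[str, Callable[[PerceptualContext, str], str]] = {
    "Look_at_Person": look_at_person,
    "Look_at_Object": look_at_object,
    "Use_Vision": use_vision,
}


def dispatch(ctx: PerceptualContext, tool_name: str, argument: str) -> str:
    """Route one model-issued function call and record its result."""
    handler = TOOLS.get(tool_name)
    if handler is None:
        result = f"unknown tool: {tool_name}"
    else:
        result = handler(ctx, argument)
    ctx.log.append(result)
    return result


if __name__ == "__main__":
    ctx = PerceptualContext()
    print(dispatch(ctx, "Look_at_Person", "Alice"))
    print(dispatch(ctx, "Use_Vision", "what is she holding?"))
```

In this sketch the gaze call changes the context first, so the later vision query is answered relative to the new gaze target. That ordering is one simple way to read "dynamically update the perceptual context", not a claim about how the described system does it.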

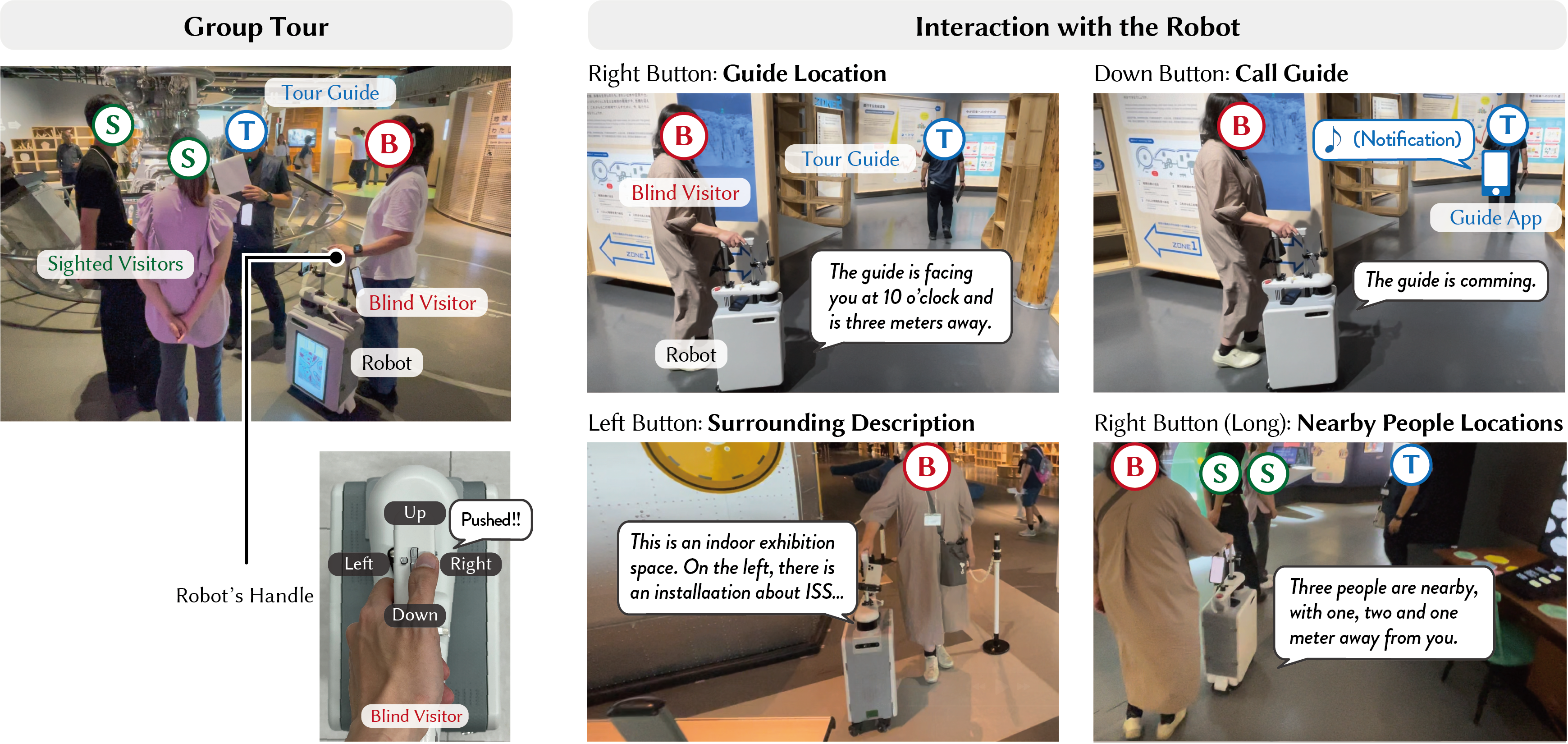

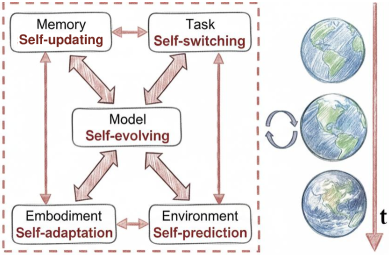

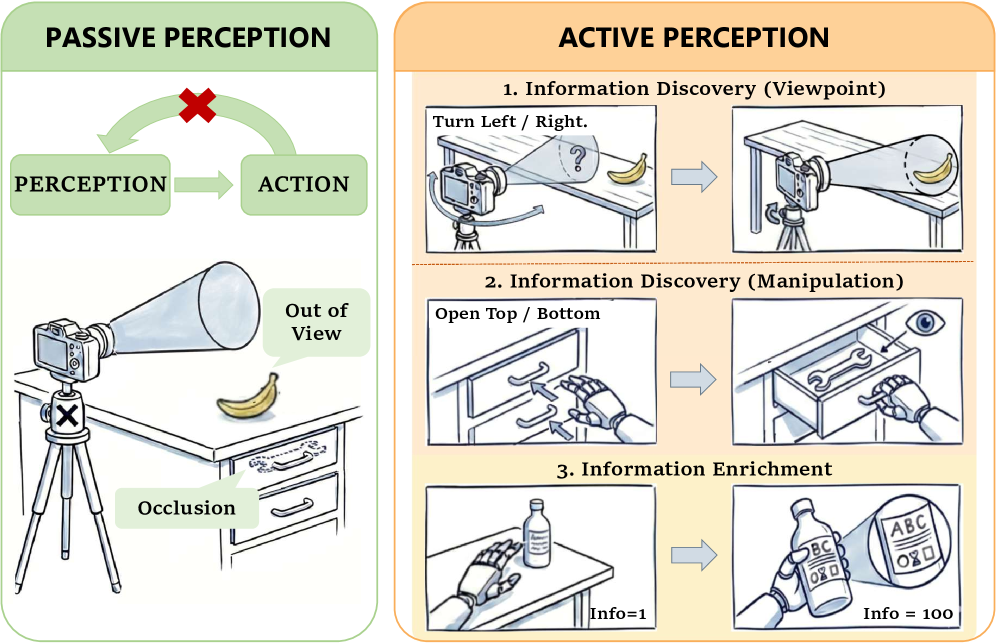

Researchers are developing new systems that enable robots to engage in more natural and grounded conversations by combining real-time sensory input with advanced language processing.

![Rapid contextual adaptation within the OLMo-2-13B system, demonstrated on a 16-unit linear topology, suggests a swift collapse of initial states into emergent, contextually defined representations, indicative of a system prioritizing immediate relevance over sustained historical fidelity.](https://arxiv.org/html/2602.04212v1/x11.png)

![The system establishes a closed-loop interaction: human vocal input is processed by integrated software and a large language model to generate dynamic gestural responses from a robotic platform. These gestures influence subsequent human behavior, completing a cycle of affective feedback driven by [latex] \text{voice} \rightarrow \text{gesture} \rightarrow \text{response} [/latex].](https://arxiv.org/html/2602.04787v1/Figures/Affective_Expression_Loop.jpg)