Author: Denis Avetisyan

New research explores how combining the power of artificial intelligence with established learning principles can create more engaging and effective educational experiences.

This review details the design and evaluation of hybrid dialogue agents embedding large language models to facilitate self-regulated learning through responsive and theory-driven reflective interactions.

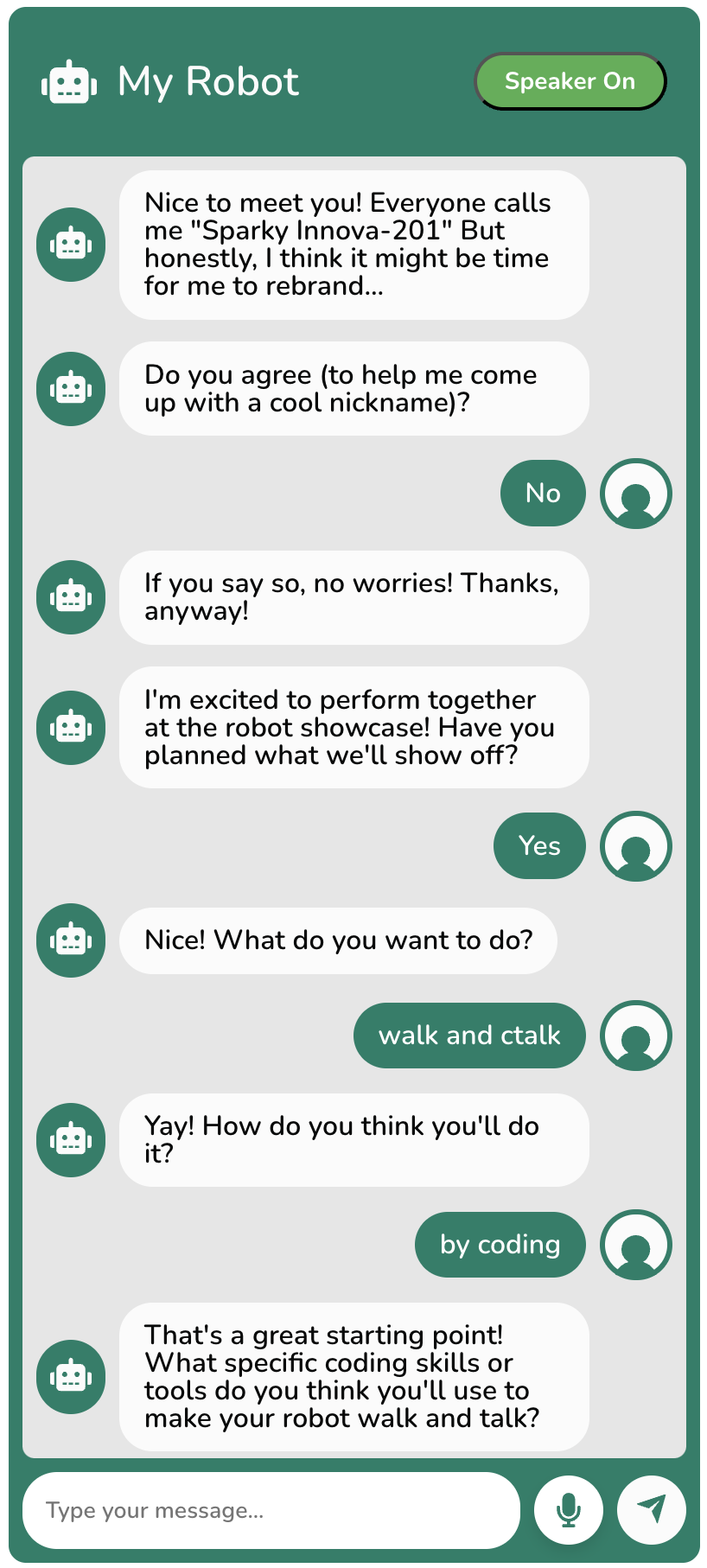

While theoretically grounded dialogue systems offer structured learning support, they often lack the adaptability to maintain engagement, a challenge Large Language Models (LLMs) address with responsiveness but without inherent pedagogical frameworks. This paper, ‘Hybrid LLM-Embedded Dialogue Agents for Learner Reflection: Designing Responsive and Theory-Driven Interactions’, explores a novel hybrid approach, embedding LLMs within a rule-based system to facilitate richer learner reflections during open-ended activities in a culturally responsive robotics camp. Analysis of dialogue themes revealed that this system fostered deeper engagement with goals and activities, though challenges with repetitiveness and prompt misalignment emerged. How can we best leverage the strengths of both rule-based and LLM approaches to create truly effective and engaging learning dialogues?

The Challenge of Adaptive Learning: A Systemic Imperative

Conventional educational frameworks, designed for large-scale instruction, frequently struggle to cater to the unique needs of each learner. The result is often a standardized approach that fails to acknowledge individual learning styles, paces, and prior knowledge. Students may then disengage because the material proves either too challenging or insufficiently stimulating, which ultimately produces widely uneven academic outcomes. The inherent limitations of a ‘one-size-fits-all’ model underscore the need for more flexible and adaptive learning environments capable of providing personalized support and fostering intrinsic motivation.

Successful learning isn’t simply about receiving information; it fundamentally requires learners to actively monitor their own comprehension and adjust their strategies accordingly – a process known as self-regulation. However, many students haven’t been explicitly taught how to effectively assess their understanding, leaving them unaware of gaps in their knowledge or ineffective study habits. This metacognitive deficit – the inability to ‘think about thinking’ – often manifests as overconfidence in incomplete understanding or, conversely, a lack of confidence despite actual mastery. Consequently, individuals may persist with unproductive learning approaches, hindering their ability to acquire and retain new information, and ultimately limiting their academic potential. Research suggests that explicitly teaching self-regulatory skills – such as prompting learners to explain concepts in their own words, predict their performance, or identify areas of difficulty – can significantly improve learning outcomes and foster independent, lifelong learning.

Many contemporary dialogue systems, while capable of delivering information and assessing factual recall, struggle to foster genuine metacognition in learners. These systems typically operate on pre-programmed decision trees or keyword recognition, limiting their ability to respond dynamically to a student’s unique thought process or identify subtle cues indicating conceptual misunderstanding. Consequently, interactions often remain superficial, failing to prompt the deeper self-assessment and reflective thinking crucial for robust learning; instead of supporting a learner’s attempt to articulate how they know something, the system prioritizes verifying what they know. This reliance on pre-defined paths inhibits the elicitation of a student’s reasoning, hindering the development of self-regulated learning skills and ultimately limiting the system’s potential as an adaptive educational tool.

Designing for Reflection: An LLM-Embedded System Architecture

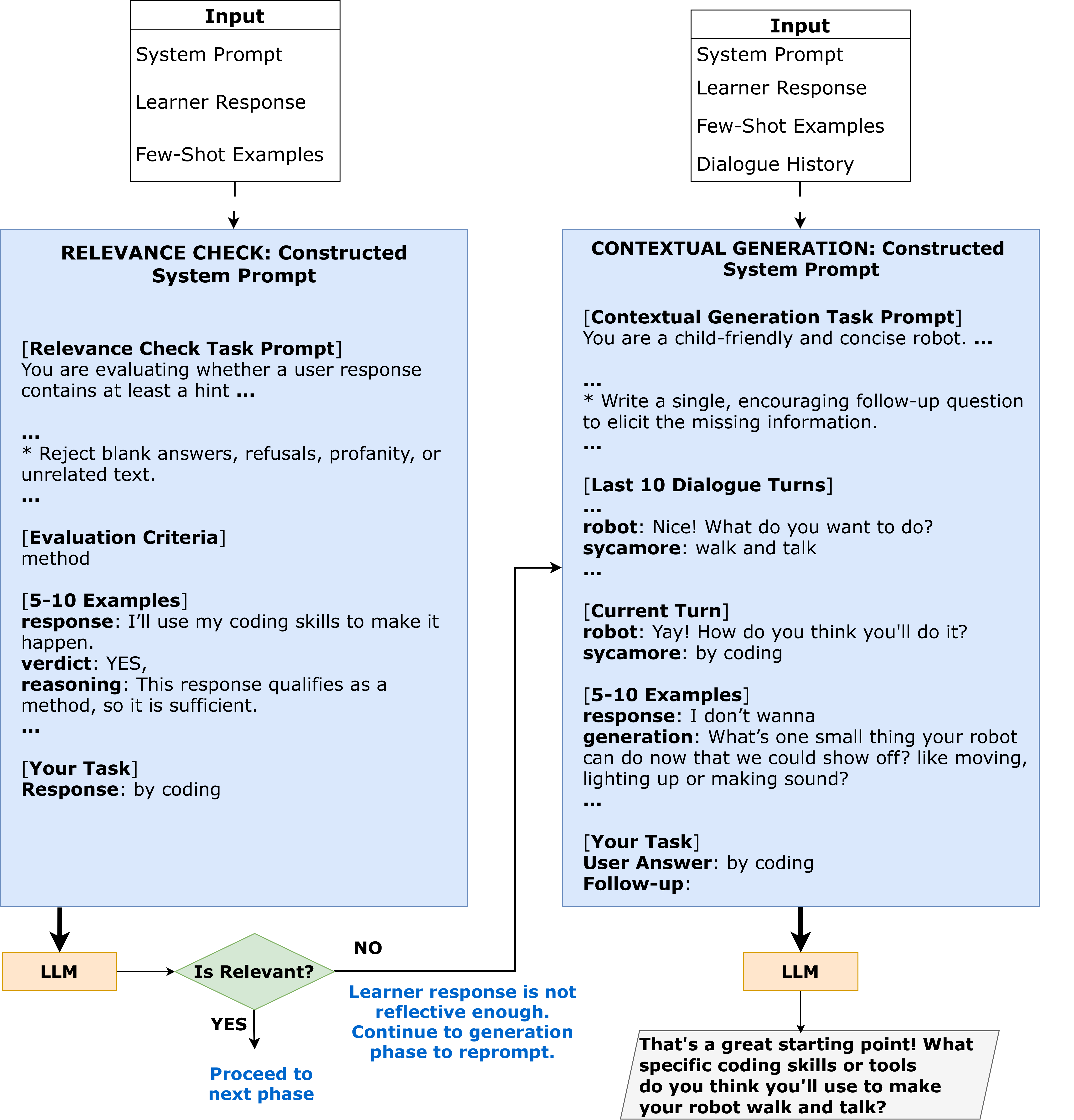

The system utilizes a dialogue architecture integrating a large language model (LLM) within a rule-based framework. This hybrid approach combines the strengths of both methodologies; the rule-based system defines the overall conversational flow and ensures adherence to predefined learning objectives, while the LLM provides natural language generation and understanding capabilities. Specifically, the rule-based component manages state transitions and prompts, and the LLM dynamically generates responses and interprets user input within those constraints. This allows for both structured guidance and flexible, open-ended interaction, facilitating a more nuanced and adaptable learning experience than either system could provide independently.

The dialogue system uses a rule-based state machine to manage the conversational flow, structuring interactions to facilitate focused reflection. The machine transitions between predefined states based on learner input, ensuring that prompts and responses align with established pedagogical principles, specifically those supporting metacognitive development. Each state represents a specific stage in the reflective process (such as goal setting, planning, or evaluation) and dictates the subsequent system action. This structured approach contrasts with purely generative LLM responses: it provides a predictable, theoretically grounded framework for guiding learners through self-assessment and improvement, and it enables consistent application of learning theories within the dialogue.
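To make the idea of a rule-based state machine wrapped around a generative model concrete, here is a minimal sketch. It is not the paper's implementation: the `Stage` names follow the stages mentioned above, but the transition rule (`MIN_WORDS`), the `stub_llm` function, and the `ReflectionDialogue` class are all hypothetical, and a real system would replace the stub with an actual LLM call.

```python
from enum import Enum
from typing import Callable


class Stage(Enum):
    GOAL_SETTING = "goal setting"
    PLANNING = "planning"
    EVALUATION = "evaluation"
    DONE = "done"


# Rule-based transitions: each stage has exactly one successor.
NEXT_STAGE = {
    Stage.GOAL_SETTING: Stage.PLANNING,
    Stage.PLANNING: Stage.EVALUATION,
    Stage.EVALUATION: Stage.DONE,
}

# Hypothetical rule: an answer needs at least this many words to complete a stage.
MIN_WORDS = 3


def stub_llm(stage: Stage, learner_text: str) -> str:
    """Placeholder for a real LLM call; returns a canned follow-up question."""
    return f"[{stage.value}] Tell me more about: {learner_text!r}"


class ReflectionDialogue:
    def __init__(self, generate: Callable[[Stage, str], str] = stub_llm):
        self.stage = Stage.GOAL_SETTING
        self.generate = generate

    def step(self, learner_text: str) -> str:
        """Apply the transition rule to one learner turn and return the reply."""
        closing = "Thanks for reflecting today."
        if self.stage is Stage.DONE:
            return closing
        # The rules decide whether to advance; the generator only words the reply.
        if len(learner_text.split()) >= MIN_WORDS:
            self.stage = NEXT_STAGE[self.stage]
        if self.stage is Stage.DONE:
            return closing
        return self.generate(self.stage, learner_text)
```

The design point is the split of responsibilities: the dictionary of transitions fixes which stage comes next, while the pluggable `generate` callable is free to phrase the follow-up however it likes within that stage.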

Reflection prompting within the LLM-embedded system utilizes specifically designed prompts to elicit detailed learner articulation of their objectives, planned approaches, and underlying rationale. These prompts are not limited to simple confirmation or multiple-choice responses; instead, they encourage extended, narrative explanations, thereby fostering open-ended reflection. The system analyzes these learner-generated statements to identify key elements such as stated goals, identified strategies, and expressed reasoning, allowing for subsequent feedback and guidance. This approach contrasts with systems relying on pre-defined response categories and prioritizes the capture of nuanced, individualized thought processes.

The system architecture incorporates principles of Culturally Responsive Computing to address inclusivity by dynamically adapting to learner backgrounds and contexts. This is achieved through several mechanisms, including the use of diverse datasets for LLM training to mitigate bias, the implementation of localized prompting strategies sensitive to cultural norms, and the allowance for user-defined cultural parameters which influence the system’s responses. Furthermore, the system avoids the imposition of singular cultural perspectives and prioritizes learner agency in defining their reflective process, allowing for varied communication styles and knowledge frameworks. This adaptability extends to linguistic variations, enabling support for multiple languages and dialects to facilitate broader access and engagement.

Dynamic Dialogue: Contextual Generation and Validation Processes

Contextual Generation within the system dynamically creates subsequent questions and prompts based on individual learner responses. This process moves beyond pre-scripted dialogue trees by using the learner’s immediate input to inform the next interaction. Using this method, the system generated a total of 2424 reflective prompts across all learners. This adaptive approach is intended to keep the learning experience personalized and responsive to the trajectory of each learner’s understanding.

The system’s prompt generation is directly influenced by the accumulated Dialogue History: each subsequent question or prompt is formulated based on the learner’s previous responses within the current session. This maintains conversational coherence by establishing contextual relationships between interactions. Across the 357 dialogue turns recorded from nine participants, the system referenced prior exchanges to personalize the learning experience and to limit repetitive or irrelevant questioning. The average session length of 39.66 turns, with a standard deviation of 6.46, indicates that the system can sustain meaningful, contextually driven dialogues.
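One way to picture history-conditioned prompting is a small container that keeps recent turns and folds them into the text sent to the model. The sketch below is an assumption-laden illustration, not the study's code: `Turn`, `DialogueHistory`, the `max_turns` window, and the prompt wording are all invented for this example.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Turn:
    speaker: str  # "agent" or "learner"
    text: str


@dataclass
class DialogueHistory:
    turns: List[Turn] = field(default_factory=list)
    max_turns: int = 6  # hypothetical window of recent turns passed to the model

    def add(self, speaker: str, text: str) -> None:
        self.turns.append(Turn(speaker, text))

    def asked_before(self, question: str) -> bool:
        """True if the agent already asked this exact question (case-insensitive)."""
        norm = question.strip().lower()
        return any(
            t.speaker == "agent" and t.text.strip().lower() == norm
            for t in self.turns
        )

    def build_prompt(self, instruction: str) -> str:
        """Combine an instruction with the most recent turns of the session."""
        recent = self.turns[-self.max_turns:]
        lines = [f"{t.speaker}: {t.text}" for t in recent]
        return (
            instruction
            + "\n\nConversation so far:\n"
            + "\n".join(lines)
            + "\n\nNext question:"
        )
```

A caller could use `asked_before` to discard a generated question that repeats an earlier one, which is one simple guard against the repetitiveness noted in the study.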

The system incorporates a Relevance Check, executed by the Large Language Model (LLM), to assess the validity of learner responses within the dialogue. Across the 357 dialogue turns recorded from nine participants, the LLM performed 888 relevance checks. The check is not simply a binary validation: the LLM analyzes each response for coherence with the preceding dialogue and the learning objectives, then adjusts the generated prompts to steer the conversation towards a more comprehensive understanding of the subject matter.
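A relevance check of this kind can be sketched as a judge call followed by a branch on the verdict. The example below simplifies the verdict to YES or NO and then adjusts the next prompt accordingly; the template text, `stub_judge` (a keyword-overlap stand-in for the LLM), and the function names are hypothetical and only illustrate the control flow.

```python
from typing import Callable

RELEVANCE_TEMPLATE = (
    "Question: {question}\n"
    "Learner answer: {answer}\n"
    "Does the answer address the question? Reply YES or NO."
)


def keywords(text: str) -> set:
    """Lowercased words longer than three letters, punctuation stripped."""
    cleaned = (w.strip(".,?!").lower() for w in text.split())
    return {w for w in cleaned if len(w) > 3}


def stub_judge(prompt: str) -> str:
    """Stand-in for an LLM: says YES when question and answer share a keyword."""
    lines = prompt.splitlines()
    question = lines[0].split(": ", 1)[1]
    answer = lines[1].split(": ", 1)[1]
    return "YES" if keywords(question) & keywords(answer) else "NO"


def check_relevance(
    question: str, answer: str, judge: Callable[[str], str] = stub_judge
) -> bool:
    verdict = judge(RELEVANCE_TEMPLATE.format(question=question, answer=answer))
    return verdict.strip().upper().startswith("YES")


def next_prompt(
    question: str,
    answer: str,
    follow_up: str,
    judge: Callable[[str], str] = stub_judge,
) -> str:
    """Move on when the answer is relevant; otherwise steer back to the question."""
    if check_relevance(question, answer, judge):
        return follow_up
    return f"Let's come back to this: {question}"
```

Passing the judge in as a callable keeps the rule-based branch testable without a model; swapping `stub_judge` for a real LLM call would not change `next_prompt`.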

The system’s prompt generation is refined through Few-Shot Learning and Retrieval Augmented Generation (RAG). Few-Shot Learning enables the model to adapt quickly to new tasks with limited examples, while RAG augments generation by retrieving relevant information from external knowledge sources. Across all learners, this combined approach produced the 2424 prompts designed to encourage reflective thinking and deeper engagement with the learning material.
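The combination of few-shot examples and retrieval can be illustrated with a toy prompt builder. Everything here is assumed for the sake of the example: the `FEW_SHOT` pairs, the `KNOWLEDGE` snippets, and the word-overlap `retrieve` function (a stand-in for whatever retrieval method the actual system uses) are not taken from the paper.

```python
from typing import List

# Hypothetical few-shot pairs: (learner statement, reflective follow-up).
FEW_SHOT = [
    ("I want the robot to move.", "What would a successful move look like to you?"),
    ("It did not work.", "What did you expect to happen, and what happened instead?"),
]

# Hypothetical knowledge snippets that retrieval can draw on.
KNOWLEDGE = [
    "Goal setting: learners state what they want to achieve before starting.",
    "Planning: learners describe the steps and strategies they intend to use.",
    "Evaluation: learners compare the outcome with their original goal.",
]


def tokens(text: str) -> set:
    """Lowercased words with surrounding punctuation stripped."""
    return {w.strip(".,:;?!").lower() for w in text.split()}


def retrieve(query: str, docs: List[str], k: int = 1) -> List[str]:
    """Rank documents by word overlap with the query (toy stand-in for search)."""
    q = tokens(query)
    ranked = sorted(docs, key=lambda d: len(q & tokens(d)), reverse=True)
    return ranked[:k]


def build_prompt(learner_text: str) -> str:
    """Assemble retrieved background, few-shot examples, and the new learner turn."""
    context = "\n".join(retrieve(learner_text, KNOWLEDGE))
    shots = "\n".join(f"Learner: {a}\nAgent: {b}" for a, b in FEW_SHOT)
    return (
        f"Background:\n{context}\n\n"
        f"Examples:\n{shots}\n\n"
        f"Learner: {learner_text}\nAgent:"
    )
```

The prompt ends with an open `Agent:` line so that a model continues in the pattern set by the examples, with the retrieved background available as grounding.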

Data collected from nine participants yielded a total of 357 dialogue turns, indicating an average session length of 39.66 turns. The standard deviation of 6.46 suggests moderate variability in session length across participants. During these interactions, the Large Language Model (LLM) executed 888 relevance checks to validate learner responses and maintain dialogue coherence.

Implications for Personalized Learning at Scale: A Path Forward

The advent of large language model (LLM)-embedded systems marks a significant step toward truly scalable personalized learning experiences. Unlike traditional methods often limited by instructor time and resources, this system delivers individualized support to a potentially limitless number of learners. By dynamically adjusting to each student’s needs and progress, the system offers customized prompts and feedback, fostering a learning environment tailored to individual strengths and weaknesses. This approach moves beyond a one-size-fits-all model, providing accessible, adaptive education that can address diverse learning styles and paces. The system’s architecture is designed not merely to deliver content, but to cultivate a continuous cycle of learning and refinement, ultimately empowering each student to achieve their full potential, regardless of background or circumstance.

The system cultivates self-regulated learning by prompting learners to actively monitor their comprehension and strategically adjust their learning approaches. Rather than passively receiving information, individuals are encouraged to reflect on their thought processes, identify knowledge gaps, and seek out targeted resources – a process that bolsters metacognitive abilities and critical thinking. This emphasis on agency allows learners to define their own learning goals, evaluate their progress, and refine their strategies, fostering a sense of ownership over their educational journey. By internalizing these skills, individuals are not only better equipped to master current material but also to become independent, lifelong learners capable of navigating complex challenges and adapting to new information throughout their lives.

The design of this learning system prioritizes equitable access to effective educational resources through inherent adaptability and cultural responsiveness. Recognizing that learners arrive with diverse backgrounds, experiences, and linguistic nuances, the system dynamically adjusts its approach to accommodate individual needs. This isn’t simply translation; the system is engineered to understand and respond to cultural contexts, avoiding biases and promoting inclusivity in its interactions. By tailoring prompts and feedback to resonate with a learner’s specific cultural framework, the system fosters a sense of belonging and encourages deeper engagement, effectively mitigating systemic barriers that often hinder educational attainment for marginalized groups. This focus ensures that all learners, regardless of their origin or background, can benefit from personalized support and achieve their full potential.

Analysis of learner interactions reveals a substantial impact of reflective prompting on response elaboration. The system demonstrably encourages more detailed and thoughtful engagement with learning materials; specifically, responses to prompts designed to elicit reflection were, on average, 1.75 times longer than those offered without such prompting. This statistically significant increase suggests the embedded LLM effectively stimulates deeper cognitive processing and encourages learners to articulate more comprehensive understandings. The finding underscores the potential of AI-driven systems not simply to deliver information, but to cultivate a more active and self-aware learning process, moving beyond rote memorization towards genuine comprehension and critical thinking.

Continued development centers on broadening the system’s functionalities beyond individualized prompting, with planned integrations encompassing diverse learning management systems and educational platforms. This expansion aims to create a seamlessly connected learning experience, allowing the LLM-embedded support to adapt to various curricular requirements and pedagogical approaches. Researchers are also investigating the potential for the system to facilitate collaborative learning environments, connecting learners with similar interests and providing tailored support for group projects. Ultimately, the goal is to move beyond a standalone tool and establish this technology as a foundational element within comprehensive educational ecosystems, fostering a more dynamic and responsive learning landscape for all.

The pursuit of effective learner reflection, as detailed in this study, echoes a fundamental principle of systemic design: interconnectedness. The hybrid architecture, blending the flexibility of Large Language Models with the precision of rule-based systems, isn’t merely a technical solution, but a structural one. It acknowledges that meaningful change (in this case, fostering self-regulated learning) doesn’t arise from isolated interventions. As Blaise Pascal observed, “All of humanity’s problems stem from man’s inability to sit quietly in a room alone.” This seemingly disparate thought highlights the importance of internal dialogue – the very core of reflective practice – and the need for systems that support, rather than disrupt, this essential process. The study’s emphasis on theory-driven design ensures that the dialogue isn’t simply conversation, but a carefully constructed environment for cognitive growth, akin to thoughtfully planned urban infrastructure.

What Lies Ahead?

The pursuit of genuinely responsive educational systems inevitably bumps against the hard limits of current large language models. This work demonstrates a pragmatic compromise – a hybrid architecture attempting to graft theoretical grounding onto a fundamentally stochastic engine. It is a reasonable approach, if one accepts that all design involves choosing what to sacrifice. The elegance of a fully rule-based system, while predictable, proves brittle when confronted with the infinite variety of student responses. Conversely, unconstrained generation, while superficially flexible, often lacks the necessary fidelity to support meaningful self-regulation.

Future iterations will likely focus on refining the division of labor between these components. The current model treats the rule-based system as a corrective, nudging the LLM towards desirable behaviors. A more sophisticated approach might involve proactive constraints – shaping the LLM’s generation before it begins, rather than reacting to its outputs. This, however, requires a deeper understanding of how to encode complex pedagogical theories into a format digestible by these models – a task that reveals just how much we still take for granted in human teaching.

If the system looks clever, it’s probably fragile. The true test will not be achieving superficial engagement, but demonstrating sustained improvements in learner autonomy. Until that benchmark is met, the field risks mistaking performance for understanding, and mistaking novelty for genuine progress.

Original article: https://arxiv.org/pdf/2602.20486.pdf

Contact the author: https://www.linkedin.com/in/avetisyan/


2026-02-26 04:42