Author: Denis Avetisyan

Researchers have developed an AI framework capable of independently designing and executing experiments using scanning probe microscopy, paving the way for faster scientific discovery.

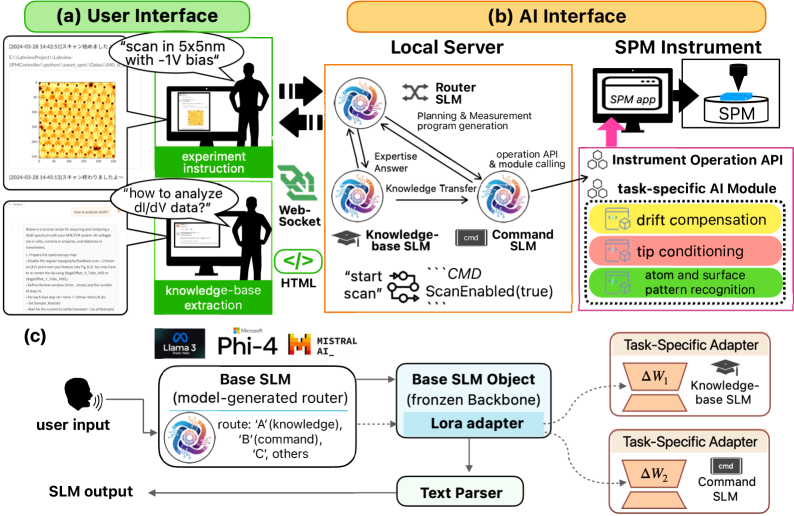

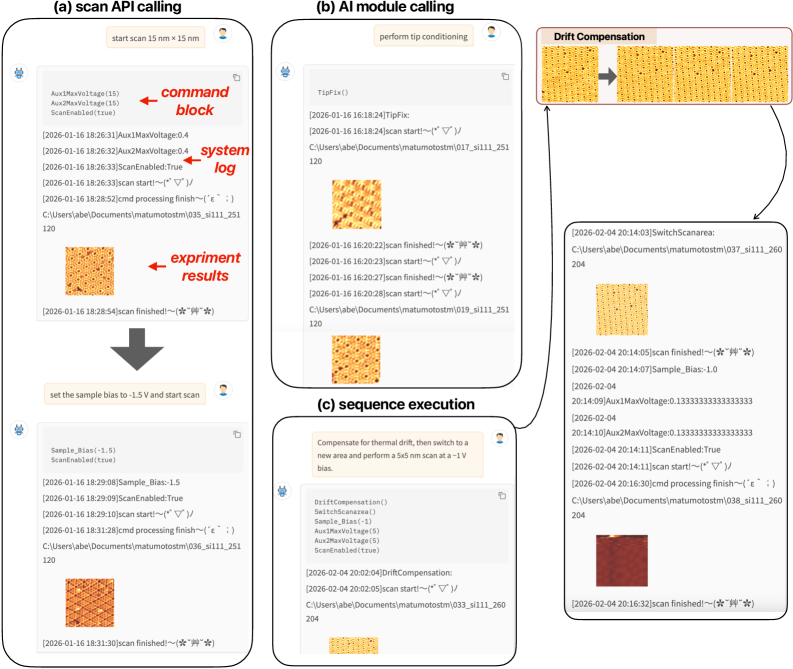

A customized language model and modular AI interface enable reliable, real-time autonomous control of complex laboratory equipment.

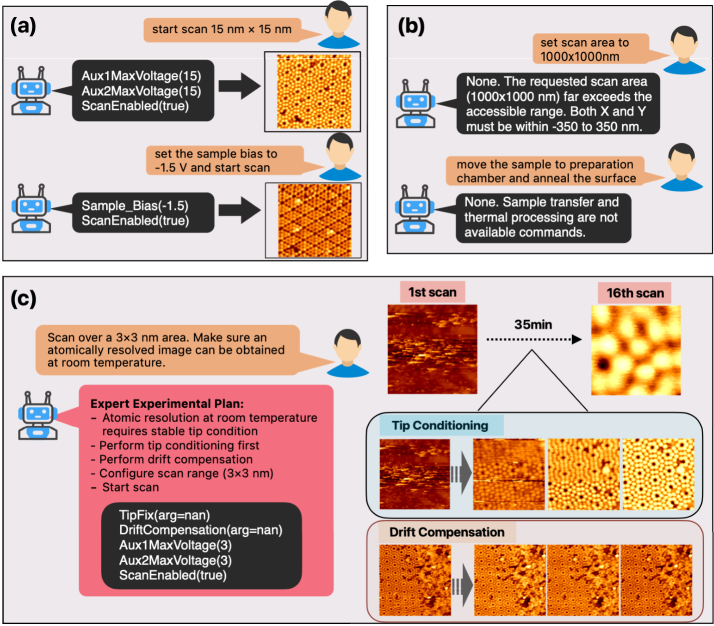

Achieving fully autonomous scientific experimentation remains challenging due to the need for both intelligent decision-making and reliable instrument control. This is addressed in ‘Autonomous Laboratory Agent via Customized Domain-Specific Language Model and Modular AI Interface’, which introduces a framework integrating customized small language models with a modular AI interface for real-time control of complex instrumentation. The system demonstrates robust, autonomous operation in scanning probe microscopy by separating intent interpretation from deterministic execution and validation, achieving high command accuracy even on consumer-grade hardware. Could this approach pave the way for scalable, self-driving laboratories capable of accelerating scientific discovery?

The Illusion of Control: Bridging the Gap to Physical Reality

The increasing sophistication of artificial intelligence demands more than just algorithmic prowess; it necessitates a reliable bridge between abstract commands and the nuanced actions of physical instruments. Modern AI applications, ranging from automated laboratories to robotic surgery, rely on translating high-level instructions – such as “analyze this sample” or “adjust the laser intensity” – into a precise sequence of control signals. This translation isn’t trivial, requiring systems capable of interpreting intent, managing potential ambiguities, and ensuring the safe and accurate operation of complex machinery. Without a robust intermediary, the inherent flexibility of AI can be undermined by imprecise execution or unintended consequences, hindering its practical deployment in real-world scenarios where precision and reliability are paramount.

Current automation architectures, while capable in controlled environments, frequently stumble when confronted with the unpredictable nuances of real-world application. A primary limitation lies in their rigidity; systems designed for specific tasks struggle to adapt to unforeseen circumstances or evolving experimental needs. This inflexibility is compounded by a lack of robust safety protocols, creating potential risks when AI-driven control interacts with sensitive equipment or complex processes. Existing systems often prioritize performance over precaution, leaving little margin for error when dealing with dynamic environments or ambiguous data. Consequently, the deployment of advanced AI in practical settings is often hampered by concerns surrounding reliability, security, and the potential for unintended consequences, necessitating a more adaptable and fail-safe approach to system design.

The AI Interface functions as a crucial intermediary, establishing a reliable connection between the abstract directives of artificial intelligence and the precise actions of physical systems. This interface doesn’t simply translate commands; it actively validates them, ensuring each instruction falls within pre-defined safety parameters and operational boundaries. By incorporating real-time monitoring and error-handling protocols, the AI Interface mitigates the risk of unintended consequences or system failures. It achieves this through a layered architecture that prioritizes both functional performance and operational integrity, effectively acting as a safeguard against potentially hazardous outcomes and bolstering confidence in complex AI-driven applications.

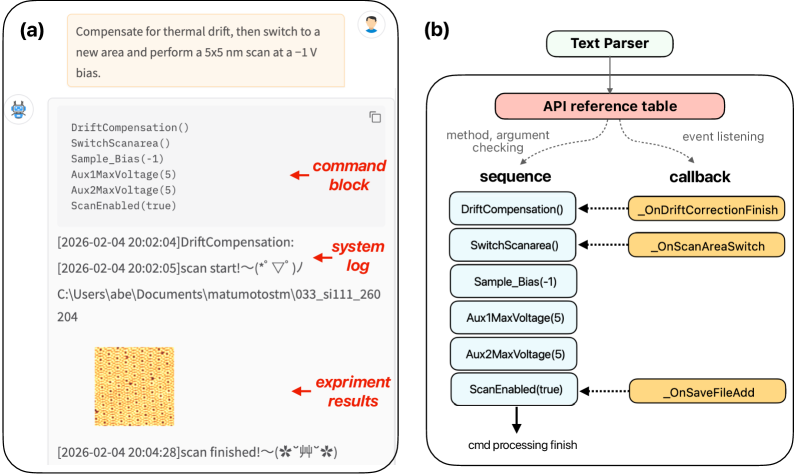

Decoding Intent: The Role of the Text Parser

The Text Parser component functions as the primary interface between the AI command generator and the system’s operational modules. It receives commands formulated in natural language and systematically deconstructs them into executable instructions. This process involves lexical analysis to identify keywords representing desired actions – such as ‘create’, ‘modify’, or ‘delete’ – followed by parameter extraction to define the scope and specifics of those actions. Parameters can include object identifiers, numerical values, or descriptive attributes, all of which are parsed and validated before being passed to the appropriate system functions. The parser employs a defined grammar and vocabulary to ensure accurate interpretation and to handle variations in command phrasing, enabling consistent and reliable command execution.
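The keyword-then-parameters flow described above can be sketched in a few lines. This is a minimal illustration, not the paper's parser: the action vocabulary reuses the article's example keywords, while the `key=value` parameter syntax, `ParsedCommand`, and `parse_command` are assumptions made for the example.

```python
import re
from dataclasses import dataclass, field

# Action keywords taken from the article's examples; the key=value
# parameter syntax is an assumption made for this sketch.
ACTIONS = {"create", "modify", "delete"}
PARAM_PATTERN = re.compile(r"(\w+)\s*=\s*([^\s,]+)")


@dataclass
class ParsedCommand:
    action: str
    params: dict = field(default_factory=dict)


def _coerce(value: str):
    """Turn numeric-looking strings into numbers; leave the rest as text."""
    try:
        return int(value)
    except ValueError:
        pass
    try:
        return float(value)
    except ValueError:
        return value


def parse_command(text: str) -> ParsedCommand:
    """Find the first known action keyword, then extract key=value parameters."""
    words = re.findall(r"[a-z]+", text.lower())
    action = next((w for w in words if w in ACTIONS), None)
    if action is None:
        raise ValueError(f"no known action in: {text!r}")
    params = {k.lower(): _coerce(v) for k, v in PARAM_PATTERN.findall(text)}
    return ParsedCommand(action, params)


command = parse_command("Please create scan with size=500, rate=1.5 mode=tapping")
```

Here `command.action` is `"create"` and `command.params` holds `size`, `rate`, and `mode` with numeric values converted. Text with no recognized action raises an error instead of being guessed at, which keeps interpretation separate from execution.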

Asynchronous coroutines enable concurrent execution by allowing the system to initiate multiple operations without waiting for each to complete sequentially. A coroutine can suspend its execution, yielding control back to the event loop while retaining its state. The event loop then runs other coroutines that are ready. When the resource the suspended coroutine was waiting on becomes available – such as data from an external source or the completion of a prior task – it resumes from where it left off. Because the system is not idle while an individual operation waits, this non-blocking approach raises overall throughput for operations that spend most of their time waiting, and it allows multiple incoming instructions to be handled concurrently.
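A small `asyncio` sketch shows the suspend-and-resume behavior. The instruction names and delays are hypothetical, and `asyncio.sleep` stands in for waiting on an instrument or other external source.

```python
import asyncio


async def run_instruction(name: str, delay: float) -> str:
    """Stand-in for an instrument call; asyncio.sleep models waiting on I/O."""
    await asyncio.sleep(delay)  # suspends here and yields to the event loop
    return f"{name} done"


async def run_all() -> list:
    # gather runs the coroutines concurrently and returns results in the
    # order the awaitables were passed, not in completion order.
    return await asyncio.gather(
        run_instruction("approach", 0.03),
        run_instruction("set_gain", 0.01),
        run_instruction("read_status", 0.02),
    )


results = asyncio.run(run_all())
```

The three waits overlap, so the total time is close to the longest delay and not the sum of all three. The gain comes from overlapping waits; CPU-bound work on a single event loop would not speed up this way.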

The Text Parser incorporates a rules-based system to validate all decoded commands against pre-defined operational boundaries. This validation process includes checks for permissible actions, acceptable parameter ranges, and resource availability. Commands failing these checks are flagged and rejected, preventing the execution of potentially harmful instructions such as unauthorized system modifications or data access. Specifically, the parser verifies input against a whitelist of approved functions and their associated parameters, and enforces limits on resource allocation to avoid denial-of-service scenarios or system instability. This safeguard is critical for maintaining system integrity and security when processing external or AI-generated input.
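A whitelist check of this kind might look like the following sketch. The function names and allowed parameters are invented for illustration and do not come from the paper.

```python
# Hypothetical whitelist: function names and allowed parameter names are
# illustrative only and do not come from the paper.
WHITELIST = {
    "start_scan": {"size_nm", "rate_hz"},
    "set_setpoint": {"value"},
    "retract_tip": set(),
}


def check_whitelist(function: str, params: dict) -> list:
    """Return a list of rejection reasons; an empty list means accepted."""
    if function not in WHITELIST:
        return [f"function not allowed: {function}"]
    unknown = sorted(set(params) - WHITELIST[function])
    return [f"parameter not allowed: {name}" for name in unknown]
```

Anything not explicitly listed is rejected, so a command the language model invents cannot reach the instrument just because nobody thought to forbid it.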

The Final Guardian: Constraint-Aware Validation

Constraint-Aware Validation functions as a critical safeguard by performing a final assessment of all commands prior to their execution by the instrument control systems. This validation step operates as a preemptive measure, ensuring that no instruction which could potentially lead to unsafe operation or damage reaches the hardware. By intercepting and rejecting commands that deviate from pre-defined operational parameters and safety protocols, the system minimizes the risk of erroneous actions and contributes to overall system reliability. This final check is distinct from, and operates independently of, the initial command generation processes.

Constraint-Aware Validation operates by comparing each generated command against a comprehensive set of pre-defined operational limits and safety protocols before execution. These limits encompass parameters such as maximum velocity, acceleration, joint angles, and temperature thresholds, as well as interlocks designed to prevent collisions or damage to the instrument. Any command that exceeds these boundaries, or would result in a violation of a safety protocol (for example, exceeding a motor’s torque capacity or entering a restricted workspace), is immediately rejected and flagged, preventing potentially hazardous actions from being transmitted to the instrument control systems. This proactive rejection mechanism ensures that only valid and safe commands are executed, contributing to the overall system safety.
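The range comparison can be sketched as a deterministic check that runs after command generation. The parameter names and numeric limits below are placeholders; real values would come from the instrument's specifications and the lab's safety protocol.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Limit:
    low: float
    high: float


# Hypothetical limits, chosen only to make the example concrete.
LIMITS = {
    "velocity_um_s": Limit(0.0, 100.0),
    "temperature_c": Limit(15.0, 40.0),
}


def validate(params: dict) -> list:
    """Return violations; an empty list means the command may be sent."""
    violations = []
    for name, value in params.items():
        limit = LIMITS.get(name)
        if limit is None:
            violations.append(f"{name}: no limit defined, rejected by default")
        elif not (limit.low <= value <= limit.high):
            violations.append(f"{name}={value} outside [{limit.low}, {limit.high}]")
    return violations
```

Writing the range test as `not (low <= value <= high)` also rejects NaN, since every comparison with NaN is false. Parameters with no defined limit are refused rather than passed through.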

Reported test results quantify how often validation had to intervene. In Stage I testing, the system achieved a command generation accuracy of 99.3%, meaning 0.7% of generated commands were flagged and rejected as invalid or unsafe. Stage II testing yielded a command generation accuracy of 95.2%, a 4.8% rejection rate. In both stages, the rejected commands were stopped before they reached instrument control systems, which is the safety role this layer is designed to play.

The Mirage of Efficiency: Dynamic Adapter Injection

Dynamic adapter injection represents a significant advancement in neural network efficiency by enabling the selective activation of task-specific modules only as required. Rather than loading an entire network for each new task, this method strategically injects small, specialized adapters into the core model, focusing computational resources solely on the parameters relevant to the current operation. This on-demand activation process dramatically reduces the overall computational burden, as irrelevant parameters remain dormant, minimizing both memory consumption and processing time. The technique allows a single base model to effectively handle a diverse range of tasks without the prohibitive costs typically associated with large, monolithic networks, paving the way for more adaptable and resource-conscious artificial intelligence systems.

On-demand activation means the system does not hold every task module in memory at once; it engages only those needed for the current operation. Unused modules stay dormant, which lowers both memory consumption and computational overhead. The benefit is most visible in resource-constrained environments, where the freed resources can go toward larger inputs or more complex operations without sacrificing speed or responsiveness.
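To make the idea concrete, here is a minimal sketch of one shared base layer with switchable task adapters. The article does not specify the adapter form; the low-rank (LoRA-style) update `B(Ax)` used here is an assumption, and `AdaptedLayer`, the task name, and all weights are hypothetical.

```python
def matvec(matrix, vector):
    """Plain matrix-vector product on nested lists."""
    return [sum(m * v for m, v in zip(row, vector)) for row in matrix]


class AdaptedLayer:
    """A frozen base weight matrix plus optional low-rank task adapters."""

    def __init__(self, base_weight):
        self.base_weight = base_weight  # shared by every task, never modified
        self.adapters = {}              # task name -> (A, B)
        self.active = None              # None means base model only

    def register(self, task, A, B):
        self.adapters[task] = (A, B)

    def activate(self, task):
        if task is not None and task not in self.adapters:
            raise KeyError(task)
        self.active = task

    def forward(self, x):
        y = matvec(self.base_weight, x)
        if self.active is not None:
            A, B = self.adapters[self.active]
            delta = matvec(B, matvec(A, x))  # low-rank correction B(Ax)
            y = [yi + di for yi, di in zip(y, delta)]
        return y


layer = AdaptedLayer([[1.0, 0.0], [0.0, 1.0]])
layer.register("scan_control", A=[[1.0, 1.0]], B=[[0.5], [0.0]])

base_out = layer.forward([1.0, 2.0])      # adapter inactive
layer.activate("scan_control")
adapted_out = layer.forward([1.0, 2.0])   # adapter contributes B(Ax)
```

Only the small `A` and `B` matrices differ between tasks, so switching tasks changes which pair is applied and leaves the base weights untouched.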

A significant barrier to deploying complex artificial intelligence models lies in their substantial demand for computational resources, particularly GPU memory. Recent advancements address this challenge through 4-bit quantization, a technique that dramatically reduces the precision with which model parameters are stored. This compression achieves approximately a threefold reduction in GPU memory usage, effectively shrinking the digital footprint of these models. Consequently, sophisticated AI capabilities, previously confined to high-end server infrastructure, become viable on consumer-grade hardware, like standard gaming computers. This democratization of access empowers a broader range of researchers, developers, and users to engage with and build upon cutting-edge AI technologies, fostering innovation and expanding the potential applications of these powerful tools.
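The storage idea behind 4-bit quantization can be illustrated with a toy scheme: one shared scale, integer codes in [-7, 7], and two codes packed per byte. This is a simplified sketch, not the method used in the paper; practical schemes typically use per-block scales and more refined code tables, and this example does not reproduce the threefold memory figure reported above.

```python
def quantize_4bit(values):
    """Map floats to integers in [-7, 7] using one shared scale."""
    peak = max(abs(v) for v in values)
    scale = peak / 7 if peak else 1.0
    codes = [max(-7, min(7, round(v / scale))) for v in values]
    return codes, scale


def pack_nibbles(codes):
    """Store two 4-bit codes per byte (offset by 8 so each fits in 0..15)."""
    padded = codes + [0] * (len(codes) % 2)
    return bytes(
        ((a + 8) << 4) | (b + 8) for a, b in zip(padded[0::2], padded[1::2])
    )


def unpack_nibbles(packed, count):
    """Reverse pack_nibbles, dropping any padding code."""
    codes = []
    for byte in packed:
        codes.extend(((byte >> 4) - 8, (byte & 0x0F) - 8))
    return codes[:count]


def dequantize(codes, scale):
    return [c * scale for c in codes]


weights = [7.0, -3.0, 1.2, 0.0]
codes, scale = quantize_4bit(weights)
packed = pack_nibbles(codes)
restored = dequantize(unpack_nibbles(packed, len(weights)), scale)
```

Four weights fit in two bytes, and each restored value differs from the original by at most half the scale. That rounding error is the precision being traded for memory.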

![Domain-specific adaptation systematically improves the performance of 4-bit-quantized language models (including Phi-4, Mistral-v0.3, and Llama-3.2), as demonstrated by gains in classification accuracy, token generation speed, reduced GPU memory usage, and improved scores on perplexity, BERT F1, and GEval metrics.](https://arxiv.org/html/2602.20669v1/x4.png)

The pursuit of autonomous experimentation, as detailed in this work concerning language model agents and scanning probe microscopy, echoes a fundamental challenge in all scientific endeavor. Each iteration of the customized domain-specific language model, striving for reliable control, feels akin to chasing an elusive truth. As Nikola Tesla observed, “The true mysteries of the universe are revealed to those who dare to question.” This research, in its attempt to build an AI interface capable of self-driving laboratories, embodies that very spirit of inquiry – a continuous refinement of models, acknowledging that complete understanding, like a black hole’s singularity, may forever remain beyond reach, yet the attempt itself illuminates the path.

What Lies Beyond the Horizon?

This work, concerning the automation of experimentation via language models and modular interfaces, doesn’t resolve the central paradox. It merely shifts the boundary of what is considered ‘known’. The capacity to direct an instrument, even with precision, does not equate to understanding the phenomena observed. Discovery isn’t a moment of glory, it’s realizing how little is truly grasped. The system functions, certainly, but the underlying reality remains stubbornly opaque, and every refinement of control reveals new layers of complexity, not necessarily comprehension.

The reliance on small language models, while pragmatic, introduces a subtle but crucial limitation. These models, by necessity, operate within defined parameters, extrapolating from existing data. But the truly novel – the signal lost in the noise, the unexpected interaction – may lie entirely outside that learned domain. Everything called law can dissolve at the event horizon. The challenge, then, isn’t simply building more sophisticated algorithms, but designing systems that can gracefully acknowledge their own ignorance.

Future work will undoubtedly focus on increasing the autonomy and efficiency of these agents. Yet, a more profound direction lies in incorporating mechanisms for active uncertainty quantification and genuine hypothesis generation. The goal shouldn’t be to eliminate the need for human intuition, but to create a symbiotic relationship where the machine highlights the limits of its knowledge, inviting further investigation – a mirror reflecting the vastness of the unknown.

Original article: https://arxiv.org/pdf/2602.20669.pdf

Contact the author: https://www.linkedin.com/in/avetisyan/

2026-02-25 06:34