Author: Denis Avetisyan

A new framework intelligently schedules perception modules, focusing computational resources on the most relevant information to enhance efficiency and reduce latency in collaborative robotics.

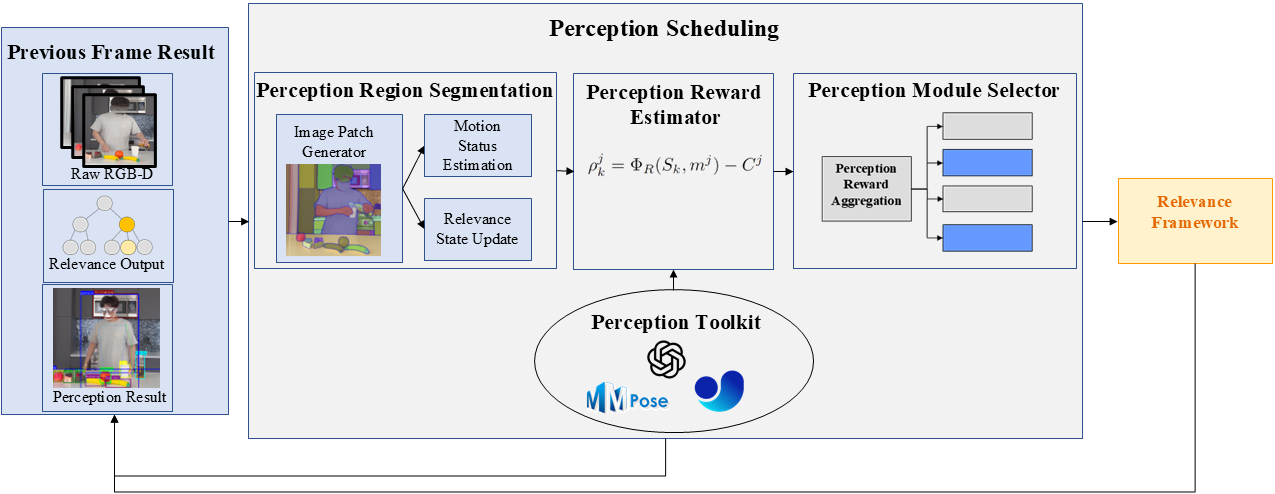

This work presents a relevance-driven perception scheduling system that dynamically activates modules based on information gain and computational cost for improved performance in multi-modal perception tasks.

Achieving comprehensive scene understanding in human-robot collaboration requires substantial computational resources, often leading to unacceptable latency in real-time applications. This paper, ‘Perceive What Matters: Relevance-Driven Scheduling for Multimodal Streaming Perception’, addresses this challenge by introducing a novel perception scheduling framework that dynamically prioritizes and activates perception modules based on estimated information gain and contextual relevance. Experimental results demonstrate that this approach reduces computational latency by up to 27.52% while simultaneously improving perception accuracy, suggesting a pathway toward more efficient and responsive collaborative robots. Could this relevance-driven scheduling serve as a scalable foundation for advanced multimodal perception systems in increasingly complex human-robot interactions?

The Computational Imperative of Selective Perception

Conventional perception systems often operate by exhaustively processing all incoming sensory data, a strategy that quickly becomes a computational bottleneck, particularly in complex and rapidly changing environments. This complete analysis, while seemingly thorough, demands significant processing power and time, hindering real-time responsiveness. Imagine a self-driving car attempting to simultaneously analyze every pixel from its cameras, every nuance of sound, and every signal from its lidar – the sheer volume of information overwhelms the system. This exhaustive approach contrasts sharply with human perception, which selectively focuses on relevant stimuli, prioritizing information crucial for immediate action. Consequently, these systems struggle to adapt to dynamic situations where timely reactions are essential, limiting their effectiveness in real-world applications requiring swift and intelligent responses.

The inherent slowness of processing all incoming sensory information creates a critical challenge for systems requiring real-time interaction. This computational burden introduces latency – the delay between an event occurring and a system’s response – which hinders effective engagement with dynamic environments. Consider applications like autonomous navigation or robotic surgery; even a delay of a fraction of a second can compromise safety and performance. The limitation is not merely raw processing speed, but whether the system can react within the timeframe relevant to the ongoing activity. Consequently, systems reliant on exhaustive data processing struggle to stay responsive, which limits their practical application in fast-paced scenarios.

The efficacy of any perceptual system hinges not on processing all available information, but on discerning what truly matters. Faced with a constant influx of sensory data, an organism – or an artificial intelligence – must efficiently prioritize stimuli relevant to its current task and surrounding context. This selective attention is crucial because exhaustive processing quickly becomes a computational bottleneck, introducing unacceptable delays in response time. Effective prioritization is commonly associated with two mechanisms: predictive coding, which anticipates likely events and weights incoming data accordingly, and attention, which dynamically filters out irrelevant signals. Ultimately, the ability to rapidly identify and focus on the most pertinent information determines the speed and accuracy with which an entity can interact with a dynamic environment, making contextual prioritization a foundational challenge in real-time perception.

A Scheduling Framework for Perceptual Efficiency

The Perception Scheduling Framework operates by dynamically activating only a subset of available perception modules at any given time. This selective activation is based on an ongoing assessment of computational resources and the potential information yield of each module; modules with a high computational cost relative to their expected information gain are temporarily deactivated. This approach contrasts with systems that run all modules continuously, and allows for significant reductions in overall processing load. The framework’s adaptability enables it to respond to changing environmental conditions and task demands by reallocating resources to the most pertinent data streams, thereby maximizing the utility of available computational power.

The Reward Function is central to the Perception Scheduling Framework, quantitatively assessing the value of activating each perception module. This function calculates a reward score for each module by subtracting its Computational Cost from its expected Information Gain. Information Gain is determined by the potential reduction in uncertainty about the environment, measured by changes in the system’s internal state representation. Computational Cost is defined as the processing time, energy consumption, and memory bandwidth required for module execution. The resulting reward score, therefore, represents a net benefit; modules with higher scores are prioritized, ensuring that computational resources are allocated to those providing the most significant informational return. The function is mathematically expressed as: [latex]R = I - C[/latex], where [latex]R[/latex] is the reward, [latex]I[/latex] is the Information Gain, and [latex]C[/latex] is the Computational Cost.
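To make the reward-based selection concrete, here is a minimal Python sketch. It is not the paper's implementation: the module names, the numeric values, the shared unit for gain and cost, and the greedy fill against a fixed compute budget are all assumptions made for illustration. Only the score R = I - C comes from the text.

```python
from dataclasses import dataclass


@dataclass
class ModuleEstimate:
    """Hypothetical per-module estimates; names and units are illustrative."""
    name: str
    info_gain: float  # I: expected information gain
    cost: float       # C: computational cost, assumed to share units with I


def reward(module: ModuleEstimate) -> float:
    """Net benefit of activating a module: R = I - C."""
    return module.info_gain - module.cost


def schedule(modules, budget):
    """Greedily activate modules in descending reward order.

    Assumption: a module runs only if its reward is positive and its cost
    still fits in the remaining budget. The source does not specify this
    selection rule.
    """
    active = []
    remaining = budget
    for module in sorted(modules, key=reward, reverse=True):
        if reward(module) <= 0:
            break  # every later module has an even lower reward
        if module.cost <= remaining:
            active.append(module.name)
            remaining -= module.cost
    return active


modules = [
    ModuleEstimate("pose_estimation", info_gain=0.9, cost=0.5),
    ModuleEstimate("scene_segmentation", info_gain=0.4, cost=0.6),
    ModuleEstimate("motion_status", info_gain=0.3, cost=0.1),
]
active = schedule(modules, budget=0.7)
print(active)
```

With these made-up numbers, segmentation costs more than it is expected to return, so it is skipped for this cycle while the other two modules run.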

Adaptive Sampling and Relevance Modeling enhance the Perception Scheduling Framework by dynamically adjusting data acquisition rates and weighting incoming data streams. Relevance Modeling employs a learned model, trained on historical data, to predict the informational value of each data source. This allows the system to prioritize streams exhibiting high predicted relevance and reduce sampling rates for those deemed less critical. Adaptive Sampling then implements this prioritization by allocating computational resources proportionally to the predicted relevance, effectively focusing processing power on the most informative data while minimizing redundant data acquisition and processing. The combination reduces overall computational load and improves the system’s responsiveness to changing environmental conditions and task requirements.
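The proportional allocation described above might look like the following sketch. The stream names, the relevance scores, the total rate, and the per-stream minimum rate are hypothetical; in the framework the relevance values would come from the learned model, which is not reproduced here.

```python
def allocate_rates(relevance, total_rate_hz, min_rate_hz=1.0):
    """Split a total sampling budget across streams by predicted relevance.

    Assumptions (not from the source): relevance scores are non-negative,
    and every stream keeps a small minimum rate so that it can be
    re-evaluated later instead of being starved entirely.
    """
    if not relevance:
        return {}
    floor_total = min_rate_hz * len(relevance)
    if floor_total > total_rate_hz:
        raise ValueError("total rate cannot cover the per-stream minimum")
    spare = total_rate_hz - floor_total
    total_relevance = sum(relevance.values())
    if total_relevance <= 0:
        # No stream is predicted to be relevant: share the spare rate equally.
        share = spare / len(relevance)
        return {name: min_rate_hz + share for name in relevance}
    return {
        name: min_rate_hz + spare * score / total_relevance
        for name, score in relevance.items()
    }


# Hypothetical relevance scores for three streams.
rates = allocate_rates({"rgb": 0.6, "depth": 0.3, "audio": 0.1}, total_rate_hz=30.0)
print(rates)
```

The minimum rate is a design choice rather than something the article states: without it, a stream judged irrelevant once would never be sampled again, so its relevance could not be revised.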

Foundational Elements: Scene Understanding and Motion Analysis

Scene segmentation is a fundamental computer vision process that divides an image into multiple segments, each representing a distinct object or region. This partitioning is typically achieved through analysis of pixel properties like color, texture, and intensity, utilizing algorithms such as clustering, edge detection, and region growing. The resulting segments provide a simplified representation of the scene, enabling higher-level analysis tasks like object recognition and tracking by reducing the computational complexity and focusing processing on meaningful areas. Effective scene segmentation is crucial for applications including autonomous navigation, robotics, and video surveillance, as it provides the necessary environmental understanding for informed decision-making.
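As a small illustration of one of the classical techniques named above, the sketch below applies region growing to a tiny grayscale grid. It is a toy example under stated assumptions (4-connectivity, a fixed intensity tolerance relative to each region's seed pixel) and is not the segmentation module used in the paper.

```python
from collections import deque


def region_grow(image, tolerance=10):
    """Label 4-connected regions whose pixels stay within `tolerance`
    of the region's seed intensity. Returns (labels, region_count)."""
    height = len(image)
    width = len(image[0]) if height else 0
    labels = [[-1] * width for _ in range(height)]
    next_label = 0
    for seed_y in range(height):
        for seed_x in range(width):
            if labels[seed_y][seed_x] != -1:
                continue  # pixel already belongs to a region
            seed_value = image[seed_y][seed_x]
            labels[seed_y][seed_x] = next_label
            queue = deque([(seed_y, seed_x)])
            while queue:
                y, x = queue.popleft()
                for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                    if (0 <= ny < height and 0 <= nx < width
                            and labels[ny][nx] == -1
                            and abs(image[ny][nx] - seed_value) <= tolerance):
                        labels[ny][nx] = next_label
                        queue.append((ny, nx))
            next_label += 1
    return labels, next_label


# Toy 3x3 grayscale image with three visually distinct areas.
image = [
    [10, 12, 200],
    [11, 13, 205],
    [90, 92, 203],
]
labels, count = region_grow(image)
print(labels, count)
```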

Motion Status Estimation determines which areas of a visual scene are changing over time, indicating the presence of dynamic elements. A common implementation utilizes Frame Differencing, a technique that calculates the difference between successive frames in a video sequence. Significant pixel value changes between frames are flagged as motion, allowing the system to isolate moving objects or actors. The magnitude of these changes can also provide information about the speed or intensity of the motion. While computationally efficient, Frame Differencing is sensitive to noise and illumination changes, often requiring pre- or post-processing steps, such as thresholding and filtering, to improve accuracy and reduce false positives.
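Frame Differencing with a threshold can be sketched in a few lines. The version below uses plain nested lists as grayscale frames to stay dependency-free; the threshold value and the `motion_ratio` helper are illustrative assumptions, and a real pipeline would typically use an array library and add filtering.

```python
def motion_mask(prev_frame, curr_frame, threshold=25):
    """Flag pixels whose absolute intensity change exceeds `threshold`."""
    same_shape = len(prev_frame) == len(curr_frame) and all(
        len(p_row) == len(c_row) for p_row, c_row in zip(prev_frame, curr_frame)
    )
    if not same_shape:
        raise ValueError("frames must have the same shape")
    return [
        [abs(c - p) > threshold for p, c in zip(p_row, c_row)]
        for p_row, c_row in zip(prev_frame, curr_frame)
    ]


def motion_ratio(mask):
    """Fraction of pixels flagged as moving (hypothetical summary statistic)."""
    total = sum(len(row) for row in mask)
    moving = sum(sum(row) for row in mask)
    return moving / total if total else 0.0


prev_frame = [[10, 10, 10], [10, 10, 10]]
curr_frame = [[10, 200, 10], [12, 10, 90]]
mask = motion_mask(prev_frame, curr_frame)
print(mask, motion_ratio(mask))
```

The small change from 10 to 12 falls below the threshold, which is how thresholding suppresses sensor noise and minor illumination flicker.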

Full-body human pose estimation utilizes computational algorithms to determine the 3D positions of key joints in the human body across consecutive frames of video. Accuracy is enhanced through the implementation of the Kalman Filter, a recursive algorithm that estimates the state of a dynamic system – in this case, human movement – by combining predictions with sensor measurements, effectively reducing noise and uncertainty. Furthermore, the incorporation of entropy measurements provides a means of quantifying uncertainty in pose estimations; higher entropy values indicate greater ambiguity and can trigger re-estimation or weighting of data to improve tracking robustness, particularly in scenarios involving occlusion or rapid movement. This combined approach enables precise tracking of human kinematics and facilitates the interpretation of intent based on observed motion patterns.
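The interplay between filtering and entropy can be illustrated with a scalar Kalman filter tracking a single joint coordinate. This is a simplified sketch, not the paper's estimator: the constant-position motion model, the noise values `q` and `r`, the measurements, and the use of Gaussian differential entropy as the uncertainty measure are all assumptions.

```python
import math


def kalman_step(x, p, z, q=1e-3, r=1e-2):
    """One predict/update cycle of a scalar Kalman filter.

    x, p: previous state estimate and its variance
    z:    new measurement
    q, r: process and measurement noise variances (assumed values)
    """
    # Predict: constant-position model, so only the uncertainty grows.
    p_pred = p + q
    # Update: blend prediction and measurement using the Kalman gain.
    gain = p_pred / (p_pred + r)
    x_new = x + gain * (z - x)
    p_new = (1.0 - gain) * p_pred
    return x_new, p_new


def gaussian_entropy(variance):
    """Differential entropy of a 1D Gaussian: 0.5 * ln(2 * pi * e * variance)."""
    return 0.5 * math.log(2.0 * math.pi * math.e * variance)


x, p = 0.0, 1.0  # vague initial belief about the joint coordinate
entropies = []
for z in [0.52, 0.48, 0.51, 0.50]:  # hypothetical noisy measurements
    x, p = kalman_step(x, p, z)
    entropies.append(gaussian_entropy(p))
print(x, entropies)
```

In this sketch the entropy falls as measurements accumulate. If a joint were occluded and updates skipped, the variance and therefore the entropy would grow again, which is the kind of signal that could trigger re-estimation.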

Real-World Validation: Collaborative Robotics

Effective human-robot collaboration hinges on responsive and informative interactions, and a newly developed framework prioritizes both to achieve a truly seamless partnership. By dramatically minimizing delays – known as latency – in processing and response times, the system allows humans and robots to work together with an unprecedented sense of synchronicity. This is accomplished not simply through speed, but also through a careful focus on maximizing the relevance of information shared between human and machine. The framework actively filters and prioritizes data, ensuring that each party receives only the essential details needed for immediate action, thereby reducing cognitive load and fostering a more natural, intuitive workflow. This targeted approach moves beyond simple task execution, enabling a dynamic interplay where human insight and robotic precision are combined for greater efficiency and adaptability.

Efficient human-robot collaboration hinges on intelligent resource allocation, and the framework’s gains here show up as improved activation recall. The approach aims to engage perception modules – the ‘eyes’ and ‘ears’ of the robot – only when relevant information is required, reducing computational load and power consumption. Rather than constantly processing sensory data, the system strategically activates modules based on contextual need, achieving up to a 72.73% improvement in activation recall in the human-guided walking task. This targeted activation not only streamlines operations but also allows for more responsive and intuitive interaction, paving the way for robots that adapt to dynamic environments and collaborate effectively with humans.

The system’s capacity for human-robot collaboration is bolstered through the integration of Vision-Language Models, enabling nuanced comprehension of complex directives and dynamic adaptation to evolving surroundings. This approach moves beyond simple task execution, allowing robots to interpret instructions phrased in natural language and react intelligently to unforeseen changes. Overall, the system reduces processing latency by up to 27.52% compared with a traditional parallel perception pipeline. It also reaches 98% keyframe accuracy, indicating reliable performance in complex and unpredictable scenarios and pointing toward more intuitive and effective human-robot partnerships.

The pursuit of computational efficiency, as demonstrated in this work on relevance-driven scheduling, aligns with a fundamental principle of robust system design. The paper addresses the challenge of minimizing latency in multimodal perception by prioritizing modules according to their estimated information gain. This echoes Andrew Ng’s sentiment: “The key to AI isn’t scaling up, it’s scaling down – reducing the data required to achieve the same result.” By dynamically activating perception modules based on relevance, the framework effectively ‘scales down’ computational demands, supporting timely responses in human-robot collaboration. The elegance lies in its simple formulation: a reward that weighs each module’s information gain against its computational cost.

Beyond the Horizon

The presented framework, while demonstrating a pragmatic approach to perception scheduling, ultimately highlights the enduring tension between approximation and truth. The reliance on reward modeling, however cleverly constructed, introduces a subjective element – a heuristic, if one prefers – into what should ideally be a deterministic process. The system operates on what matters, but defining ‘mattering’ necessitates an acceptance of inherent imprecision. Reproducibility, a cornerstone of rigorous analysis, remains subtly compromised by this reliance on learned valuations.

Future work must address the limitations of current information gain metrics. The assumption that increased information always equates to improved performance requires further scrutiny. A more nuanced understanding of the relationship between perceptual data, computational cost, and actual task completion is critical. Furthermore, the scalability of this approach to highly complex, dynamic environments – those exhibiting genuine unpredictability – remains an open question. The elegance of a provably optimal solution, even if computationally intractable, still holds a certain appeal.

Ultimately, the pursuit of efficient perception cannot come at the expense of foundational principles. The current trajectory suggests a move toward increasingly adaptive, yet inherently probabilistic, systems. A parallel investigation into formal verification techniques – methods capable of guaranteeing system behavior even in the face of uncertainty – may prove essential if truly reliable human-robot collaboration is to be achieved. The algorithm must not merely appear to function, but be demonstrably correct.

Original article: https://arxiv.org/pdf/2603.13176.pdf

Contact the author: https://www.linkedin.com/in/avetisyan/

2026-03-17 06:33