Author: Denis Avetisyan

A new framework empowers robots to anticipate human errors during teamwork and proactively adjust, leading to more seamless and effective collaboration.

This review details a hybrid approach combining Large Language Models and Relational Dynamic Influence Diagram Language to enable robust task planning and failure handling in human-robot interactions.

Despite advances in artificial intelligence, enabling robots to reliably collaborate with humans in complex environments requires robust handling of inevitable task failures. This paper introduces ‘Anticipate, Adapt, Act: A Hybrid Framework for Task Planning’, which addresses this challenge by integrating the predictive power of Large Language Models with the probabilistic reasoning of Relational Dynamic Influence Diagram Language. The resulting framework allows robots to proactively anticipate potential failures stemming from human limitations or environmental factors, and to execute preventative or recovery actions, demonstrably improving task performance in simulation. Will this hybrid approach pave the way for more seamless and effective human-robot teamwork in real-world applications?

Anticipating the Collaborative Imperative

For genuine human-robot teamwork to emerge, robots must move beyond simply reacting to instructions and instead learn to anticipate upcoming tasks and navigate inherent uncertainties. This proactive capability demands sophisticated systems capable of modeling human intentions, predicting potential disruptions, and adapting plans in real-time. Rather than requiring explicit direction for every step, these robots should infer goals from subtle cues – a glance, a partially completed action, or even the arrangement of tools – and prepare accordingly. Successfully anticipating needs and gracefully handling unexpected events isn’t merely about efficiency; it’s about building trust and fostering a collaborative environment where humans and robots can seamlessly achieve shared objectives, even in dynamic and unpredictable settings.

Conventional robotic planning relies heavily on precisely defined environments and predictable sequences of action, a stark contrast to the inherent messiness of human-robot collaboration. These methods, often built on algorithms designed for static scenarios, falter when confronted with the dynamic, ambiguous, and often illogical behavior typical of human partners. Real-world tasks introduce countless unforeseen variables – a misplaced tool, an unexpected interruption, or a spontaneous change in plan – that overwhelm traditional planning systems. The rigidity of these approaches necessitates constant human intervention to correct errors or redirect the robot, hindering true collaboration and limiting the potential for robots to proactively assist in complex, shared endeavors. Consequently, researchers are actively developing more adaptable planning frameworks capable of handling uncertainty and integrating seamlessly with the fluid nature of human action.

Achieving genuine joint task completion and seamless human-robot collaboration hinges on overcoming existing limitations in robotic adaptability. The capacity for a robot to not merely respond to human actions, but to proactively integrate with them, necessitates a fundamental shift in design principles. This isn’t simply about improved efficiency; it’s about establishing a truly synergistic partnership where tasks are completed more effectively – and with greater flexibility – than by either agent working alone. Such collaboration demands a robot capable of interpreting ambiguous cues, predicting potential needs, and dynamically adjusting its plans, ultimately fostering a work environment where human intuition and robotic precision converge for optimal outcomes. Without this level of integrated functionality, robots remain tools, rather than true collaborators, limiting the potential for complex problem-solving and shared accomplishment.

Constructing a Framework for Intelligent Collaboration

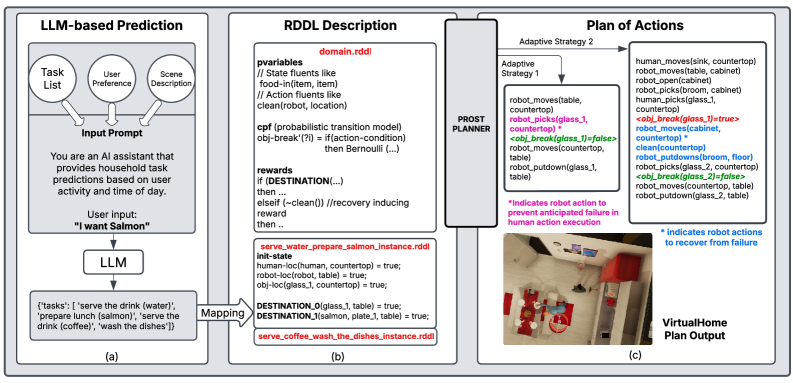

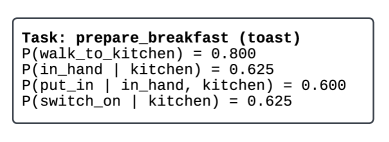

Task anticipation within the framework utilizes Large Language Models (LLMs) to forecast subsequent tasks based on the current operational context and established user preferences. The LLM is trained on datasets of task sequences, enabling it to probabilistically predict the next logical action given the current state and historical user behavior. This prediction is not deterministic; rather, the LLM outputs a ranked list of potential future tasks, along with associated confidence scores. The system then utilizes these predictions to proactively prepare resources and pre-compute potential action sequences, reducing latency and improving overall system responsiveness. The LLM’s parameters are continuously refined through reinforcement learning, based on the accuracy of its predictions and the efficiency of the resulting task execution.
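The ranked-list idea above can be sketched in a few lines. This is an illustrative sketch only, not the paper's implementation: the `TaskPrediction` type, the `select_tasks_to_prepare` helper, the task names, the confidence values, and both thresholds are assumptions made up for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TaskPrediction:
    """One anticipated task with the model's confidence in [0, 1] (hypothetical type)."""
    task: str
    confidence: float


def select_tasks_to_prepare(predictions, min_confidence=0.2, max_tasks=2):
    """Return the highest-confidence tasks worth preparing for.

    Both thresholds are assumed values, not taken from the paper.
    """
    ranked = sorted(predictions, key=lambda p: p.confidence, reverse=True)
    confident = [p.task for p in ranked if p.confidence >= min_confidence]
    return confident[:max_tasks]


# Hypothetical output of the anticipation step for a household scenario.
predictions = [
    TaskPrediction("set_table", 0.30),
    TaskPrediction("make_coffee", 0.55),
    TaskPrediction("wash_dishes", 0.10),
]

print(select_tasks_to_prepare(predictions))
```

Filtering by a confidence floor before truncating means a low-confidence guess is never prepared for just because the list happens to be short.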

The system employs Relational Dynamic Influence Diagram Language (RDDL) for probabilistic planning, enabling the modeling of uncertainty inherent in real-world task execution. RDDL facilitates the representation of state variables, actions, and their probabilistic effects on the environment. This representation is then utilized by planners such as the PROST Planner to compute optimal action sequences, considering the probabilities of different outcomes. The PROST Planner specifically leverages RDDL models to generate plans that maximize expected reward while accounting for the uncertainty defined within the RDDL formulation, allowing for robust task completion even with imperfect information or unpredictable events.
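To make "maximize expected reward" concrete, the following is a one-step sketch under stated assumptions. The action names, outcome probabilities, and reward values are invented for illustration, and the code is not how PROST searches: a real planner reasons over a multi-step horizon from an RDDL model, which this example does not attempt.

```python
def expected_reward(outcomes):
    """Expected reward of one action, given (probability, reward) pairs."""
    total_prob = sum(p for p, _ in outcomes)
    if abs(total_prob - 1.0) > 1e-9:
        raise ValueError("outcome probabilities must sum to 1")
    return sum(p * r for p, r in outcomes)


def best_action(model):
    """Pick the action whose expected reward is highest (one-step lookahead)."""
    return max(model, key=lambda action: expected_reward(model[action]))


# Hypothetical model: each action maps to its possible outcomes.
model = {
    # Fast carrying succeeds less often; a spill is penalized.
    "carry_cup_fast": [(0.70, 10.0), (0.30, -20.0)],
    # Slow carrying earns slightly less but rarely fails.
    "carry_cup_slow": [(0.95, 8.0), (0.05, -20.0)],
}

for action, outcomes in model.items():
    print(action, round(expected_reward(outcomes), 2))
print("chosen:", best_action(model))
```

Under these assumed numbers the slower action wins even though its best-case reward is lower, which is the kind of trade-off a probabilistic planner is meant to capture.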

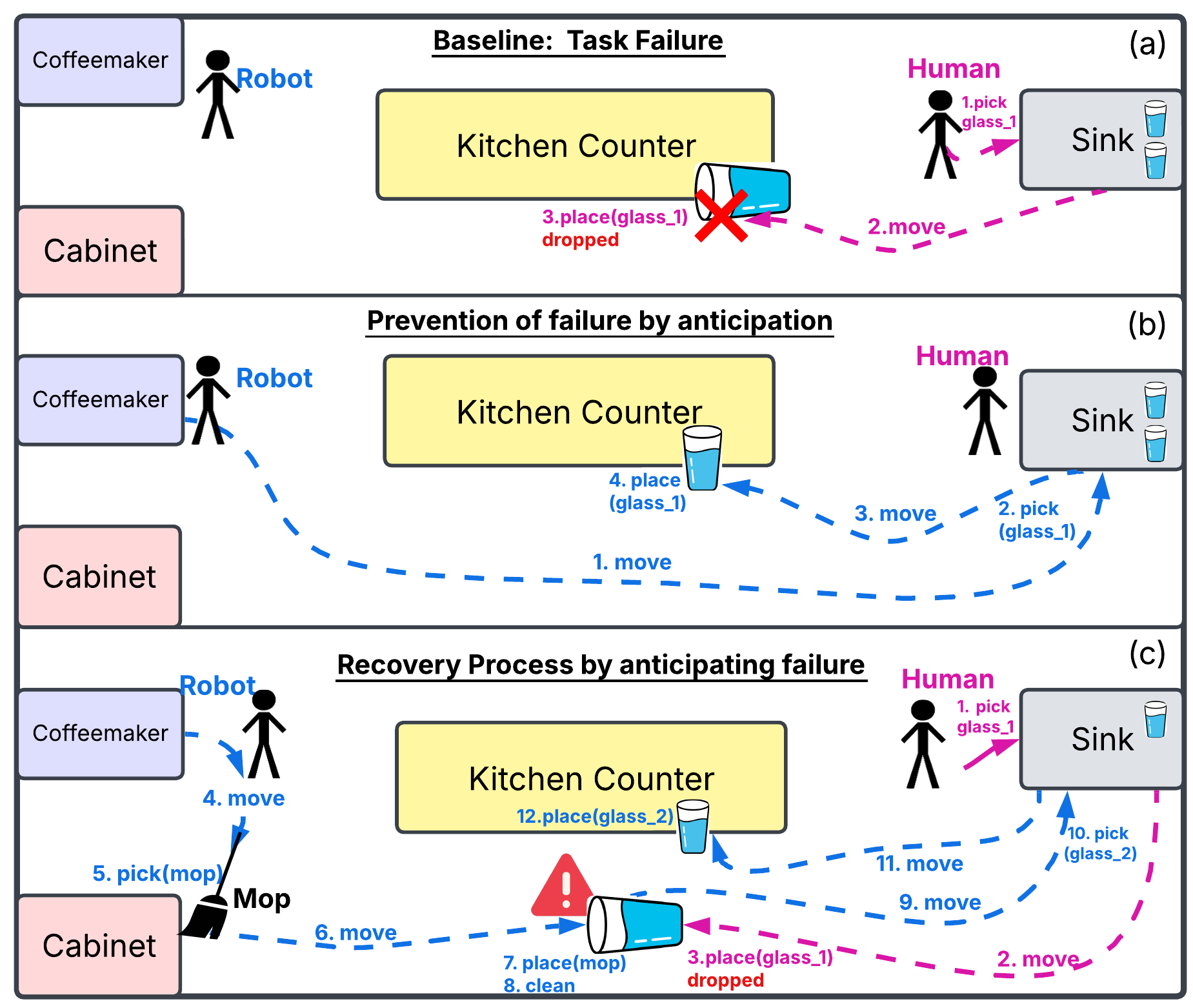

The system incorporates proactive failure handling through dual mechanisms: Failure Prevention and Failure Recovery. Failure Prevention employs predictive modeling and real-time monitoring of task execution to identify and mitigate potential issues before they occur, utilizing techniques such as redundant sensing and conservative action selection. Should a failure occur despite preventative measures, the Failure Recovery component activates, leveraging a library of pre-defined recovery actions and a probabilistic replanning algorithm to restore the system to a functional state. This dual approach aims to minimize downtime and maximize task completion rates by addressing both the anticipation and resolution of potential errors.
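One simple way to think about the choice between the two mechanisms is an expected-cost comparison. The rule below is an assumption for illustration, not the decision procedure described in the paper; the function name `should_prevent` and all cost values are hypothetical.

```python
def should_prevent(failure_prob, prevention_cost, recovery_cost):
    """Decide whether to act preventatively.

    Prevent when the expected cost of recovering later
    (failure_prob * recovery_cost) exceeds the cost of preventing now.
    This is an assumed rule, not the paper's method.
    """
    if not 0.0 <= failure_prob <= 1.0:
        raise ValueError("failure_prob must be in [0, 1]")
    return failure_prob * recovery_cost > prevention_cost


# Hypothetical costs in arbitrary units (e.g. seconds of robot time).
PREVENTION_COST = 2.0   # e.g. hand the human a steadier container first
RECOVERY_COST = 10.0    # e.g. clean up a spill and redo the step

print(should_prevent(0.4, PREVENTION_COST, RECOVERY_COST))  # likely failure
print(should_prevent(0.1, PREVENTION_COST, RECOVERY_COST))  # unlikely failure
```

With these assumed costs, prevention pays off only when the predicted failure probability is above 0.2; below that, it is cheaper to wait and recover if the failure actually happens.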

The integrated framework achieves an 85.5% subgoal completion rate by combining effective task planning with proactive issue management. This is accomplished through the simultaneous application of LLM-based task anticipation and RDDL-based probabilistic planning, allowing the system to not only determine optimal action sequences but also to forecast potential execution failures. The framework incorporates both failure prevention – identifying and avoiding problematic states – and failure recovery – implementing alternative plans when failures occur. This dual approach to robustness significantly improves performance compared to systems relying solely on reactive planning or static task sequences, enabling sustained operation in dynamic and uncertain environments.

Modeling Human Behavior for Robust System Performance

The Human Behavior Model is a probabilistic framework designed to represent the likelihood of various human actions within a shared environment. This model doesn’t attempt to predict specific actions, but rather assigns probabilities to a range of possible behaviors, including potential errors or failures in task execution. These probabilities are determined through observational data and statistical analysis of human-robot interaction scenarios. The model incorporates parameters representing factors such as task complexity, environmental conditions, and individual human characteristics to refine these probability estimations. The output of the model is a distribution of potential human actions and their associated failure rates, which serves as input for both proactive and reactive robot behaviors.
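As a rough illustration of deriving failure probabilities from observational data, the sketch below estimates a per-action failure rate from logged outcomes using additive (Laplace) smoothing. The estimator, the action names, and the log format are assumptions; the source does not specify how the model's probabilities are computed, and a fuller model would also condition on task complexity, environment, and the individual.

```python
from collections import Counter


def estimate_failure_rates(observations, alpha=1.0):
    """Estimate P(failure | action) from (action, failed) observations.

    Uses additive smoothing so that actions with few observations are not
    assigned a probability of exactly 0 or 1. `alpha` is an assumed prior
    strength, not a value from the paper.
    """
    attempts = Counter()
    failures = Counter()
    for action, failed in observations:
        attempts[action] += 1
        failures[action] += int(failed)
    return {
        action: (failures[action] + alpha) / (attempts[action] + 2 * alpha)
        for action in attempts
    }


# Hypothetical interaction log: (human action, whether it failed).
observations = [
    ("pour_water", True),
    ("pour_water", False),
    ("pour_water", False),
    ("open_fridge", False),
]

rates = estimate_failure_rates(observations)
for action, rate in sorted(rates.items()):
    print(f"{action}: {rate:.2f}")
```

The smoothed estimate for `open_fridge` stays above zero despite no observed failures, which keeps the planner from treating a rarely observed action as perfectly safe.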

The Human Behavior Model directly supports both proactive and reactive robotic behaviors. Failure Prevention strategies utilize predicted human actions – and associated probabilities – to anticipate potential issues and adjust robot behavior accordingly, thereby avoiding problematic scenarios before they arise. Conversely, when unexpected human actions do occur, the model informs Failure Recovery actions by providing a range of likely responses and enabling the robot to select the most effective course of action to mitigate the impact of the unforeseen event. This dual functionality allows for a more robust and adaptable system capable of operating effectively in dynamic human environments.

Validation and refinement of the Human Behavior Model are performed using the VirtualHome simulation environment. This allows for controlled experimentation and data collection regarding the probabilities of human actions and potential failures in a realistic domestic setting. Comparative analysis against baseline methods, lacking this modeled human behavior component, demonstrates a statistically significant increase in the number of failures prevented. Specifically, simulations reveal a measurable improvement in the robot’s ability to anticipate and avoid problematic interactions, leading to enhanced robustness and reliability in task execution. The simulation environment facilitates iterative model adjustments based on observed performance, optimizing the predictive accuracy of the Human Behavior Model.

Demonstrating Results and Charting Future Directions

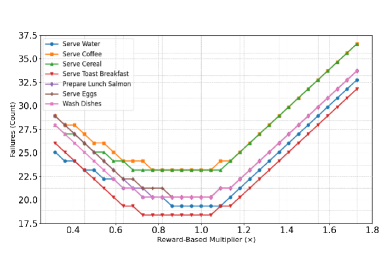

Evaluations in the VirtualHome simulation environment show higher task completion accuracy for the proposed framework than for the baseline planners it was compared against on household tasks. The gain is not a matter of speed: it reflects more reliable execution under the uncertainties of a dynamic, simulated home. Because these results come from simulation only, they suggest, rather than establish, that the approach will carry over to complex real-world settings.

Evaluations within the VirtualHome simulation reveal a substantial performance advantage for the developed framework. The system successfully completed 85.5% of defined subgoals during task execution, demonstrating a marked improvement over established methods. In direct comparison, a traditional RDDL-based approach achieved a subgoal completion rate of only 68.26%, while a large language model baseline lagged further behind at 44.55%. This quantitative result underscores the framework’s efficacy in navigating complex environments and achieving higher levels of task success through proactive planning and adaptive execution.
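The size of these gaps is easier to judge as differences. The short script below works out the absolute gain (in percentage points) and the relative gain over each baseline from the three reported rates; the dictionary keys are labels chosen for this example, not names from the paper.

```python
# Subgoal completion rates reported above, in percent.
rates = {"hybrid": 85.5, "rddl_only": 68.26, "llm_only": 44.55}


def gains(ours, baseline):
    """Return (absolute gain in percentage points, relative gain in percent)."""
    absolute = ours - baseline
    relative = 100.0 * absolute / baseline
    return absolute, relative


for name in ("rddl_only", "llm_only"):
    absolute, relative = gains(rates["hybrid"], rates[name])
    print(f"vs {name}: +{absolute:.2f} points, +{relative:.1f}% relative")
```

The hybrid framework is about 17.2 percentage points ahead of the RDDL-only baseline (roughly a 25% relative gain) and about 41 points ahead of the LLM-only baseline (roughly a 92% relative gain).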

The observed gains in task completion accuracy stem from the framework’s core strengths in preemptive reasoning and robust execution. Unlike conventional planning approaches, this system doesn’t simply react to immediate needs; it actively forecasts upcoming requirements, allowing for preparatory actions that streamline the overall process. Crucially, the framework is designed to navigate unpredictable environments by incorporating mechanisms for uncertainty modeling and real-time adaptation. When unexpected events or failures occur – a common challenge in dynamic settings – the system doesn’t halt; instead, it intelligently re-evaluates its plan, identifies alternative pathways, and proactively implements corrective measures, thereby maintaining progress even amidst disruption. This combination of foresight, flexibility, and resilience is the key driver behind the substantial performance improvements demonstrated within the VirtualHome simulation.

The demonstrated success within the VirtualHome simulation is intended as a stepping stone towards practical application; future development will prioritize translating this framework to address the intricacies of real-world environments. This expansion involves tackling challenges such as unpredictable conditions, incomplete information, and the need for robust error recovery in dynamic settings. Crucially, the research team aims to bridge the gap between simulated intelligence and physical action by integrating the framework with advanced robotic platforms, enabling robots to not only plan and anticipate tasks but also to execute them autonomously in complex, unstructured spaces. This integration promises to unlock new capabilities in areas such as assistive robotics, automated logistics, and search-and-rescue operations, ultimately moving beyond virtual proficiency towards tangible real-world impact.

The pursuit of robust human-robot collaboration, as detailed in this framework, echoes a fundamental principle of systemic design. The integration of LLMs with RDDL isn’t merely about predicting failures; it’s about creating a system capable of adapting to unforeseen circumstances, a hallmark of resilient structures. As John von Neumann observed, “The sciences do not try to explain, they hardly even try to interpret, they mainly make models.” In the same spirit, this research doesn’t explain away the inherent unpredictability of human action; it models that unpredictability so the robot can anticipate and respond, ensuring a more fluid and effective partnership. The framework’s emphasis on proactive failure handling demonstrates that structure, carefully considered, dictates behavior, a concept central to building truly collaborative systems.

Where Do We Go From Here?

This work demonstrates a path toward more robust human-robot collaboration, but systems break along invisible boundaries; if one cannot see them, pain is coming. The integration of Large Language Models with formal planning methods like RDDL offers a compelling synergy, yet the inherent fragility of relying on natural language understanding remains a central concern. The LLM component, while adept at predicting human actions, still operates as a ‘black box’; its reasoning is opaque, and failure modes are difficult to anticipate systematically. Future work must address this by developing methods for verifiable reasoning within the LLM itself, or by creating formal guarantees about its outputs.

Furthermore, the current framework’s reliance on a predefined reward mechanism presents a limitation. Real-world collaboration is rarely governed by explicit, quantifiable rewards. True adaptability requires a system capable of learning and internalizing nuanced, implicit social cues, a capacity that necessitates a deeper engagement with the principles of relational dynamics and game theory. The framework also currently assumes a relatively static environment; extending its capabilities to handle unforeseen circumstances and dynamically changing goals remains a significant challenge.

Ultimately, the pursuit of truly collaborative robots demands a shift in focus from merely anticipating failures to understanding the underlying structure of the collaborative process itself. Structure dictates behavior, and until we model the relational dependencies between humans and robots with sufficient fidelity, we will remain limited in our ability to create systems that are not only intelligent but also genuinely trustworthy.

Original article: https://arxiv.org/pdf/2602.19518.pdf

Contact the author: https://www.linkedin.com/in/avetisyan/


2026-02-25 05:14