Author: Denis Avetisyan

A new framework allows teams of robots to dynamically adjust their plans based on natural language instructions, enhancing collaboration and safety.

This work introduces CaPE, a language-guided path editing system for safe and interpretable multimodal motion planning in multi-agent robotics.

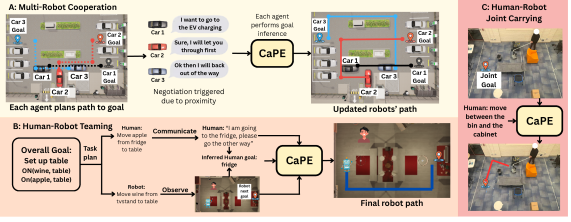

Achieving robust multi-agent cooperation demands adaptable planning, yet decentralized agents often struggle to predict one another’s intentions. The paper ‘Safe and Interpretable Multimodal Path Planning for Multi-Agent Cooperation’ presents CaPE, a framework that lets robots adjust their trajectories in response to natural language communication. CaPE uses a vision-language model to synthesize path editing programs, which a model-based planner then verifies, so that edited paths remain both safe and interpretable in collaborative scenarios. Could this approach unlock truly open-ended cooperation between robots and humans in complex, real-world environments?

The Inevitable Drift: Navigating Dynamic Systems

Conventional path planning methods work well in controlled settings but often break down in unpredictable real-world environments. They typically rely on predefined maps and static calculations, which prove inadequate when a robot meets unforeseen obstacles, dynamic changes, or incomplete information. Even minor alterations can force repeated recalculation or complete replanning, which hurts responsiveness and efficiency. A robot running a traditional planner may therefore get stuck, require human intervention, or fail to finish its task on time. This motivates navigation strategies that can adapt to the uncertainty of dynamic surroundings.

Successful collaboration, whether between humans and robots or among multiple robots, hinges on real-time path modification that goes beyond pre-programmed routes. Static plans are useful in predictable scenarios but falter in shared workspaces, where partners move and obstacles appear without warning. Effective collaboration instead requires continuous sensing, predictive modeling of partner trajectories, and the ability to replan quickly, not just to avoid collisions but also to streamline workflows and anticipate what collaborators need. This calls for algorithms that prioritize responsiveness and adaptability, so that agents can negotiate complex environments and reach shared goals through coordinated movement. The focus shifts from finding a path once to maintaining a collaborative path as conditions evolve.

CaPE: A Framework for Adaptive Trajectories

Traditional path planning systems often generate a single, fixed trajectory, which is inadequate for dynamic environments or unforeseen circumstances. CaPE, a language-guided path editing framework, addresses this limitation by letting agents adjust their planned paths in response to external input. The system interprets natural language instructions or reacts to environmental cues, such as a new obstacle or a changed goal location, and then modifies the existing trajectory rather than replanning from scratch. This improves adaptability and robustness in scenarios where static plans are likely to fail.

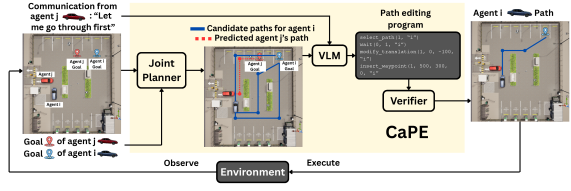

CaPE uses a Vision-Language Model (VLM) to interpret natural language commands and convert them into actionable path modifications for agents. The VLM processes both visual input, which represents the environment and agent states, and textual instructions such as “avoid the obstacle” or “go around the pedestrian”. This combined understanding lets the system generate concrete adjustments to pre-planned trajectories, including changes to waypoints, velocities, or overall route selection. Users can therefore steer multi-agent systems through intuitive language without supplying precise numerical inputs or writing code themselves.

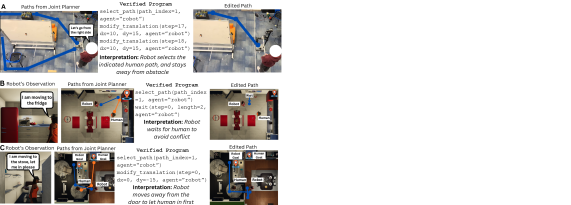

The CaPE system follows a sequential process. A ‘Planner’ module first generates multiple candidate paths for an agent to follow. These candidates are then passed to a Vision-Language Model (VLM), which refines them according to natural language instructions. Finally, a ‘Safety Verification’ module checks the refined path for potential collisions, so that a trajectory is executed only if it does not violate safety constraints. This three-stage process of planning, refinement, and verification allows paths to adapt to linguistic input while maintaining operational safety.
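The three stages can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the paper's implementation: the names `is_safe` and `plan_edit_verify` are hypothetical, the VLM refinement is replaced by an arbitrary `edit` callable, obstacles are assumed to be circles, and safety is checked only at waypoints rather than along whole segments.

```python
from math import hypot
from typing import Callable, List, Optional, Tuple

Point = Tuple[float, float]
Path = List[Point]
Obstacle = Tuple[Point, float]  # (center, radius); circular obstacles are an assumption


def is_safe(path: Path, obstacles: List[Obstacle], margin: float = 0.1) -> bool:
    """Return True if every waypoint keeps `margin` clearance from every obstacle."""
    for x, y in path:
        for (ox, oy), radius in obstacles:
            if hypot(x - ox, y - oy) < radius + margin:
                return False
    return True


def plan_edit_verify(
    candidates: List[Path],
    edit: Callable[[Path], Path],
    obstacles: List[Obstacle],
) -> Optional[Path]:
    """Take planner candidates, apply the edit step, and return the first verified path."""
    for candidate in candidates:
        edited = edit(candidate)          # stand-in for the VLM refinement stage
        if is_safe(edited, obstacles):    # verification gate before execution
            return edited
    return None                           # no edited candidate passed verification


# Hypothetical scene: one obstacle sitting on the straight-line route.
obstacles = [((1.0, 0.0), 0.3)]
candidates = [
    [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0)],  # straight path, hits the obstacle
    [(0.0, 0.0), (1.0, 1.0), (2.0, 0.0)],  # detour above the obstacle
]
result = plan_edit_verify(candidates, lambda path: path, obstacles)
print(result)
```

With the identity edit used here, the straight candidate is rejected and the detour is returned. The point of the structure is that an edit never reaches execution unless the verification step accepts it.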

The Architecture of Adaptation: Multimodal Sensory Integration

The CaPE system’s Vision-Language Model (VLM) utilizes a multimodal input approach, integrating four core data streams for path planning. These inputs consist of static map data providing environmental context, a set of candidate paths generated by the planner, predicted trajectories of surrounding agents obtained through ‘Trajectory Prediction’, and natural language instructions specifying desired navigational goals. This combined input allows the VLM to reason about both the static and dynamic elements within the environment, enabling informed path modification based on high-level instructions and anticipated agent behavior. The integration of these diverse data types is critical for robust and adaptable path planning in complex scenarios.
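One way to picture the four input streams is as a single record handed to the editing model. The sketch below is an assumption made for illustration: the class name `EditorInput`, its field names, and the grid encoding of the map are hypothetical and are not taken from the paper.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple

Point = Tuple[float, float]
Path = List[Point]


@dataclass
class EditorInput:
    """Bundle of the four inputs described above (field names are hypothetical)."""

    occupancy_map: List[List[int]]           # static map: 1 = blocked cell, 0 = free cell
    candidate_paths: List[Path]              # paths proposed by the planner
    predicted_trajectories: Dict[str, Path]  # agent id -> predicted waypoints
    instruction: str                         # natural language instruction

    def summary(self) -> str:
        """Return a short textual description of the bundle."""
        rows = len(self.occupancy_map)
        cols = len(self.occupancy_map[0]) if rows else 0
        return (
            f"map {rows}x{cols}, {len(self.candidate_paths)} candidate paths, "
            f"{len(self.predicted_trajectories)} predicted agents, "
            f"instruction: {self.instruction!r}"
        )


example = EditorInput(
    occupancy_map=[[0, 0, 0], [0, 1, 0]],
    candidate_paths=[[(0.0, 0.0), (2.0, 0.0)], [(0.0, 0.0), (1.0, 1.0), (2.0, 0.0)]],
    predicted_trajectories={"pedestrian": [(2.0, 1.0), (1.0, 1.0)]},
    instruction="go around the pedestrian",
)
print(example.summary())
```

Keeping the static map, the candidates, the predictions, and the instruction in one structure makes it explicit that the editor reasons over all four at once rather than over the instruction alone.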

The CaPE system’s Vision-Language Model (VLM) utilizes combined inputs to generate a ‘Path Editing Program’ consisting of a series of modifications to an initially proposed path. This program doesn’t simply overlay a new path; it analyzes the input data – including map features representing static obstacles, and predicted trajectories of other agents defining dynamic elements – to determine specific alterations. These alterations can include path smoothing, detours around obstacles, adjustments to avoid predicted collisions, and optimizations based on predicted agent behavior. The resulting program is then executed by the path planner to produce a refined trajectory that accounts for both fixed and moving elements within the environment.
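A path editing program can be thought of as a short list of named operations applied in order to an existing path. The following sketch assumes a tiny, hypothetical operation vocabulary (`shift` and `insert_waypoint`) and a hand-written program; the operations CaPE actually exposes to the VLM are not specified here.

```python
from typing import Any, Callable, Dict, List, Tuple

Point = Tuple[float, float]
Path = List[Point]
Step = Tuple[str, Dict[str, Any]]  # (operation name, keyword arguments)


def shift(path: Path, start: int, end: int, dx: float, dy: float) -> Path:
    """Translate the waypoints with index in [start, end) by (dx, dy)."""
    return [
        (x + dx, y + dy) if start <= i < end else (x, y)
        for i, (x, y) in enumerate(path)
    ]


def insert_waypoint(path: Path, index: int, x: float, y: float) -> Path:
    """Insert a new waypoint before position `index`."""
    return path[:index] + [(x, y)] + path[index:]


# Hypothetical operation vocabulary that a generated program may use.
OPERATIONS: Dict[str, Callable[..., Path]] = {
    "shift": shift,
    "insert_waypoint": insert_waypoint,
}


def run_program(path: Path, program: List[Step]) -> Path:
    """Apply each step in order; the input path is left unmodified."""
    for name, kwargs in program:
        path = OPERATIONS[name](path, **kwargs)
    return path


original = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0), (3.0, 0.0)]
program = [
    ("shift", {"start": 1, "end": 3, "dx": 0.0, "dy": 0.5}),  # bend the middle upward
    ("insert_waypoint", {"index": 2, "x": 1.5, "y": 0.75}),   # add an apex waypoint
]
edited = run_program(original, program)
print(edited)
```

Because every step is a named operation with explicit arguments, a person can read the program and see what changed, and a verifier can check the edited path before it is executed. In this example the endpoints are untouched, so the edited path still connects the same start and goal.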

Homotopy-aware path planning, integrated within the Planner component, generates a set of diverse initial paths. Two paths with the same endpoints belong to the same homotopy class if one can be continuously deformed into the other without passing through an obstacle; passing an obstacle on the left rather than on the right, for example, yields a different class. Rather than returning many near-identical variants of one route, a homotopy-aware planner favors candidates that are topologically distinct, so the set covers a wider range of feasible solutions. This diversity gives the Vision-Language Model (VLM) a richer set of options from which to select and refine a trajectory based on multimodal inputs and predicted environmental changes.
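To make the idea of topologically distinct candidates concrete, the sketch below groups paths by the angle each one sweeps around every obstacle. This is a simplified stand-in rather than the planner described in the paper: it assumes point obstacles in the plane, assumes waypoints are dense enough that no single segment sweeps more than half a turn around an obstacle, and the swept angle is a winding-style signature that can fail to separate some classes a full homotopy test would distinguish. The function names are hypothetical.

```python
from collections import defaultdict
from math import atan2, pi
from typing import Dict, List, Tuple

Point = Tuple[float, float]
Path = List[Point]


def swept_angle(path: Path, obstacle: Point) -> float:
    """Total signed angle (radians) the path sweeps around a point obstacle."""
    ox, oy = obstacle
    total = 0.0
    for (x0, y0), (x1, y1) in zip(path, path[1:]):
        delta = atan2(y1 - oy, x1 - ox) - atan2(y0 - oy, x0 - ox)
        # Wrap each step into (-pi, pi] so it reflects the short way around.
        while delta > pi:
            delta -= 2 * pi
        while delta <= -pi:
            delta += 2 * pi
        total += delta
    return total


def signature(path: Path, obstacles: List[Point]) -> Tuple[float, ...]:
    """Rounded swept angles, one per obstacle, used as a grouping key."""
    return tuple(round(swept_angle(path, obstacle), 3) for obstacle in obstacles)


def group_by_class(paths: List[Path], obstacles: List[Point]) -> List[List[Path]]:
    """Group paths that share a signature, keeping first-seen order."""
    classes: Dict[Tuple[float, ...], List[Path]] = defaultdict(list)
    for path in paths:
        classes[signature(path, obstacles)].append(path)
    return list(classes.values())


obstacles = [(1.0, 0.0)]
above = [(0.0, 0.0), (1.0, 1.0), (2.0, 0.0)]
above_wide = [(0.0, 0.0), (0.5, 0.5), (1.5, 0.5), (2.0, 0.0)]
below = [(0.0, 0.0), (1.0, -1.0), (2.0, 0.0)]
groups = group_by_class([above, above_wide, below], obstacles)
print(len(groups))
```

Here the two routes that pass above the obstacle share a signature and land in one group, while the route below it forms a second group. Picking one representative per group is one simple way to hand an editor a small set of candidates that are meaningfully different from one another.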

Beyond Simulation: Real-World Implications and Future Trajectories

The CaPE system is demonstrated in both human-robot collaboration and multi-robot teamwork. In ‘Joint Carrying’, a human and a robot work together to transport an object; in ‘Object Rearrangement’, multiple robots cooperatively reorganize items within a workspace. Both tasks require agents to coordinate their actions and adapt as the environment changes, which makes them a useful test of whether the framework can operate alongside humans and other robots. Its performance on these tasks positions CaPE as a promising framework for collaborative robotic systems.

In complex simulated multi-robot tasks, CaPE achieved a 60% success rate, whereas the baseline methods were unable to complete the tasks at all. The gap suggests that the framework handles the coordination demands of scenarios requiring synchronized action far better than the alternatives tested. The system also coped with unforeseen challenges within the simulation, a degree of adaptability that matters for real-world deployment, where perfect information is rarely available. The result sets a benchmark for multi-robot teamwork in dynamic environments, although a 60% success rate also leaves clear room for improvement.

Recent human-robot teaming trials reveal that the CaPE system consistently achieves superior performance in joint carrying tasks when compared to existing baseline models. Participants directly ranked CaPE’s collaborative efforts as significantly more effective, indicating a tangible improvement in the ease and success of shared manipulation. This enhanced performance isn’t simply a matter of robotic precision; it reflects CaPE’s ability to anticipate human movements and adapt its assistance accordingly, resulting in a smoother, more intuitive collaborative experience. The findings suggest a promising pathway toward robots seamlessly integrating into human workflows, offering genuine support and increasing overall efficiency in physically demanding tasks.

The presented CaPE framework, designed to facilitate adaptable multi-agent path planning through language-guided editing, inherently acknowledges the transient nature of solutions. Every adjustment, every correction communicated via natural language, is a response to the inevitable imperfections and evolving circumstances of a dynamic environment. As Donald Davies observed, “Every abstraction carries the weight of the past,” and CaPE embodies this by continually refining paths based on accumulated experience and communicated feedback. The system doesn’t strive for a perfect, static solution, but rather embraces a process of continual adaptation, ensuring resilience through slow, communicative change – a pragmatic approach to managing the decay inherent in all complex systems.

What Lies Ahead?

The presented framework, CaPE, represents a predictable step: the imposition of linguistic control onto a fundamentally physical system. Such interventions rarely introduce perfection, but rather a new class of failures. The inevitable discord between communicated intent and robotic execution will not negate the value of this work; it will define the next phase. Future iterations must address not the elimination of error – an impossible task – but the graceful accommodation of it. The system’s current reliance on interpretable paths is a laudable constraint, yet interpretability itself is a transient property, eroded by increasing complexity.

A pertinent question lingers: to what extent does imposing human-like communication onto multi-agent systems truly enhance coordination, or simply mirror the inefficiencies inherent in human collaboration? The pursuit of “natural” interaction risks conflating usability with optimality. The true measure of success will not be whether robots appear to cooperate, but whether they achieve outcomes beyond the capabilities of the agents acting independently, irrespective of perceived “naturalness.”

Ultimately, this work exemplifies a common trajectory in robotics: the initial enthusiasm for a novel interface gives way to the pragmatic realization that the interface is the problem. The long-term value lies not in the specifics of language-guided path editing, but in the accumulated understanding of how communication, even imperfect communication, can shape – and be shaped by – the evolution of collective robotic behavior. Time, as always, will reveal the true cost of this particular accommodation.

Original article: https://arxiv.org/pdf/2602.19304.pdf

Contact the author: https://www.linkedin.com/in/avetisyan/

2026-02-25 06:32