Author: Denis Avetisyan

Deploying robots in real-world settings presents unique challenges, and this article offers a collaboratively-built resource to help navigate them.

This review presents a checklist developed by the HRI community to articulate tacit knowledge and improve the safety and quality of field research with robots.

Despite growing interest in public human-robot interaction (HRI), valuable lessons from real-world deployments are often lost, leaving researchers to face the same challenges repeatedly. The paper, ‘A Checklist for Deploying Robots in Public: Articulating Tacit Knowledge in the HRI Community’, addresses this gap by presenting a collaboratively developed checklist designed to capture and share essential considerations for successful public HRI studies. Through interviews with experts and application to existing research, the authors demonstrate how this resource can refine deployment practices and improve both the quality and safety of fieldwork. Will this open-source, customizable checklist foster a more robust and collaborative approach to public robotics research?

The Disconnect Between Lab and Life

Human-Robot Interaction research has historically favored the precision of laboratory settings, allowing for meticulous control over variables and repeatable experiments. However, this approach often creates an artificial disconnect from the complexities of everyday life. Public spaces – bustling sidewalks, crowded cafes, or dynamic workplaces – present a stark contrast to the sterile environment of a lab, introducing unpredictable factors like varying lighting, ambient noise, diverse pedestrian behaviors, and unexpected obstacles. Consequently, robots that perform flawlessly in controlled tests may struggle with even basic navigation or social cues when deployed in real-world scenarios, highlighting a significant challenge in translating laboratory successes into practical, real-world applications. The nuanced social dynamics and inherent unpredictability of public environments demand a shift towards more ecologically valid HRI studies to develop truly adaptable and robust robotic systems.

The same gap holds back socially aware navigation. Research in controlled settings yields robots that perform well within defined parameters but struggle with spontaneous human movement, ambiguous social cues, and unexpected obstacles. To integrate into human society, a robot must not only perceive its surroundings but also interpret and respond appropriately to the nuanced, often unwritten rules of human interaction. Until robotic systems handle these complexities reliably, their potential for widespread social acceptance and practical application remains unrealized, and progress stays confined to carefully curated demonstrations.

Deploying robots beyond the laboratory necessitates a reckoning with the substantial, often unseen, work of field studies. Research transitioning to real-world environments isn’t simply a matter of replicating lab protocols; it demands considerable logistical support, meticulous data annotation that accounts for unpredictable variables, and dedicated personnel to manage interactions with the public. This “hidden labor” encompasses not only the technical challenges of maintaining robots in dynamic spaces, but also the ethical considerations of prolonged public engagement and the complex task of interpreting nuanced social cues in uncontrolled settings. Ignoring these demands can lead to flawed data, unsustainable research practices, and ultimately, robots ill-equipped to navigate the complexities of human environments. Acknowledging and addressing this labor is therefore crucial for fostering responsible innovation in human-robot interaction.

Standardizing Real-World Deployments

Ecological validity, the extent to which research findings generalize to real-world settings, is significantly enhanced through public robot deployments. Traditional Human-Robot Interaction (HRI) research frequently occurs in controlled laboratory environments which, while offering precision, often lack the unpredictable variables present in naturalistic settings. Deploying robots in public spaces introduces complexities such as diverse populations, variable lighting and sound conditions, and unanticipated human behaviors. Addressing these challenges during development and testing yields more robust and generalizable results, improving the potential for successful long-term integration of robots into everyday life and providing a more accurate assessment of HRI system performance than is possible within the confines of a laboratory.

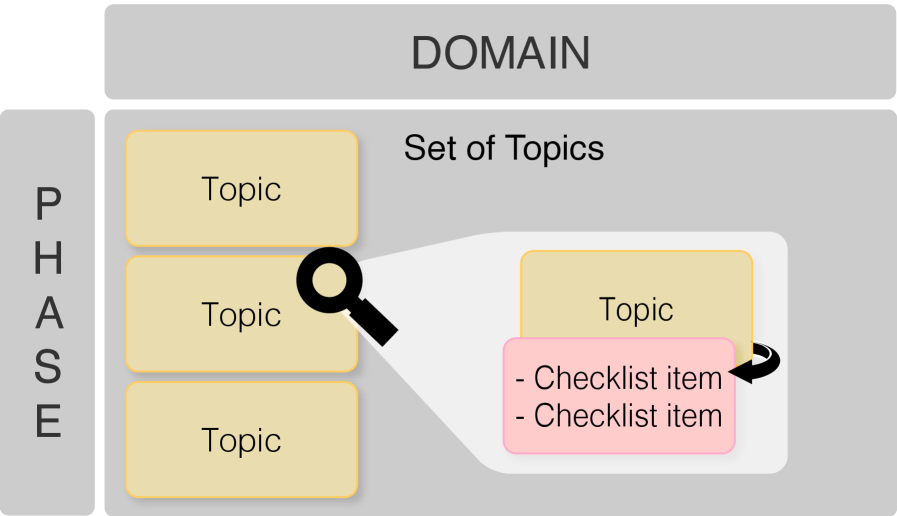

This research introduces a checklist designed to facilitate robot deployments in public environments, addressing the need for shared practical knowledge within the Human-Robot Interaction (HRI) community. The checklist is structured as a set of “flip cards” – a tangible format intended to promote accessibility and ease of use during deployment preparation and execution. Developed collaboratively with HRI researchers, the checklist consolidates lessons learned from prior public deployments, aiming to reduce redundant errors and improve the overall efficiency of field studies. The tool is intended to be a resource for documenting and disseminating best practices related to the logistical and practical challenges of operating robots outside of controlled laboratory settings.

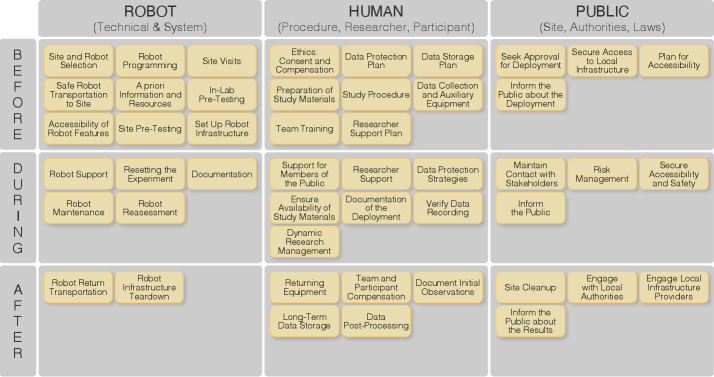

The deployment checklist categorizes considerations across three primary domains – Robot, Human, and Public – to provide a holistic framework for public human-robot interaction (HRI) research. These domains encompass factors such as robot hardware and software limitations, participant safety and informed consent procedures, and public space regulations and accessibility. The checklist further structures these considerations across distinct deployment phases – Planning, Preparation, Execution, and Post-Deployment – to facilitate systematic evaluation and iterative improvement of deployment protocols. By organizing knowledge in this manner, the checklist intends to minimize redundant errors, standardize best practices, and ultimately enhance the efficiency and reproducibility of public HRI studies.
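The domain-by-phase organization described above can be pictured as a small data structure. The following is a minimal sketch, not the authors' implementation: the class names, the `open_items` helper, and the example entries are all hypothetical, and only the three domains and four phases come from the article.

```python
from dataclasses import dataclass
from enum import Enum


class Domain(Enum):
    ROBOT = "Robot"
    HUMAN = "Human"
    PUBLIC = "Public"


class Phase(Enum):
    PLANNING = "Planning"
    PREPARATION = "Preparation"
    EXECUTION = "Execution"
    POST_DEPLOYMENT = "Post-Deployment"


@dataclass
class ChecklistItem:
    domain: Domain
    phase: Phase
    text: str
    done: bool = False


def open_items(items, phase):
    """Return the unchecked items for one phase, grouped by domain."""
    grouped = {domain: [] for domain in Domain}
    for item in items:
        if item.phase is phase and not item.done:
            grouped[item.domain].append(item.text)
    return grouped


# Hypothetical entries, loosely based on the factors named in the article.
items = [
    ChecklistItem(Domain.ROBOT, Phase.PLANNING, "List known hardware and software limitations"),
    ChecklistItem(Domain.HUMAN, Phase.PLANNING, "Draft the informed consent procedure", done=True),
    ChecklistItem(Domain.PUBLIC, Phase.PLANNING, "Check regulations for the chosen public space"),
    ChecklistItem(Domain.PUBLIC, Phase.EXECUTION, "Confirm the route stays accessible"),
]

for domain, texts in open_items(items, Phase.PLANNING).items():
    print(domain.value, texts)
```

Keying every item by both domain and phase means a team can ask "what is still open before we move on?" for any phase without rereading the whole list, which is one plausible way a customizable checklist might be put to work.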

Ethical Considerations: A Foundation for Trust

Public deployment of robotic systems necessitates rigorous ethical protocols, primarily concerning informed consent and data protection. Informed consent requires clear communication to individuals regarding the robot’s capabilities, data collection methods – including sensor types and storage locations – and the intended use of collected data. Data protection mandates compliance with relevant regulations, such as GDPR or CCPA, ensuring data minimization, purpose limitation, storage security, and individual rights to access, rectify, and erase personal data. Failure to obtain explicit consent or adequately protect collected data can result in legal repercussions, reputational damage, and erosion of public trust in robotic technologies. Organizations deploying these systems must establish transparent data governance policies and implement robust security measures to mitigate potential privacy breaches and ensure responsible data handling practices.

Proactive risk assessment is paramount in the deployment of robotic systems, requiring researchers to identify potential hazards throughout the design, development, and testing phases. This includes evaluating failure modes, considering environmental factors, and anticipating unintended consequences of robot actions. Mitigation strategies must be implemented to minimize potential harm to the public, encompassing both physical safety – such as collision avoidance systems and emergency stop mechanisms – and psychological well-being. Comprehensive testing, including simulations and real-world trials in controlled environments, is necessary to validate safety measures and ensure robust performance under various conditions. Documentation of identified risks, mitigation strategies, and testing results is crucial for transparency and accountability.
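One way to keep that documentation consistent is a simple risk register. The sketch below is illustrative only: the `RiskEntry` fields, the `needs_attention` helper, and the example hazards are assumptions made for this example, not a format prescribed by the paper.

```python
from dataclasses import dataclass, field


@dataclass
class RiskEntry:
    hazard: str
    kind: str                      # "physical" or "psychological"
    mitigation: str = ""           # empty string means none recorded yet
    test_results: list = field(default_factory=list)  # notes from simulation or trials


def needs_attention(register):
    """Return hazards that lack a mitigation or any documented test result."""
    return [r.hazard for r in register if not r.mitigation or not r.test_results]


# Hypothetical register entries for illustration.
register = [
    RiskEntry("Collision with a pedestrian", "physical",
              mitigation="Collision avoidance plus emergency stop",
              test_results=["Passed controlled-environment trial"]),
    RiskEntry("Bystander feels watched by the robot", "psychological",
              mitigation="Visible signage explaining the study"),
    RiskEntry("Robot blocks a doorway after a fault", "physical"),
]

print(needs_attention(register))
```

Here the second entry is flagged because its mitigation has no recorded test, and the third because nothing has been recorded at all, so the register doubles as a to-do list before deployment.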

Participatory design methodologies, including techniques like the Wizard of Oz method, are essential for developing socially acceptable and effective robotic systems. The Wizard of Oz technique allows researchers to simulate complex robotic behaviors using human operators hidden from the user, enabling the evaluation of user interactions and expectations before fully automated systems are built. This iterative process facilitates the identification of usability issues and allows for the collection of nuanced qualitative feedback regarding user experience, preferences, and potential concerns. By involving end-users throughout the design and development lifecycle, researchers can ensure that robotic systems are not only technically feasible but also align with user needs and are readily adopted in real-world scenarios, minimizing potential for negative social impact and maximizing user satisfaction.

Towards Truly Inclusive Robotics

Human-robot interaction research frequently centers on Western, educated, industrialized, rich, and democratic (WEIRD) populations, inadvertently limiting the scope and relevance of findings. This geographical and cultural bias hinders the development of truly generalizable HRI principles: robots designed and evaluated solely within these contexts may behave unpredictably or ineffectively when deployed in other cultural settings. The assumption that human social cues and expectations are universal does not hold, because cultural norms profoundly shape perceptions of robots, acceptable interaction styles, and even the very definition of ‘trust’ or ‘rapport’. Consequently, a lack of global perspectives not only restricts the impact of HRI research but also risks creating technologies that exacerbate existing inequalities or fail to meet the needs of a substantial portion of the world’s population. Addressing this requires a conscious shift toward inclusive and representative study designs.

Sustained engagement in long-term Human-Robot Interaction (HRI) field studies demands a proactive focus on researcher wellbeing. The immersive nature of these studies – often involving extended periods in diverse cultural contexts and consistent engagement with participants – can lead to considerable emotional and physical strain. Prolonged exposure to challenging environments, coupled with the cognitive demands of data collection and analysis, increases the risk of burnout, potentially compromising data quality and study longevity. Recognizing this, researchers are increasingly advocating for strategies that prioritize self-care, including adequate rest, access to mental health resources, and the implementation of robust support networks. By acknowledging the human cost of rigorous research and actively fostering a culture of wellbeing, the field can ensure the sustainability of crucial long-term studies and safeguard the valuable contributions of its researchers.

The future of human-robot interaction hinges on a deliberate shift towards socially responsible and culturally sensitive design. Current development often prioritizes technical prowess, yet truly impactful robots require systematic consideration of diverse global perspectives and ethical implications. This necessitates a proactive approach, integrating cultural understanding and social awareness into every stage of the design process – from initial conceptualization to long-term deployment. By intentionally building robots that respect cultural nuances, acknowledge varied societal values, and prioritize inclusivity, researchers move beyond simply creating functional machines and instead foster genuine, beneficial partnerships between humans and robots across the globe. Such an approach promises not only more effective technology, but also a future where robotic systems contribute positively to a wider range of communities and enhance human wellbeing for all.

The development of this checklist exemplifies a pursuit of clarity within a complex field. Researchers often operate with tacit knowledge: unwritten understandings honed through experience. This paper seeks to externalize that understanding, transforming it into a structured resource. As Blaise Pascal observed, “The eloquence of the body is proportioned to the poverty of the mind.” Similarly, a well-defined checklist diminishes the need for implicit, and potentially flawed, assumptions during robot deployment. By distilling best practices into a concise tool, the authors aim to reduce ambiguity and foster safer, more reliable public Human-Robot Interaction research, mirroring a desire to move from intuitive practice to deliberate, documented procedure.

What Lies Ahead?

This checklist is not an ending. It is a provisional map. The field often assumes robot deployment is primarily a technical problem. That assumption ages quickly. The real difficulty lies in the unpredictable nature of public interaction, and repeated failures suggest that this complexity has not been honestly accounted for.

Future work must move beyond enumerating potential problems. It requires robust methods for capturing unexpected outcomes. Qualitative data, while valuable, is prone to subjective interpretation. The challenge isn’t simply documenting what goes wrong, but discerning why it went wrong, with sufficient rigor to inform subsequent deployments. Abstractions age; principles don’t.

Ultimately, the goal isn’t a perfect checklist. It’s a culture of mindful deployment. A commitment to treating public space not as a testing ground, but as a shared environment. A recognition that the most important data isn’t about the robot, but about the people who encounter it.

Original article: https://arxiv.org/pdf/2602.19038.pdf

Contact the author: https://www.linkedin.com/in/avetisyan/

2026-02-24 18:57