Author: Denis Avetisyan

A new approach fine-tunes pre-trained navigation policies using reinforcement learning, allowing robots to safely and reliably explore environments they’ve never seen before.

This work introduces a reinforcement learning framework for adapting diffusion-based navigation policies, enabling robust sim-to-real transfer without requiring additional expert demonstrations.

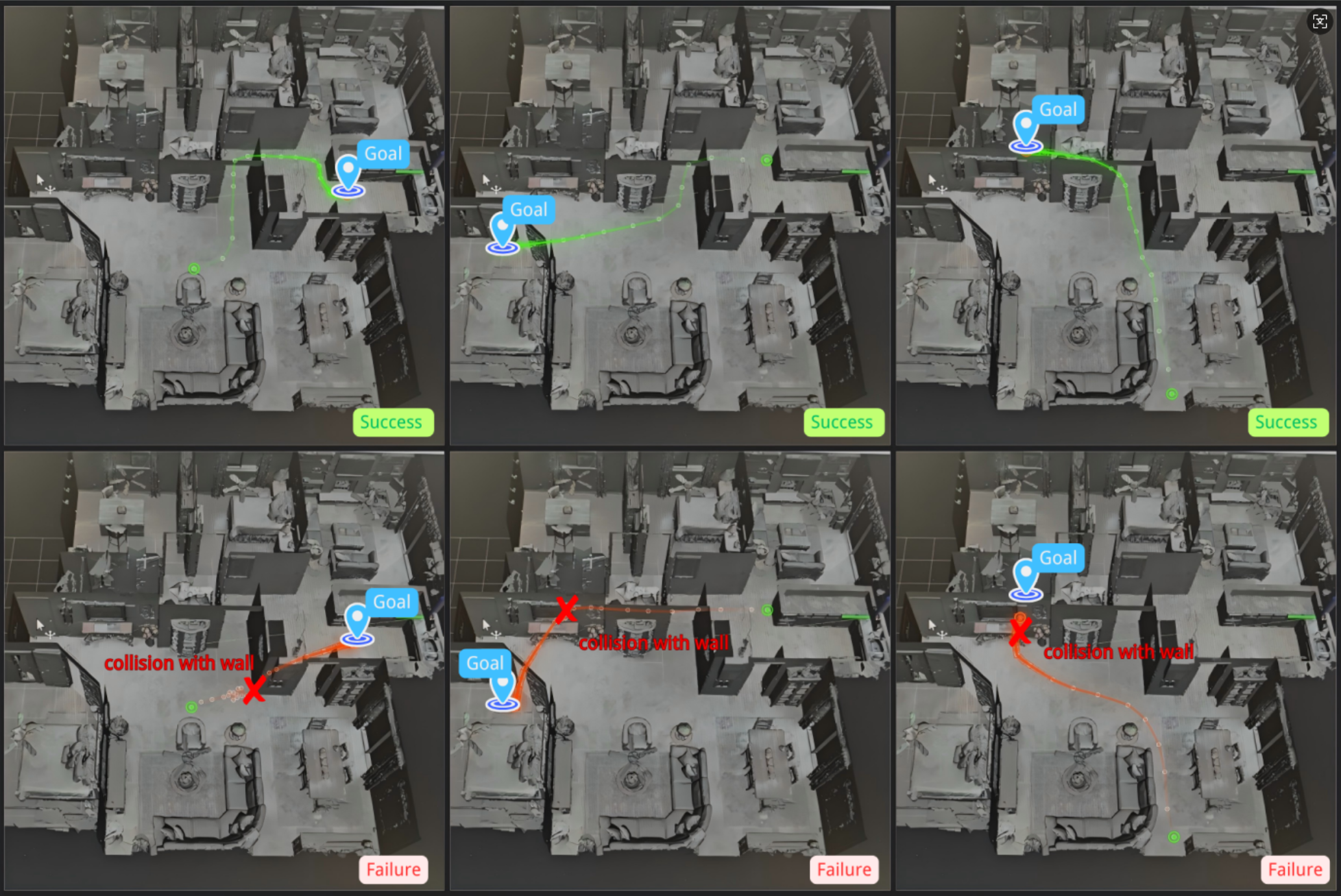

While diffusion-based robot navigation policies demonstrate impressive zero-shot generalization from large-scale imitation learning, their performance degrades in unseen environments due to distribution shift and accumulated errors. This work, ‘Beyond Imitation: Reinforcement Learning Fine-Tuning for Adaptive Diffusion Navigation Policies’, introduces a novel reinforcement learning framework to adapt these pretrained policies, leveraging their multi-trajectory sampling and a Group Relative Policy Optimization (GRPO) approach that avoids computationally expensive value network training. Experiments in simulation demonstrate improved success rates and reduced collisions, while successful transfer to a real quadruped platform suggests enhanced adaptability and safe generalization. Can this approach unlock truly robust and reliable robotic navigation in complex, real-world scenarios?

Deconstructing the Static World: The Limits of Traditional Robot Navigation

Conventional robotic navigation often depends on pre-defined movement paths, or trajectories, meticulously designed for static environments. However, this approach encounters significant challenges when confronted with the complexities of the real world – spaces filled with moving obstacles, unexpected changes, and inherent unpredictability. These carefully crafted trajectories struggle to adapt to dynamic scenarios, frequently requiring constant recalculation or resulting in collisions. The rigidity of these methods stems from their reliance on precise environmental maps and predictable behaviors, making them less effective in situations where real-time adjustments are crucial for successful navigation. Consequently, robots employing solely trajectory-based planning often exhibit limited autonomy and struggle to operate reliably outside of highly controlled settings.

Traditional robot trajectory planning frequently demands painstaking manual adjustments to perform even simple tasks, a process that proves brittle when confronted with unforeseen circumstances. This reliance on precisely calibrated motions restricts a robot’s ability to adapt to dynamic environments – a cluttered warehouse, a bustling home, or an uneven outdoor terrain. Each new scenario often necessitates a complete re-tuning of the system, hindering the development of truly autonomous machines capable of operating reliably in the real world. The inability to generalize learned behaviors from one situation to another represents a significant obstacle, limiting the deployment of robotic systems beyond highly structured and predictable settings and ultimately impeding progress toward widespread robotic assistance.

Unpredictability as a Feature: Diffusion Policies and the Art of Anticipation

A Diffusion Policy is a generative model specifically designed to learn the probability distribution governing robot trajectories. This approach contrasts with traditional policies that output a single, deterministic action; instead, the Diffusion Policy learns to represent the range of plausible paths a robot can take to achieve a given goal. By modeling this distribution, the policy can then sample diverse trajectories, enabling adaptability and robustness in dynamic or uncertain environments. The learned distribution allows the system to move beyond pre-defined paths and explore a wider solution space, improving performance in complex scenarios. This generative capability is achieved through a process of learning to map states to probability distributions over future states and actions.

The Diffusion Policy employs an iterative refinement process, termed a ‘denoising chain’, to generate robot trajectories. This chain begins with a purely random trajectory and progressively refines it through multiple steps. Each step involves predicting and removing noise from the current trajectory, guided by the learned distribution of feasible paths. This iterative denoising allows the policy to explore a wide range of possible trajectories, ultimately converging on a feasible and diverse set of paths. The result is improved robustness in navigation, as the robot is not limited to a single, pre-defined solution, but can adapt to unforeseen circumstances by sampling from the generated distribution of refined trajectories.

The Diffusion Policy operates by modeling a ‘Trajectory Distribution’ which encapsulates the probability of all potential robot paths given a specific goal. This distribution is not explicitly defined but is instead learned through a diffusion process, allowing the policy to represent complex, multi-modal possibilities. By sampling from this learned distribution, the policy generates diverse trajectories, effectively exploring the solution space and enabling the robot to adapt to varying environmental conditions and unforeseen obstacles. The ability to represent and sample from this trajectory distribution is fundamental to the policy’s robustness and its capacity to plan in dynamic environments.

The Economy of Adaptation: Selective Fine-Tuning for Efficient Learning

Training a Diffusion Policy from scratch requires substantial computational resources due to the complexity of the diffusion model and the policy learning process. The diffusion model necessitates numerous iterative steps to generate trajectories, each requiring forward and backward passes through a neural network. Furthermore, the policy optimization process, typically employing reinforcement learning techniques, demands extensive environment interactions and gradient computations. These factors combine to create a high computational burden, manifesting as long training times and significant GPU memory requirements, particularly when dealing with high-dimensional observation and action spaces. This expense limits the feasibility of training such policies for complex tasks or in environments where data collection is costly.

Selective fine-tuning is a training methodology that improves computational efficiency and learning speed by strategically updating only a subset of a model’s parameters. Rather than retraining all layers of a pre-trained diffusion policy, this technique fixes the weights of selected layers – preserving learned representations – while updating the remaining layers to adapt to a new task or environment. This approach reduces the number of trainable parameters, thereby decreasing computational cost and the amount of data required for effective adaptation. Consequently, selective fine-tuning allows for faster convergence and improved sample efficiency compared to full model training.

Selective fine-tuning of diffusion-based navigation policies yields a measurable improvement in performance within unseen simulation environments. Empirical results demonstrate an increase in success rate from 52.0% to 58.7% when employing this technique. This performance gain is attributed to the method’s capacity to retain previously learned knowledge – encoded within the frozen layers – while simultaneously adapting to the specifics of novel environments through updates to the remaining, trainable parameters. This mitigates the need for extensive retraining and enhances the efficiency of deployment in previously unencountered scenarios.

Beyond the Oracle: Critic-Free Reinforcement and the Pursuit of Elegance

Conventional reinforcement learning algorithms frequently incorporate intricate value networks – neural networks designed to estimate the long-term reward associated with different states and actions. While intended to guide the learning process, these networks introduce significant computational demands, increasing training time and resource consumption. More critically, the complexity of these value networks can introduce instability; slight errors in value estimation can propagate through the system, leading to erratic behavior and hindering the agent’s ability to converge on an optimal policy. This reliance on accurate value estimation represents a core challenge in many reinforcement learning applications, prompting research into alternative methods that minimize or eliminate this dependency.

Conventional reinforcement learning algorithms frequently depend on a ‘critic’ – a separate neural network tasked with evaluating the quality of actions taken by an ‘agent’ – which adds significant computational complexity and can introduce instability during training. A novel approach, termed ‘Critic-Free RL’, bypasses this requirement entirely. By removing the critic, the learning pipeline is substantially simplified, reducing the number of parameters needing optimization and accelerating the training process. This streamlined architecture doesn’t sacrifice performance; instead, it allows the agent to learn directly from the rewards received, focusing computational resources on refining its policy without the intermediary step of value estimation. The result is a more efficient and robust learning system, particularly advantageous in complex environments where accurate value function approximation proves challenging.

Trajectory-level optimization represents a paradigm shift in reinforcement learning, moving away from incremental, step-by-step improvements to holistic evaluations of complete behavioral sequences. Instead of relying on a critic to assess individual actions, this method directly measures the success of an entire trajectory – from initial state to final outcome – and utilizes this comprehensive feedback to refine the agent’s policy. This direct assessment circumvents the instabilities often associated with value function approximation, enabling significantly faster convergence during training. By focusing on the overall performance of a trajectory, the algorithm efficiently identifies and reinforces successful patterns of behavior, resulting in improved performance and a streamlined learning process that requires fewer computational resources.

Embodied Intelligence: Unitree Go2 and the Threshold of Real-World Autonomy

The developed methodology transitioned from simulation to practical application through deployment on the Unitree Go2 quadruped robot, a platform chosen for its dynamic capabilities and relevance to real-world navigation challenges. This physical embodiment allowed for a rigorous assessment of the approach’s efficacy beyond controlled virtual environments. Researchers subjected the robot to a series of trials designed to mimic the complexities of human-populated spaces, testing its ability to adapt and maintain stable locomotion. The successful operation of the system on this physical platform validates the robustness and potential of the developed algorithms for broader robotic applications, marking a significant step towards truly autonomous navigation in unstructured environments.

The system’s core functionality relies on a Model Predictive Control (MPC) controller, which leverages predicted future trajectories to orchestrate remarkably precise and stable robot movement. In testing with the Unitree Go2 quadruped, this approach demonstrated a 70% success rate navigating challenging real-world scenarios – specifically, both the confined spaces of narrow corridors and the dynamic obstacle courses presented by cluttered office environments. By proactively anticipating the robot’s path and adjusting its gait accordingly, the MPC controller minimizes errors and maintains balance even amidst complexity, representing a significant advancement over conventional control methods and their comparatively lower 50% success rate in the same conditions.

The successful implementation of this novel approach on a quadruped robot demonstrably improves navigational capabilities in challenging real-world scenarios. Achieving a 70% success rate in both narrow corridors and cluttered office environments – a significant leap from the 50% baseline performance of pretrained models – suggests a pathway toward robots with substantially increased autonomy. This advancement isn’t merely about improved percentages; it indicates a fundamental shift in a robot’s ability to interpret and react to unpredictable surroundings. Consequently, future iterations promise machines capable of operating effectively in unstructured spaces – from warehouses and construction sites to disaster relief zones – with a level of adaptability previously unattainable, minimizing the need for constant human intervention and maximizing operational efficiency.

The pursuit of robust robotic navigation, as detailed in this work, mirrors a fundamental principle of system analysis. It acknowledges that even the most sophisticated pretrained diffusion models-essentially, complex systems built on probabilities-will inevitably encounter unforeseen circumstances. The paper’s focus on reinforcement learning as a fine-tuning mechanism isn’t simply about improving performance; it’s about actively probing the model’s boundaries, revealing its limitations through interaction with novel environments. As Claude Shannon observed, “The most important thing in a good experiment is to look for things that are unexpected.” This research embodies that spirit, deliberately stressing the system to uncover vulnerabilities and then intelligently adapting it for greater resilience, ultimately pushing the boundaries of sim-to-real transfer.

What Lies Ahead?

The presented work dismantles a comfortable assumption: that a foundation model, however elegantly pretrained, is sufficient for real-world deployment. It acknowledges, tacitly, that even the most sophisticated generative system requires a period of iterative stress-testing – a controlled breaking – to reveal the brittle points in its reasoning. The fine-tuning via reinforcement learning isn’t about teaching navigation so much as discovering the boundaries of the diffusion model’s implicit world understanding. This is less artificial intelligence, and more applied epistemology – a system probing its own knowledge.

Future iterations will inevitably wrestle with the limitations inherent in defining “safe” and “reliable” within a continuous action space. The current framework sidesteps the need for extensive expert data, but at what cost in terms of exploration efficiency? The true test won’t be achieving navigation, but minimizing the volume of potentially catastrophic failures during the learning process. Scaling this approach to more complex environments-those with dynamic obstacles or incomplete information-will demand a reckoning with the fundamental trade-off between exploration and exploitation.

Ultimately, this research highlights a broader shift. The era of simply building intelligent systems is yielding to an era of interrogating them. The goal isn’t to create a perfect mimic of intelligent behavior, but to expose the underlying mechanisms-the elegant, often surprising, ways in which these systems represent and interact with the world. And, naturally, to see precisely where they fall apart.

Original article: https://arxiv.org/pdf/2603.12868.pdf

Contact the author: https://www.linkedin.com/in/avetisyan/

See also:

- ‘Project Hail Mary’s Unexpected Post-Credits Scene Is Worth Sticking Around

- Total Football free codes and how to redeem them (March 2026)

- Limbus Company 2026 Roadmap Revealed

- The Division Resurgence Specializations Guide: Best Specialization for Beginners

- After THAT A Woman of Substance cliffhanger, here’s what will happen in a second season

- Brawl Stars Sands of Time Brawl Pass brings Sandstalker Lily and Sultan Cordelius sets, along with chromas and more

- Brawl Stars Brawl Cup Pro Pass arrives with the Dragon Crow skin and Chroma, unique cosmetics, and more rewards

- Clash of Clans April 2026 Gold Pass Season introduces a Archer Queen skin

- XO, Kitty season 3 soundtrack: The songs you may recognise from the Netflix show

- Gold Rate Forecast

2026-03-17 05:03