Beyond Words: How Reasoning Models Build Abstract Understanding

New research reveals that advanced reasoning systems aren’t just processing language; they’re dynamically reshaping their internal representations to grasp the underlying structure of problems.

![The study demonstrates that while idealized multi-agent flocking (measured by alignment [latex]\gamma[/latex] and inter-agent distances) maintains coherence, the introduction of even modest delays and noise predictably degrades performance, manifesting as deviations in centroid path length [latex]S[/latex] and reduced overall flock stability.](https://arxiv.org/html/2602.04012v1/x9.png)

![Across the SIR, CR, and gases datasets, the average magnitude of forecasting error, measured as Mean Absolute Error ([latex]MAE[/latex]), varied predictably with increasing noise levels, indicating a consistent sensitivity to data quality regardless of the specific time series being analyzed.](https://arxiv.org/html/2602.04114v1/test_Avg_MAE_per_noise_gases.png)

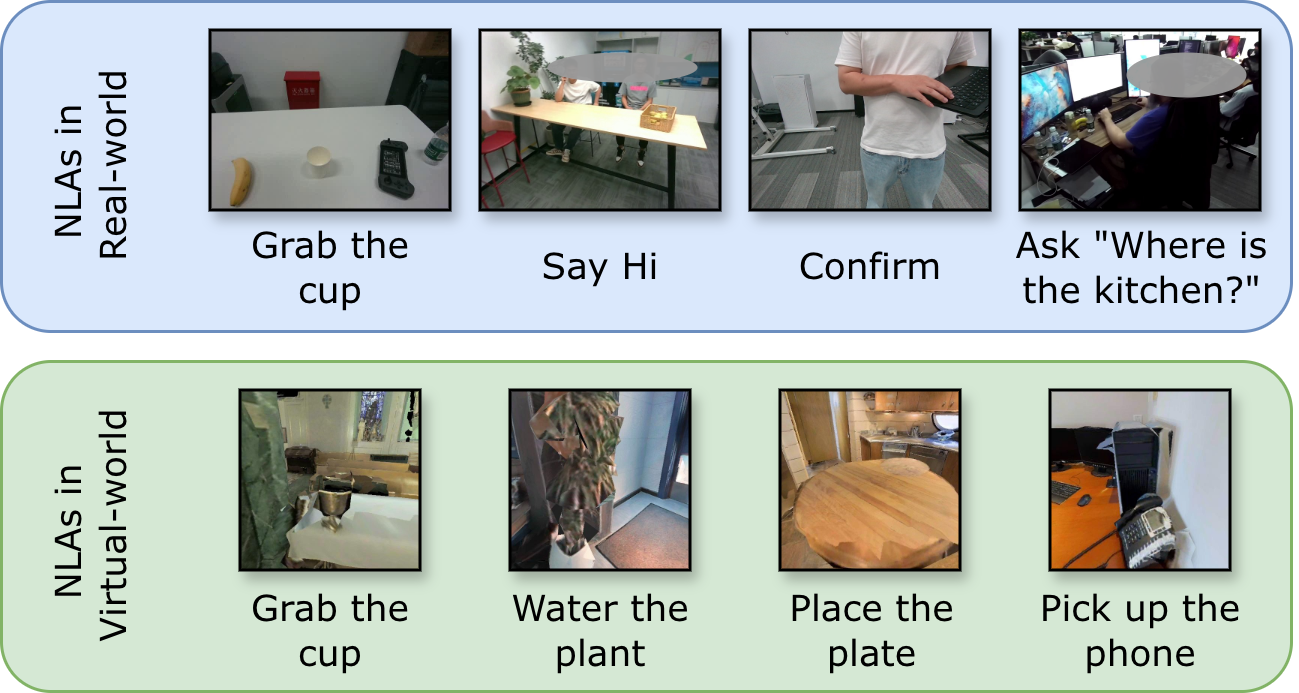

![The system integrates streaming egocentric vision and audio via a real-time multimodal language model, not only to generate spoken dialogue but also to proactively issue low-latency function calls (such as directing gaze to specific people, objects, or areas) that dynamically update perceptual context and drive active perception through external tools like [latex]Look\_at\_Person[/latex], [latex]Look\_at\_Object[/latex], and [latex]Use\_Vision[/latex].](https://arxiv.org/html/2602.04157v1/figs/main_fig.png)