Beyond Words: How AI Builds Its Own Languages

New research reveals that vision-language models can develop task-specific communication systems that diverge from human language in surprising ways.

New research shows how continuous-time neural networks can enhance our ability to model and understand complex systems from limited data.

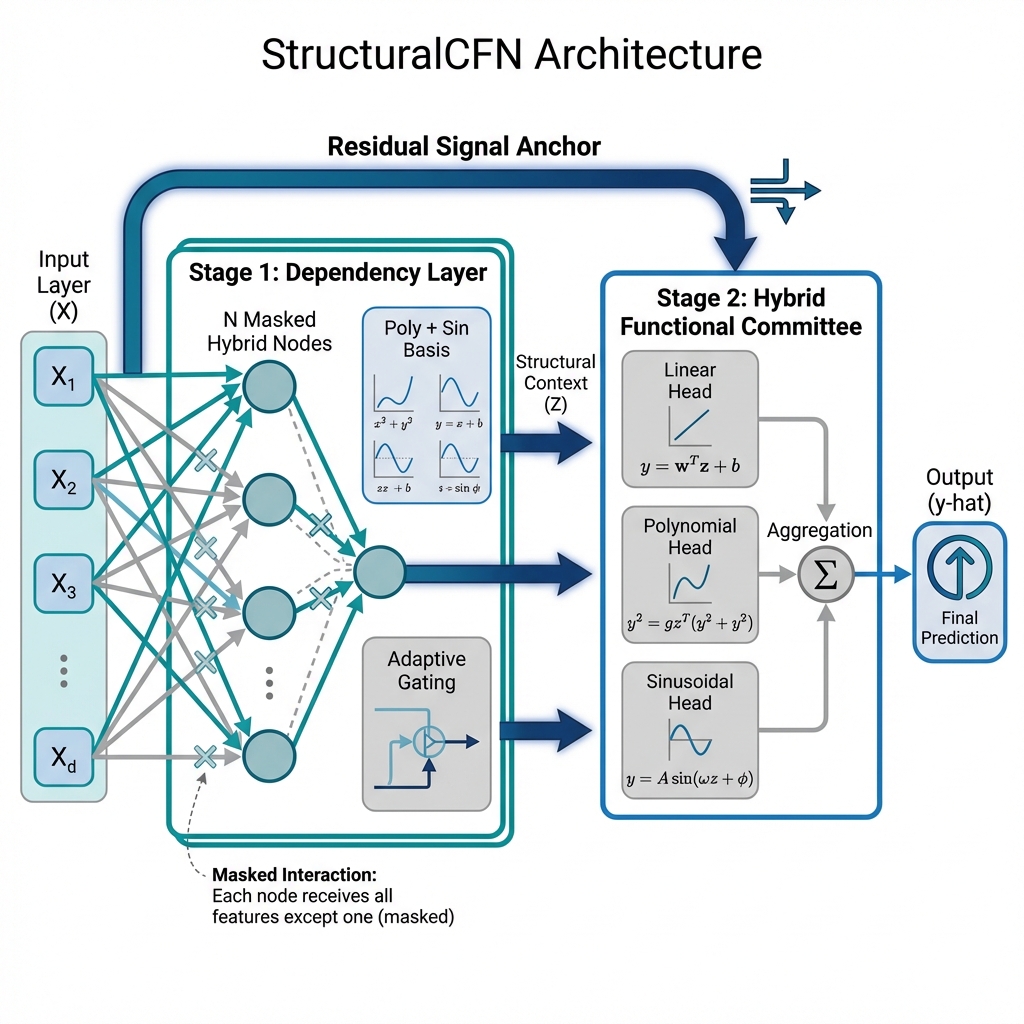

A new neural network architecture prioritizes interpretability and efficiency by explicitly modeling the mathematical connections between features in structured data.

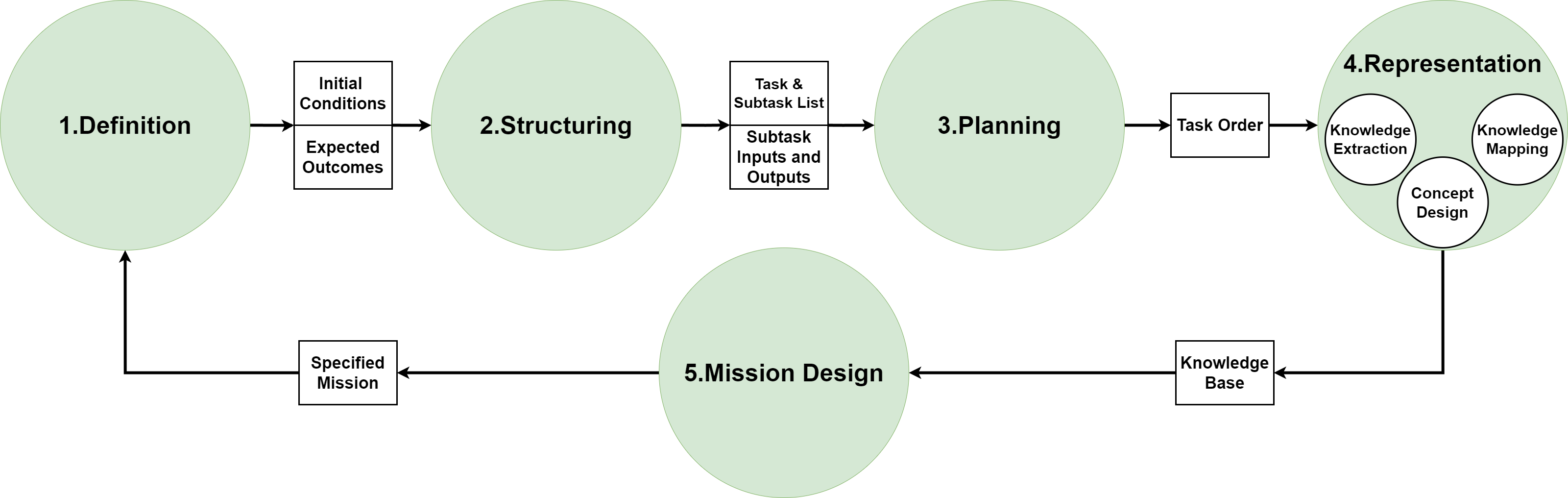

New research details a robust methodology for equipping robots with knowledge graphs, enabling more intelligent and adaptable mission execution.

Researchers have developed a new interface that lets users communicate animation ideas to artificial intelligence through intuitive, hand-drawn sketches and iterative refinement.

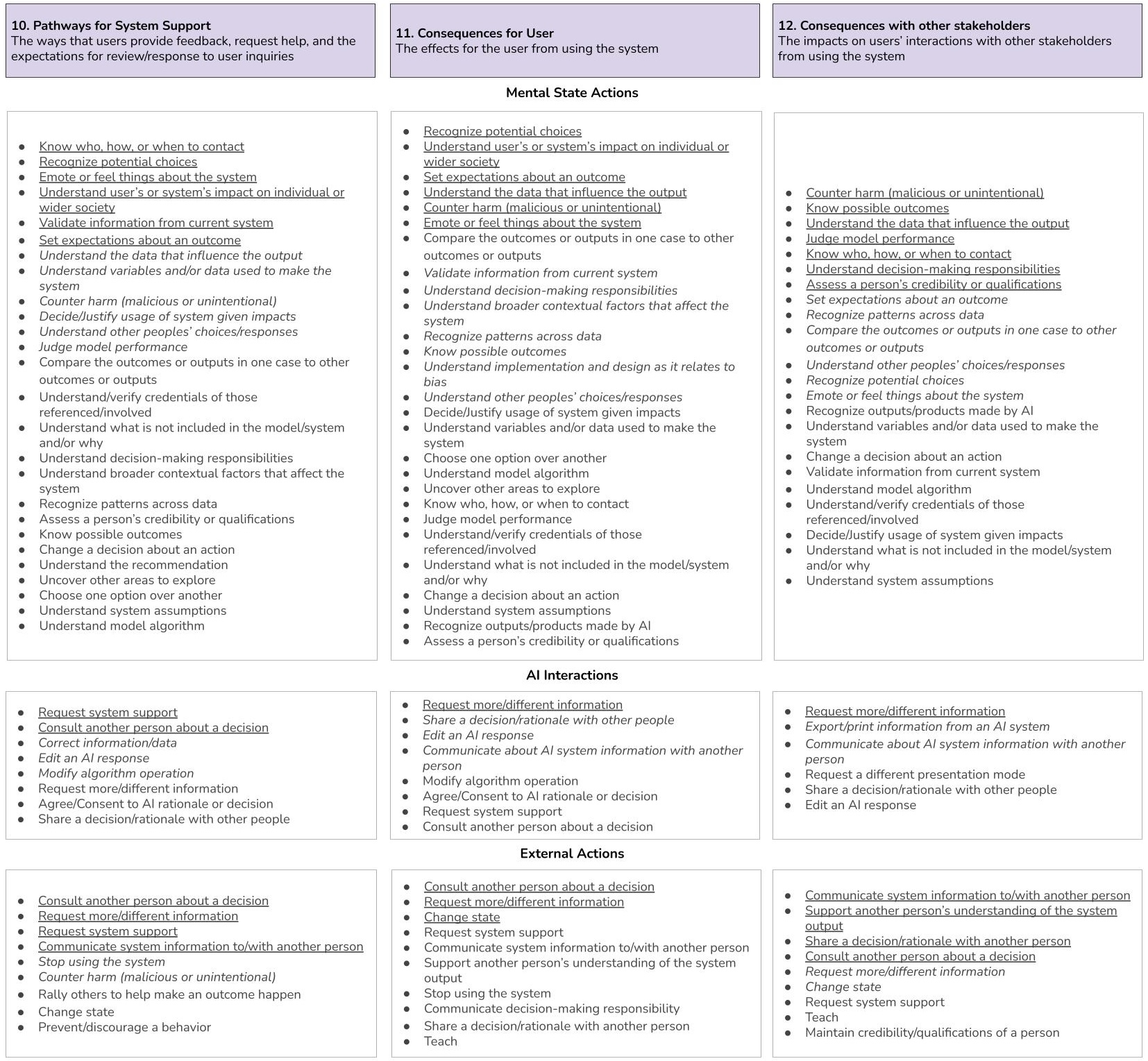

A new framework assesses how well AI explanations empower users to take meaningful action, shifting the focus from interpretability to practical impact.

![The system operates through a layered planning process, initially guided by a large language model (→), then extended through specialized modules (⇒) to refine and expand upon the initial strategic framework.](https://arxiv.org/html/2601.20577v1/x1.png)

A new framework enables teams of robots to learn from past tasks, dramatically improving efficiency and reducing the need for constant re-planning.

A new agentic AI framework promises to unlock significant energy savings and automation in building operations.

New research shows that artificial intelligence agents can learn to control robots through trial and error, without needing pre-programmed demonstrations.

A new framework streamlines research by converting initial ideas into complete scientific narratives, accelerating discovery and knowledge synthesis.