Robots Learn by Watching: Predicting Motion for Flexible Imitation

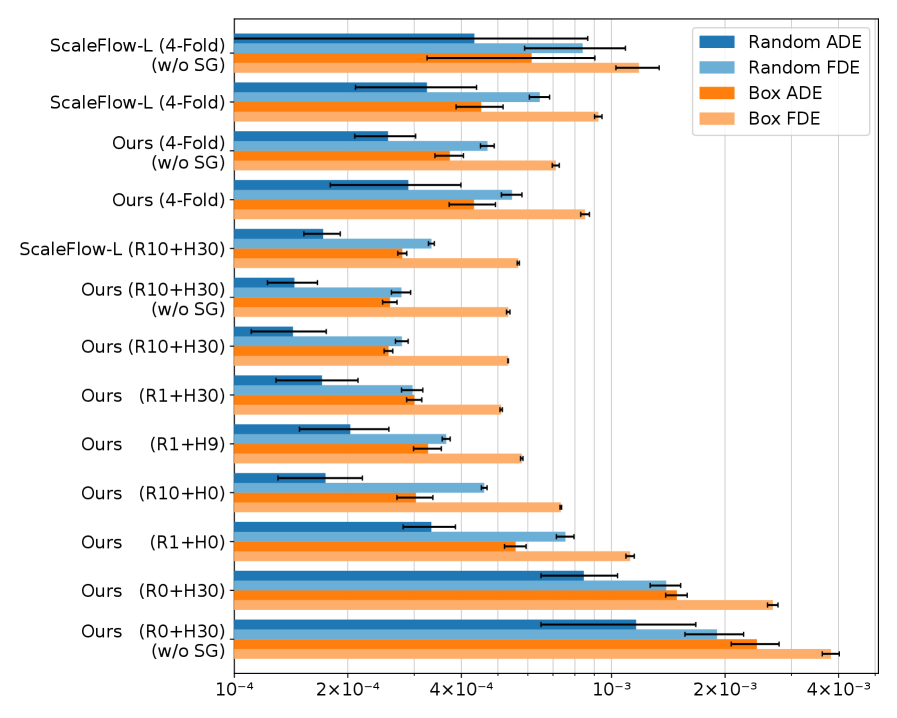

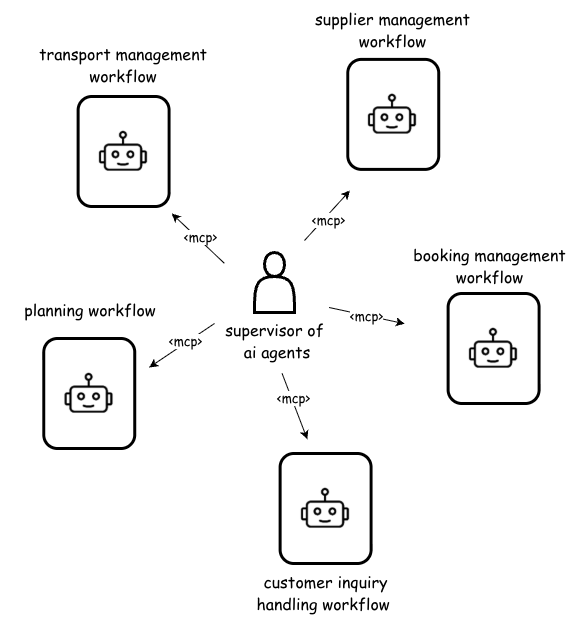

New research shows how robots can learn complex tasks from a limited number of human demonstrations by predicting 3D scene flow and working from cropped point cloud data.

![A significant chasm exists between the streamlined, computationally focused workflows currently dominating early-stage drug discovery (reliant on representations like SMILES strings, curated databases, and text mining) and the complex, multi-modal biological realities of iterative wet lab experimentation and multi-objective optimization inherent in bringing therapies to fruition, a limitation this work seeks to address through architectural innovation.](https://arxiv.org/html/2602.10163v1/x1.png)