Author: Denis Avetisyan

A new, affordable robot platform powered by large language models is opening doors for researchers studying how physical form impacts human-AI trust and engagement.

Botson is an open-source, low-cost platform designed to facilitate research in human-robot interaction, affective computing, and the role of embodiment in building trust.

Despite advances in artificial intelligence, establishing genuine trust remains a significant hurdle for effective human-AI collaboration, particularly with disembodied agents. This paper introduces Botson, an accessible and low-cost platform for social robotics research, designed to investigate the role of physical embodiment in fostering more natural and trustworthy interactions. Botson is an anthropomorphic robot powered by a large language model, enabling exploration of non-verbal cues and affective behaviors. Will providing AI with a physical presence ultimately prove crucial for building truly collaborative relationships with humans?

The Architecture of Trust: Bridging the Gap in Human-Robot Interaction

Effective human-robot interaction transcends mere task completion; it hinges on the cultivation of trust and rapport between people and machines. While robotic functionality is essential, a lack of social connection can impede acceptance and collaboration. Research indicates that individuals are more likely to engage positively with robots perceived as approachable and understanding, qualities fostered through consistent, predictable behavior and the capacity to acknowledge human emotional states. This emphasis on the relational aspect of HRI suggests that designing robots capable of building trust is not simply a matter of improving performance, but of creating genuinely engaging and cooperative partners.

Historically, the field of robotics has heavily prioritized functional performance, often neglecting the subtle, yet critical, role of non-verbal communication in building effective human-robot relationships. Traditional robotic designs frequently lack the capacity to express or interpret emotional cues – things like facial expressions, body language, and tone of voice – which are fundamental to how humans establish trust and rapport with one another. This oversight can lead to interactions feeling cold, unnatural, and ultimately, untrustworthy, hindering the potential for seamless collaboration and acceptance of robotic systems in everyday life. The absence of these nuanced signals creates a disconnect, as humans instinctively rely on these cues to gauge intent, sincerity, and emotional state, elements essential for fostering genuine connection and reliable partnership.

Botson represents a novel approach to human-robot interaction by seamlessly merging the sophisticated reasoning capabilities of a Large Language Model with a tangible, physically present robotic platform. This integration moves beyond purely functional exchanges, enabling Botson to prioritize natural interaction through both verbal and non-verbal cues. The design intentionally centers on replicating the nuances of human communication – including expressive movements and contextual awareness – to foster a more intuitive and engaging experience for users. By embodying artificial intelligence, Botson aims to bridge the gap between technology and human social understanding, ultimately creating a more trustworthy and relatable robotic companion.

Recent usability studies evaluating Botson, a physically embodied robotic agent integrated with a Large Language Model, reveal a significant preference for embodied interaction when establishing trust with users. Participants consistently rated Botson with a trust score of 3.00 on a 5-point scale, a demonstrably higher value than that assigned to comparable voice-only agents. This suggests that a physical presence, which allows for non-verbal cues and a more natural interaction, plays a crucial role in fostering a sense of reliability and connection. The findings highlight the importance of considering embodiment as a key factor in the design of effective and trustworthy robotic companions, moving beyond purely functional capabilities to address the nuanced aspects of human-robot rapport.

Constructing the System: Botson as a Platform for Socially Aware Robotics

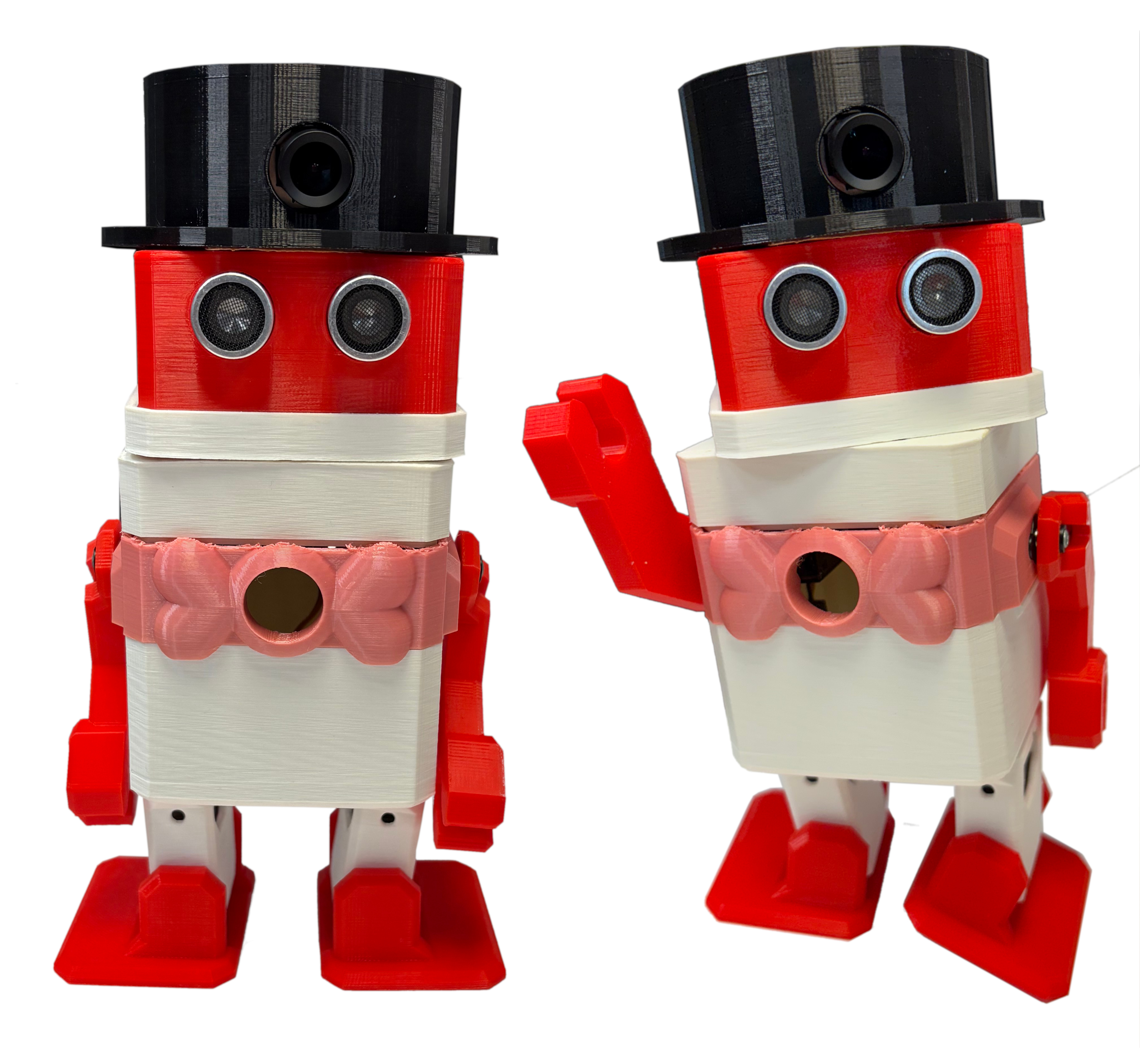

The Botson robot platform is built upon the Otto DIY chassis, a pre-engineered robotic kit designed for ease of assembly and modification. This choice of a commercially available base simplifies the construction process, reducing the need for extensive fabrication of the robot’s core structure. The Otto chassis provides a robust and mobile foundation, incorporating wheels, motors, and basic structural components. Its modular design allows users to readily adapt the physical form of Botson by adding or replacing components, and the readily available documentation and community support associated with the Otto DIY platform contribute to its accessibility for researchers and hobbyists alike.

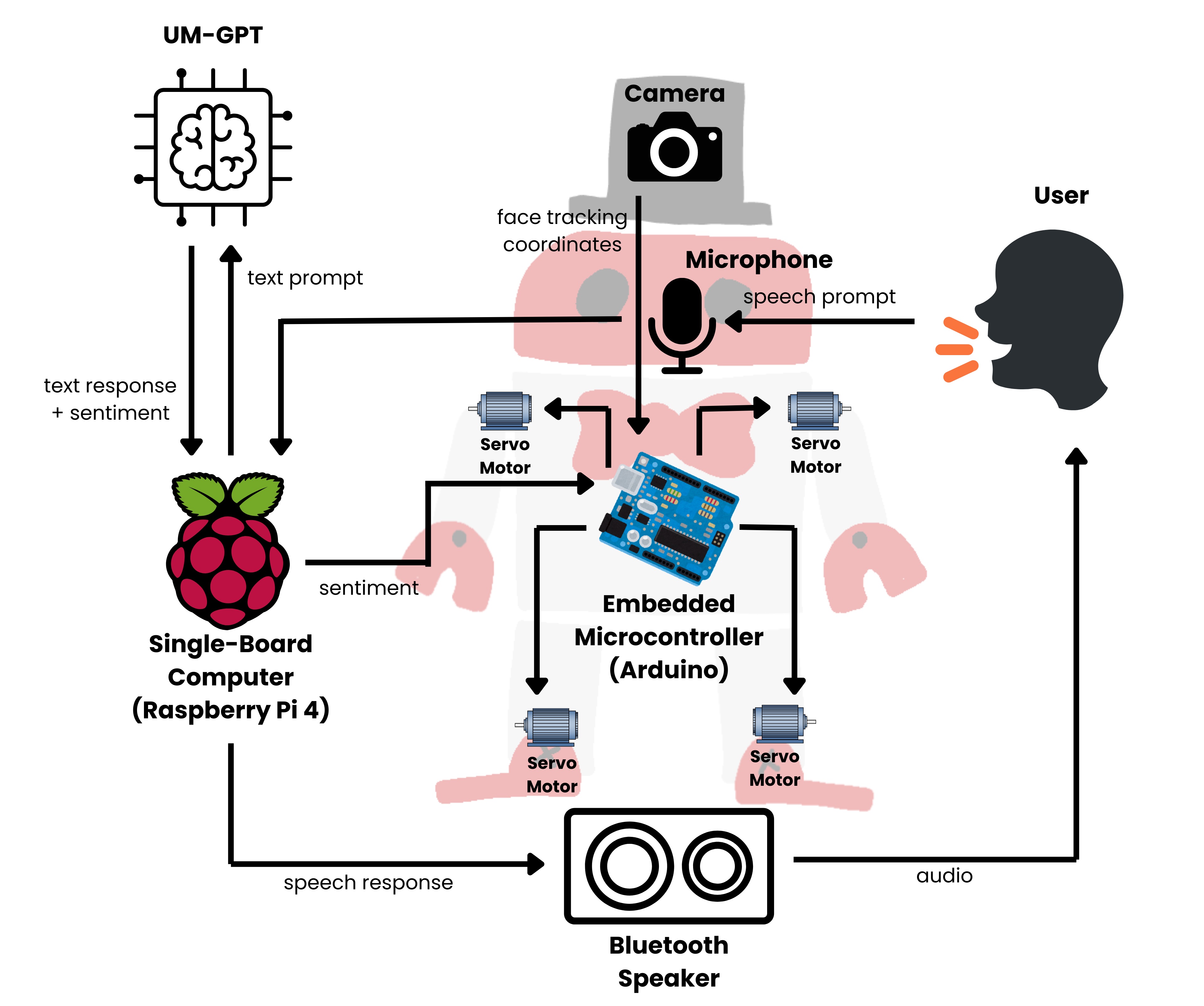

Botson’s physical actions are governed by a dual-controller system comprising a Raspberry Pi and an Arduino microcontroller. The Raspberry Pi serves as the central processing unit, receiving high-level commands and executing the logic for navigation and interaction. The Arduino then translates these commands into specific actuator control signals and directly manages the robot’s motors, servos, and other physical components. This architecture divides the work cleanly: the Raspberry Pi handles complex computations and decision-making, while the Arduino provides real-time control and precise movement execution, ensuring responsiveness and stability in Botson’s operations.
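
To make the division of labor concrete, here is a minimal sketch of how the Raspberry Pi side might frame a high-level command before handing it to the Arduino. The wire format (an ASCII line with a one-byte checksum), the `ActuatorCommand` type, and the command names are all assumptions made for illustration; the article does not describe Botson’s actual protocol.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ActuatorCommand:
    """One high-level instruction (hypothetical format, not Botson's real one)."""
    name: str   # e.g. "WAVE" or "NOD"
    speed: int  # percent of maximum servo speed, 0-100


def _checksum(payload: str) -> int:
    # Simple additive checksum over the ASCII payload, reduced to one byte.
    return sum(payload.encode("ascii")) % 256


def encode_command(cmd: ActuatorCommand) -> bytes:
    """Serialise a command as one newline-terminated ASCII line."""
    if not (cmd.name.isascii() and cmd.name.isalpha()):
        raise ValueError("command name must be ASCII letters only")
    speed = max(0, min(100, cmd.speed))  # clamp out-of-range speeds
    payload = f"{cmd.name.upper()},{speed}"
    return f"{payload}*{_checksum(payload):02X}\n".encode("ascii")


def decode_command(line: bytes) -> ActuatorCommand:
    """Parse and verify a frame; raises ValueError if it is corrupted."""
    text = line.decode("ascii").strip()
    payload, _, checksum = text.partition("*")
    if not checksum or int(checksum, 16) != _checksum(payload):
        raise ValueError("checksum mismatch")
    name, speed = payload.split(",")
    return ActuatorCommand(name, int(speed))
```

In a real build, the bytes returned by `encode_command` would be written to the serial link between the two boards, and the microcontroller firmware would perform the equivalent of `decode_command` before driving the servos.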

Botson incorporates the GPT-4o Large Language Model to process and interpret natural language input, enabling conversational interaction and command execution. This integration allows users to communicate with Botson using everyday language, bypassing the need for specialized robotic programming or scripting. GPT-4o facilitates the translation of spoken or typed requests into actionable commands for the robot’s actuators and systems. The model’s capabilities extend to contextual understanding, allowing Botson to maintain a degree of conversational state and respond appropriately to follow-up questions or ambiguous instructions. This approach distinguishes Botson from robots reliant on pre-programmed routines, enabling a more flexible and intuitive user experience.
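
One plausible way to turn a model reply into something the robot can act on is to ask the model for structured output and validate it before use. The sketch below assumes the model has been prompted to answer with a JSON object containing `speech` and `gesture` fields; that schema, the `RobotAction` type, and the gesture names are hypothetical and not taken from the paper.

```python
import json
from dataclasses import dataclass

# Hypothetical set of gestures the robot is able to perform.
ALLOWED_GESTURES = {"none", "wave", "nod", "shake_head"}


@dataclass
class RobotAction:
    speech: str   # text to be spoken
    gesture: str  # one of ALLOWED_GESTURES


def parse_model_reply(raw: str) -> RobotAction:
    """Validate the model's raw text, falling back to speech-only output."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        # Not JSON at all: treat the whole reply as plain speech.
        return RobotAction(speech=raw.strip(), gesture="none")
    if not isinstance(data, dict):
        return RobotAction(speech=raw.strip(), gesture="none")

    speech = str(data.get("speech", "")).strip()
    gesture = str(data.get("gesture", "none")).lower()
    if gesture not in ALLOWED_GESTURES:
        gesture = "none"  # never forward an unknown gesture to the actuators
    return RobotAction(speech=speech, gesture=gesture)
```

Validating against a fixed gesture set keeps a malformed or unexpected reply from reaching the hardware, which matters when the language model is the only source of commands.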

3D printing is integral to Botson’s development process, facilitating iterative design and personalized aesthetics. The platform leverages additive manufacturing to create custom enclosures, mounting brackets for sensors and actuators, and alternative end-effectors, all of which are readily modified based on specific application requirements. This approach reduces lead times associated with traditional manufacturing, enabling developers to rapidly prototype and test physical components. Furthermore, 3D printing allows for significant customization of Botson’s external appearance, enabling users to tailor the robot’s form factor to their preferences or project needs without requiring specialized tooling or fabrication expertise.

From Intent to Expression: Generating Believable Interaction

Prompt engineering is a foundational element in controlling Botson’s behavior and eliciting socially appropriate responses from the underlying Large Language Model (LLM). Specifically, carefully constructed prompts define the context, desired persona, and behavioral boundaries for Botson, influencing both its verbal and non-verbal communication. These prompts aren’t simply requests for information; they include instructions regarding emotional tone, politeness levels, and the application of specific social cues, such as acknowledging user statements or offering apologies. The precision of these prompts directly impacts Botson’s ability to generate coherent, contextually relevant, and emotionally consistent interactions, ensuring alignment with human social expectations and enhancing the overall user experience.
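
As an illustration of the kind of prompt described above, the following sketch assembles a system message that fixes persona, tone, and a few social rules, then appends recent conversation history. The wording of the prompt, the `build_messages` helper, and its parameters are assumptions for illustration only; the role/content message layout is the common chat format, not a confirmed detail of Botson’s implementation.

```python
from typing import Dict, List

Message = Dict[str, str]


def build_messages(persona: str, tone: str, history: List[Message],
                   user_text: str, max_turns: int = 6) -> List[Message]:
    """Assemble a chat prompt: system rules, recent history, new user input."""
    system = (
        f"You are {persona}, a small social robot. "
        f"Keep an overall {tone} tone. "
        "Acknowledge what the user said before answering, "
        "apologise briefly if you misunderstood, "
        "and keep replies under three sentences."
    )
    # Keep only the most recent messages so the prompt stays short.
    recent = history[-max_turns:] if max_turns > 0 else []
    messages: List[Message] = [{"role": "system", "content": system}]
    messages.extend(recent)
    messages.append({"role": "user", "content": user_text})
    return messages
```

Keeping the behavioral rules in the system message, separate from the conversation turns, makes it easy to vary persona or politeness level between study conditions without touching the rest of the pipeline.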

Emotional expression in Botson is realized through the synchronized operation of its physical and vocal components. Specifically, Botson’s movements – encompassing facial expressions, head poses, and body language – are algorithmically linked to the emotional content of its spoken responses. This coordination isn’t simply additive; the system modulates movement intensity, speed, and type based on the semantic and prosodic features of the generated speech. For example, a statement expressing sadness will trigger a slower speaking rate coupled with a drooping head pose and downturned mouth, while an excited statement will result in faster speech, increased head movement, and a smiling facial expression. The timing of these movements is also critical, with actions often preceding or coinciding with specific words or phrases to maximize communicative impact.
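
The sadness and excitement examples above can be sketched as a lookup from emotion label to expression parameters, scaled by intensity. All numeric values, the `Expression` fields, and the preset names below are hypothetical placeholders chosen to match the direction of the described behavior (slower speech and a lowered head for sadness, faster speech and more movement for excitement), not values from the paper.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Expression:
    speech_rate: float     # multiplier on the normal speaking rate
    head_pitch_deg: float  # negative values tilt the head downward
    head_motion: float     # 0 = still, 1 = very animated
    mouth: str             # label of the mouth shape to display


NEUTRAL = Expression(1.0, 0.0, 0.3, "neutral")

# Hypothetical presets; real values would need tuning on the robot.
PRESETS = {
    "sad": Expression(0.8, -15.0, 0.1, "frown"),
    "excited": Expression(1.2, 5.0, 0.9, "smile"),
}


def _lerp(a: float, b: float, t: float) -> float:
    return a + (b - a) * t


def expression_for(emotion: str, intensity: float = 1.0) -> Expression:
    """Blend from neutral toward the emotion's preset by intensity in [0, 1]."""
    target = PRESETS.get(emotion.lower(), NEUTRAL)
    t = max(0.0, min(1.0, intensity))
    return Expression(
        speech_rate=_lerp(NEUTRAL.speech_rate, target.speech_rate, t),
        head_pitch_deg=_lerp(NEUTRAL.head_pitch_deg, target.head_pitch_deg, t),
        head_motion=_lerp(NEUTRAL.head_motion, target.head_motion, t),
        # Discrete features switch once the emotion is at least half strength.
        mouth=target.mouth if t >= 0.5 else NEUTRAL.mouth,
    )
```

Deriving both the speech parameters and the movement parameters from one `Expression` value is one simple way to keep voice and body consistent with each other.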

Gesture synthesis within the Botson system utilizes a parameterized library of movements to generate non-verbal cues that supplement verbal communication. These synthesized gestures are not random; they are algorithmically linked to the emotional content and semantic meaning of Botson’s textual and vocal outputs. The system employs kinematic modeling and animation techniques to produce realistic and contextually appropriate body language, including facial expressions, hand movements, and postural shifts. User studies have indicated an average score of 3.17 on a Likert scale regarding the usefulness of these gestures in enhancing user engagement, demonstrating a quantifiable positive impact on perceived interaction quality.

Botson utilizes Automatic Speech Recognition (ASR) to convert human vocalizations into text, enabling the system to understand spoken commands and questions. This transcribed text is then processed by the LLM to generate a relevant response, which is subsequently converted into audible speech using Text-to-Speech (TTS) technology. The integration of ASR and TTS allows for a natural language interface, facilitating bidirectional communication; Botson not only interprets spoken input but also responds with synthesized speech, creating a conversational experience. Current ASR accuracy rates for Botson are reported at 92.3% in controlled environments, while TTS utilizes a neural network-based architecture to produce speech with a reported Mean Opinion Score (MOS) of 4.1 for naturalness.
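
The listen, think, speak loop described above can be sketched as a single function that chains three pluggable stages. The `run_turn` helper, its callable parameters, and the fallback sentence are hypothetical; the stages are passed in as plain functions so that any ASR, LLM, or TTS backend could be substituted, since the article does not name the specific services used.

```python
from typing import Callable, Tuple

# Hypothetical reply used when nothing intelligible was transcribed.
FALLBACK_REPLY = "Sorry, I did not catch that."


def run_turn(audio: bytes,
             transcribe: Callable[[bytes], str],
             respond: Callable[[str], str],
             synthesize: Callable[[str], bytes]) -> Tuple[str, str, bytes]:
    """Run one conversational turn: speech in, text reply, speech out."""
    heard = transcribe(audio).strip()  # ASR stage
    if heard:
        reply = respond(heard)         # LLM stage
    else:
        reply = FALLBACK_REPLY         # skip the LLM on empty transcripts
    return heard, reply, synthesize(reply)  # TTS stage
```

For example, calling `run_turn` with stub functions in place of the three stages makes it possible to test the conversational flow without a microphone, network access, or speaker.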

User evaluations demonstrate a high perception of Botson’s helpfulness, registering an average score of 4.33. This score represents a statistically significant improvement compared to interactions with voice-only agents. Furthermore, the contribution of non-verbal communication to user engagement was quantified via Likert scale assessment, yielding an average score of 3.17. These findings suggest that the integration of both verbal and non-verbal cues substantially enhances the perceived utility and engagement levels of the Botson interaction model.

Expanding the Horizon: Future Directions in Synchronized Expression and Affective Robotics

Current development surrounding the Botson robot prioritizes the refinement of Co-Speech Gesture Synthesis, a technique focused on generating nonverbal movements that align perfectly with spoken language. This isn’t simply about adding random motions; researchers are meticulously crafting gestures that mirror human communication patterns, aiming for a level of naturalness previously unseen in social robotics. The precise synchronization between speech and gesture is hypothesized to dramatically improve a robot’s ability to connect with humans, fostering a sense of trust and engagement. By focusing on this nuanced interplay, the team hopes to move beyond purely functional interactions and create robots capable of truly empathetic communication, making Botson appear less like a machine and more like a responsive social partner.

The development of Botson and its synchronized expressive capabilities extends significantly into the realm of Affective Robotics. By achieving more nuanced and human-like communication – encompassing both verbal and non-verbal cues – robots move closer to accurately perceiving and understanding the emotional states of those they interact with. This isn’t merely about recognizing basic emotions; the ability to interpret subtle shifts in affect allows for a more contextually appropriate and empathetic response. Consequently, robots can tailor their interactions – adjusting tone, pacing, and even gestures – to foster stronger connections and build trust with humans, ultimately leading to more effective collaboration and assistance in a variety of settings, from healthcare and education to customer service and companionship.

The design of Botson prioritizes accessibility through its open-source framework, intentionally fostering a collaborative environment for researchers and developers. By making the robot’s blueprints, software, and data publicly available, the project actively invites contributions from a wider community, circumventing the limitations of closed-source development. This approach not only accelerates the pace of innovation in socially aware robotics – allowing for rapid prototyping, testing, and refinement of algorithms – but also promotes transparency and reproducibility, crucial elements for building trust in increasingly interactive robotic systems. The open nature of Botson ensures that advancements are not siloed within a single institution, but rather benefit the collective pursuit of creating robots capable of more nuanced and effective social interactions.

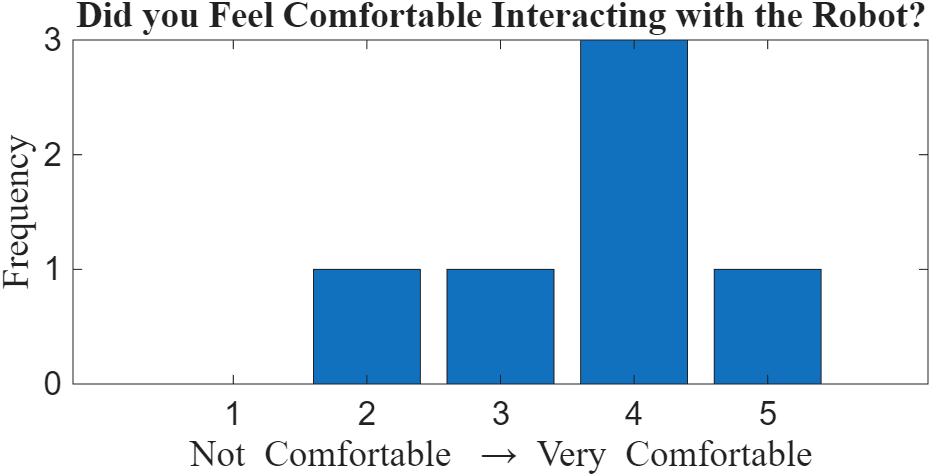

Evaluations of the embodied robotic platform demonstrated a significant level of user engagement, with all participants reporting increased interaction when compared to non-embodied interfaces. This heightened engagement suggests a strong potential for more natural and effective human-robot communication. Comfort levels, measured on a standard Likert scale, averaged 3.67, indicating positive initial acceptance of the robotic form and suggesting that users readily perceived the platform as approachable and non-threatening. These findings highlight the importance of embodiment in fostering trust and facilitating meaningful interactions, paving the way for robots that are not simply tools, but genuine social partners.

The Botson platform, as detailed in the article, underscores a crucial principle: systemic elegance arises from mindful constraints. The design prioritizes accessibility and affordability, intentionally limiting complexity to facilitate research into the fundamental aspects of human-robot interaction. This echoes Edsger W. Dijkstra’s sentiment: “Simplicity is prerequisite for reliability.” By focusing on core functionalities (gesture synthesis, large language model integration, and physical embodiment), Botson allows researchers to isolate variables and study the impact of embodiment on trust and engagement. Every added dependency, as the article subtly demonstrates through its deliberate design choices, introduces a hidden cost, potentially obscuring the very phenomena it seeks to understand. The platform’s success hinges on this understanding – structure dictates behavior, and a well-defined structure enables meaningful insights.

What’s Next?

The proliferation of accessible robotic platforms, such as Botson, inevitably shifts the central question. It is no longer merely if embodiment influences trust, but how specific, and often imperfect, physical manifestations shape it. The system, after all, looks clever; it is probably fragile. A low barrier to entry exposes the underlying truth: a fluent interface does not necessarily imply genuine interaction. Future work must grapple with the inevitable discord between linguistic promise and mechanical performance.

Current evaluations largely treat robots as black boxes, assessing behavioral outcomes without fully dissecting the contribution of individual kinematic or dynamic properties. A more rigorous approach demands a granular understanding of which aspects of physical presence – speed, smoothness, even the subtle imperfections of movement – are most salient for users. This will necessitate interdisciplinary collaboration, blending robotics, psychology, and increasingly, a willingness to accept that elegant design often demands difficult sacrifices. Architecture, at its core, is the art of choosing what to sacrifice.

Ultimately, Botson, and platforms like it, serve as a useful, if humbling, reminder. The goal is not to simulate social interaction, but to understand it. And that understanding will not come from building ever-more-realistic robots, but from acknowledging the fundamental limitations of any artificial system attempting to replicate the messy, unpredictable beauty of human connection.

Original article: https://arxiv.org/pdf/2602.19491.pdf

Contact the author: https://www.linkedin.com/in/avetisyan/

2026-02-24 12:29