Author: Denis Avetisyan

Artificial intelligence is reshaping software engineering, extending its reach beyond traditional code to encompass workflows, prompts, and the broader organizational systems that support them.

This review examines the implications of ‘semi-executable artifacts’ and agentic systems for the future of software engineering and socio-technical design.

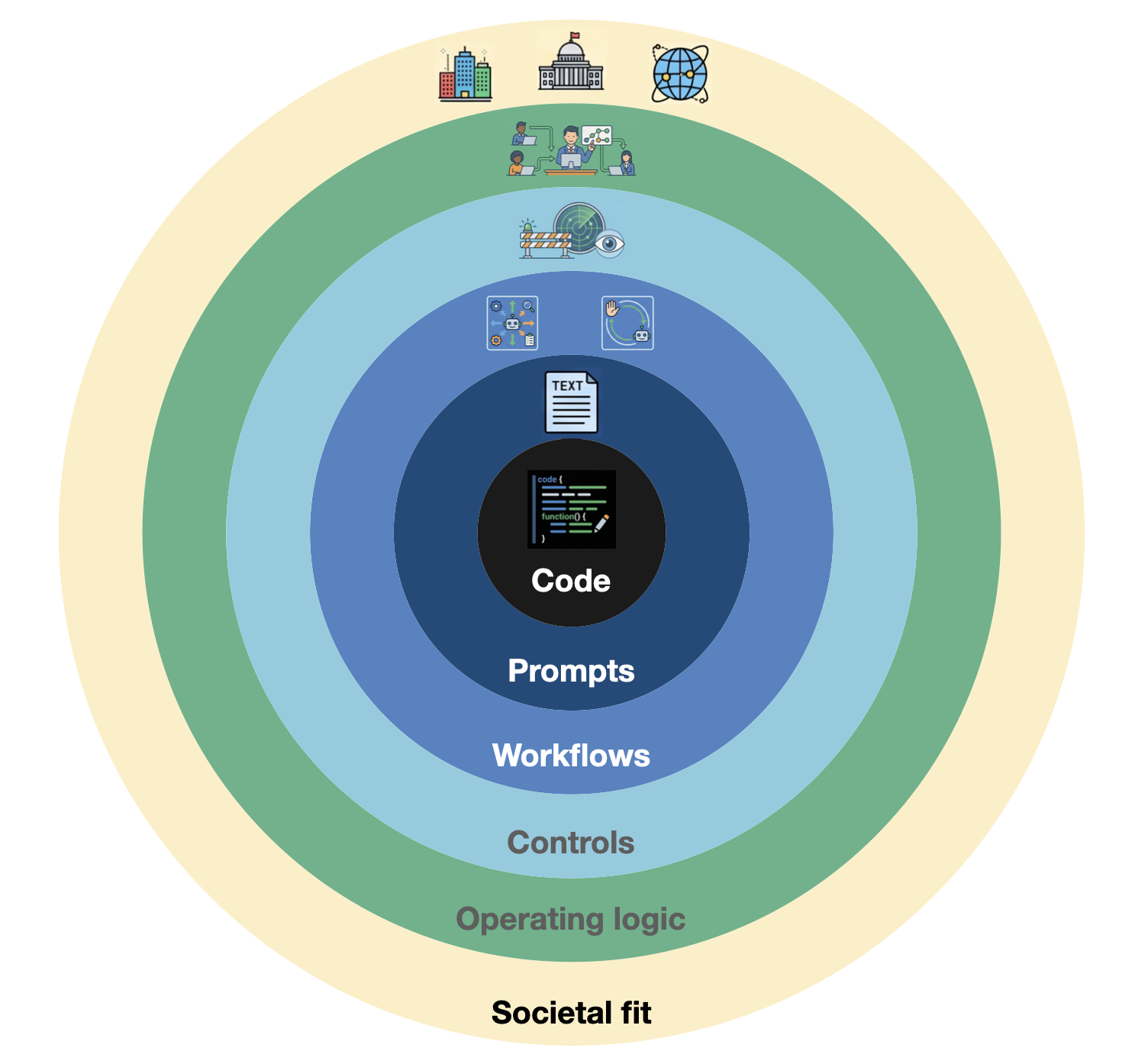

While automation increasingly threatens traditional coding roles, viewing AI as solely a replacement for software engineers overlooks a more profound shift in the very nature of engineering itself. The paper, ‘The Semi-Executable Stack: Agentic Software Engineering and the Expanding Scope of SE’, argues that the scope of software engineering is expanding beyond executable code to encompass ‘semi-executable artifacts’: combinations of language, workflows, and organizational routines enacted through human or probabilistic interpretation. It introduces a six-ring diagnostic model to map this expansion, shifting focus from code execution to the orchestration of these broader systems. Ultimately, the question becomes not how to resist automation, but how to engineer for a future where software is only one component of a complex, socio-technical whole.

The Evolving Landscape of Software Systems

For decades, software engineering centered on crafting deterministic artifacts – programs that, given the same input, consistently produce the same output. However, the rise of artificial intelligence, particularly Foundation Models, introduces a fundamentally different class of components termed ‘semi-executable’. Unlike traditional code, these AI-driven elements don’t operate through strict algorithmic instructions; instead, they respond to prompts and generate outputs based on probabilistic reasoning and learned patterns. This means that even with identical inputs, a semi-executable component may yield varying results, introducing a degree of non-determinism previously uncommon in software systems. Consequently, developers are now tasked with integrating components that aren’t simply executed, but guided, demanding a rethinking of established engineering practices to account for this inherent variability and build robust, adaptable applications.

The rise of Foundation Models necessitates a fundamental recalibration of software engineering practices. Traditionally focused on deterministic code execution, the field now contends with systems driven by prompts and characterized by probabilistic outputs. This transition introduces a new class of components, the semi-executable, that aren’t simply run, but rather guided. To address this evolving landscape, researchers have proposed the Semi-Executable Stack, a diagnostic model that maps the layers of interaction between prompts, models, and resulting behaviors. Successfully navigating this shift requires engineers to develop new techniques for testing, debugging, and ensuring the reliability of systems where predictability is no longer guaranteed, and where understanding the influence of input prompts is paramount to maintaining control and preventing unexpected outcomes.

Failure to acknowledge the expanding scope of software engineering, particularly the integration of semi-executable components, introduces significant vulnerabilities into modern systems. These systems, increasingly reliant on probabilistic reasoning and prompt-based guidance, become demonstrably brittle – susceptible to unexpected failures stemming from subtle shifts in input or model behavior. This fragility isn’t merely a matter of bugs; it represents a fundamental loss of control and predictability. Traditional testing methodologies, designed for deterministic systems, prove inadequate in assessing the reliability of components whose outputs are inherently variable. Consequently, developers risk deploying systems that appear functional under limited conditions but exhibit erratic and potentially dangerous behavior when confronted with novel scenarios, demanding a re-evaluation of software validation and monitoring practices.
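One way to picture the testing gap described above is to replace a single exact-match assertion with a pass rate measured over repeated trials. The following is a minimal sketch, not a method from the paper: `flaky_classifier` is a hypothetical stand-in for a model call, and the 90% success probability, the 500 trials, and the 0.8 threshold are all illustrative assumptions.

```python
import random
from typing import Callable


def flaky_classifier(text: str, rng: random.Random) -> str:
    """Hypothetical stand-in for a model call (assumption, not a real API).

    Returns the intended label about 90% of the time, mimicking a
    component whose output varies for identical input.
    """
    return "positive" if rng.random() < 0.9 else "negative"


def pass_rate(
    component: Callable[[str, random.Random], str],
    prompt: str,
    expected: str,
    trials: int,
    seed: int,
) -> float:
    """Run the component repeatedly on the same prompt and return the
    fraction of runs that produced the expected output."""
    rng = random.Random(seed)  # seeded so the evaluation itself is repeatable
    passes = sum(component(prompt, rng) == expected for _ in range(trials))
    return passes / trials


# A deterministic test would assert on one call; here the assertion is on a rate.
rate = pass_rate(flaky_classifier, "great product", "positive", trials=500, seed=0)
meets_threshold = rate >= 0.8  # illustrative acceptance threshold
print(f"pass rate: {rate:.3f}, meets threshold: {meets_threshold}")
```

The design choice here is to treat reliability as a measured property with a tolerance, which is closer to monitoring than to a conventional unit test.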

Deconstructing the Semi-Executable Stack

The Semi-Executable Stack proposes a six-layered model for analyzing contemporary AI systems, moving beyond the conventional focus on solely executable code. This framework recognizes that modern AI functionality extends through multiple interdependent layers, each contributing to overall system behavior. These layers, progressing outward from core executable code, include instructional artifacts defining system behavior, orchestrated execution coordinating complex tasks, control systems managing interactions, operating logic governing function, and finally, the critical layer of societal and institutional fit, which accounts for external constraints and alignment with organizational objectives. This multi-layered approach is intended to provide a more complete and nuanced understanding of AI systems than traditional analyses, acknowledging the interplay between code and the broader context in which it operates.

The Semi-Executable Stack comprises four core layers built upon a foundation of executable code and instructional artifacts. Orchestrated Execution represents the direct implementation of tasks via code. Above this lies the Control System, which governs the execution flow and manages resources. Operating Logic defines the rules and decision-making processes that guide the system’s behavior. Critically, the stack culminates in Societal and Institutional Fit, encompassing the alignment of the system with relevant regulations, ethical considerations, and organizational policies; this final layer ensures responsible deployment and integration within broader contexts.

The Semi-Executable Stack’s layered architecture is designed to address the inherent complexity of modern AI systems by providing a framework for systemic analysis. This holistic view enables decomposition of agentic systems into manageable components (Orchestrated Execution, Control Systems, Operating Logic, and Societal/Institutional Fit), facilitating targeted investigation of potential failure points and performance bottlenecks. Critically, this layered model underpins the paper’s diagnostic process, allowing for the assessment of alignment between technical implementation and broader organizational objectives, and providing a structured methodology for evaluating the overall health and efficacy of AI deployments.
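The decomposition described above can be sketched as a small data structure that tags each observation about a system with the layer it belongs to and reports which layers have not been examined. This is an illustrative sketch only: the `Ring`, `Finding`, `group_by_ring`, and `unexamined_rings` names are hypothetical, and the numeric ordering (executable code and instructional artifacts as the foundation, then the four layers in the order they are stacked above) is an assumption made for the example.

```python
from dataclasses import dataclass
from enum import IntEnum


class Ring(IntEnum):
    """The six layers named in the text; the numbering is an assumption."""
    EXECUTABLE_CODE = 1
    INSTRUCTIONAL_ARTIFACTS = 2
    ORCHESTRATED_EXECUTION = 3
    CONTROL_SYSTEM = 4
    OPERATING_LOGIC = 5
    SOCIETAL_INSTITUTIONAL_FIT = 6


@dataclass(frozen=True)
class Finding:
    """A single diagnostic observation attached to one layer."""
    ring: Ring
    note: str


def group_by_ring(findings: list[Finding]) -> dict[Ring, list[str]]:
    """Collect notes per layer so failure points can be investigated layer by layer."""
    grouped: dict[Ring, list[str]] = {ring: [] for ring in Ring}
    for finding in findings:
        grouped[finding.ring].append(finding.note)
    return grouped


def unexamined_rings(findings: list[Finding]) -> list[Ring]:
    """Return the layers with no findings yet, from innermost to outermost."""
    seen = {finding.ring for finding in findings}
    return [ring for ring in Ring if ring not in seen]


# Hypothetical review notes for an agentic deployment.
review = [
    Finding(Ring.INSTRUCTIONAL_ARTIFACTS, "system prompt has no version history"),
    Finding(Ring.CONTROL_SYSTEM, "no rate limit on tool calls"),
]
for ring in unexamined_rings(review):
    print("not yet examined:", ring.name)
```

Listing the unexamined layers makes gaps in a review visible, which is the kind of targeted investigation the layered model is meant to support.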

Adapting Engineering Practices for Agentic Systems

Agentic Software Engineering represents a paradigm shift in software development through the integration of artificial intelligence systems capable of operating with varying degrees of autonomy. These systems, ranging from fully autonomous agents to those requiring human oversight, are incorporated into established lifecycle phases such as requirements gathering, design, coding, testing, and deployment. Unlike traditional software components that execute pre-defined instructions, agentic systems utilize machine learning and reasoning capabilities to perform tasks, adapt to changing conditions, and potentially generate novel solutions. This integration necessitates a re-evaluation of existing software engineering practices to effectively leverage the capabilities of these intelligent agents and manage the inherent complexities of their probabilistic behavior.

Traditional software engineering relies on detailed, explicit instructions for each step of the development process; however, integrating agentic systems necessitates a shift toward guiding and coordinating intelligent components. This transition requires developers to define high-level goals and constraints, allowing the AI agent to autonomously determine the optimal implementation. Rather than dictating specific actions, the focus becomes providing appropriate feedback mechanisms, evaluating agent performance against defined objectives, and iteratively refining the guiding parameters. This represents a move from deterministic control to probabilistic influence, acknowledging the inherent uncertainty in AI-driven systems and leveraging their capacity for independent problem-solving.
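The move from dictating steps to guiding an agent can be illustrated with a small loop that states a constraint, evaluates each attempt against it, and adjusts the guidance when the attempt falls short. This is a toy sketch under stated assumptions: `stub_agent` is a hypothetical agent that overshoots a word-count hint by zero to five words, and the word limit stands in for whatever objective a real team would define.

```python
import random


def stub_agent(max_words_hint: int, rng: random.Random) -> str:
    """Hypothetical agent (assumption): roughly follows the hint but may
    overshoot it by 0 to 5 words, so its output is not fully predictable."""
    n_words = max_words_hint + rng.randint(0, 5)
    return " ".join(["word"] * n_words)


def within_limit(output: str, limit: int) -> bool:
    """Evaluate an attempt against the stated constraint."""
    return len(output.split()) <= limit


def guide(limit: int, max_rounds: int, seed: int):
    """Guide the agent toward the constraint instead of scripting its steps.

    Returns the first acceptable output (or None) and a history of
    (hint, produced word count, accepted) tuples for later inspection.
    """
    rng = random.Random(seed)
    hint = limit
    history = []
    for _ in range(max_rounds):
        output = stub_agent(hint, rng)
        accepted = within_limit(output, limit)
        history.append((hint, len(output.split()), accepted))
        if accepted:
            return output, history
        hint -= 1  # feedback step: tighten the guidance, then try again
    return None, history


result, history = guide(limit=20, max_rounds=10, seed=0)
for hint, produced, accepted in history:
    print(f"hint={hint} produced={produced} accepted={accepted}")
```

The recorded history matters as much as the result: it is the evidence a team would use to judge whether the guiding parameters are converging.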

The ‘Preserve vs Purify’ heuristic suggests that when integrating agentic systems, software development practices should be adapted rather than entirely replaced. Attempting to ‘purify’ existing workflows by imposing strict control on probabilistic AI agents is often counterproductive, as it disregards the inherent uncertainty in their operation. Instead, ‘preserving’ valuable existing practices and augmenting them with agentic capabilities allows for a more pragmatic and effective integration. This approach acknowledges that agentic systems operate based on probabilities and estimations, and seeks to guide these systems within established frameworks, rather than attempting to force deterministic behavior. Prioritizing adaptation minimizes disruption and leverages existing expertise while accommodating the unique characteristics of agentic technologies.

Navigating Organizational Barriers and Measuring Impact

The integration of agentic systems, despite potential benefits, frequently encounters resistance due to organizational inertia – a tendency for established patterns of behavior and thought to persist. This isn’t simply a matter of reluctance to change; deeply ingrained operating procedures, communication channels, and even cultural norms can actively impede the adoption of new technologies. The friction arises because agentic systems often require shifts in workflow, redefined roles, and a willingness to cede some control to automated processes. Consequently, initial enthusiasm can quickly dissipate as teams grapple with the disruption to existing rhythms and the perceived effort required to reconcile new tools with familiar practices, ultimately slowing down progress and hindering the realization of potential gains.

Successful integration of agentic systems isn’t simply about introducing new technology; it fundamentally depends on a thorough comprehension of an organization’s ingrained Operating Logic – the established routines, decision-making processes, and behavioral patterns that govern daily operations. Attempts to impose novel practices without acknowledging these existing structures often encounter resistance and fail to deliver expected benefits. Instead, effective implementation necessitates a careful alignment of new agentic tools with these recurring behaviors, essentially working with the organization’s natural flow rather than against it. This means identifying how agentic systems can augment, rather than disrupt, established workflows, ensuring that the technology seamlessly integrates into the fabric of the organization and fosters genuine, lasting change.

Early evaluations of agentic systems reveal a compelling trend: AI augmentation doesn’t necessarily outperform human teams, but it can significantly streamline processes. Initial testing indicates that teams working alongside AI achieve performance levels comparable to those operating independently, suggesting a shift in focus from raw output to optimized efficiency. Specifically, the GoNoGo system reduced decision-making time by an average of two hours per instance, freeing up valuable resources. This efficiency extends to quality control, as evidenced by the SPAPI-Tester, which identified 23 previously undetected failures within a live automotive testing environment, highlighting the potential for AI to bolster existing workflows and prevent costly errors.

Towards Responsible and Integrated AI Systems

Artificial intelligence holds the promise of fundamentally reshaping software engineering, but its true value extends beyond mere technological advancement. The most impactful applications of AI will not be those that simply automate existing tasks, but rather those that create systems genuinely integrated with the fabric of society and responsive to pressing real-world challenges. This necessitates a shift in focus – from building clever algorithms to designing solutions that address tangible needs, whether in healthcare, environmental sustainability, or equitable access to resources. Successfully realizing this potential demands a holistic approach, considering not only technical feasibility but also the broader societal implications and ensuring that these intelligent systems augment human capabilities and contribute to collective well-being. It is through this mindful integration that AI can move beyond a tool and become a catalyst for positive change.

The successful integration of artificial intelligence demands more than just technical prowess; it necessitates a thorough evaluation of its societal and institutional compatibility. Systems built with AI must not only function as intended but also align with existing legal frameworks, ethical guidelines, and societal values. This involves proactively addressing potential biases embedded within algorithms, ensuring data privacy and security, and considering the broader implications for employment and social equity. A responsible approach prioritizes transparency, accountability, and continuous monitoring to mitigate unintended consequences and foster public trust, ultimately shaping AI development towards demonstrably positive impact and widespread acceptance.

The pursuit of truly impactful artificial intelligence in software engineering hinges on a shift towards the Semi-Executable Stack – a methodology that blends the precision of executable code with the flexibility of declarative specifications and human oversight. This approach doesn’t aim to fully automate software creation, but rather to augment human capabilities, allowing developers to focus on higher-level design and ethical considerations while AI handles routine tasks and verification. By adapting current development practices to embrace this stack, systems become more innovative through rapid prototyping and experimentation, demonstrably resilient due to continuous validation, and fundamentally responsible as ethical constraints are embedded directly into the development process. This integration fosters a future where AI isn’t merely a tool for automation, but a collaborative partner in building software that genuinely serves societal needs and withstands evolving challenges.

The pursuit of agentic software engineering, as detailed in the paper, necessitates a holistic understanding of systems extending beyond mere code. It’s not simply about automating tasks, but about recognizing that software now encompasses prompts, workflows, and organizational structures, a far broader scope than traditionally considered. Grace Hopper aptly stated, “It’s easier to ask forgiveness than it is to get permission.” This resonates with the paper’s central argument: a rigid adherence to traditional software engineering methodologies may stifle innovation in this rapidly evolving landscape. The need to experiment, iterate, and adapt, even if it means venturing outside established norms, is crucial for navigating the complexities of these expanded socio-technical systems and embracing the potential of AI-driven automation.

What’s Next?

The expansion of software engineering’s scope, as outlined, isn’t merely a technical problem. It’s a symptom of a deeper truth: the boundaries of a system are rarely defined by its code. Focusing solely on the ‘semi-executable’ – the prompts, workflows, and configurations – risks optimizing the wrong variable. The true leverage lies not in perfecting these artifacts in isolation, but in understanding the organizational structures that generate and consume them. A prompt, however elegantly crafted, is merely a local maximum within a far more complex landscape of incentives, expertise, and communication.

Future work must address the inherent fragility introduced by this expanding scope. Dependencies are, after all, the true cost of freedom. As engineering artifacts become less explicitly coded and more reliant on emergent behavior, the architecture of trust – the mechanisms for verifying and validating these systems – will become paramount. Good architecture, it must be remembered, is invisible until it breaks; a concerning proposition when the ‘code’ is increasingly distributed across organizational boundaries and expressed in natural language.

The field now faces a critical choice. It can pursue increasingly clever automation of these semi-executable artifacts, or it can acknowledge that scalability derives from simplicity. The former path promises short-term gains, but risks entangling systems in brittle, opaque dependencies. The latter demands a fundamental rethinking of engineering practice – one that prioritizes clarity, observability, and a deep understanding of the socio-technical systems in which these artifacts are embedded.

Original article: https://arxiv.org/pdf/2604.15468.pdf

Contact the author: https://www.linkedin.com/in/avetisyan/

2026-04-21 03:14