Author: Denis Avetisyan

Researchers are pioneering a new method of robotic adaptation where robots can quickly learn to manipulate objects in unseen environments by ‘cloning’ visual characteristics from reference images.

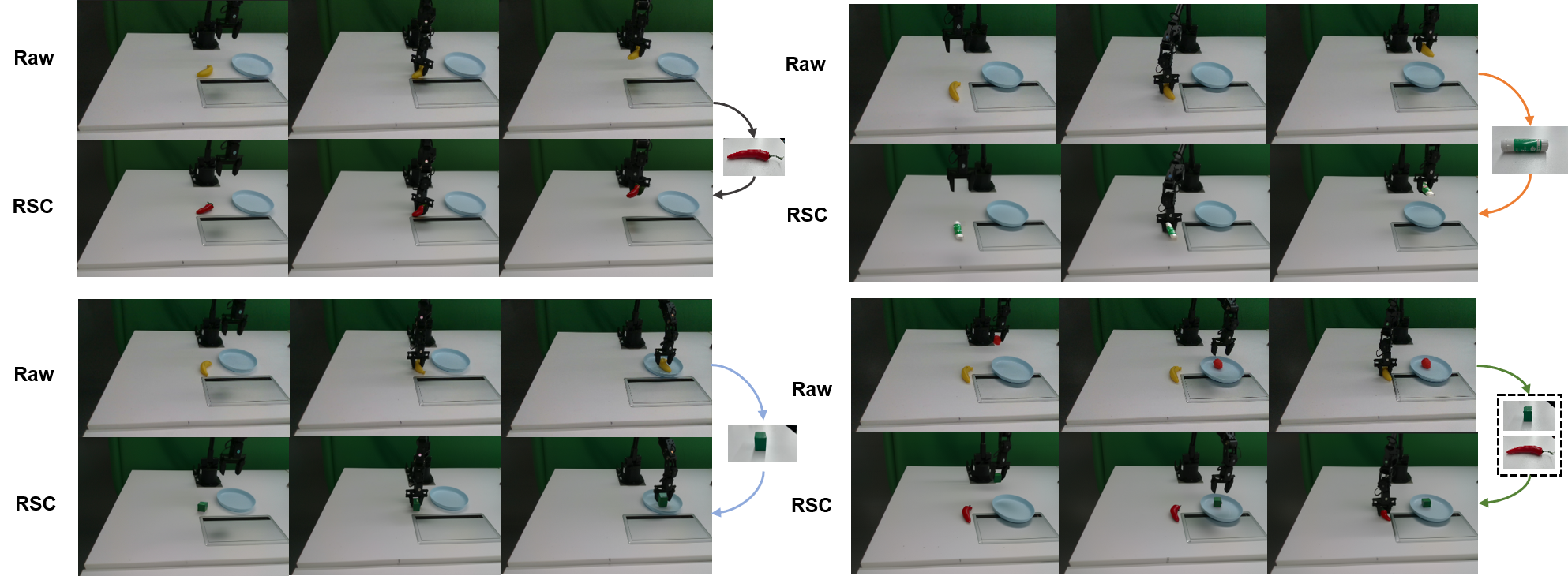

This paper introduces Robotic Scene Cloning, a data augmentation technique using visual prompts to improve zero-shot robotic transfer and reduce the reliance on extensive real-world training data.

Despite advances in robotic manipulation, deploying pre-trained models in novel environments remains challenging due to limited zero-shot generalization capabilities and the need for extensive on-site data collection. This paper introduces Robotic Scene Cloning (‘Robotic Scene Cloning:Advancing Zero-Shot Robotic Scene Adaptation in Manipulation via Visual Prompt Editing’), a novel data augmentation technique that leverages visual prompts to generate realistic robotic training data tailored to new scenes. By editing existing trajectories with scene-specific visual cues, RSC enhances policy generalization and significantly reduces the reliance on costly real-world data. Could this approach unlock truly adaptable robots capable of seamlessly operating in previously unseen environments?

The Persistent Challenge of Real-World Robotic Generalization

A significant hurdle in robotics lies in the persistent difficulty of transferring skills learned in simulated environments to the complexities of the real world – a phenomenon known as the ‘sim-to-real’ gap. Robotic systems, adept at performing tasks within the controlled parameters of a simulation, frequently encounter unpredictable variations in lighting, texture, and physical interactions when deployed in authentic settings. This discrepancy isn’t merely a matter of visual fidelity; it extends to the physics engine itself, where subtle differences in how simulations model friction, gravity, or object deformation can lead to substantial performance degradation. Consequently, a robot successfully navigating a virtual warehouse may falter when confronted with uneven flooring, imperfectly shaped objects, or unexpected disturbances in a physical space, highlighting the critical need for methods that bridge this generalization gap.

The difficulty robots face when transitioning from simulated training to real-world application stems from a fundamental mismatch in visual information. Current simulation technologies struggle to replicate the sheer variety and intricacy of real-world scenes; subtle variations in lighting, texture, and object appearance – details effortlessly processed by humans – can dramatically confuse a robot’s perception systems. This limitation isn’t merely about visual fidelity; it’s about the distribution of visual data. Robots trained on limited, often idealized, simulated datasets lack the experience to reliably interpret the unpredictable and nuanced visual input encountered in dynamic real-world environments, leading to degraded performance and hindering their ability to generalize learned behaviors effectively.

The deployment of robotic systems is frequently hampered by the logistical challenges of acquiring sufficient real-world data for training. Gathering this data necessitates significant investments in both time and financial resources; each hour of robotic operation requires careful supervision, meticulous data annotation, and substantial computational power for processing. This process is particularly difficult for tasks requiring diverse scenarios, as robots must experience a wide range of lighting conditions, object variations, and environmental disturbances to achieve robust performance. Consequently, many promising robotic applications – from autonomous driving to in-home assistance – remain limited by the impracticality of collecting the necessary datasets, creating a bottleneck in the advancement of the field and hindering the widespread adoption of robotic technologies.

Expanding the Training Landscape: Data Augmentation Strategies

Data augmentation techniques address the limitations of finite training datasets by generating modified versions of existing data. This artificial expansion increases both the quantity and variability of training examples, enabling machine learning models to generalize more effectively to unseen data. The core principle is to introduce perturbations – such as rotations, translations, noise, or color adjustments – that preserve the semantic content of the data while creating new, plausible variations. By exposing the model to a wider range of inputs during training, data augmentation reduces overfitting and improves performance on real-world data distributions, particularly when labeled data is scarce or expensive to obtain.

ROSIE, an early data augmentation technique utilizing diffusion models, demonstrated the potential of generative approaches for tasks like image inpainting and scene modification to expand training datasets. However, ROSIE’s architecture presented limitations in scalability, hindering its application to large-scale datasets or complex augmentation scenarios. Furthermore, the level of control over the generated augmentations was restricted; users had limited ability to precisely specify the types of modifications applied, potentially leading to the creation of unrealistic or irrelevant training examples. These constraints prompted the development of subsequent methods focused on improving both the efficiency and controllability of diffusion-based data augmentation.

Recent data augmentation techniques, including GenAug, GreenAug, and CACTI, build upon advances in generative modeling to improve augmentation effectiveness. GenAug utilizes generative adversarial networks (GANs) conditioned on task-specific information to create realistic and relevant training samples. GreenAug focuses on identifying and applying only the most impactful augmentations, reducing computational cost and overfitting. CACTI (Contextual Augmentation using Conditional Transformers) employs transformer networks to generate augmentations based on contextual understanding of the input data, leading to more diverse and representative datasets. These methods move beyond simple transformations and aim to create augmentations that are both high-quality and aligned with the specific requirements of the target task.

Robotic Scene Cloning: A Paradigm Shift in Data Synthesis

Robotic Scene Cloning employs a data synthesis pipeline that utilizes visual prompting to create realistic and varied robotic environments. This process begins with the capture of a reference scene, which is then used as a visual prompt for a diffusion model. The system extracts depth information using Depth-AnythingV2 and identifies objects via Grounding-DINO, subsequently generating segmentation masks with SAM2 to accurately represent scene elements. These extracted elements are then integrated into new scenes, conditioned by ControlNet, enabling the diffusion model to generate augmentations that maintain scene consistency and visual fidelity. The resulting synthetic data is designed to enhance the robustness and generalization capability of robotic learning algorithms by providing a diverse range of training scenarios.

The robotic scene cloning pipeline relies on a combination of computer vision techniques for accurate object representation within synthesized scenes. Specifically, Depth-AnythingV2 is utilized for depth estimation, providing 3D spatial information about objects in the initial scene. Grounding-DINO is then employed to identify and localize specific objects based on textual prompts, enabling targeted manipulation. Finally, Segment Anything Model 2 (SAM2) generates precise masks delineating the boundaries of identified objects, ensuring accurate segmentation for subsequent scene augmentation and realistic compositing of objects within the cloned environment. This multi-stage process facilitates detailed scene understanding and precise object manipulation.

ControlNet is integrated into the robotic scene cloning pipeline to guide diffusion model generation, ensuring the creation of scene augmentations that adhere to established spatial and compositional relationships. This is achieved by providing additional conditioning signals to the diffusion process, effectively steering the model to produce outputs that are consistent with the original scene’s structure and layout. Specifically, ControlNet facilitates the generation of realistic variations by constraining the diffusion process to respect existing edges, surface normals, and semantic segmentations within the robotic environment, resulting in improved visual quality and realism compared to unconditioned diffusion-based augmentation techniques.

RoboEngine utilizes diffusion models to generate varied background elements within robotic scenes, thereby increasing the diversity of the synthesized data. This approach moves beyond simple texture or color variations by creating entirely new background compositions. The diffusion models are integrated into the data synthesis pipeline to produce backgrounds that are consistent with the overall scene context, but are not limited to pre-existing examples. This technique contributes to improved generalization capabilities of robotic learning systems, particularly when encountering novel environments or conditions, as evidenced by a 35% increase in success rates with novel objects and a 42.5% improvement over pre-trained models in the SIMPLER environment.

Evaluations demonstrate a significant performance increase using the proposed robotic scene cloning pipeline. The method achieves a 35% higher success rate when tested with novel, previously unseen objects. Within the SIMPLER environment, overall success rates reach 60% for cross-texture scenarios and approximately 30% for cross-shape scenarios, representing a 42.5% improvement compared to baseline pre-trained models. Specifically, these results substantially outperform the GreenAug method, which achieves success rates of only 10% for cross-texture and 7.5% for cross-shape challenges.

Towards Robust and Adaptable Robotic Intelligence

Robotic Scene Cloning represents a pivotal advancement in the pursuit of robots capable of operating effectively in unfamiliar environments without task-specific training. This technique allows for the generation of synthetic training data that closely mirrors the intricacies of real-world scenes, effectively bridging the notorious ‘sim-to-real’ gap that often hinders robotic performance. By learning from these cloned environments, robotic policies can generalize more readily to novel situations, exhibiting a significant step towards true zero-shot deployment – the ability to perform tasks in entirely new settings without requiring additional training or fine-tuning. This approach moves beyond reliance on meticulously crafted simulations and towards a more adaptable and robust robotic intelligence, capable of handling the inherent unpredictability of the physical world.

A persistent challenge in robotics is the discrepancy between simulated training environments and the unpredictable nature of the real world – a phenomenon known as the sim-to-real gap. This work addresses this issue through the generation of highly realistic training data, meticulously crafted to mirror the visual and physical complexities encountered in genuine environments. By exposing robotic policies to simulations that more accurately represent real-world conditions – including variations in lighting, textures, and object interactions – the resulting models demonstrate significantly improved performance when deployed in novel settings. This approach allows robots to generalize more effectively, operating reliably even when faced with conditions not explicitly encountered during training, and ultimately paving the way for more adaptable and robust robotic systems.

Robotic systems often struggle when confronted with situations not explicitly encountered during training; however, the generation of varied and demanding training scenarios significantly bolsters their resilience. By exposing robotic models to a wide spectrum of potential challenges – including cluttered environments, unexpected object interactions, and complex task sequences – these systems develop a capacity for generalization beyond the limitations of their initial dataset. This proactive approach to training cultivates adaptability, enabling robots to not merely execute pre-programmed instructions, but to intelligently respond to unforeseen circumstances and maintain reliable performance even when faced with novel or ambiguous situations. The result is a more robust and versatile robotic platform, better equipped to navigate the unpredictable realities of the physical world.

Robotic Scene Cloning benefits significantly from training within complex, interactive environments such as CALVIN, enabling the development of robots capable of tackling long-horizon, language-conditioned tasks. These environments facilitate the creation of scenarios demanding extended sequences of actions – for example, a robot instructed to “find the red block, then place it on top of the blue cylinder, and finally bring the green sphere to the table” – effectively bridging the gap between simple, short-term commands and realistic, multi-step objectives. By generating data from these extended interactions, models learn to anticipate future states and plan accordingly, improving performance in real-world applications where tasks rarely conclude after a single action. Average sequence length achieved within the CALVIN environment – reaching 2.57 steps – demonstrates a substantial advancement over baseline models at 1.79 and GreenAug at 2.05, highlighting the effectiveness of this combined approach for fostering more capable and adaptable robotic systems.

Real-world robotic deployments demand adaptability, and recent experiments demonstrate a substantial performance leap through advanced training methodologies. Specifically, robotic policies trained with this novel approach exhibited a 30% improvement when applied to previously unseen, complex tasks involving multiple objects and extended operational sequences. This enhancement is particularly evident within the CALVIN environment, where the average sequence length achieved was 2.57 – a considerable margin above the 1.79 of baseline models and the 2.05 attained using GreenAug. These results suggest a marked increase in the robot’s capacity to plan and execute extended, intricate actions in dynamic environments, paving the way for more robust and versatile robotic systems.

The pursuit of robust zero-shot transfer, as demonstrated by Robotic Scene Cloning, demands a rigorous foundation. It’s not merely about achieving functionality, but about establishing verifiable correctness. As Robert Tarjan aptly stated, “If it feels like magic, you haven’t revealed the invariant.” RSC, by meticulously crafting synthetic data through visual prompt editing, attempts to reveal the underlying principles governing robotic manipulation in novel environments. This isn’t about creating illusions of competence; it’s about defining and demonstrating the consistent, predictable behavior necessary for true adaptability. The method’s success hinges on identifying and controlling the core invariants of the robotic task, ensuring performance isn’t simply a result of serendipitous training but of provable design.

Future Directions

The presented work, while demonstrating a pragmatic improvement in sim-to-real transfer, merely skirts the fundamental question of robotic generalization. The generation of synthetic data, even with visually-conditioned prompts, remains a process of approximation. True elegance would lie not in creating more data, but in algorithms that require less. The current reliance on visual cues, however cleverly augmented, avoids the more difficult challenge of deriving abstract, invariant representations of environment affordances – representations that would permit reasoning about novel scenes independent of pixel-level fidelity.

A critical limitation resides in the scalability of the prompt engineering process itself. While the technique improves zero-shot adaptation, the creation of effective prompts necessitates a degree of manual tuning that belies the promise of truly autonomous learning. Future investigation must address this bottleneck, perhaps through the development of meta-learning algorithms capable of automatically generating prompts, or, more radically, by dispensing with visual prompting altogether in favor of symbolic or logical representations.

Ultimately, the field should not be satisfied with incremental gains in data efficiency. The pursuit of robotic intelligence demands a shift in focus – from the accumulation of experiential data to the construction of provably correct algorithms. The measure of success will not be how well a robot performs on a benchmark, but how little data it requires to achieve demonstrable competence, and how confidently its actions can be predicted through formal verification.

Original article: https://arxiv.org/pdf/2603.09712.pdf

Contact the author: https://www.linkedin.com/in/avetisyan/

See also:

- Total Football free codes and how to redeem them (March 2026)

- Clash of Clans May 2026: List of Weekly Events, Challenges, and Rewards

- Farming Simulator 26 arrives May 19, 2026 with immersive farming and new challenges on mobile and Switch

- Last Furry: Survival redeem codes and how to use them (April 2026)

- Gold Rate Forecast

- Honor of Kings x Attack on Titan Collab Skins: All Skins, Price, and Availability

- NTE: Neverness to Everness Original Game Soundtracks: Your Ultimate Playlist Guide

- Top 5 Best New Mobile Games to play in May 2026

- Pixel Brave: Idle RPG redeem codes and how to use them (May 2026)

- Brent Oil Forecast

2026-03-11 19:10