Author: Denis Avetisyan

New research reveals that while AI agents are quickly adopted for code contributions, they often introduce type-related issues in TypeScript projects.

Analysis of AI-generated code patterns suggests a potential increase in technical debt despite faster pull request acceptance rates.

While artificial intelligence promises to enhance software development productivity, its impact on fundamental programming practices, specifically type safety, remains largely unexplored. This paper, ‘Mining Type Constructs Using Patterns in AI-Generated Code’, presents an empirical analysis of type construct usage in TypeScript projects generated by AI agents, revealing a nine-fold increase in the use of the ‘any’ keyword and a greater reliance on constructs that bypass type checking compared to human developers. Surprisingly, despite these potential issues, pull requests from AI agents are 1.8 times more likely to be accepted. This raises a critical question: as AI becomes increasingly integrated into the software development lifecycle, how can developers effectively mitigate the risk of accruing technical debt stemming from AI-introduced type-related vulnerabilities?

The Allure and Peril of Fluidity in Code

JavaScript’s inherent flexibility stems from its dynamic typing, where variable types are checked during program execution rather than beforehand. While this allows for rapid prototyping and concise code, it simultaneously introduces a significant risk of runtime errors that become increasingly problematic in large-scale development. Unlike statically typed languages, JavaScript doesn’t immediately flag type mismatches; instead, these errors surface unpredictably when the problematic code is actually run. This can lead to frustrating debugging sessions and unstable applications, particularly as projects grow in complexity and involve larger teams. The absence of early error detection means that potentially faulty code can make its way into production, requiring extensive testing and potentially causing unexpected failures – a substantial burden on development resources and user experience.

As JavaScript applications grew in complexity, the inherent flexibility of its dynamic typing began to pose challenges for developers and maintainability. TypeScript addresses these concerns by introducing an optional static type system. This system allows developers to define the expected data types for variables, function parameters, and return values, enabling early detection of potential errors during development – rather than at runtime. By enforcing type safety, TypeScript significantly reduces the likelihood of unexpected behavior and facilitates more robust code. Furthermore, the explicit type annotations serve as valuable documentation, enhancing code readability and making it easier for teams to collaborate on large-scale projects, ultimately leading to more maintainable and scalable applications.
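As a minimal sketch of the early detection described above, consider the following function. The name `addTax` and its values are hypothetical and chosen only for illustration.

```typescript
// Hypothetical example: parameter and return types are declared up front.
function addTax(price: number, tax: number): number {
  return price + tax;
}

const total = addTax(100, 8);
console.log(total); // 108

// The compiler rejects the call below before the program runs:
//   addTax("100", 8);
//   error TS2345: Argument of type 'string' is not assignable to
//   parameter of type 'number'.
// In plain JavaScript the same call would run and return the string "1008".
```

The annotation costs a few characters, and the mistake is reported during development instead of surfacing as a wrong value in production.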

The potential benefits of TypeScript, designed to address the challenges of JavaScript’s dynamic typing, are fundamentally tied to conscientious implementation by developers. While the language offers a robust static type system intended to catch errors during development and enhance code clarity, its effectiveness diminishes if types are not accurately or consistently defined. Superficial adoption – adding types without fully leveraging the system’s capabilities, or neglecting to update them as code evolves – can create a false sense of security, masking underlying vulnerabilities and hindering maintainability. Consequently, the true value of TypeScript isn’t simply in its presence within a project, but in the disciplined and thorough application of its type annotations, requiring a commitment to best practices and a deeper understanding of static typing principles.

Identifying Erosion in Typed Systems

Type-related anti-patterns in TypeScript development frequently manifest through the use of the `any` type and non-null assertions (!). The `any` type effectively disables type checking for a variable, allowing any value to be assigned without compile-time validation. Similarly, the non-null assertion operator (!) tells the compiler to assume a value is not null or undefined, bypassing null and undefined checks. While these constructs offer flexibility, they circumvent TypeScript’s static type system, increasing the potential for runtime errors that the type system was designed to prevent. Frequent use of `any` and `!` indicates a potential disregard for type safety and can introduce risks comparable to those found in dynamically-typed languages.
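The two constructs can be illustrated with a short sketch. The `User` interface and the function names are assumptions made for this example, not code from the studied projects. Both functions compile without complaint, and both fail at runtime for the inputs shown.

```typescript
// Hypothetical types and functions, for illustration only.
interface User {
  name: string;
  email?: string; // may be undefined
}

// `any` disables checking: the compiler accepts every argument.
function shoutUnsafe(value: any): string {
  return value.toUpperCase();
}

// `!` tells the compiler that `email` is present, with no runtime check.
function emailLowerUnsafe(user: User): string {
  return user.email!.toLowerCase();
}

// Small helper that reports whether a call throws.
function throwsAtRuntime(fn: () => unknown): boolean {
  try {
    fn();
    return false;
  } catch {
    return true;
  }
}

console.log(throwsAtRuntime(() => shoutUnsafe(42))); // true
console.log(throwsAtRuntime(() => emailLowerUnsafe({ name: "Ada" }))); // true
```

Declaring `value` as `string`, or checking `user.email` before using it, would turn both failures into compile-time errors or explicit branches.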

The use of anti-patterns such as `any` types and non-null assertions in TypeScript code diminishes the advantages of static typing by circumventing type checking. A variable typed as `any` accepts a value of any type, and a non-null assertion claims that a value is not null or undefined without any runtime validation. Consequently, errors that would have been caught during compilation in a strictly typed system are deferred to runtime, increasing the probability of unexpected behavior and making debugging more difficult. This reintroduction of dynamic typing characteristics negates the core benefits of TypeScript, including improved code reliability, maintainability, and developer productivity.

A rigorous evaluation of TypeScript code for anti-patterns necessitates analysis of substantial, real-world codebases to establish baseline occurrences and prevalence. This involves automated tooling capable of parsing TypeScript syntax trees and identifying instances of problematic constructs – such as excessive use of `any`, non-null assertions, and type assertions – across multiple projects. Quantitative metrics, including the frequency of these constructs per thousand lines of code (KLOC) and their distribution across different project types, are crucial for establishing statistically significant trends. Such analysis should account for project size, team experience, and the specific domain to avoid skewed results and provide actionable insights into the adoption and effective use of TypeScript’s type system.
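The per-KLOC metric mentioned above can be sketched as follows. This is a rough illustration under stated assumptions: the regular expressions are heuristics written for this example (they can miscount inside strings and comments), the names are hypothetical, and a production tool would more likely walk the syntax tree.

```typescript
// Heuristic patterns, assumed for illustration; not the study's tooling.
const ANY_ANNOTATION = /:\s*any\b/g;
const NON_NULL_ASSERTION = /[\w)\]]!(?=[.\[;,)])/g;
const TYPE_ASSERTION = /\bas\s+(?!const\b)[A-Za-z_]/g;

interface Densities {
  anyPerKloc: number;
  nonNullPerKloc: number;
  assertionPerKloc: number;
}

function countMatches(source: string, pattern: RegExp): number {
  const matches = source.match(pattern);
  return matches === null ? 0 : matches.length;
}

// Occurrences per thousand non-blank lines of code.
function densities(source: string): Densities {
  const lineCount = source
    .split("\n")
    .filter((line) => line.trim() !== "").length;
  const perKloc = (count: number): number =>
    lineCount === 0 ? 0 : (count / lineCount) * 1000;
  return {
    anyPerKloc: perKloc(countMatches(source, ANY_ANNOTATION)),
    nonNullPerKloc: perKloc(countMatches(source, NON_NULL_ASSERTION)),
    assertionPerKloc: perKloc(countMatches(source, TYPE_ASSERTION)),
  };
}

const sample = [
  "const a: any = load();",
  "const b = a!.value;",
  "const c = b as string;",
  "const d: number = 1;",
].join("\n");

console.log(densities(sample));
// { anyPerKloc: 250, nonNullPerKloc: 250, assertionPerKloc: 250 }
```

Normalizing by line count is what makes projects of different sizes comparable: one occurrence in four lines and 250 occurrences in a thousand lines yield the same density.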

Mapping Change with Intelligent Agents

The AIDev Dataset comprises a collection of TypeScript pull requests sourced from publicly available GitHub repositories. This dataset was specifically curated to facilitate the empirical analysis of type-related coding patterns as they occur in practical software development. The dataset includes complete pull request metadata, including commit messages, diffs, and associated code files, allowing for detailed examination of type annotations, type definitions, and type usage within the codebase. The initial collection comprises over 10,000 pull requests, with ongoing expansion to increase the statistical significance of analyses performed on the data. Data was filtered to include only pull requests modifying TypeScript files, ensuring relevance to the study of type-related practices.

The pull request analysis pipeline utilized a multi-agent Large Language Model (LLM) system in conjunction with a Regex-Based Parser for automated categorization. The Regex-Based Parser initially filtered the AIDev Dataset, identifying pull requests containing type annotations and modifications. Subsequently, the LLM system, comprising multiple specialized agents, analyzed the filtered pull requests to extract specific type-related features, including the introduction of new types, modifications to existing type definitions, and the resolution of type errors. This agent-based approach allowed for granular categorization based on the nature of the type-related change, improving the efficiency and accuracy of the analysis compared to single-model approaches.
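The filtering stage described above might look roughly like the sketch below. Everything here is an assumption for illustration: the `FileDiff` shape, the function names, and the regular expressions are hypothetical and are not the pipeline used in the paper. The LLM stage is deliberately left out.

```typescript
// Hypothetical shape of one changed file in a pull request.
interface FileDiff {
  path: string;
  patch: string; // unified diff text
}

const TYPESCRIPT_FILE = /\.tsx?$/;
// Heuristic: a type declaration keyword, or a `: Type` annotation.
const TYPE_CHANGE = /\b(interface|type|enum)\s+[A-Za-z_]\w*|:\s*[A-Za-z_][\w.]*/;

// Lines added by the patch, without the leading "+" and without file headers.
function addedLines(patch: string): string[] {
  return patch
    .split("\n")
    .filter((line) => line.startsWith("+") && !line.startsWith("+++"))
    .map((line) => line.slice(1));
}

function touchesTypes(file: FileDiff): boolean {
  return (
    TYPESCRIPT_FILE.test(file.path) &&
    addedLines(file.patch).some((line) => TYPE_CHANGE.test(line))
  );
}

// Files that would be passed on to the later, more expensive analysis.
function selectForAnalysis(files: FileDiff[]): FileDiff[] {
  return files.filter(touchesTypes);
}

const files: FileDiff[] = [
  {
    path: "src/price.ts",
    patch: "--- a/src/price.ts\n+++ b/src/price.ts\n-const rate = 0.2;\n+const rate: number = 0.2;",
  },
  { path: "src/log.ts", patch: "+console.log('started');" },
  { path: "README.md", patch: "+interface notes: see docs" },
];

console.log(selectForAnalysis(files).map((file) => file.path));
// [ 'src/price.ts' ]
```

A cheap regex pass of this kind narrows the dataset before any model is invoked, which is presumably why the two stages are combined.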

The methodology facilitated the identification of type-related anti-patterns within a substantial codebase by automating the analysis of pull requests. This involved processing a dataset of TypeScript code changes and applying defined criteria to detect instances of problematic type usage, such as excessive use of ‘any’, overly broad type assertions, or inconsistent type definitions. The efficiency stemmed from the system’s ability to analyze a large volume of code changes without manual review, pinpointing specific instances of anti-patterns and enabling developers to address them systematically, thereby improving code quality and maintainability.

The Echoes of Change and Future Trajectories

The study examines how type-related coding patterns that are considered detrimental to long-term code health relate to the likelihood that a change is merged. One might expect pull requests containing these anti-patterns to show a lower ‘Acceptance Rate’, but the reported figures point the other way: agent-authored pull requests, which contain more of these patterns, are merged more often. This suggests that while automated code generation tools, like AI agents, can accelerate development, type-safety issues may be passing through review rather than being caught there. Consequently, addressing these patterns proactively could substantially improve developer workflows and reduce the accumulation of ‘Technical Debt’, ultimately leading to more maintainable and robust software systems.

Analysis indicates a substantial difference in the reception of code contributions originating from AI agents versus human developers; agentic pull requests demonstrate an acceptance rate of 45.8% compared to 25.3% for those authored by humans, a ratio of roughly 1.8. This divergence, statistically significant with a p-value less than 0.0001 and a Cramér’s V of 0.32, suggests that code generated by AI agents is more readily integrated into projects. The magnitude of this effect highlights a potential for increased development velocity when leveraging AI-assisted coding tools, although further investigation is needed to understand the specific factors driving this higher acceptance rate and to ensure long-term code maintainability.
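For readers unfamiliar with the statistics quoted above, the sketch below shows how the acceptance ratio and Cramér’s V are computed for a 2×2 table. The counts in `illustrativeTable` are made up purely to exercise the formula and are not the study's data; the chi-square here is the uncorrected Pearson statistic, which is an assumption, since the article does not say which variant was used.

```typescript
// Rows: author group (e.g. agent, human). Columns: accepted, not accepted.
type Table2x2 = [[number, number], [number, number]];

// Pearson chi-square for a 2x2 table (no continuity correction).
function chiSquare(table: Table2x2): number {
  const [[a, b], [c, d]] = table;
  const n = a + b + c + d;
  const denominator = (a + b) * (c + d) * (a + c) * (b + d);
  return (n * (a * d - b * c) ** 2) / denominator;
}

// Cramér's V; for a 2x2 table min(rows - 1, cols - 1) = 1.
function cramersV(table: Table2x2): number {
  const [[a, b], [c, d]] = table;
  const n = a + b + c + d;
  return Math.sqrt(chiSquare(table) / n);
}

// Ratio of two acceptance rates, given as fractions.
function acceptanceRatio(rateA: number, rateB: number): number {
  return rateA / rateB;
}

// Rates reported in the article: 45.8% versus 25.3%.
console.log(acceptanceRatio(0.458, 0.253).toFixed(2)); // 1.81

// Made-up counts, NOT the study's data.
const illustrativeTable: Table2x2 = [
  [30, 10],
  [10, 30],
];
console.log(chiSquare(illustrativeTable)); // 20
console.log(cramersV(illustrativeTable)); // 0.5
```

Cramér’s V ranges from 0 (no association) to 1 (perfect association), so it expresses how strongly authorship and acceptance are linked, independent of sample size.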

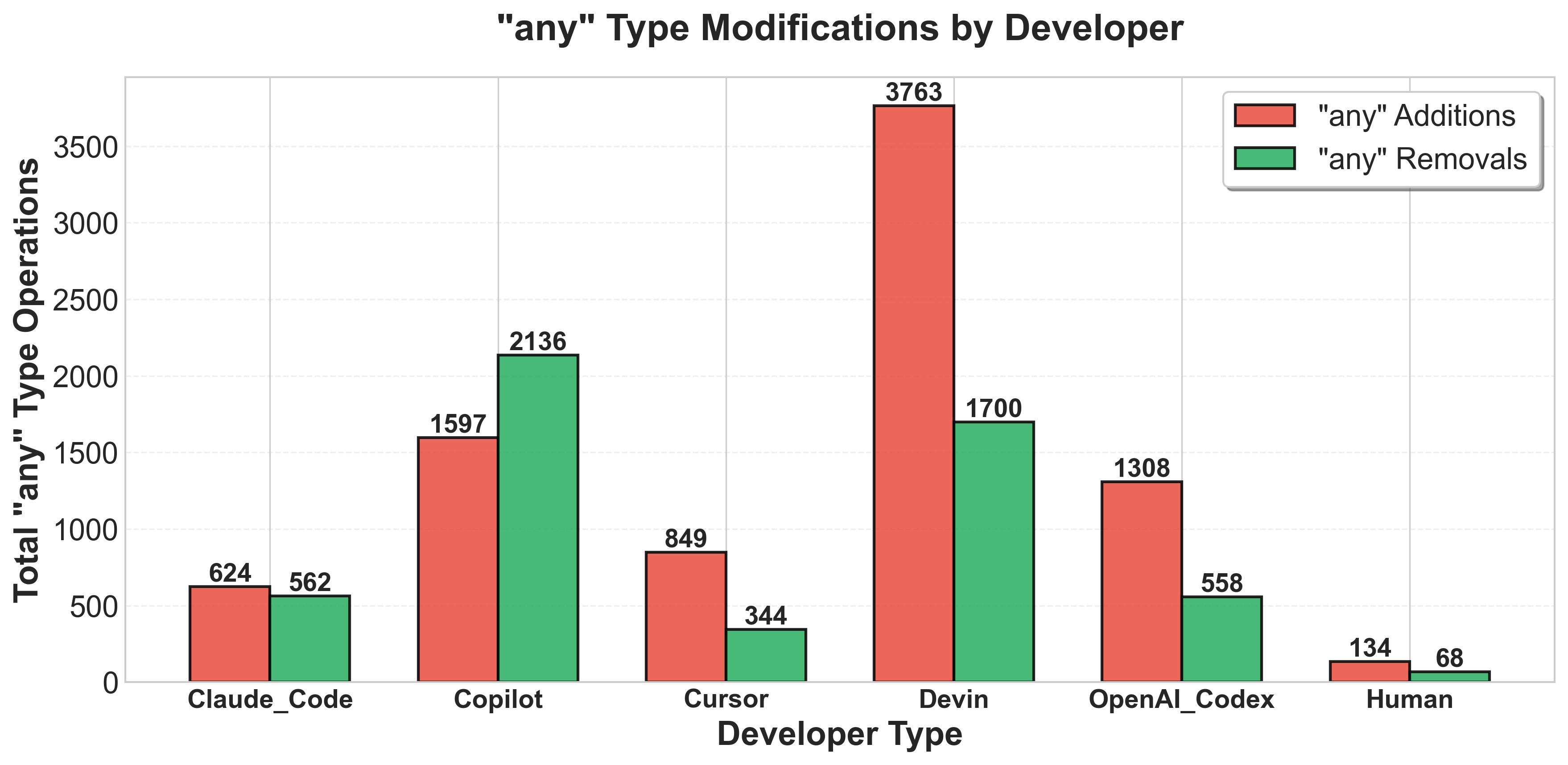

Analysis indicates a notable discrepancy in type usage between AI agents and human developers; specifically, AI agents introduce the ‘any’ type at a rate 2.16 times greater than their human counterparts. This statistically significant finding, supported by a p-value of approximately 2.33 × 10⁻⁷ and a Cohen’s d of 0.32, suggests a systematic difference in how these agents approach type safety during code generation. While the increased use of ‘any’ may accelerate initial development, it potentially introduces vulnerabilities and increases the likelihood of runtime errors, ultimately contributing to technical debt and necessitating more extensive debugging and refactoring efforts. This pattern highlights a crucial area for improvement in AI-driven code synthesis, focusing on strategies to encourage more precise and specific type annotations.
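Cohen’s d, the effect size quoted above, is the difference between two group means divided by a pooled standard deviation. The sketch below uses the common pooled-variance form; whether the paper used this exact variant is an assumption, and the sample arrays are invented for illustration.

```typescript
function mean(values: number[]): number {
  return values.reduce((sum, value) => sum + value, 0) / values.length;
}

// Unbiased sample variance (divides by n - 1).
function sampleVariance(values: number[]): number {
  const m = mean(values);
  const squared = values.reduce((sum, value) => sum + (value - m) ** 2, 0);
  return squared / (values.length - 1);
}

// Cohen's d with a pooled standard deviation.
function cohensD(groupA: number[], groupB: number[]): number {
  const pooledVariance =
    ((groupA.length - 1) * sampleVariance(groupA) +
      (groupB.length - 1) * sampleVariance(groupB)) /
    (groupA.length + groupB.length - 2);
  return (mean(groupA) - mean(groupB)) / Math.sqrt(pooledVariance);
}

// Invented per-PR counts, NOT data from the study.
const agentCounts = [2, 4, 6];
const humanCounts = [1, 3, 5];
console.log(cohensD(agentCounts, humanCounts)); // 0.5
```

By the usual rule of thumb, values near 0.2 are read as small, 0.5 as medium, and 0.8 as large, which places the reported 0.32 between small and medium.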

The observed prevalence of type-related anti-patterns in code generated by AI agents appears to contribute to the accumulation of technical debt within software projects. These patterns, while not immediately causing functional errors, represent suboptimal coding practices that necessitate future refactoring and maintenance efforts. This ultimately hinders developer productivity as engineers spend valuable time addressing these issues instead of focusing on new feature development or innovation. The increased complexity arising from these patterns can also make the codebase harder to understand, debug, and extend, further exacerbating the long-term costs associated with maintaining the software. Addressing these patterns proactively, rather than reactively, is crucial for ensuring the sustainability and efficiency of modern software development workflows.

Analysis indicates that AI agents use advanced type-related features at a substantially greater rate than human developers. Statistical evaluation reveals a significant difference in the use of these typing mechanisms, with agents exhibiting a Cohen’s d effect size of 1.45 (p < 5.50 × 10⁻⁵). This suggests a proclivity towards leveraging complex type systems, potentially aiming for increased code robustness or expressiveness. While not inherently negative, this heightened usage demands careful consideration, as overly intricate type definitions can sometimes introduce complexity and hinder code maintainability if not managed effectively. Further research is needed to determine whether this pattern translates to long-term benefits in code quality and developer productivity.

The potential for artificial intelligence to not only generate code automatically, but also to actively monitor and refine it, presents a compelling pathway towards enhanced software development practices. Utilizing AI agents to proactively identify instances of type-related anti-patterns – such as the excessive use of ‘any’ types – during the code creation process could substantially decrease the accumulation of technical debt. This preemptive approach, integrated into automated code generation workflows, promises to improve overall code quality by addressing potential issues before they manifest as bugs or maintenance challenges. Consequently, organizations may experience a significant reduction in development costs associated with debugging, refactoring, and long-term maintenance, ultimately accelerating project timelines and boosting developer productivity.
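One way such a preemptive check could work is a small gate that inspects generated code before it is submitted and reports where type escapes occur, so the generating agent can revise them. The sketch below is hypothetical: the `Finding` shape, rule labels, and regex heuristics are assumptions for illustration. In practice, AST-based lint rules such as typescript-eslint's `no-explicit-any` and `no-non-null-assertion` are a more reliable way to detect the same constructs.

```typescript
// Hypothetical report entry for one suspicious line.
interface Finding {
  line: number; // 1-based line number
  rule: "explicit-any" | "non-null-assertion";
  text: string;
}

// Heuristic patterns; they may misfire inside strings or comments.
const EXPLICIT_ANY = /:\s*any\b|\bas\s+any\b|<any>/;
const NON_NULL = /[\w)\]]!(?=[.\[;,)])/;

function findTypeEscapes(code: string): Finding[] {
  const findings: Finding[] = [];
  code.split("\n").forEach((text, index) => {
    if (EXPLICIT_ANY.test(text)) {
      findings.push({ line: index + 1, rule: "explicit-any", text: text.trim() });
    }
    if (NON_NULL.test(text)) {
      findings.push({ line: index + 1, rule: "non-null-assertion", text: text.trim() });
    }
  });
  return findings;
}

// The gate passes only if the number of findings stays within the budget.
function passesGate(code: string, maxFindings: number = 0): boolean {
  return findTypeEscapes(code).length <= maxFindings;
}

const generated = [
  "const a: any = 1;",
  "const b = a!.x;",
  "const c: number = 2;",
].join("\n");

console.log(findTypeEscapes(generated).map((f) => `${f.line}:${f.rule}`));
// [ '1:explicit-any', '2:non-null-assertion' ]
console.log(passesGate(generated)); // false
```

Reporting line numbers rather than a single pass/fail result gives the generating agent something concrete to fix, which is the point of checking during creation rather than after merge.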

The study illuminates a critical tension within modern software development: the allure of rapid iteration versus the imperative of long-term maintainability. While AI agents demonstrate an ability to quickly generate code accepted by reviewers, the introduction of type-related issues suggests a potential accumulation of technical debt. This echoes Kernighan’s observation that “every abstraction carries the weight of the past.” The apparent trade-off between immediate progress and future resilience is a recurring theme in system design, and this research underscores the necessity of proactively addressing these concerns before they compound. A focus on graceful decay, rather than simply accelerated creation, remains paramount.

What Lies Ahead?

The observed tendency of AI agents to generate type-related issues in TypeScript is not, perhaps, a failing of the agents themselves, but a symptom of a larger truth. Systems age not because of errors, but because time is inevitable. The higher acceptance rate of their pull requests, despite the introduction of potential technical debt, suggests a troubling efficiency – a swiftness that prioritizes immediate functionality over long-term maintainability. It isn’t a matter of if the debt will be collected, but when, and at what compounding interest.

Future work should move beyond simply identifying these patterns; it must explore the systemic consequences. How does this accelerated accumulation of technical debt affect the overall resilience of software projects? Does the perceived efficiency of AI-generated code ultimately create more brittle, less adaptable systems? The focus should shift from optimizing for immediate acceptance to assessing the long-term cost of these seemingly innocuous compromises.

Ultimately, this research hints at a fundamental tension. Static type systems are intended to provide guarantees, to delay the inevitable decay of complex software. But sometimes stability is just a delay of disaster. The question isn’t whether AI will write perfect code, but whether it will accelerate the rate at which all code – human or machine-authored – succumbs to the entropy inherent in any complex system.

Original article: https://arxiv.org/pdf/2602.17955.pdf

Contact the author: https://www.linkedin.com/in/avetisyan/

2026-02-23 19:28