Author: Denis Avetisyan

Researchers have developed a new soft optical sensor with an integrated lens, enabling improved light control and precision in mechanosensing applications.

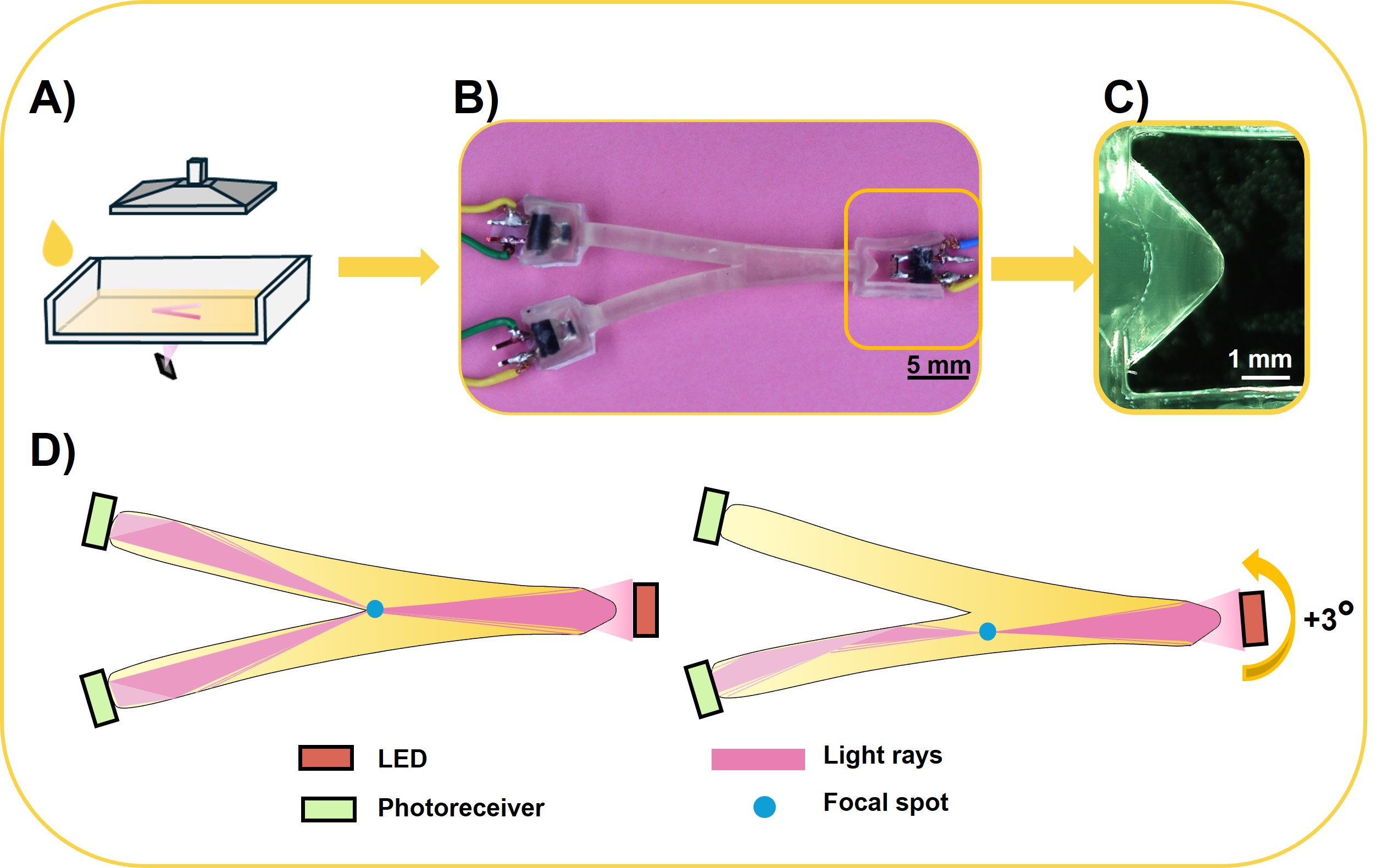

This work demonstrates the fabrication and characterization of a 3D-printed soft optical sensor (SOLen) incorporating a lens for enhanced light guidance and sensitivity.

Achieving robust sensing within soft robots remains challenging due to the need for fabrication-compatible methods that avoid performance degradation from uncontrolled light propagation. This is addressed in ‘3D-printed Soft Optical sensor with a Lens (SOLen) for light guidance in mechanosensing’, which presents a novel approach integrating a 3D-printed lens within a soft optical sensor to enhance light control and signal precision. By leveraging deformation-induced lens rotation and focal spot translation within a Y-shaped waveguide, the sensor generates a differential optical output encoding both motion direction and amplitude. Could this transferable material-to-optics workflow unlock new functionalities and sensing capabilities for next-generation soft robotic systems?

Deconstructing Softness: The Quest for Integrated Sensing

The potential of soft robotics lies in its ability to interact with environments and objects in a way that is both adaptable and safe, a stark contrast to the rigid and often forceful movements of conventional robots. This capability, however, is fundamentally reliant on precise deformation sensing – the robot must ‘know’ how it is bending, stretching, and twisting in order to execute tasks effectively and avoid unintended consequences. Unlike industrial robots where position is paramount, soft robots operate on the principle of continuous change; therefore, accurately measuring these changes across the entire body of the robot is not merely a technical challenge, but a prerequisite for realizing their full potential in applications ranging from delicate surgical procedures to safe human-robot collaboration and exploration of unpredictable environments.

Conventional sensors, typically rigid and bulky, present a fundamental mismatch with the inherent flexibility of soft robotics. These devices often struggle to conform to the continuously changing shapes of soft bodies, hindering accurate measurement of deformation and strain. Beyond physical incompatibility, integrating these sensors into the soft robotic structure proves difficult; traditional mounting methods can compromise the system’s compliance or require complex wiring, diminishing the benefits of a soft design. Consequently, a key obstacle in advancing soft robotics lies in developing sensing technologies that not only exhibit similar mechanical properties – being stretchable, bendable, and compliant – but also seamlessly integrate with the soft materials, offering a truly unified and responsive system.

Conventional optical sensing techniques, while frequently employed for monitoring deformation in robotics, encounter significant limitations when applied to soft, compliant systems. A primary challenge is susceptibility to extraneous light: ambient illumination and internal reflections within the soft material itself can degrade signal fidelity. These unwanted contributions add noise that obscures the subtle intensity changes caused by actual deformation. Furthermore, light transmitted through soft materials, which often lack the rigid, reflective surfaces found in traditional robotics, tends to spread diffusely, weakening the optical signal and further complicating detection. Consequently, reliable and precise sensing in soft robots requires approaches that minimize the impact of these inherent optical disturbances.

To truly realize the potential of soft robotics, a paradigm shift in sensing technology is necessary. Current methods often rely on rigid components or external sensing, hindering the inherent adaptability of these systems. Researchers are now focusing on integrated optics and monolithic manufacturing techniques to create sensors within the soft robotic structure itself. This approach involves fabricating waveguides and optical components directly into the soft material, enabling highly accurate and localized deformation sensing without compromising flexibility. By eliminating discrete sensors and external wiring, monolithic integration minimizes signal noise, enhances durability, and allows for the creation of sensors that stretch, bend, and twist seamlessly with the robot’s movements – paving the way for more responsive, reliable, and truly integrated soft robotic systems.

![This soft robot architecture integrates [latex]\text{SOLen}[/latex] sensors by using structural waveguides and printed lenses to focus light for distributed sensing capabilities.](https://arxiv.org/html/2602.17421v1/figs/Figure_5.jpg)

The SOLen Sensor: Embedding Sight Within the Machine

The SOLen sensor achieves functionality by fabricating an optical lens directly within its elastomeric body, creating a fully integrated, monolithic device. This design eliminates the need for discrete lens assembly, simplifying manufacturing and improving robustness. The lens is not a separate component bonded or mechanically aligned; rather, the refractive surfaces are defined by the geometry of the soft material itself. This approach enables seamless integration with the sensor’s other soft robotic components and allows for the creation of highly compliant and deformable optical systems. The monolithic construction minimizes potential failure points associated with traditional optical assemblies and facilitates miniaturization of the overall sensor package.

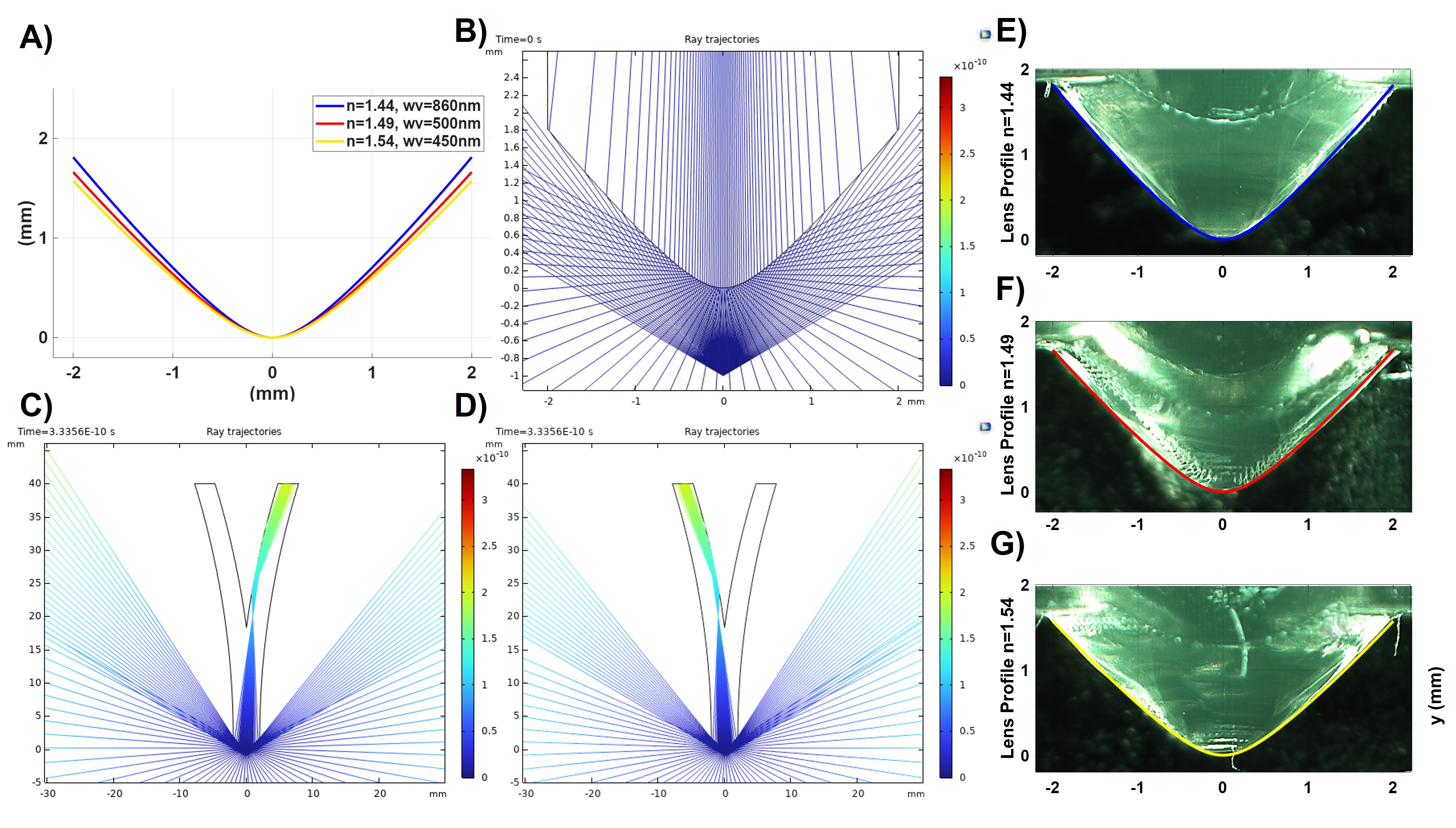

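The SOLen sensor’s optical system uses a lens profile defined by a Cartesian oval. This non-spherical geometry was selected to optimize light focusing within the soft sensor structure. Unlike a spherical surface, a Cartesian oval images an on-axis point without spherical aberration, which tightens the focal spot and strengthens the signal. In general, a Cartesian oval is the curve along which the optical path between two conjugate points is constant, [latex] n_1 d_1 + n_2 d_2 = \text{const} [/latex], where [latex]d_1[/latex] and [latex]d_2[/latex] are the distances from a point on the surface to the two foci and [latex]n_1[/latex] and [latex]n_2[/latex] are the refractive indices on either side. The profile is given here in the form [latex] \frac{x^2}{a^2} + \frac{y^2}{b^2} = 1 [/latex], which is an ellipse, the special case a Cartesian oval reduces to when one conjugate point lies at infinity (collimated light). The parameters ‘a’ and ‘b’ are the semi-major and semi-minor axes, respectively, and were iteratively adjusted during the design process to achieve peak performance based on ray tracing simulations.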
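To make the focusing argument concrete, the following minimal Python sketch traces parallel rays through an elliptical refracting surface and through a spherical surface with the same vertex curvature. It assumes the special case in which the ellipse eccentricity equals 1/n (the condition under which an ellipse acts as a Cartesian oval for collimated light), an index of 1.50, and a hypothetical semi-major axis of 10 mm. The function names and dimensions are illustrative and are not taken from the paper.

```python
import math

def refract(d, normal, n1, n2):
    """Vector form of Snell's law. d and normal are unit 2D vectors,
    with normal pointing back toward the incoming ray."""
    eta = n1 / n2
    cos_i = -(d[0] * normal[0] + d[1] * normal[1])
    sin2_t = eta * eta * (1.0 - cos_i * cos_i)
    k = eta * cos_i - math.sqrt(1.0 - sin2_t)
    return (eta * d[0] + k * normal[0], eta * d[1] + k * normal[1])

def axis_crossing(point, normal, n):
    """x-coordinate where a ray travelling along +x in air, refracted at
    `point` into a medium of index n, crosses the optical axis (y = 0)."""
    tx, ty = refract((1.0, 0.0), normal, 1.0, n)
    return point[0] - point[1] * tx / ty

def ellipse_hit(y, a, b):
    """Point and outward unit normal on the left half of x^2/a^2 + y^2/b^2 = 1."""
    x = -a * math.sqrt(1.0 - (y / b) ** 2)
    gx, gy = x / a**2, y / b**2
    g = math.hypot(gx, gy)
    return (x, y), (gx / g, gy / g)

def circle_hit(y, a, radius):
    """Point and outward unit normal on a circle of the given radius whose
    left vertex sits at x = -a (the same vertex as the ellipse)."""
    cx = -a + radius
    x = cx - math.sqrt(radius**2 - y**2)
    return (x, y), ((x - cx) / radius, y / radius)

N_LENS = 1.50                  # refractive index quoted in the article
A = 10.0                       # hypothetical semi-major axis, mm
ECC = 1.0 / N_LENS             # eccentricity assumed for stigmatic focusing
B = A * math.sqrt(1.0 - ECC**2)
R_VERTEX = B**2 / A            # radius of curvature at the ellipse vertex

heights = [0.5, 1.0, 2.0, 3.0]  # ray heights above the axis, mm
ellipse_focus = [axis_crossing(*ellipse_hit(y, A, B), N_LENS) for y in heights]
circle_focus = [axis_crossing(*circle_hit(y, A, R_VERTEX), N_LENS) for y in heights]

print("ellipse:", [round(x, 4) for x in ellipse_focus])
print("circle: ", [round(x, 4) for x in circle_focus])
```

Under these assumptions every ray refracted by the ellipse crosses the axis at the far focus, x = a/n, while the crossing point for the spherical surface drifts toward the lens as the ray height grows, which is the spherical aberration the oval profile avoids.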

The SOLen sensor’s lens design was iteratively refined using Finite Element Simulation employing a Ray Optics approach. This method modeled light propagation through the proposed lens geometry, allowing for the evaluation of focusing characteristics and signal strength without physical prototyping. Parameters such as lens curvature, material refractive index, and incident light angle were varied within the simulation environment. The resulting data enabled optimization of the lens profile – a Cartesian Oval – to maximize light concentration on the sensor’s active area, ultimately improving the sensor’s sensitivity and signal-to-noise ratio. Simulation results were validated through comparison with fabricated prototypes, confirming the accuracy of the computational model and the effectiveness of the optimization process.

The refractive index of the SOLen sensor’s integrated lens material, 1.50, is a critical parameter in achieving the desired focal length and signal strength. This value is the ratio of the speed of light in a vacuum to its speed within the lens material, and it directly sets how strongly light bends as it enters the lens. Simulation and iterative design adjustments confirmed that, for a refractive index of 1.50, the chosen lens profile maximized light concentration at the sensor’s focal plane, improving detection sensitivity. A different index would shift the focal point away from the receivers, so the same geometry would no longer converge incident light accurately and performance would drop.
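The sensitivity of the focus to the refractive index can be illustrated with the paraxial formula for a single convex refracting surface, where collimated light focuses at a distance nR/(n - 1) behind the vertex. The sketch below is a simplified stand-in for the paper's ray tracing, and the 2 mm radius of curvature is a hypothetical value chosen only for illustration.

```python
def back_focal_distance(n_lens, radius_mm, n_outside=1.0):
    """Paraxial distance from the surface vertex to the focus inside the lens
    for collimated light hitting a single convex refracting surface."""
    return n_lens * radius_mm / (n_lens - n_outside)

RADIUS_MM = 2.0  # hypothetical vertex radius of curvature, not from the paper

for n in (1.45, 1.50, 1.55):
    f = back_focal_distance(n, RADIUS_MM)
    print(f"n = {n:.2f} -> focus {f:.2f} mm behind the vertex")
```

In this simplified model a change of 0.05 in the index moves the focus by several tenths of a millimetre, which is comparable to the size of the printed features reported for the device.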

Materializing the Vision: Fabrication and Characterization

The monolithic sensor structure was fabricated utilizing Digital Light Processing (DLP) printing with an Acrylate Polyurethane resin. This additive manufacturing technique allows for the creation of complex geometries directly from a digital model through the selective curing of liquid photopolymer by a projected light pattern. Acrylate Polyurethane was selected due to its desirable mechanical properties – specifically its flexibility and resilience – and its compatibility with the required optical characteristics of the sensor. The DLP process facilitated the creation of a single, integrated component, eliminating the need for assembly and improving structural integrity.

UV-Vis spectroscopy was utilized to characterize the optical properties of the lens material, demonstrating a high level of transmittance across the visible and near-infrared spectrum. Measurements confirmed that the material exhibits greater than 85% transmittance within the 450 to 900 nanometer wavelength range. This level of optical clarity is critical for efficient light transmission through the integrated lens and supports the functionality of the sensor. The data indicates minimal light absorption or scattering within this spectral range, validating the suitability of the chosen material for optical applications.

Microscope imaging was utilized to assess the dimensional accuracy of the fabricated lens structure. Analysis of the printed features revealed a minimum resolvable positive feature size of 610 μm. This measurement indicates the smallest positive element that could be reliably reproduced during the printing process, and serves as a critical parameter for evaluating the fidelity of the fabricated optical element and its ability to maintain intended functionality. The resolution limit is determined by the printer’s capabilities and the material properties of the acrylate polyurethane resin.

Monolithic manufacturing, in this context, refers to the fabrication of the optical element and the flexible body as a single, continuous structure. This approach avoids traditional assembly methods and their associated interfacial weaknesses. The fabrication process achieved a Z-axis resolution of 25 μm, meaning features could be defined and created with 25-micrometer precision along the vertical dimension. This level of resolution is critical for accurately defining the optical characteristics of the lens and ensuring its functional integration within the soft material, enabling a structurally sound and mechanically stable device.
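As a rough illustration of what these print resolutions imply, the short sketch below counts the layers needed for a given build height and compares the two reported figures. The 5 mm lens height is a hypothetical value and does not come from the paper.

```python
Z_LAYER_UM = 25        # Z-axis resolution reported in the article
MIN_FEATURE_UM = 610   # minimum resolvable positive feature size reported

def layers_needed(height_um, layer_um=Z_LAYER_UM):
    """Number of printed layers needed to reach a given height (ceiling division)."""
    return -(-height_um // layer_um)

lens_height_um = 5000  # hypothetical lens height, not from the paper

print("layers for the lens:", layers_needed(lens_height_um))
print("feature size / layer thickness:", MIN_FEATURE_UM / Z_LAYER_UM)
```

The ratio shows that the smallest reliably printed feature is more than twenty times coarser than the layer thickness, so feature size rather than layer height is likely to be the tighter constraint on the lens profile.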

![UV-Vis spectroscopy reveals the transmittance and absorbance of a single layer of ma-PU, which, when modeled as a slab of thickness [latex]h[/latex], allows for the determination of its refractive index based on wavelength λ and reflectance [latex]R[/latex].](https://arxiv.org/html/2602.17421v1/figs/Figure_2.jpg)
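The link between reflectance and refractive index mentioned in the caption can be sketched with the normal-incidence Fresnel formula. This is a simplified assumption: the paper's slab model may include thickness, wavelength dependence, and absorption, none of which are reproduced here, and the function names are illustrative.

```python
import math

def fresnel_reflectance(n, n_outside=1.0):
    """Normal-incidence reflectance of a single interface (no absorption)."""
    return ((n - n_outside) / (n + n_outside)) ** 2

def index_from_reflectance(reflectance, n_outside=1.0):
    """Invert the single-interface Fresnel formula, assuming n > n_outside."""
    r = math.sqrt(reflectance)
    return n_outside * (1.0 + r) / (1.0 - r)

def slab_transmittance(n):
    """Transmittance of a lossless slab in air, summing incoherent
    multiple reflections between its two faces."""
    r = fresnel_reflectance(n)
    return (1.0 - r) / (1.0 + r)

R = fresnel_reflectance(1.50)
print(f"R = {R:.3f}")
print(f"n recovered = {index_from_reflectance(R):.3f}")
print(f"lossless slab T = {slab_transmittance(1.50):.3f}")
```

For an index of 1.50 this gives about 4% reflection per face and a lossless transmittance near 92%, which is consistent with the reported transmittance above 85% once some absorption and scattering are allowed for.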

Shielding the Signal: Robustness Against Ambient Interference

Optical sensors, despite their precision, are inherently vulnerable to interference from ambient illumination. This external light adds unwanted noise to the signal, lowering the signal-to-noise ratio and hindering accurate detection. The issue arises because these sensors often capture not just the intended signal but also any stray light present in the environment, such as sunlight, artificial lighting, or reflections. This noise can obscure the genuine signal, leading to measurement errors or unreliable readings, particularly in applications that demand high sensitivity or operate in brightly lit or variable lighting conditions. Consequently, mitigating the impact of ambient light is crucial for consistent and dependable performance of optical sensing systems.

To mitigate the detrimental effects of external light interference, the sensor incorporates a dual-coating strategy utilizing titanium dioxide (TiO2) and gold. The TiO2 layer acts as a primary broadband antireflection coating, significantly reducing the amount of ambient light reaching the sensor surface. This is further complemented by a carefully applied gold coating, which selectively reflects specific wavelengths known to contribute to noise while allowing the target signal to pass through. This synergistic approach effectively shields the sensor from unwanted illumination, improving the signal-to-noise ratio and ensuring consistent performance even under bright or fluctuating lighting conditions. The precise deposition and thickness control of these coatings are crucial to maximizing their shielding capabilities and maintaining the sensor’s sensitivity.

The sensor’s performance isn’t merely about detecting light, but doing so consistently, even when faced with interference. Researchers addressed this by combining the integrated lens with protective titanium dioxide and gold coatings. This combination functions as a shield, minimizing the impact of ambient illumination and stray light that would otherwise diminish signal clarity. The result is a sensing system intended to maintain accuracy and reliability in dynamic and unpredictable conditions, from brightly lit outdoor settings to dimly lit indoor spaces, where conventional sensors might falter. This resilience broadens the scope of potential applications.

The efficacy of intensity modulation – a technique where information is encoded in the amplitude of light – is directly correlated with the clarity of the received signal. By minimizing interference from ambient light through the strategic application of TiO2 and gold coatings, the sensor’s ability to accurately discern subtle changes in light intensity is substantially improved. This heightened signal clarity translates to a more reliable and sensitive detection process, allowing for precise measurements even in brightly lit or otherwise challenging environments. Consequently, the sensor’s overall performance is not merely enhanced, but fundamentally stabilized, enabling consistent and trustworthy data acquisition regardless of external light conditions.

![Experimental validation demonstrates that tilting the emitter/lens assembly in a Y-shaped optical sensor effectively switches focal spot intensity between the two receivers, resulting in a measurable voltage redistribution that correlates with the rotation angle [latex] (\pm 3^\circ) [/latex] and is repeatable over multiple cycles.](https://arxiv.org/html/2602.17421v1/figs/Figure_4.jpg)
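The differential readout described in the caption can be sketched with a toy model in which a Gaussian focal spot slides across two receivers as the lens tilts. The lever arm, receiver spacing, and spot width below are hypothetical values, and the Gaussian spot shape is an assumption rather than the paper's measured profile.

```python
import math

def receiver_voltages(theta_deg, lever_mm=10.0, sep_mm=0.5, spot_mm=0.6):
    """Toy model: a Gaussian focal spot slides sideways by lever * tan(theta)
    and is sampled by two receivers at +/- sep. All defaults are hypothetical."""
    x = lever_mm * math.tan(math.radians(theta_deg))
    v_a = math.exp(-2.0 * ((x - sep_mm) / spot_mm) ** 2)
    v_b = math.exp(-2.0 * ((x + sep_mm) / spot_mm) ** 2)
    return v_a, v_b

def differential_signal(v_a, v_b):
    """Normalized difference in [-1, 1]: the sign gives the direction of
    rotation and the magnitude grows with its amplitude."""
    return (v_a - v_b) / (v_a + v_b)

for theta in (-3, -1, 0, 1, 3):
    d = differential_signal(*receiver_voltages(theta))
    print(f"{theta:+d} deg -> {d:+.3f}")
```

Dividing by the sum of the two voltages makes the output insensitive to a common scaling of both channels, such as a drift in emitter brightness, which is one reason a normalized difference is a common choice for two-receiver intensity sensors.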

The pursuit detailed in this research exemplifies a fundamental principle: understanding limitations through rigorous testing. This paper doesn’t simply present a functional soft optical sensor; it actively challenges the constraints of traditional sensing methods by integrating a 3D-printed lens. As a line often attributed to Alan Turing puts it, “Sometimes it is the people no one imagines anything of who do the things that no one can imagine.” This mirrors the approach taken here, a departure from established techniques to achieve precise light guidance and signal fidelity within a soft robotic system. The successful implementation of this lens suggests that pushing boundaries, even in material characterization and waveguide design, can yield unexpectedly powerful results.

Beyond the Lens

The successful coupling of 3D-printed optics and soft sensing materials raises a fundamental question: has the pursuit of ‘flawless’ signal transmission blinded the field to the potential of controlled distortion? Current approaches prioritize minimizing light loss and maximizing fidelity, yet a deliberately imperfect waveguide – one that subtly alters the light spectrum or polarization – could encode additional information about the applied force. Perhaps the ‘noise’ isn’t a bug, but a latent variable, a fingerprint of the deformation itself.

Material characterization, while thorough, remains tethered to static measurements. The interplay between lens deformation and optical performance under cyclic loading, or in response to complex, multi-axial strain, remains largely unexplored. Does the lens, when stressed, become a dynamic filter, subtly shifting the detectable wavelengths? Investigating these nonlinear optical responses could unlock a new dimension of sensitivity, moving beyond simple magnitude detection toward nuanced ‘tactile’ perception.

The current architecture, while demonstrating proof-of-concept with a monolithically printed lens, still pairs that lens with a separate emitter and receivers. The true challenge lies in moving toward fully integrated optical-soft sensor systems, where the lens is not only a printed optical element but also part of the robot’s structural and sensing mechanism. This requires a shift in design philosophy, viewing the sensor not as a collection of parts, but as a single entity in which form and function are inextricably linked.

Original article: https://arxiv.org/pdf/2602.17421.pdf

Contact the author: https://www.linkedin.com/in/avetisyan/

2026-02-21 05:39