Author: Denis Avetisyan

Researchers have released HortiMulti, a comprehensive dataset designed to push the boundaries of robot localization and mapping within the complex environments of agricultural polytunnels.

The HortiMulti dataset and benchmarking suite reveal significant challenges for current SLAM algorithms operating in dense, repetitive agricultural settings.

Despite advances in agricultural robotics, reliable autonomy in complex horticultural environments remains a significant challenge. To address this gap, we introduce HortiMulti: A Multi-Sensor Dataset for Localisation and Mapping in Horticultural Polytunnels, a comprehensive, multi-seasonal dataset collected within commercial strawberry and raspberry polytunnels, capturing the perceptual difficulties inherent to these spaces. Our analysis reveals substantial performance limitations of current state-of-the-art SLAM and perception algorithms when applied to this challenging domain, due to factors like dynamic foliage, specular reflections, and GNSS-denied conditions. Will HortiMulti catalyze the development of more robust and adaptable robotic perception systems for the future of precision agriculture?

Navigating the Labyrinth: The Challenge of Precise Localization in Polytunnels

Agricultural automation relies heavily on a robot’s ability to determine its own position, a process known as localization. Inside polytunnels this is much harder than in open fields. Global Navigation Satellite Systems (GNSS) perform reliably outdoors, but the plastic and metal framework of a polytunnel attenuates satellite signals and degrades the resulting position estimates. The repetitive layout, with rows of near-identical crops and supporting structures, adds perceptual aliasing: different locations produce similar sensor observations, so the robot cannot uniquely identify where it is. Standard localization algorithms, which depend on consistent and distinguishable landmarks, therefore struggle to deliver the accuracy and robustness needed for automated harvesting, pruning, precision spraying, targeted treatment, or detailed crop monitoring in these controlled environments.
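To make perceptual aliasing concrete, here is a toy Python sketch. It assumes a hypothetical grid map in which each cell stores a coarse observation "signature" that depends only on the position along a row: supports repeat every three cells, and the tunnel wall is visible only at the row ends. The names `build_polytunnel_map` and `candidate_locations`, and the signature scheme itself, are illustrative inventions, not part of HortiMulti.

```python
from typing import Dict, List, Tuple

# Hypothetical coarse observation: (support phase, wall-visible flag).
Signature = Tuple[int, int]
Cell = Tuple[int, int]  # (row index, position along the row)


def build_polytunnel_map(num_rows: int, row_length: int) -> Dict[Cell, Signature]:
    """Toy map in which the signature ignores the row index (rows look alike)."""
    world: Dict[Cell, Signature] = {}
    for row in range(num_rows):
        for pos in range(row_length):
            # Hypothetical: supports repeat every 3 cells; the tunnel wall is
            # only visible at either end of a row.
            wall_visible = pos in (0, row_length - 1)
            world[(row, pos)] = (pos % 3, int(wall_visible))
    return world


def candidate_locations(world: Dict[Cell, Signature], observation: Signature) -> List[Cell]:
    """Every cell whose stored signature matches the observation exactly."""
    return sorted(cell for cell, sig in world.items() if sig == observation)


world = build_polytunnel_map(num_rows=4, row_length=10)
matches = candidate_locations(world, world[(2, 5)])
print(len(matches))  # 12 equally plausible cells for a single observation
```

With four rows of ten cells, one mid-row observation matches twelve cells, so exact appearance matching alone cannot tell the robot which row, or which bay within the row, it is in.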

Polytunnel environments combine several factors that severely limit conventional localization systems. Reduced visibility, caused by dense foliage and diffused light, degrades visual methods. Plant growth over the season also changes the environment continuously, so a map built early in the season may no longer match what the robot observes weeks later, and this appearance change compounds the perceptual aliasing caused by repeated structures. These conditions call for solutions that go beyond static mapping: adaptive algorithms that can interpret a continually evolving scene, supported by sensor fusion and data processing tailored to controlled-environment agriculture.

Establishing a Ground Truth: The HortiMulti Dataset for Robust Evaluation

The HortiMulti dataset consists of synchronized data captured over multiple growing seasons within working commercial strawberry and raspberry polytunnels. Existing public datasets for robotics and computer vision often lack the complexity of real operational environments, because they are typically collected in simple, largely static lab spaces or in open outdoor scenes. HortiMulti addresses this gap with data collected amid dynamic elements such as growing plants, mobile robots, and human workers, all inside a cluttered and reflective polytunnel structure. It includes LiDAR, camera, and inertial measurement unit (IMU) streams, providing a comprehensive record for developing and evaluating algorithms in a challenging, agriculturally relevant setting.
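Working with multi-sensor data usually starts with pairing measurements from different streams. A minimal sketch, assuming each stream is a sorted list of timestamps in seconds on a shared clock (HortiMulti's actual file layout and topic names are not described here), pairs each LiDAR sweep with the nearest camera frame within a tolerance. The function name `associate` and the example rates are hypothetical.

```python
import bisect
from typing import List, Optional, Sequence, Tuple


def associate(reference: Sequence[float], other: Sequence[float],
              max_dt: float) -> List[Tuple[int, Optional[int]]]:
    """Pair each reference timestamp with the index of the nearest timestamp in
    `other` (sorted ascending), or None if none lies within max_dt seconds."""
    pairs: List[Tuple[int, Optional[int]]] = []
    for i, t in enumerate(reference):
        j = bisect.bisect_left(other, t)
        best: Optional[int] = None
        # The nearest neighbour of t in a sorted list is at index j - 1 or j.
        for k in (j - 1, j):
            if 0 <= k < len(other) and abs(other[k] - t) <= max_dt:
                if best is None or abs(other[k] - t) < abs(other[best] - t):
                    best = k
        pairs.append((i, best))
    return pairs


# Hypothetical timestamps: 10 Hz LiDAR sweeps and roughly 30 Hz camera frames.
lidar_t = [0.0, 0.1, 0.2, 0.3]
camera_t = [0.005, 0.038, 0.071, 0.104, 0.137, 0.170, 0.203, 0.236, 0.269]
print(associate(lidar_t, camera_t, max_dt=0.01))
# [(0, 0), (1, 3), (2, 6), (3, None)]
```

The binary search keeps each lookup logarithmic in the length of the camera stream, and returning `None` rather than a distant frame makes dropped or late messages visible instead of silently mispairing them.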

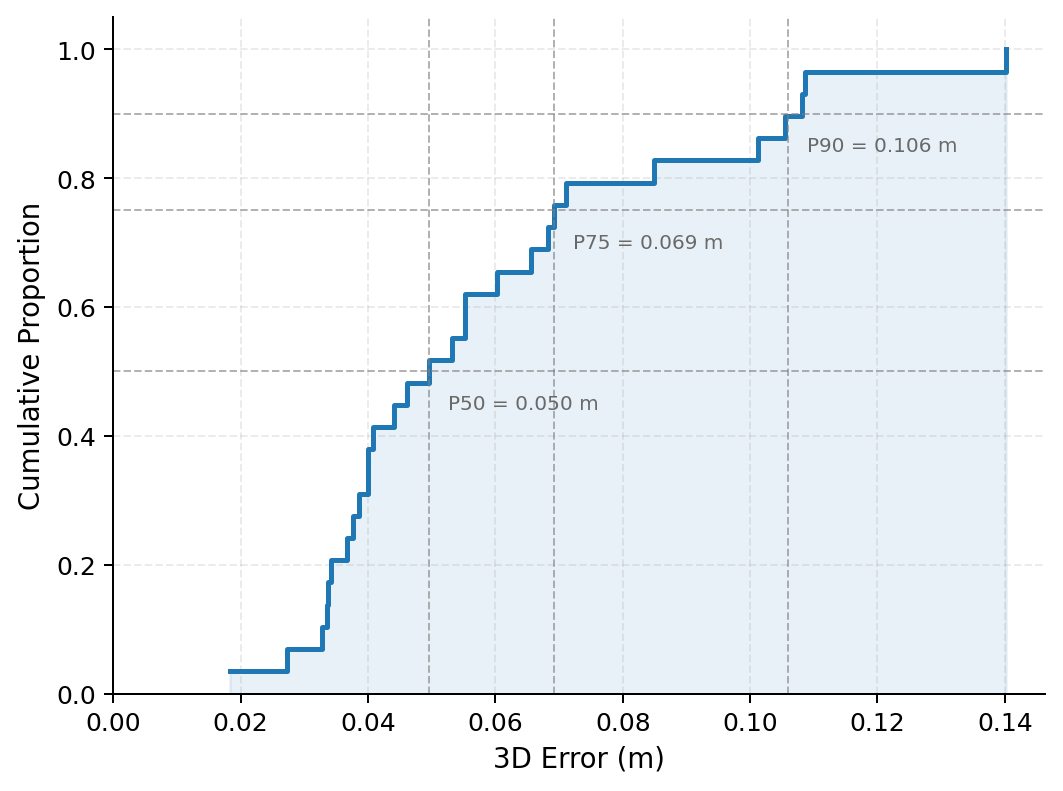

The HortiMulti dataset’s ground truth data is established via Poly-TagSLAM, a system integrating detections from fiducial tags, surveyed control points, and LiDAR-inertial odometry. This fusion process yields a positional accuracy of 7.3 cm Root Mean Squared Error (RMSE) when constrained by surveyed positions. The methodology utilizes surveyed positions as a global reference to correct for drift and scale errors inherent in LiDAR-inertial odometry, ensuring a highly accurate ground truth for evaluating localization and mapping algorithms within the horticultural environment. The system’s performance is verified through comparison with the surveyed control points, providing an independent measure of its accuracy.
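As an illustration of how an RMSE against surveyed control points can be computed, the sketch below first finds the least-squares rotation and translation (no scale) that best align estimated positions with surveyed ones, then reports the RMSE of the residuals. It is a planar (2D) simplification assumed for brevity; the article does not specify the exact alignment or evaluation protocol used for Poly-TagSLAM, and the function names are hypothetical.

```python
import math
from typing import Sequence, Tuple

Point = Tuple[float, float]


def align_2d(est: Sequence[Point], ref: Sequence[Point]) -> Tuple[float, float, float]:
    """Least-squares rotation theta and translation (tx, ty), without scale,
    that map the estimated points onto the reference (surveyed) points."""
    n = len(est)
    ex, ey = sum(p[0] for p in est) / n, sum(p[1] for p in est) / n
    rx, ry = sum(q[0] for q in ref) / n, sum(q[1] for q in ref) / n
    a = b = 0.0
    for (px, py), (qx, qy) in zip(est, ref):
        px, py, qx, qy = px - ex, py - ey, qx - rx, qy - ry
        a += qx * px + qy * py  # coefficient of cos(theta) in sum q . R p
        b += qy * px - qx * py  # coefficient of sin(theta) in sum q . R p
    theta = math.atan2(b, a)
    c, s = math.cos(theta), math.sin(theta)
    return theta, rx - (c * ex - s * ey), ry - (s * ex + c * ey)


def aligned_rmse(est: Sequence[Point], ref: Sequence[Point]) -> float:
    """RMSE of position residuals after the best rigid 2D alignment."""
    theta, tx, ty = align_2d(est, ref)
    c, s = math.cos(theta), math.sin(theta)
    total = 0.0
    for (px, py), (qx, qy) in zip(est, ref):
        x, y = c * px - s * py + tx, s * px + c * py + ty
        total += (x - qx) ** 2 + (y - qy) ** 2
    return math.sqrt(total / len(est))
```

Aligning before computing the error removes any global offset between the estimate's frame and the survey frame, so the RMSE reflects only how well the trajectory's shape matches the control points.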

By combining LiDAR, visual, and IMU data streams, the HortiMulti dataset supports thorough evaluation of Simultaneous Localization and Mapping (SLAM) and place recognition algorithms across the diverse conditions of a commercial horticultural setting. Analysis on the dataset also validates the Poly-TagSLAM ground truth generation system, which achieves a Root Mean Squared Error (RMSE) of 6.6 cm against surveyed ground truth positions, supporting its use as a reference for localization and mapping benchmarks.

Validating Performance: Benchmarking SLAM and Place Recognition Algorithms

HortiMulti provides a platform for evaluating Simultaneous Localization and Mapping (SLAM) algorithms, specifically ORB-SLAM3, DSO, OpenVINS, and LIO-SAM, in a realistic agricultural setting. The evaluation uses data captured in working commercial polytunnels rather than in controlled laboratory environments. The platform supports quantitative comparison of metrics such as trajectory error, map accuracy, and computational cost under conditions representative of dynamic agricultural scenes, including variations in lighting, vegetation density, and seasonal change. This realistic testing lets researchers assess how robust and reliable each algorithm would be when deployed on agricultural robots.
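Trajectory error is often reported both as an absolute error after alignment and as a relative pose error (RPE), which measures drift over fixed intervals. A minimal planar sketch of translational RPE follows; it assumes poses are `(x, y, yaw)` tuples at matching timestamps, and it is a generic definition rather than the exact metric used in the HortiMulti benchmark. The function names are hypothetical.

```python
import math
from typing import Sequence, Tuple

Pose2D = Tuple[float, float, float]  # (x, y, yaw in radians)


def relative_translation(a: Pose2D, b: Pose2D) -> Tuple[float, float]:
    """Translation from pose a to pose b, expressed in a's local frame."""
    dx, dy = b[0] - a[0], b[1] - a[1]
    c, s = math.cos(a[2]), math.sin(a[2])
    return c * dx + s * dy, -s * dx + c * dy


def rpe_translation_rmse(gt: Sequence[Pose2D], est: Sequence[Pose2D],
                         delta: int = 1) -> float:
    """RMSE of the translational relative pose error over pairs (i, i + delta).
    In 2D this equals the norm of the translation part of inv(dGT) * dEST."""
    sq = []
    for i in range(len(gt) - delta):
        gx, gy = relative_translation(gt[i], gt[i + delta])
        ex, ey = relative_translation(est[i], est[i + delta])
        sq.append((gx - ex) ** 2 + (gy - ey) ** 2)
    return math.sqrt(sum(sq) / len(sq))
```

Because each relative motion is expressed in the local frame of its starting pose, a constant global rotation or offset of the estimate does not contribute to the error, whereas scale drift along a crop row does.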

Place recognition algorithms, including PointNetVLAD, SPVSoAP3D, and LoGG3D-Net, achieve consistently low Recall@1 on HortiMulti. Recall@1 is the fraction of queries for which the single top-ranked retrieved location is a correct match; it averaged below 50% across the tested algorithms and datasets. Recognizing previously visited locations is therefore difficult in these settings, likely because of seasonal changes in appearance, variations in lighting, and the density of repetitive features along crop rows. Further analysis shows that performance is sensitive to dataset composition and parameter tuning, highlighting the need for more robust and adaptable place recognition techniques.
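A minimal sketch of Recall@1 for place recognition, assuming each query and database entry is a pair of a global descriptor and a 2D capture position, and that a retrieval counts as correct when the top match was captured within a distance threshold. The threshold value, the data layout, and the function name are assumptions; the benchmark's exact protocol is not given here.

```python
import math
from typing import Sequence, Tuple

# Each entry: (global descriptor, (x, y) capture position in metres).
Entry = Tuple[Sequence[float], Tuple[float, float]]


def recall_at_1(queries: Sequence[Entry], database: Sequence[Entry],
                threshold_m: float) -> float:
    """Fraction of queries whose nearest database descriptor (Euclidean
    distance in descriptor space) was captured within threshold_m metres."""
    hits = 0
    for q_desc, q_pos in queries:
        top1 = min(database, key=lambda item: math.dist(q_desc, item[0]))
        if math.dist(q_pos, top1[1]) <= threshold_m:
            hits += 1
    return hits / len(queries)
```

A query whose descriptor most closely resembles a look-alike place further down the tunnel counts as a miss even if the correct place is ranked second, which is why aliasing in repetitive rows pulls Recall@1 down so sharply.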

The evaluated SLAM and place recognition algorithms take fundamentally different approaches to environmental understanding. ORB-SLAM3 is feature-based, detecting and tracking distinct ORB keypoints, whereas DSO is a direct method that minimizes photometric error on image intensities without extracting features. OpenVINS fuses camera and IMU measurements in a filter-based visual-inertial estimator, and LIO-SAM tightly couples LiDAR and IMU measurements in a factor graph. Among the place recognition methods, PointNetVLAD aggregates PointNet point features with a NetVLAD layer, while LoGG3D-Net and SPVSoAP3D learn local features with sparse 3D convolutions and aggregate them with second-order pooling. This diversity allows a comparative analysis under the challenging conditions of agricultural environments, where illumination changes, foliage density, and dynamic objects (e.g., moving machinery, growing crops) can significantly affect the accuracy and reliability of each algorithm.
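Second-order pooling turns a variable-size set of local point features into one fixed-length global descriptor. The sketch below shows a generic second-order average pooling step in plain Python; it uses element-wise signed square-root normalization for simplicity, whereas published methods such as LoGG3D-Net and SPVSoAP3D use learned feature backbones and their own normalization schemes, so treat it as an illustration of the idea only. The function name is hypothetical.

```python
import math
from typing import List, Sequence


def second_order_average_pool(features: Sequence[Sequence[float]]) -> List[float]:
    """Average the outer products f f^T of N local D-dim features into a DxD
    matrix, flatten it, then apply signed square-root and L2 normalization."""
    n, d = len(features), len(features[0])
    pooled = [0.0] * (d * d)
    for f in features:
        for i in range(d):
            for j in range(d):
                pooled[i * d + j] += f[i] * f[j] / n
    # Signed square root (power normalization), then L2 normalization.
    pooled = [math.copysign(math.sqrt(abs(v)), v) for v in pooled]
    norm = math.sqrt(sum(v * v for v in pooled)) or 1.0
    return [v / norm for v in pooled]
```

The result does not depend on the order of the input features, which matters for unordered point clouds, and its length depends only on the feature dimension, not on the number of points in the scan.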

Realizing the Potential: Expanding Applications and Future Directions

HortiMulti, a comprehensive dataset for agricultural robotics, together with advances in robust localization algorithms, could substantially accelerate the development of autonomous agricultural robots. Better localization lets robots navigate complex, real-world environments such as fields and greenhouses with greater precision and reliability, which makes it more feasible to automate labor-intensive tasks like crop monitoring, selective harvesting, and targeted precision spraying. This shift promises not only to ease labor shortages but also to optimize resource use, reduce waste, and improve the efficiency and sustainability of modern food production.

The potential of robotic assistance extends beyond broad-acre farming into the more intricate environments of protected horticulture. In strawberry and raspberry polytunnels, robots developed and benchmarked with datasets such as HortiMulti offer a way to address critical labor shortages and improve yields in these high-value crops. These controlled environments protect crops from the elements but challenge robots with narrow pathways, dense foliage, and delicate fruit that demand precise navigation and manipulation. Successful deployment promises greater efficiency in tasks like harvesting and quality control, and lower resource use through precise application of water and nutrients, strengthening the sustainability of soft fruit production and improving food security.

Continued progress depends on computational strategies that can cope with the inherent complexity of agricultural environments. Future research is likely to prioritize data fusion, combining information from multiple sensors (visual, thermal, and potentially others) into a more complete picture of crop health and field conditions. This can be paired with lifelong learning, which lets robotic systems refine their performance from accumulated experience instead of relying on static programming. Adaptive algorithms will also be essential for handling unpredictable factors such as changing light, weather, and plant growth stages, so that systems operate reliably not just in initial trials but over extended deployments and across diverse agricultural landscapes.

HortiMulti highlights a basic point about robotic systems: robustness is not simply a matter of adding more sensors. The dataset’s challenges, particularly the repetitive structures of polytunnels, show how easily current localization and mapping algorithms falter when faced with ambiguity. The dataset is not just a collection of sensor data; it is a demand for algorithms that move beyond clever solutions toward fundamental clarity. If a system looks clever, it is probably fragile, and HortiMulti seems designed to expose that fragility in agricultural robotics.

What’s Next?

HortiMulti makes explicit what was already implicitly understood: a robust dataset is not merely a collection of sensor readings but a stress test of fundamental assumptions. Current localization and mapping algorithms, clearly challenged by the subtle chaos of a horticultural polytunnel, reveal a reliance on simple geometries and static environments. Their failures in this context are not bugs to be patched but symptoms of architectural limitations. Every optimization for open spaces and predictable textures creates new points of tension when confronted with the organic complexity of a growing crop.

Future work will inevitably focus on sensor fusion and place recognition. However, the true advancement lies not in adding more layers of abstraction, but in reconsidering the underlying representations. The question is not simply ‘can a robot navigate this environment?’ but ‘what does it mean to represent a space defined by growth, change, and deliberate imperfection?’ A mapping solution predicated on permanence will always struggle in a fundamentally impermanent world.

The benchmark presented here is, therefore, less a destination than a starting point. It’s a call for a shift in perspective: from algorithms that conquer complexity, to systems that accommodate it. The architecture must reflect the environment, not impose order upon it. And, perhaps, a humbling acknowledgement that some spaces are best understood not as maps, but as processes.

Original article: https://arxiv.org/pdf/2603.20150.pdf

Contact the author: https://www.linkedin.com/in/avetisyan/

2026-03-24 06:59