Author: Denis Avetisyan

A novel framework leverages artificial intelligence and human expertise to dramatically accelerate and improve the systematic review process.

Researchers introduce LR-Robot, a supervised AI system utilizing large language models and knowledge graphs to conduct real-time systematic literature reviews, demonstrated through a comprehensive analysis of option pricing research.

Despite advances in artificial intelligence for research synthesis, systematic literature reviews often lack contextual depth and require substantial expert oversight. To address this, we introduce ‘LR-Robot: A Unified Supervised Intelligent Framework for Real-Time Systematic Literature Reviews with Large Language Models’, a novel framework integrating human-in-the-loop processes with large language models and retrieval-augmented generation to automate and enhance the rigor of literature reviews. This approach enables multidimensional categorization, relationship mapping, and fine-grained analysis of topic evolution, demonstrated through a comprehensive study of option pricing research. Can such a framework fundamentally reshape how researchers navigate and synthesize the ever-growing body of scientific literature?

Deconstructing the Literature Avalanche

The undertaking of a systematic literature review, once a manageable task for researchers, now presents a substantial challenge due to the relentless expansion of scholarly publications. Traditional methods rely heavily on manual screening, data extraction, and synthesis – processes that demand significant time and dedicated resources. As the volume of research continues its exponential climb, particularly across disciplines like biomedicine and engineering, these manual approaches become increasingly impractical and often unsustainable. The sheer scale necessitates exhaustive searching across multiple databases, careful assessment of relevance, and meticulous data coding, quickly overwhelming individual researchers or small teams. This bottleneck hinders the timely identification of critical evidence, impedes meta-analyses, and ultimately slows the pace of scientific advancement, creating a pressing need for innovative solutions to effectively navigate and synthesize the ever-growing body of knowledge.

The limitations of manual literature review extend beyond simple time constraints; inherent biases and the potential for overlooking crucial findings become increasingly prominent as the volume of published research expands. Researchers, despite diligent efforts, inevitably apply subjective criteria when selecting studies for inclusion, potentially favoring results that align with pre-existing beliefs or overlooking research from less-prominent sources. This selection process, coupled with the cognitive limitations of processing vast quantities of information, means that critical insights – particularly those presented in unconventional formats or buried within extensive datasets – are at risk of being missed entirely. Consequently, even well-intentioned manual reviews may present an incomplete or skewed representation of the existing knowledge base, hindering accurate meta-analyses and potentially leading to flawed conclusions.

Automated literature review tools, while promising increased efficiency, frequently stumble when faced with the complexities of scholarly writing. These systems often rely on keyword matching or superficial statistical analysis, failing to grasp the subtle arguments, contextual dependencies, and methodological nuances crucial for accurate synthesis. A study of automated summarization techniques revealed a consistent inability to differentiate between supporting and contradictory evidence, leading to inaccurate conclusions and the potential for perpetuating flawed research. The challenge lies in replicating the human capacity for critical appraisal – understanding not just what a study reports, but how it frames its findings, acknowledges limitations, and relates to the broader body of knowledge. Consequently, automated approaches require significant refinement to move beyond simple data aggregation and achieve genuinely insightful knowledge synthesis.

The burgeoning volume of scientific literature presents a significant bottleneck to progress, demanding solutions that move beyond traditional, manually-driven reviews. A truly scalable approach isn’t simply about processing more papers; it requires a system capable of maintaining analytical rigor and minimizing subjective bias as it expands. Transparency is equally crucial, necessitating clear documentation of methods and criteria to ensure reproducibility and facilitate critical evaluation of findings. Ultimately, a reliable solution – one consistently delivering valid and comprehensive syntheses – promises to dramatically accelerate the pace of knowledge discovery, allowing researchers to build upon existing work with greater confidence and efficiency, and fostering innovation across disciplines.

LR-Robot: Augmenting the Human Lens

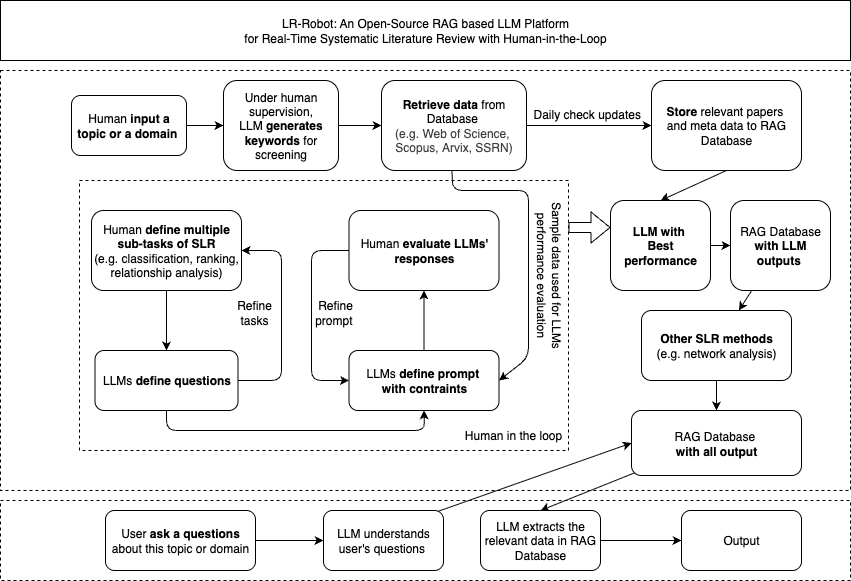

LR-Robot is a supervised-augmented framework intended to streamline and improve the systematic literature review process. This is achieved through the automation of key tasks traditionally performed manually, such as initial screening, data extraction, and summary generation. The “supervised” aspect indicates that the system’s outputs are designed for review and validation by human experts, ensuring accuracy and adherence to established review protocols. Augmentation refers to the system’s ability to enhance human reviewers’ efficiency and reduce potential bias, rather than replacing them entirely. The framework focuses on accelerating the early stages of a systematic review, allowing researchers to focus on critical analysis and synthesis of findings.

LR-Robot leverages Large Language Models (LLMs) to automate key initial stages of systematic literature review. Specifically, LLMs are employed to parse and interpret user-defined review requirements, facilitating the construction of effective search queries. These models then retrieve relevant literature from designated databases and synthesize the findings into concise summaries. This process significantly reduces the time required for screening and initial analysis, as LLMs can process a high volume of abstracts and full texts, identifying potentially relevant studies much faster than manual methods. The resulting summaries provide a condensed overview of the literature, accelerating the formulation of research questions and the development of review protocols.

Retrieval-Augmented Generation (RAG) is a technique employed within the LR-Robot framework to mitigate the potential for factual inaccuracies inherent in Large Language Model (LLM) outputs. RAG functions by first retrieving relevant documents from a knowledge source based on a given prompt or query. These retrieved documents are then concatenated with the original prompt and provided as context to the LLM. This process grounds the LLM’s response in external, verifiable information, thereby improving the accuracy and reliability of generated summaries and reducing the occurrence of hallucinations. By integrating information retrieval as a prerequisite to content generation, RAG enhances the overall quality and trustworthiness of the framework’s outputs.
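The retrieve-then-concatenate pattern described above can be sketched in a few lines. This is a minimal illustration, not LR-Robot's implementation: the bag-of-words cosine ranking stands in for whatever retriever the framework uses, and the names `retrieve`, `build_prompt`, and `CORPUS` are hypothetical. The resulting prompt would then be sent to an LLM, which is omitted here.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two token-count vectors (Counters)."""
    dot = sum(count * b[token] for token, count in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query, documents, k=2):
    """Return the k documents most similar to the query (toy retriever)."""
    q = Counter(tokenize(query))
    ranked = sorted(
        documents,
        key=lambda d: cosine(q, Counter(tokenize(d))),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(query, documents, k=2):
    """Concatenate the retrieved passages with the original query."""
    context = retrieve(query, documents, k)
    numbered = "\n".join(f"[{i + 1}] {doc}" for i, doc in enumerate(context))
    return (
        f"Context:\n{numbered}\n\n"
        f"Question: {query}\n"
        "Answer using only the context above."
    )


# Hypothetical mini-corpus, invented for illustration only.
CORPUS = [
    "Stochastic volatility models let the variance of returns change over time.",
    "The Black-Scholes model assumes constant volatility.",
    "Jump diffusion models add sudden price jumps to a diffusion process.",
]

print(build_prompt("Which model assumes constant volatility?", CORPUS, k=1))
```

Numbering the retrieved passages makes it possible to ask the model to cite them, which in turn makes a generated summary easier for a reviewer to check against its sources.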

The LR-Robot framework is architecturally divided into two primary layers: a Data Retrieval Layer and a Human-in-the-Loop Processing Layer. The Data Retrieval Layer is responsible for automated information acquisition from specified sources, utilizing keyword searches and database queries to compile a relevant corpus of literature. This layer functions independently, minimizing initial human intervention. Subsequently, the Human-in-the-Loop Processing Layer integrates expert review at critical junctures. Human experts validate the relevance of retrieved data, resolve ambiguities in LLM-generated summaries, and provide feedback to refine the system’s performance. This dual-layer approach balances the speed and scalability of automation with the accuracy and nuanced judgment of human oversight, ensuring both efficient processing and high-quality results.
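A rough sketch of how the two layers might fit together follows. Everything in it is an assumption made for illustration: the `Record` type, the keyword filter, the rule-based `propose_label` (a stand-in for an LLM call), and the `example_reviewer` callback (a stand-in for a human expert) are hypothetical and not taken from the paper.

```python
from dataclasses import dataclass


@dataclass
class Record:
    title: str
    abstract: str
    label: str = ""         # machine-proposed label, possibly corrected by the expert
    approved: bool = False  # set only after human review


def retrieval_layer(corpus, keywords):
    """Data Retrieval Layer: keep records that mention any keyword."""
    kws = [k.lower() for k in keywords]
    return [
        r for r in corpus
        if any(k in f"{r.title} {r.abstract}".lower() for k in kws)
    ]


def propose_label(record):
    """Stand-in for an LLM classification call (hypothetical rule)."""
    text = record.abstract.lower()
    return "stochastic volatility" if "stochastic volatility" in text else "other"


def human_in_the_loop_layer(records, reviewer):
    """Processing layer: the machine proposes, the reviewer accepts, corrects, or rejects."""
    reviewed = []
    for r in records:
        r.label = propose_label(r)
        decision = reviewer(r)  # corrected label, or None to reject the record
        if decision is not None:
            r.label = decision
            r.approved = True
            reviewed.append(r)
    return reviewed


def example_reviewer(record):
    """Hypothetical expert: rejects off-topic records, otherwise accepts the label."""
    if "option" not in record.abstract.lower():
        return None
    return record.label


corpus = [
    Record("Paper A", "We price an option under stochastic volatility."),
    Record("Paper B", "A survey of option hedging with constant volatility."),
    Record("Paper C", "Volatility of crop yields."),
    Record("Paper D", "Deep learning for image recognition."),
]

candidates = retrieval_layer(corpus, ["volatility"])
final = human_in_the_loop_layer(candidates, example_reviewer)
for r in final:
    print(r.title, "->", r.label)
```

Passing the reviewer in as a callback keeps the automated layer independent of how expert decisions are collected, whether through an interactive interface or a batch of stored annotations.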

Validating Insights: The Human Firewall

Human-in-the-Loop supervision is a core component of LR-Robot, functioning as a quality control mechanism to guarantee the validity and applicability of the system’s outputs. This process involves expert review of automatically generated summaries and insights, verifying factual accuracy and assessing whether the information is presented within the appropriate context of the source literature. By integrating human judgment, LR-Robot mitigates potential errors stemming from algorithmic limitations, such as misinterpretations of complex terminology or an inability to discern subtle nuances within research findings. The resulting validation ensures that the delivered insights are not only technically correct but also meaningfully relevant to the user’s specific information needs.

The LR-Robot framework incorporates expert validation as a critical safeguard against inaccuracies and misinterpretations inherent in automated literature review. Subject matter experts review generated summaries and insights, identifying and correcting errors in factual recall or logical reasoning. This process extends to prompt refinement; expert feedback is used to iteratively improve the precision and relevance of queries submitted to the language model. Furthermore, experts resolve ambiguities in the source material or in the model’s output, ensuring that nuanced information is not overlooked and that conclusions are appropriately qualified, thereby reducing the potential for flawed analysis when relying solely on automated systems.

The LR-Robot framework incorporates an interactive output visualization component designed to facilitate efficient review and evaluation of generated summaries and insights. This feature presents results in a readily digestible format, allowing users to quickly scan key findings and supporting evidence. The architecture supports multiple visualization methods, including tabular data displays, network graphs representing relationships between concepts, and highlighted text excerpts from source documents. Users can dynamically filter, sort, and drill down into the data, enabling detailed inspection of specific claims and the associated literature. This interactive approach minimizes the time required for validation and promotes a more thorough understanding of the generated insights.

The integration of human judgment into LR-Robot’s workflow establishes a feedback loop that strengthens the validity and reliability of generated insights. Experts reviewing system outputs not only correct inaccuracies but also provide contextual understanding that automated systems may lack, particularly when dealing with complex or ambiguous research. This iterative refinement of prompts and analyses, based on human expertise, moves beyond simple factual recall to enable nuanced interpretation of the literature, acknowledging the subtleties and limitations inherent in scientific findings and building user trust in the presented results.

Mapping the Landscape of Option Pricing

A comprehensive bibliometric network analysis of option pricing research was enabled through the application of LR-Robot, a tool designed for large-scale literature review. This analysis moved beyond simple citation counts, mapping the relationships between influential studies to reveal the intellectual structure of the field. By visualizing these connections, researchers were able to identify pivotal papers that served as foundational works and trace the evolution of key concepts. The network revealed emerging themes – such as the increasing focus on stochastic volatility and jump diffusion models – and highlighted the researchers and institutions driving innovation in option pricing theory and practice. Ultimately, this approach provided a nuanced understanding of the field’s development and pinpointed areas ripe for future investigation, offering a powerful tool for both scholars and practitioners.

A detailed network analysis of option pricing research revealed a clear evolution of thought, mapping the connections between seminal papers and emerging trends. This approach visualized how foundational models, such as the Black-Scholes framework, served as building blocks for increasingly complex methodologies like Stochastic Volatility models and beyond. The resulting network highlighted key intellectual lineages, demonstrating which papers were most influential in shaping subsequent research directions. By quantifying the relationships between studies, the analysis not only identified central works but also illuminated how ideas diffused and transformed within the field, offering a dynamic picture of intellectual progress and revealing previously obscured connections between seemingly disparate research efforts.
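To make the idea of quantifying relationships between studies concrete, here is a small sketch of two basic measures on a citation graph: how often each paper is cited, and which papers build directly or indirectly on a given work. The edge list and the identifiers `P1` to `P4` are invented placeholders, and the paper's own network metrics may differ.

```python
from collections import Counter, defaultdict, deque

# Hypothetical (citing, cited) pairs; P1 plays the role of a foundational paper.
CITATIONS = [
    ("P2", "P1"),
    ("P3", "P1"),
    ("P4", "P2"),
    ("P4", "P3"),
    ("P4", "P1"),
]


def citation_counts(edges):
    """In-degree of each paper: how many papers in the corpus cite it."""
    return Counter(cited for _, cited in edges)


def citing_lineage(edges, root):
    """All papers that cite `root` directly or through a chain of citations."""
    cited_by = defaultdict(set)
    for citing, cited in edges:
        cited_by[cited].add(citing)
    seen, queue = set(), deque([root])
    while queue:
        node = queue.popleft()
        for child in cited_by.get(node, ()):
            if child not in seen:
                seen.add(child)
                queue.append(child)
    return seen


print(citation_counts(CITATIONS).most_common(1))
print(sorted(citing_lineage(CITATIONS, "P2")))
```

In-degree is only the simplest notion of influence; the lineage traversal is what lets a review trace how a foundational idea propagates through later work.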

Research into option pricing has demonstrably evolved from its initial reliance on foundational models, most notably the Black-Scholes Model, toward increasingly sophisticated methodologies. Topic evolution analysis reveals a clear trajectory; while the Black-Scholes framework provided an essential starting point for understanding option valuation, its limitations in addressing real-world market complexities spurred the development of Stochastic Volatility Models. These newer models attempt to capture the dynamic and often unpredictable nature of volatility, a crucial factor in accurate pricing. This shift reflects a growing recognition that constant volatility, an assumption inherent in the Black-Scholes framework, is rarely observed in financial markets, prompting researchers to explore models incorporating time-varying volatility and other nuanced factors to improve predictive accuracy and risk management.
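For readers who want to see where the constant-volatility assumption enters, the standard Black-Scholes price of a European call on a non-dividend-paying asset can be written as a short function. It is included here only as background: the function and parameter names are illustrative, and the input values are arbitrary.

```python
import math


def norm_cdf(x):
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def black_scholes_call(spot, strike, maturity, rate, sigma):
    """European call price; `sigma` is one constant volatility for the option's whole life."""
    sqrt_t = math.sqrt(maturity)
    d1 = (math.log(spot / strike) + (rate + 0.5 * sigma ** 2) * maturity) / (sigma * sqrt_t)
    d2 = d1 - sigma * sqrt_t
    return spot * norm_cdf(d1) - strike * math.exp(-rate * maturity) * norm_cdf(d2)


# Arbitrary illustrative inputs: at-the-money, one year, 5% rate, 20% volatility.
price = black_scholes_call(spot=100.0, strike=100.0, maturity=1.0, rate=0.05, sigma=0.2)
print(round(price, 2))  # 10.45
```

The single `sigma` argument is the assumption that stochastic volatility models relax by letting volatility follow its own random process.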

The option pricing case study used Gemini 2.0 Flash as its language model and reported a high degree of internal consistency in model classification, with a score of 0.9472. This suggests the model assigns the pricing approaches discussed in the literature to categories consistently. To check accuracy as well as consistency, the AI's annotations of option underlying types were compared with those provided by human experts, yielding a mean Jaccard Similarity of 0.8185. This level of agreement indicates that the system can streamline the analysis of option pricing literature while producing annotations that align closely with expert judgment in this specialized field.
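The Jaccard similarity of two label sets is the size of their intersection divided by the size of their union, and the mean is taken over all annotated papers. The sketch below shows the calculation on invented labels; the data is not from the study, and treating two empty label sets as a perfect match is an assumption made here.

```python
def jaccard(a, b):
    """Jaccard similarity |A ∩ B| / |A ∪ B| of two label collections."""
    a, b = set(a), set(b)
    if not a and not b:
        return 1.0  # assumption: two empty annotations count as full agreement
    return len(a & b) / len(a | b)


def mean_jaccard(pairs):
    """Average Jaccard similarity over (ai_labels, human_labels) pairs."""
    scores = [jaccard(ai, human) for ai, human in pairs]
    return sum(scores) / len(scores)


# Hypothetical annotations of option underlying types for three papers.
ai_labels = [{"equity", "index"}, {"fx"}, {"commodity", "equity"}]
human_labels = [{"equity"}, {"fx"}, {"commodity", "equity"}]

score = mean_jaccard(list(zip(ai_labels, human_labels)))
print(round(score, 4))  # 0.8333
```

Because the measure compares sets, it gives partial credit when the AI and the expert agree on some but not all labels for a paper, which suits multi-label annotation better than exact-match accuracy.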

Towards a Living Knowledge Synthesis

The incorporation of a Knowledge Graph into the LR-Robot framework fundamentally improves its ability to synthesize information from complex research papers. Rather than treating text as unstructured data, the Knowledge Graph represents concepts, entities, and their relationships in a formalized, interconnected manner. This structured approach provides a robust foundation for factual grounding, enabling the system to verify claims and identify potential inconsistencies within the literature. Moreover, the Knowledge Graph dramatically enhances transparency; the relationships between different pieces of evidence are explicitly defined and traceable, allowing researchers to easily follow the reasoning behind the system’s conclusions. This, in turn, facilitates a deeper, more nuanced understanding of the existing body of knowledge and accelerates the process of identifying key insights and research gaps.

The construction of a knowledge graph within the literature review framework allows for a level of analytical rigor previously unattainable in systematic reviews. By representing research findings as interconnected nodes and relationships, the system doesn’t simply catalog information, but actively assesses it for internal coherence. This process reveals inconsistencies where different studies offer conflicting evidence, ambiguities in terminology or methodology, and, crucially, gaps in the existing body of knowledge where further research is needed. The identification of these issues isn’t a matter of simple keyword searches; instead, the system leverages the structured knowledge to perform a reasoned analysis, offering a more nuanced and insightful understanding of the current state of research and highlighting critical areas for future investigation.
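One way structured knowledge can surface conflicting evidence is sketched below. Findings are stored as (subject, relation, object, source) triples, and a contradiction is flagged when two sources assert a one-directional relation in opposite directions. The triples, the relation names, and the function `find_contradictions` are hypothetical; the framework's actual graph schema and reasoning are not specified at this level in the text.

```python
from collections import defaultdict

# Hypothetical findings extracted from papers: (subject, relation, object, source).
TRIPLES = [
    ("model_X", "outperforms", "model_Y", "paper_1"),
    ("model_Y", "outperforms", "model_X", "paper_2"),
    ("model_X", "assumes", "constant_volatility", "paper_1"),
]


def find_contradictions(triples, relation):
    """Find entity pairs where sources assert `relation` in both directions.

    Only meaningful for relations that should hold one way at most,
    such as "outperforms".
    """
    sources = defaultdict(set)
    for subj, rel, obj, src in triples:
        if rel == relation:
            sources[(subj, obj)].add(src)

    conflicts = []
    for (subj, obj), srcs in sources.items():
        # `subj < obj` reports each conflicting pair once instead of twice.
        if subj < obj and (obj, subj) in sources:
            conflicts.append(
                (subj, obj, sorted(srcs), sorted(sources[(obj, subj)]))
            )
    return conflicts


for subj, obj, forward, backward in find_contradictions(TRIPLES, "outperforms"):
    print(f"{subj} vs {obj}: {forward} disagree with {backward}")
```

Keeping the source on every triple is what makes a flagged conflict traceable: a reviewer can go straight to the two papers and judge whether the disagreement is real or an artifact of extraction.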

The long-term evolution of this research hinges on establishing dynamic knowledge synthesis, moving beyond static literature reviews to a continuously evolving understanding of scientific progress. This necessitates the development of algorithms capable of not only incorporating new publications into the existing knowledge graph, but also of critically evaluating their claims, resolving potential contradictions with prior findings, and updating the framework’s overall synthesis accordingly. Such a system would effectively ‘learn’ from the literature, refining its comprehension and identifying emerging trends in real-time, ultimately offering a far more nuanced and up-to-date perspective than traditional methods allow. This adaptive capacity promises to unlock a cycle of continuous discovery, where the framework itself becomes a proactive agent in knowledge creation and dissemination.

The systematic literature review, a cornerstone of evidence-based practice and scientific advancement, stands poised for significant transformation through frameworks like LR-Robot. By automating key aspects of the review process – including information extraction, synthesis, and critical appraisal – LR-Robot dramatically reduces the time and resources traditionally required. This acceleration isn’t merely about speed; it unlocks the potential for more frequent and comprehensive reviews, allowing researchers to stay current with rapidly evolving fields. Consequently, identification of emerging trends, replication of studies, and the pinpointing of research gaps become far more efficient, directly fostering innovation across disciplines ranging from medicine and engineering to social sciences and beyond. The ability to rapidly synthesize knowledge isn’t just a benefit to researchers, but holds the promise of informing policy decisions, driving technological advancements, and ultimately, improving outcomes in a multitude of sectors.

The LR-Robot framework, as detailed in the study, doesn’t merely apply existing knowledge; it actively dissects and reconstructs it through automated systematic reviews. This process resonates deeply with Aristotle’s assertion: “The ultimate value of life depends upon awareness and the power of contemplation rather than mere survival.” The framework’s capacity to rigorously examine option pricing research, building a knowledge graph from disparate sources, exemplifies this contemplative power. By challenging established understandings through AI-assisted analysis, the LR-Robot doesn’t simply summarize what is known, but investigates how knowledge is constructed and validated – a true exercise in reverse-engineering reality, pushing the boundaries of understanding within the field.

What Lies Beyond?

The automation of systematic literature review, as demonstrated by LR-Robot, isn’t simply about speed or scale. It’s about revealing the inherent biases within the process itself. One assumes a ‘systematic’ review eliminates subjectivity, but the framework’s success hinges on supervised learning, that is, on human-defined relevance. The interesting question isn’t whether the AI gets it ‘right’, but where its errors diverge from established consensus, and what those deviations might signify. Are flagged ‘irrelevant’ papers genuinely overlooked nuances, or simply anomalies within a tightly controlled narrative?

The current iteration rightly focuses on option pricing, a relatively well-defined domain. But pushing this framework toward more ambiguous fields (social sciences, perhaps) will expose its limitations, and potentially, its strengths. What happens when ‘relevance’ isn’t a clear-cut technical criterion, but a matter of interpretive debate? The framework’s architecture, with its emphasis on knowledge graphs and RAG, could become a tool not just for synthesis, but for actively mapping the fault lines of scholarly disagreement.

Ultimately, LR-Robot isn’t about replacing the researcher; it’s about externalizing the often-unconscious rules that govern their inquiry. The true next step isn’t better algorithms, but a deliberate attempt to break those rules – to systematically introduce controlled ‘noise’ and observe how the framework responds. Perhaps the ‘bug’ isn’t a flaw, but a signal, indicating a previously hidden structure within the literature itself.

Original article: https://arxiv.org/pdf/2603.17723.pdf

Contact the author: https://www.linkedin.com/in/avetisyan/

2026-03-19 09:02