Author: Denis Avetisyan

New research demonstrates how robots can infer task completion simply by observing where a person is looking, moving closer to more natural human-robot collaboration.

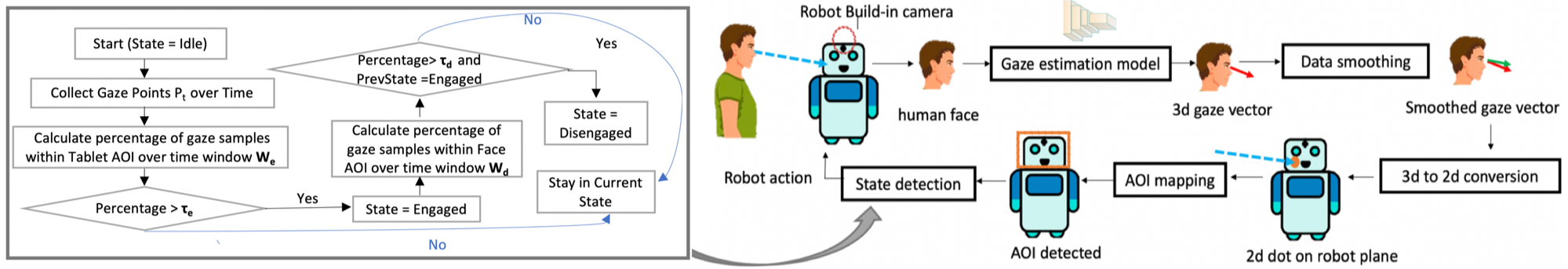

A calibration-free framework utilizes gaze estimation from RGB cameras to detect task progression based on shifts in attention between a display and the robot itself.

While effective human-robot interaction necessitates understanding user intent, reliance on specialized hardware often limits deployment in real-world settings. This research introduces a ‘Gaze-Aware Task Progression Detection Framework for Human-Robot Interaction Using RGB Cameras’ that leverages standard RGB cameras to infer task completion through natural gaze shifts, specifically transitions from a display interface to the robot’s face. The framework achieves 77.6% accuracy in detecting task completion and demonstrates improved comfort and social presence compared to traditional button-based interaction. Could this calibration-free, low-cost approach unlock more natural and engaging human-robot collaborations grounded in intuitive social cues?

The Illusion of Control: Beyond Explicit Commands

Conventional methods of human-robot interaction frequently necessitate explicit, pre-defined commands – a process often described as cumbersome and unnatural for users. These systems typically require individuals to learn a specific vocabulary of instructions, or to manipulate physical interfaces like joysticks or touchscreens, creating a barrier to seamless communication. This reliance on direct control diminishes the fluidity of interaction, forcing humans to adapt to the robot’s limitations rather than allowing the robot to adapt to human behavior. The resulting experience can feel robotic and disconnected, hindering the development of truly collaborative partnerships and limiting the potential for intuitive, efficient task completion. Consequently, researchers are actively exploring alternative input modalities that more closely align with natural human communication patterns, seeking to bridge the gap between human intent and robotic action.

Human communication relies heavily on subtle cues, particularly where one directs their gaze; this natural behavior offers a powerful, yet often overlooked, avenue for human-robot interaction. By interpreting where a person looks, robots can infer intent and focus with remarkable accuracy, circumventing the need for explicit commands or complex interfaces. This intuitive modality mirrors how humans naturally understand each other, allowing for a more seamless and efficient exchange of information. The potential extends beyond simple object selection; gaze can indicate approval, disagreement, or even predict future actions, creating a truly responsive and adaptive robotic partner. Consequently, researchers are increasingly focused on leveraging this inherent human capability to build robots that are not merely programmed, but truly understand.

The successful integration of gaze as a control mechanism hinges on the development of highly accurate gaze estimation techniques. Current research focuses not only on precisely determining where a user is looking, but also on mitigating the challenges presented by individual variations in eye anatomy, lighting conditions, and head movement. Sophisticated algorithms, often employing machine learning and computer vision, are being refined to filter noise and compensate for these factors, striving for a seamless and intuitive user experience. Achieving robust gaze tracking requires a delicate balance between computational efficiency – enabling real-time performance – and precision, ensuring that even subtle shifts in attention are reliably detected and translated into actionable commands for the robotic system. Ultimately, the reliability of these estimation techniques directly determines the usability and effectiveness of gaze-controlled interfaces.

![The proposed framework successfully transitions between displaying Page 1 and Page 2 based on gaze tracking, as demonstrated by smoothed gaze (green) aligning with original 3D gaze (red) in both third-person and robot perspectives across time.](https://arxiv.org/html/2603.15951v1/gazeovertime.png)

Unseen Signals: Appearance-Based Gaze Estimation

Appearance-based gaze estimation circumvents the limitations of traditional hardware-dependent methods, which rely on specialized sensors like infrared illuminators and cameras, by leveraging readily available RGB imagery. This approach directly maps visual features within a standard image to a user’s gaze direction, eliminating the need for costly and potentially obtrusive equipment. Consequently, appearance-based techniques offer increased portability, scalability, and compatibility with standard camera systems, enabling gaze tracking in a wider range of environments and applications. The performance of these systems relies on training deep learning models to identify correlations between facial appearance and gaze direction, requiring substantial datasets for effective generalization.

The L2CS-Net architecture employs a convolutional neural network (CNN) to regress gaze direction from a single RGB face image. A convolutional backbone extracts features, and two separate fully connected heads predict the yaw and pitch angles, which together define a 3D gaze direction. The network is trained end-to-end, and each head uses a combined objective: a cross-entropy loss over discretized angle bins plus a mean-squared-error regression term on the resulting angle. Robustness comes from the network’s ability to learn complex mappings between image appearance and gaze direction, which helps it handle variations in head pose, lighting conditions, and individual eye characteristics. The architecture’s depth and convolutional filters enable it to capture both local image features and global contextual information relevant for accurate gaze estimation.
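Some appearance-based estimators express gaze as yaw and pitch angles decoded from per-bin scores, and convert them to a 3D unit vector afterwards. The sketch below illustrates that decoding step in plain Python. The bin layout, the function names `decode_angle` and `angles_to_vector`, and the axis and sign convention are assumptions made for illustration, not details confirmed by the paper.

```python
import math

def decode_angle(logits, bin_width_deg, min_angle_deg):
    """Soft-argmax: expected angle in degrees over evenly spaced angle bins."""
    peak = max(logits)
    weights = [math.exp(v - peak) for v in logits]  # numerically stable softmax
    total = sum(weights)
    # Bin i covers [min + i*width, min + (i+1)*width); use its center.
    centers = [min_angle_deg + (i + 0.5) * bin_width_deg
               for i in range(len(logits))]
    return sum(w * c for w, c in zip(weights, centers)) / total

def angles_to_vector(yaw_deg, pitch_deg):
    """Unit gaze vector in an assumed camera frame (x right, y down, z forward).

    With this assumed convention, yaw 0 and pitch 0 point along -z,
    i.e. straight back towards the camera.
    """
    yaw, pitch = math.radians(yaw_deg), math.radians(pitch_deg)
    return (-math.cos(pitch) * math.sin(yaw),
            -math.sin(pitch),
            -math.cos(pitch) * math.cos(yaw))
```

Different codebases use different sign conventions, so the mapping should be checked against whichever estimator is actually used before the vector is fed to later stages.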

Effective training and validation of appearance-based gaze estimation models necessitate large-scale datasets such as Gaze360, which contains roughly 172,000 images of 238 subjects with corresponding 3D gaze annotations. The scale of these datasets is crucial for improving model generalization – the ability to accurately estimate gaze on previously unseen subjects and in diverse environments. Furthermore, large datasets help mitigate potential biases inherent in smaller, less representative collections of data, which could otherwise lead to skewed performance across different demographics or lighting conditions. Datasets like Gaze360 typically include variations in head pose, gaze direction, illumination, and subject characteristics, fostering the development of robust and unbiased gaze estimation systems.

Raw gaze estimates undergo several processing stages to enhance accuracy and stability. Gaze data smoothing, typically achieved through moving average filters or Kalman filtering, reduces noise and jitter inherent in the tracking process. Furthermore, 3D gaze direction, initially represented in a head-centered coordinate system, must be projected onto the 2D image plane using camera parameters – including focal length, principal point, and distortion coefficients – to establish correspondence between gaze direction and image locations. This projection accounts for perspective and ensures accurate alignment between the estimated gaze point and the visual field. Accurate camera intrinsics are therefore critical for this 3D-to-2D projection; they describe the camera itself and are distinct from the per-user gaze calibration that the framework avoids.
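The smoothing and projection steps can be sketched as follows. This is a minimal illustration that assumes a simple moving-average filter and an ideal pinhole camera with no lens distortion. The class and function names, the window size, and the intrinsics `fx`, `fy`, `cx`, `cy` are hypothetical and not taken from the paper.

```python
import math
from collections import deque

class MovingAverageGaze:
    """Averages the last `window` gaze vectors and renormalizes the result."""

    def __init__(self, window=5):
        self.buffer = deque(maxlen=window)  # oldest sample drops out automatically

    def update(self, gaze):
        self.buffer.append(tuple(gaze))
        n = len(self.buffer)
        mean = [sum(v[i] for v in self.buffer) / n for i in range(3)]
        norm = math.sqrt(sum(c * c for c in mean))
        if norm == 0.0:
            return tuple(gaze)  # vectors cancelled out; fall back to the raw sample
        return tuple(c / norm for c in mean)

def project_point(point, fx, fy, cx, cy):
    """Pinhole projection of a camera-frame 3D point (z > 0) to pixel coordinates."""
    x, y, z = point
    if z <= 0:
        raise ValueError("point must lie in front of the camera")
    return (fx * x / z + cx, fy * y / z + cy)

def gaze_arrow(eye, gaze, length, fx, fy, cx, cy):
    """Pixel endpoints of a gaze ray of the given length starting at the eye."""
    tip = tuple(e + length * g for e, g in zip(eye, gaze))
    return (project_point(eye, fx, fy, cx, cy),
            project_point(tip, fx, fy, cx, cy))
```

A Kalman filter would replace `MovingAverageGaze` where lag matters more than simplicity, and a real camera would also need its distortion coefficients applied before or after the projection.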

The Illusion of Rapport: Designing Natural Interaction

The ‘First Day at Work’ paradigm is utilized as a test environment for gaze-based human-robot interaction due to its inherent social complexities and reliance on nonverbal cues. This scenario involves a simulated onboarding process where a human participant interacts with a robot, requiring the interpretation of subtle communicative signals common in initial workplace encounters. Specifically, the paradigm allows researchers to analyze how users naturally employ gaze direction, duration, and frequency to establish rapport, signal understanding, and manage conversational turns – behaviors crucial for successful social interaction and readily observable within a realistic, structured setting. The focus on naturally occurring communication cues differentiates this approach from artificial interaction models and provides ecologically valid data for evaluating the effectiveness of gaze-based interfaces.

The Pepper robot was selected as the interactive agent to facilitate a realistic simulation of a new employee onboarding scenario. This humanoid robot provides a physical presence and utilizes both verbal and non-verbal communication cues, such as gestures and head movement, to enhance the naturalness of the interaction. Its capabilities include speech recognition and synthesis, allowing for dynamic dialogue, and its movement allows it to simulate realistic human behaviors within the defined interaction space. The selection of Pepper enables the study of human-robot interaction in a contextually relevant setting, moving beyond abstract testing environments and towards practical application in workplace scenarios.

Areas of Interest (AOIs) are spatially defined regions within the robot’s operational environment used to categorize a user’s gaze direction and subsequently infer their communicative intent. These AOIs are not arbitrary; they represent salient elements within the interaction scenario – such as the robot’s face, relevant objects on a desk, or specific points within a displayed visual aid. By tracking where a user is looking, the system can determine if they are attempting to establish eye contact with the robot, focusing on a shared object to discuss it, or referencing displayed information. This gaze-based analysis enables the robot to tailor its responses, initiating relevant dialogue, providing clarifying information, or acknowledging user focus, thus facilitating a more contextually appropriate and responsive interaction.
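One simple way to assign a gaze sample to an AOI is to compare the gaze direction with the direction from the eye to each AOI center and accept the closest match within an angular tolerance. The sketch below assumes that approach. The AOI names, positions, and tolerances in the usage example are made-up values, and the paper may define its AOIs differently.

```python
import math

def angle_between_deg(u, v):
    """Angle in degrees between two non-zero 3D vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    nu = math.sqrt(sum(a * a for a in u))
    nv = math.sqrt(sum(b * b for b in v))
    cos = max(-1.0, min(1.0, dot / (nu * nv)))  # clamp against rounding error
    return math.degrees(math.acos(cos))

def classify_aoi(eye, gaze, aois, default="elsewhere"):
    """Return the AOI whose center lies closest to the gaze ray.

    aois maps a name to (center_xyz, max_angle_deg). An AOI only matches
    when the angular error is within its own tolerance.
    """
    best, best_angle = default, None
    for name, (center, max_angle) in aois.items():
        to_target = tuple(c - e for c, e in zip(center, eye))
        angle = angle_between_deg(gaze, to_target)
        if angle <= max_angle and (best_angle is None or angle < best_angle):
            best, best_angle = name, angle
    return best

# Hypothetical layout in the camera frame, in metres.
AOIS = {
    "robot_face": ((0.0, 0.0, 0.0), 10.0),
    "display": ((0.4, 0.3, 0.2), 12.0),
}
EYE = (0.0, 0.0, 1.5)
print(classify_aoi(EYE, (0.0, 0.0, -1.0), AOIS))
```

An angular tolerance keeps the check independent of viewing distance, at the cost of treating each AOI as a cone rather than its true shape.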

Gaze-based turn-taking mechanisms enable users to non-verbally signal their intention to speak, facilitating a more natural conversational flow with the robotic agent. This is achieved by monitoring the user’s gaze direction; sustained eye contact, or a shift in gaze towards the robot, is interpreted as a cue indicating the user wishes to contribute. The system then allows the user to speak, minimizing latency and overlap in communication. Conversely, avoidance of eye contact or a disengaged gaze can signal the user’s desire to remain a listener. This bidirectional gaze-based signaling reduces the need for explicit verbal cues like “um” or “I’d like to say something,” resulting in a more fluid and efficient interactive experience.

Inferring Intent: Gaze-Based State Detection & Validation

Gaze-based state detection functions by monitoring a user’s visual focus within specifically defined Areas of Interest (AOIs). The system analyzes gaze patterns – including dwell time, frequency of fixations, and transitions between AOIs – to infer cognitive states related to task engagement. Prolonged gaze within an AOI typically indicates active processing of information, while a lack of gaze or frequent transitions may signal disengagement or confusion. This data is then used to dynamically adjust the robot’s behavior, ensuring interaction remains aligned with the user’s attentional state and preventing unnecessary or disruptive actions. The system doesn’t rely on explicit user input, offering a non-intrusive method for assessing user focus during human-robot collaboration.
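The gaze statistics named above can be computed from a per-frame sequence of AOI labels. The following is a minimal sketch under that assumption. It counts runs of consecutive frames as "visits", which is only a rough proxy for true fixations, and the function name and label strings are hypothetical.

```python
from collections import Counter

def gaze_metrics(labels, frame_dt):
    """Summarize a per-frame AOI label sequence.

    labels: iterable of AOI names, one per frame.
    frame_dt: duration of one frame in seconds.
    Returns (dwell seconds per AOI, visit counts per AOI, transition counts).
    """
    dwell, visits, transitions = Counter(), Counter(), Counter()
    previous = None
    for label in labels:
        dwell[label] += frame_dt
        if label != previous:
            visits[label] += 1  # a new run of frames on this AOI begins
            if previous is not None:
                transitions[(previous, label)] += 1
            previous = label
    return dwell, visits, transitions

frames = ["display"] * 3 + ["robot_face"] * 2 + ["display"]
dwell, visits, transitions = gaze_metrics(frames, frame_dt=0.5)
print(dict(dwell), dict(visits), dict(transitions))
```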

Task completion detection relies on analyzing shifts in the user’s gaze pattern. The system monitors gaze transitions away from areas of interest (AOIs) relevant to the current interaction step, interpreting these transitions as signals that the user has finished processing information or completing an action. This allows the robot to avoid prematurely advancing to the next step and ensures a more fluid interaction sequence. By accurately identifying these gaze-based cues, the robot can dynamically adjust its behavior and proceed only when the user demonstrably indicates readiness, contributing to a more natural and efficient human-robot collaboration.
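A transition rule of this kind can be sketched as a small state machine: the user must first spend enough time on the display, and a sustained look at the robot's face then counts as the completion signal. The thresholds, label names, and class below are illustrative assumptions. The paper's actual decision rule is not reproduced here.

```python
class CompletionDetector:
    """Fires once when enough display gaze is followed by sustained robot gaze."""

    def __init__(self, read_frames=15, confirm_frames=5,
                 display="display", robot="robot_face"):
        self.read_frames = read_frames        # minimum frames spent on the display
        self.confirm_frames = confirm_frames  # consecutive frames on the robot
        self.display = display
        self.robot = robot
        self.display_count = 0
        self.robot_run = 0

    def update(self, label):
        """Feed one per-frame AOI label; returns True when completion is detected."""
        if label == self.display:
            self.display_count += 1
            self.robot_run = 0
        elif label == self.robot:
            self.robot_run += 1
            if (self.display_count >= self.read_frames
                    and self.robot_run >= self.confirm_frames):
                # Reset so the next page needs its own reading phase.
                self.display_count = 0
                self.robot_run = 0
                return True
        else:
            self.robot_run = 0  # looking elsewhere breaks the confirmation run
        return False
```

Requiring a reading phase before the confirmation run is one way to keep a brief early glance at the robot from advancing the page. Whether such a guard matches real user behavior would need to be checked against recorded interactions.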

Following each human-robot interaction, a memory task is employed to quantitatively evaluate the effectiveness of information delivery. This task requires users to recall specific details presented during the interaction, providing a direct measure of comprehension and retention. Performance on the memory task – typically assessed through question-and-answer format – serves as a key performance indicator (KPI) for the system, allowing for iterative refinement of interaction strategies and content presentation. Data from the memory task is correlated with gaze patterns and task completion metrics to establish a comprehensive understanding of factors influencing user understanding and engagement.

The combination of gaze-based state detection and task completion signaling via gaze transitions, evaluated alongside a post-interaction memory assessment, enables robots to interpret user engagement and identify when interaction steps are complete, facilitating a more fluid and responsive dialogue. In real-time human-robot interaction scenarios, the system detected task completion with 77.6% accuracy, suggesting a pathway towards more natural and intuitive robotic interfaces.

![Gaze-based interaction demonstrated comparable memory task accuracy and user experience to button-based interaction, with significant differences observed in attention allocation and page duration, as revealed by behavioral and visual attention data (*p<0.05, **p<0.01, ***p<0.005).](https://arxiv.org/html/2603.15951v1/result2.jpg)

The pursuit of calibration-free systems, as demonstrated by this research into gaze-aware task progression, echoes a fundamental truth about complex arrangements. The framework doesn’t impose understanding; it observes the natural shifts in attention, interpreting the silent language of visual focus. It’s a subtle choreography, a recognition that intention reveals itself through action, not decree. As John McCarthy once observed, “Every architectural choice is a prophecy of future failure.” This work acknowledges that rigid, overly-engineered solutions are brittle, while a system attuned to natural cues, like the transition of gaze from display to robot, offers a more resilient, evolving interaction. The beauty lies in accepting imperfection, in building systems that grow with, rather than control, the human element.

What Lies Ahead?

This work, in its attempt to bridge the gap between human intention and robotic action through gaze, merely highlights how readily the field accepts proxies for understanding. A calibration-free system is, of course, appealing – but it’s a retreat from precision, a willingness to settle for ‘good enough’ when the true problem remains: robots cannot know what a human intends, only infer it from imperfect signals. Scalability is just the word used to justify complexity, to mask the fact that each added layer of inference invites further opportunity for misinterpretation.

The reliance on task completion as a binary state feels particularly limiting. Human activity is rarely so neat. What of partial completion? Of interrupted tasks? Of the subtle negotiation that often occurs during collaborative work? The system, in its current form, measures an outcome, not a process. Everything optimized will someday lose flexibility. A more fruitful avenue might lie in modeling the uncertainty inherent in these interactions, allowing the robot to respond not with certainty, but with carefully calibrated queries.

The perfect architecture is a myth to keep people sane. This framework, like all others, will eventually succumb to the unpredictable nature of human behavior. The real challenge isn’t building a system that detects task completion, but one that gracefully accepts its own fallibility, and learns from the inevitable moments when gaze shifts betray no clear intention. It is in those moments, not in the successful completions, that the true potential of human-robot collaboration resides.

Original article: https://arxiv.org/pdf/2603.15951.pdf

Contact the author: https://www.linkedin.com/in/avetisyan/

2026-03-18 07:58