Author: Denis Avetisyan

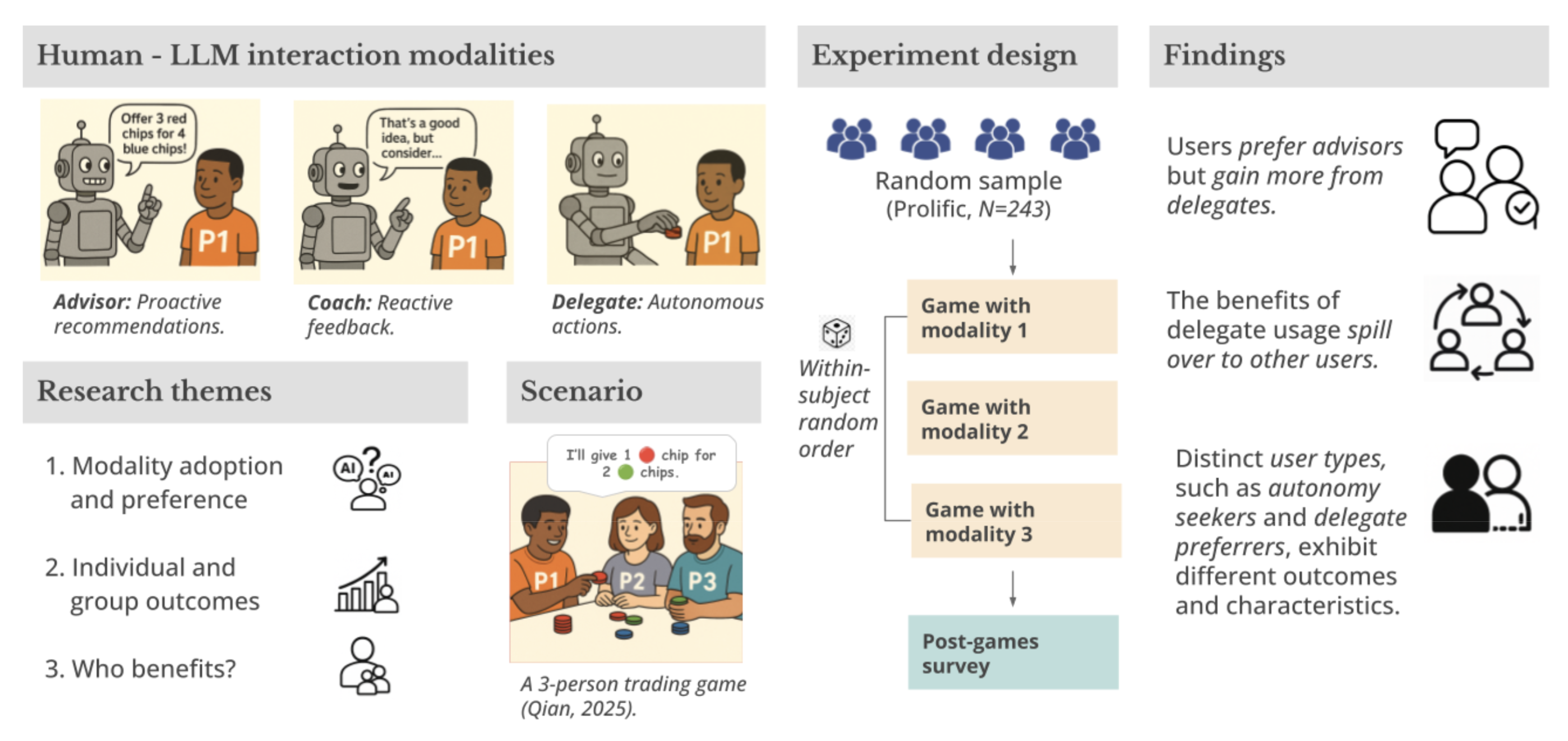

New research explores how different levels of AI involvement in multi-party bargaining, from advice to full delegation, affect strategic choices and overall outcomes.

![Delegate agents improve trade outcomes by proposing offers that yield a statistically significant increase in receiver surplus [latex] (p<0.01) [/latex], a shift not observed in trades without AI assistance, suggesting a capacity for mutually beneficial negotiation.](https://arxiv.org/html/2602.12089v1/figs/spillover.png)

A study of agentic AI assistance in bargaining games reveals that while humans prefer retaining control, delegating fully to AI maximizes collective surplus.

While increasingly capable AI agents promise to enhance strategic interactions, realizing collective welfare gains hinges on user adoption and interface design. This research, ‘Choose Your Agent: Tradeoffs in Adopting AI Advisors, Coaches, and Delegates in Multi-Party Negotiation’, investigates how different levels of AI assistance, ranging from proactive advice to autonomous action, affect outcomes in multi-player bargaining games. Participants achieved the highest gains when fully delegating to an AI agent, yet they preferred modes that left them more control over the negotiation, revealing a misalignment between perceived utility and actual performance. How can assistance modalities be designed to encourage adoption and unlock the full potential of AI-driven surplus maximization in complex strategic settings?

Deconstructing the Human-AI Negotiation Paradox

Conventional approaches to negotiation, honed for two-party interactions, frequently struggle when scaled to multi-party scenarios. The number of possible coalitions grows exponentially with the number of parties, and together with shifting priorities and information asymmetry, this quickly overwhelms human cognitive capacity. That limitation creates an opportunity for artificial intelligence to act as a supportive agent, not necessarily replacing human negotiators but augmenting their abilities. AI systems can process large amounts of information quickly and, in principle, help identify strong strategies and anticipate other parties' behavior. The potential payoff is twofold: better negotiation outcomes, in the form of more favorable agreements, and a more efficient process that reduces the time and resources spent in increasingly complex collaborative environments.

The effectiveness of AI in complex negotiation depends not only on its computational power but, critically, on how humans integrate with and respond to its assistance. Providing AI-driven suggestions does not by itself guarantee better results; over-reliance on AI advice, or misinterpretation of it, can lead to suboptimal outcomes. Humans bring biases, emotional responses, and strategic considerations that AI models may not fully account for, creating a disconnect between suggested actions and effective negotiation tactics. Unlocking the potential of AI as a collaborative negotiation partner therefore requires a nuanced understanding of the human-AI interaction, including trust, cognitive load, and people's ability to filter and apply AI insights appropriately.

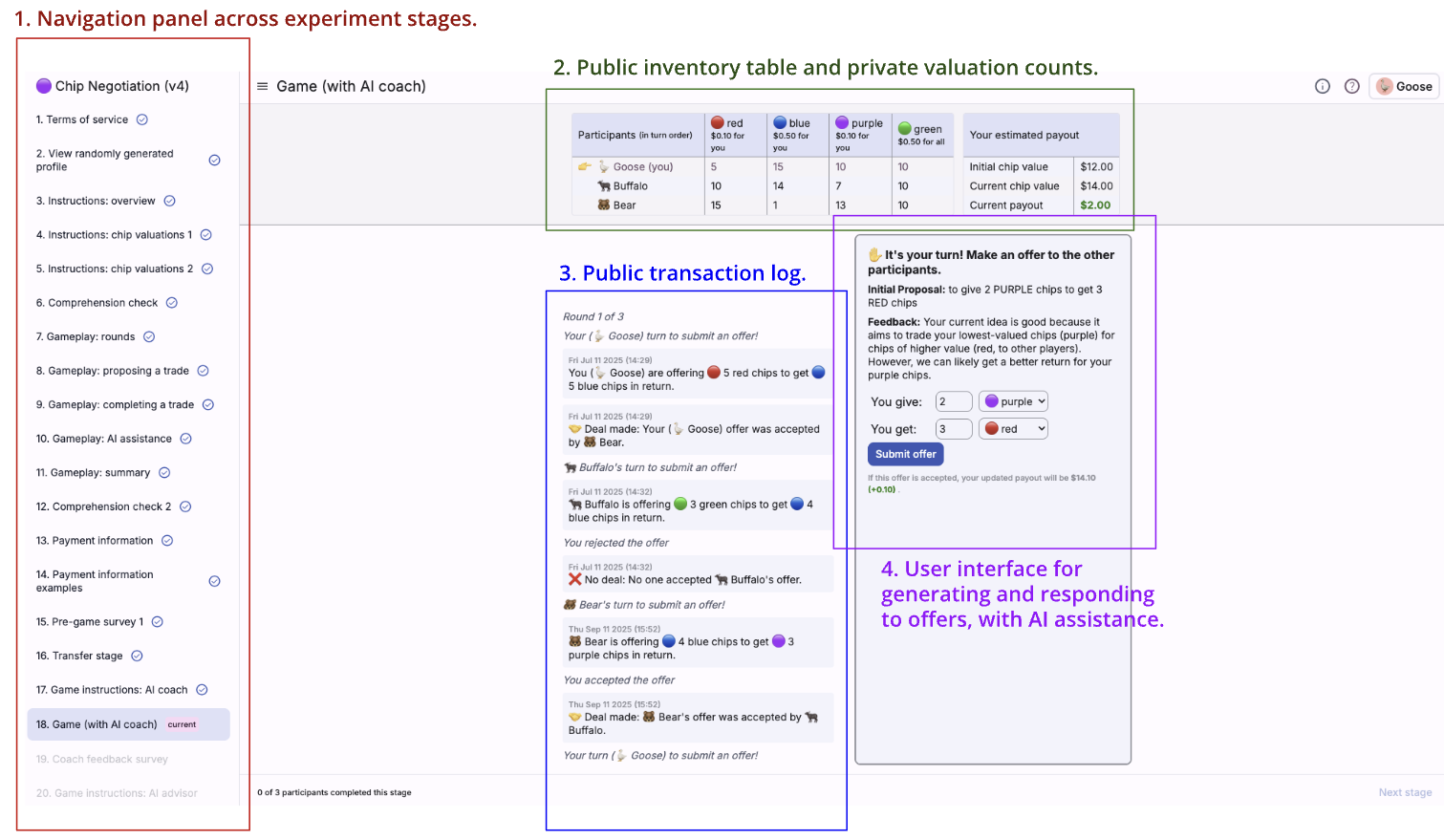

The core of this investigation is a ‘Chip Bargaining Game’, designed to simulate the dynamics of AI-assisted multi-party negotiation. Players allocate a limited pool of ‘chips’ by proposing trade offers to one another, with AI assistance available in different modes. This controlled, gamified environment lets researchers isolate and analyze the shifts in strategy, communication, and trust that emerge when humans negotiate with AI support. The game's simplicity allows many repetitions with varied parameters, producing a robust dataset for identifying patterns and, ultimately, for bridging the gap between the anticipated benefits and the actual outcomes of AI assistance in more complex real-world negotiations.
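To make the surplus bookkeeping concrete, here is a minimal Python sketch of how a single chip trade could be scored. It assumes, for illustration only, that each player privately values each chip color at a fixed per-chip amount and that an offer swaps some quantity of one color for some quantity of another. The names `Offer`, `proposer_surplus`, `receiver_surplus`, and `is_acceptable`, as well as the acceptance rule, are hypothetical and not taken from the paper.

```python
from dataclasses import dataclass

# Hypothetical model: a player's private value for one chip of each color.
Valuation = dict[str, float]


@dataclass(frozen=True)
class Offer:
    give_color: str  # color the proposer hands over
    give_qty: int
    get_color: str   # color the proposer asks for in return
    get_qty: int


def proposer_surplus(v: Valuation, o: Offer) -> float:
    """Change in the proposer's chip value (v = proposer's valuation) if accepted."""
    return o.get_qty * v[o.get_color] - o.give_qty * v[o.give_color]


def receiver_surplus(v: Valuation, o: Offer) -> float:
    """Change in the receiver's chip value (v = receiver's valuation) if accepted."""
    return o.give_qty * v[o.give_color] - o.get_qty * v[o.get_color]


def is_acceptable(v: Valuation, o: Offer) -> bool:
    """Assumed rule: a self-interested receiver accepts any trade that does not lose value."""
    return receiver_surplus(v, o) >= 0


if __name__ == "__main__":
    proposer = {"red": 1.0, "blue": 2.0}  # illustrative values, not study data
    receiver = {"red": 3.0, "blue": 1.0}
    offer = Offer(give_color="red", give_qty=2, get_color="blue", get_qty=2)
    print(proposer_surplus(proposer, offer))  # 2.0
    print(receiver_surplus(receiver, offer))  # 4.0
    print(is_acceptable(receiver, offer))     # True
```

Because the two players value the colors differently, the same swap raises both of their totals (2.0 for the proposer, 4.0 for the receiver); this is the kind of mutually beneficial trade that increases receiver surplus rather than merely shifting value between players.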

The study used Deliberate Lab, a purpose-built platform for capturing nuanced behavioral data during complex negotiations. It let researchers track every interaction within the ‘Chip Bargaining Game’, recording not only final agreements but also message timing, the specific language used, and shifts in strategy over time. Beyond logging, the platform supported real-time analysis, so patterns could be observed as participants, both human players and AI agents, engaged in bargaining. This granular data was essential for identifying the communication dynamics behind success or failure in human-AI negotiation, revealing the gaps that hinder effective collaboration and informing strategies to bridge them.

Orchestrating Assistance: Three Modes of Interaction

The AI assistance system utilizes Large Language Model (LLM) Agents, specifically instantiated with the ‘Gemini-2.5-Flash’ model. These agents are integrated to offer a spectrum of support to human players, ranging from simple suggestions to full autonomy. The ‘Gemini-2.5-Flash’ model was selected for its balance of performance and efficiency, enabling real-time interaction within the application. The LLM Agents function as the core logic for all assistance modes, processing game state information and generating appropriate responses or actions based on the chosen level of intervention.

The ‘Advisor Mode’ functions by providing suggestions to the human player without directly controlling actions, thereby preserving user agency. This approach recognizes that while AI assistance can be valuable, presenting excessive or poorly timed information can increase cognitive load and hinder performance. Suggestions are designed to be non-intrusive and offer potential options for consideration, allowing the user to evaluate and ultimately determine the course of action. The system prioritizes minimizing the burden on the user’s working memory and decision-making processes, offering support without relinquishing control.

Coach Mode provides evaluative feedback to the human player before an action is executed. Unlike reactive assistance, it tries to influence a decision before the choice is made, with the goal of improving decision quality. The system analyzes the current game state and candidate actions, then generates feedback highlighting the advantages and disadvantages of each option. The aim is to steer the player toward better strategies without controlling their actions, fostering skill development and strategic thinking.

Delegate Mode is fully automated: the AI agent, powered by the Gemini-2.5-Flash LLM, executes actions without direct human intervention. This behavior is achieved through careful prompt engineering that defines the agent's operational scope and decision-making process. The prompts are structured so that the agent interprets the game state, formulates a plan, and enacts it through a defined set of actions. While the mode is hands-free for the user, its effectiveness depends directly on the quality and specificity of these prompts.
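The practical difference between the three modes comes down to who commits the final action. The following Python sketch shows one way a game state could be routed through them. The LLM is deliberately abstracted as a plain `prompt -> text` function rather than a real Gemini client call, and the `Mode` enum, the `assist` function, and the prompt wording are hypothetical placeholders, not the study's actual prompts or code.

```python
from enum import Enum
from typing import Callable, Optional


class Mode(Enum):
    ADVISOR = "advisor"    # suggests an offer; the human decides
    COACH = "coach"        # critiques the human's draft before it is sent
    DELEGATE = "delegate"  # the agent acts on the human's behalf


# Stand-in for an LLM call (e.g. to Gemini-2.5-Flash): any prompt -> text function.
LLM = Callable[[str], str]


def assist(mode: Mode, game_state: str, llm: LLM,
           human_draft: Optional[str] = None) -> dict:
    """Return text to show the human and/or an action to execute directly."""
    if mode is Mode.ADVISOR:
        suggestion = llm(f"Game state:\n{game_state}\nSuggest one trade offer.")
        return {"shown_to_human": suggestion, "action": None}  # human still chooses
    if mode is Mode.COACH:
        if human_draft is None:
            raise ValueError("Coach mode needs the human's draft action")
        feedback = llm(f"Game state:\n{game_state}\nCritique this offer: {human_draft}")
        return {"shown_to_human": feedback, "action": None}  # human may revise
    # Delegate: the agent's output is submitted without human review.
    action = llm(f"Game state:\n{game_state}\nReturn the offer to submit.")
    return {"shown_to_human": None, "action": action}


if __name__ == "__main__":
    fake_llm = lambda prompt: "give 2 red for 2 blue"  # placeholder model
    print(assist(Mode.DELEGATE, "round 1", fake_llm))
```

Only the Delegate branch returns an `action` to execute; the Advisor and Coach branches return text for the human, who keeps the final say.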

Decoding the Disconnect: Performance Versus Preference

Analysis of player choices reveals a substantial gap between stated preference and measured performance. While 43.7% of participants chose the Advisor Mode, the Delegate Mode produced the highest ‘Scaled Group Surplus’, 0.084. Players therefore did not favor the mode that maximized quantifiable outcomes, suggesting that factors beyond surplus maximization shaped their choices.

The Delegate Mode's ‘Scaled Group Surplus’ of 0.084 was the highest observed across all tested modes. This metric quantifies the overall benefit realized by all participants, counting both adopters and non-adopters of the assistance. However, after statistical correction for multiple comparisons the difference was not significant, meaning it could be attributable to random variation rather than a genuine effect of the Delegate Mode. Even so, the size of the surplus suggests that fully autonomous delegation may substantially improve collective outcomes.
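The article does not say which multiple-comparison correction the authors applied, so the following Python sketch is only an illustration using a swapped-in method, Holm's step-down (Holm-Bonferroni) procedure, with hypothetical p-values rather than the study's. It shows how a comparison that looks significant on its own (p = 0.03 < 0.05) can fail once three comparisons are corrected jointly.

```python
def holm_bonferroni(pvals: list[float], alpha: float = 0.05) -> list[bool]:
    """Holm's step-down procedure: True where the null hypothesis is rejected."""
    m = len(pvals)
    order = sorted(range(m), key=lambda i: pvals[i])  # indices, smallest p first
    reject = [False] * m
    for rank, i in enumerate(order):
        # Compare the k-th smallest p-value (k = rank + 1) with alpha / (m - rank).
        if pvals[i] <= alpha / (m - rank):
            reject[i] = True
        else:
            break  # stop at the first failure; all larger p-values are retained
    return reject


if __name__ == "__main__":
    # Hypothetical p-values for three mode-vs-baseline comparisons (not study data).
    pvals = [0.03, 0.20, 0.40]
    print([p < 0.05 for p in pvals])  # uncorrected: [True, False, False]
    print(holm_bonferroni(pvals))     # corrected:   [False, False, False]
```

With three comparisons, the smallest p-value must clear 0.05 / 3 ≈ 0.0167, so 0.03 no longer counts as significant, which mirrors the "largest effect, but not significant after correction" situation described above.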

User preference data indicate that players prioritize perceived control and transparency in the decision-making process, even at the cost of objectively worse outcomes. Although the Delegate Mode offered the greatest potential for collective gain, players preferred the Advisor Mode. This suggests that participants value understanding how decisions are reached, and retaining agency, enough to outweigh the benefit of maximizing surplus: they appear willing to trade objective gains for a subjective sense of control and comprehension.

This pattern is consistent with ‘Algorithm Aversion’: despite the Delegate Mode's higher potential surplus, players were reluctant to hand full control to the autonomous system. The aversion showed up as a preference for the Advisor Mode, chosen by 43.7% of participants, which keeps a human in the loop to oversee and intervene in the decision-making process.

The Delegate Mode also produced substantial positive externalities: 48.8% of the total surplus it generated went to players who had not adopted it. In other words, the benefits of delegated decision-making extended beyond the users who chose it, creating spillover effects that improved outcomes for non-adopters as well as adopters.
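A spillover share like the 48.8% figure is a simple ratio: the surplus gained by non-adopters divided by the total surplus gained. The Python sketch below assumes per-player surplus gains are already available; the function name `spillover_share`, the player labels, and the numbers are invented for illustration, and the study's exact accounting may differ.

```python
def spillover_share(gains: dict[str, float], adopters: set[str]) -> float:
    """Fraction of the total surplus gain that accrues to players outside `adopters`."""
    total = sum(gains.values())
    if total == 0:
        raise ValueError("total gain is zero; share is undefined")
    to_non_adopters = sum(g for player, g in gains.items() if player not in adopters)
    return to_non_adopters / total


if __name__ == "__main__":
    # Illustrative gains for one hypothetical group; only player "A" used Delegate Mode.
    gains = {"A": 3.0, "B": 1.0, "C": 1.0}
    print(spillover_share(gains, adopters={"A"}))  # 0.4
```

Here the two non-adopters gain 2.0 of the 5.0 total, a 40% spillover share; the study's 48.8% means that nearly half of the Delegate Mode's surplus landed outside the adopter group.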

Re-Engineering Trust: Implications for AI Design and Future Research

The study shows a clear user preference for AI operating in the ‘Advisor’ mode, even though it yielded less surplus than the more autonomous Delegate mode. This suggests that perceived control and transparency matter more for user acceptance than purely objective measures of success. Participants appear to value understanding how an AI arrives at a recommendation, and retaining final decision-making authority, over simply receiving the best outcome. AI design should therefore prioritize explainability and user agency, building systems that empower people rather than replace their judgment, even at some cost in efficiency. This human-centered focus is crucial for building trust and for broad adoption of AI assistance in complex, real-world scenarios.

Effective integration of AI into complex decision-making depends on minimizing cognitive load and fostering user trust. Even highly capable AI systems can be rejected if they overwhelm users with information or fail to justify their recommendations. A key challenge is presenting AI insights in a digestible form, so that people can follow the reasoning behind a suggestion without excessive mental effort. Trust also requires transparency: users need to see the AI as a reliable partner, understand its limitations, and have confidence in its objectivity. Designers must therefore let users scrutinize the AI's logic and retain agency over final decisions, paving the way for broad acceptance and productive collaboration between humans and AI.

A central challenge for future artificial intelligence development lies in harmonizing system recommendations with individual user preferences without compromising overall effectiveness. Current research suggests a willingness to accept slightly suboptimal outcomes from AI if the system demonstrably respects user input and perceived control; however, sustaining this balance requires innovative algorithmic approaches. Investigations should focus on techniques like preference elicitation – actively learning what a user values – and incorporating those insights into recommendation models. Furthermore, exploring methods for explaining why an AI suggests a particular course of action, even when it differs from a user’s initial inclination, could foster trust and encourage acceptance. Ultimately, successful AI assistance hinges not merely on achieving optimal solutions, but on collaboratively reaching outcomes that align with both objective criteria and subjective user values, demanding a shift towards more nuanced and adaptable AI systems.

A comprehensive understanding of how artificially intelligent agents influence human negotiation capabilities and broader social interactions requires sustained investigation. As AI increasingly mediates complex discussions – from business deals to personal disputes – it is critical to determine whether reliance on these systems erodes essential human skills such as persuasion, empathy, and conflict resolution. Research must extend beyond immediate task performance to assess the long-term consequences on individuals’ ability to navigate social dynamics independently, and to anticipate potential shifts in communication patterns and trust-building processes within communities. Ultimately, discerning the subtle yet profound effects of AI-mediated negotiation is paramount to fostering responsible innovation and ensuring that these technologies augment, rather than diminish, fundamental aspects of human social intelligence.

![Analysis of negotiation rounds reveals a statistically significant increase in assistance usage [latex] (p < 0.001) [/latex], indicating participants increasingly relied on AI support as negotiations progressed.](https://arxiv.org/html/2602.12089v1/figs/AIUsageRound.png)

The study highlights a tension between the human preference for control and the potential for better outcomes through AI delegation. This echoes Donald Knuth’s observation: “Premature optimization is the root of all evil.” While participants intuitively favored retaining agency in the negotiation, choosing modes in which the AI acted as an advisor or coach, handing control to a fully delegated AI agent produced the largest collective surplus. Holding rigidly to control, in effect prematurely optimizing for a sense of agency, can get in the way of the most beneficial result, a key finding about strategic interdependence in bargaining games. The work quietly argues for challenging preconceived notions of control, much as Knuth warned against optimizing before the problem is thoroughly understood.

Beyond the Bargain: Charting Future Directions

The observed preference for retaining control, even at a cost in surplus, suggests a deeper resistance than mere loss aversion. It is not simply that humans dislike relinquishing agency; the data hint at a fundamental distrust of opaque decision-making, even when it is presented as beneficial. Real reassurance comes not from the promise of maximized surplus but from understanding how that surplus is achieved. Future work must move beyond asking whether to delegate and focus on building interfaces that reveal the rationale behind AI proposals, allowing users to dissect, challenge, and ultimately learn from their AI counterparts.

This research implicitly acknowledges that ‘rationality’ is not a fixed point, but a moving target, shaped by the interplay of information, control, and trust. A critical next step involves exploring how different levels of explainability impact user acceptance of AI delegation, and whether this acceptance varies across cultures or cognitive styles. The study currently treats the ‘surplus’ as the ultimate metric; however, a more nuanced analysis should investigate the psychological costs and benefits associated with different negotiation outcomes, even when objectively equivalent.

Ultimately, the pursuit of AI assistance in negotiation is not about replacing human strategists, but about augmenting their capabilities. The real challenge lies not in building agents that can win, but in building systems that help humans become better negotiators themselves. The long game isn’t about maximizing a single outcome, it’s about reverse-engineering the art of strategic interaction, and that requires a level of transparency this research only begins to address.

Original article: https://arxiv.org/pdf/2602.12089.pdf

Contact the author: https://www.linkedin.com/in/avetisyan/

2026-02-14 18:27