Author: Denis Avetisyan

Researchers have developed a new odometry framework enabling quadruped robots to accurately estimate their pose and movement using only internal sensors and foot contact information.

This work introduces a contact-anchored proprioceptive odometry system leveraging inverse kinematics and a cubature Kalman filter for robust state estimation in legged robots.

Accurate and robust state estimation remains a key challenge for legged robots operating without external sensing. This paper introduces a novel proprioceptive odometry framework, ‘Contact-Anchored Proprioceptive Odometry for Quadruped Robots’, which leverages intermittent footfall contacts as kinematic anchors to suppress drift and improve pose estimation. By treating each contacting leg as a constraint and employing an inverse-kinematics cubature Kalman filter, the method achieves substantial improvements in accuracy across diverse quadruped and wheel-legged platforms. Could this contact-anchored approach unlock more reliable and autonomous navigation for legged robots in complex, real-world environments?

The Inevitable Drift: Why Robots Struggle to Know Where They Are

Autonomous robots rely heavily on accurate state estimation – a continuous process of determining the robot’s position and orientation within its environment – but this is inherently susceptible to drift. Imperfections in sensor readings, commonly referred to as noise, and the inevitable accumulation of small errors over time, gradually degrade the precision of pose estimation. This phenomenon manifests as a divergence between the robot’s perceived location and its actual location, potentially leading to navigation failures or inaccurate mapping. Consequently, maintaining long-term consistency in state estimation is a fundamental challenge in robotics, demanding sophisticated algorithms and sensor fusion techniques to mitigate the effects of noise and accumulated error and ensure reliable autonomous operation.

Conventional localization techniques frequently encounter difficulties in sustaining accuracy over extended periods, particularly when navigating challenging landscapes or environments with moving elements. These methods often rely on integrating incremental measurements from sensors – a process susceptible to the accumulation of errors stemming from noise and imperfect data. Consequently, even minor inaccuracies in each measurement can compound over time, leading to significant drift in the estimated robot pose. Complex terrains, characterized by uneven surfaces and limited visual features, exacerbate this issue by reducing the reliability of sensor data. Similarly, dynamic environments – those containing moving obstacles or people – introduce further uncertainty, as previously mapped features may change position, rendering traditional map-matching approaches ineffective and hindering the robot’s ability to maintain a consistent and accurate understanding of its location.

The demand for truly autonomous robotic systems hinges on the ability to accurately determine a robot’s position and orientation – its ‘pose’ – without relying on external infrastructure like GPS or motion capture systems. Traditional localization methods frequently falter over extended operation due to the inevitable accumulation of errors from imperfect sensor readings and environmental factors. Consequently, researchers are actively developing innovative odometry techniques that utilize onboard sensors – accelerometers, gyroscopes, and wheel encoders – to continuously estimate pose changes. These approaches aim to build a self-contained localization system, allowing robots to navigate and operate reliably in diverse and unpredictable environments, from sprawling warehouses to challenging outdoor terrains, all while maintaining a consistent and drift-free understanding of their own location.

A novel localization system, designed for robust and drift-resistant robot navigation, utilizes proprioceptive data – information derived from the robot’s internal sensors regarding joint angles, velocities, and accelerations. This approach effectively minimizes the accumulation of errors common in traditional odometry, enabling more accurate pose estimation over extended periods. Rigorous testing demonstrates the system achieves a horizontal loop error of just 0.1638 meters, a performance level directly comparable to that of the commercially available Unitree Go2 EDU quadrupedal robot, signifying a significant advancement in self-contained robot localization capabilities and opening pathways for reliable operation in challenging environments without reliance on external infrastructure.

[/latex] position in real-time.](https://arxiv.org/html/2602.17393v1/fig/MW_225_15_.png)

Internal Sensors: Building a Foundation for Drift Reduction

Proprioceptive odometry estimates robot motion using only internal sensor data, specifically inertial measurement units (IMUs) and motor encoders, eliminating reliance on external perception such as cameras or LiDAR. IMUs provide measurements of linear acceleration and angular velocity, while motor measurements, including joint angles and velocities, indicate wheel or limb movements. By fusing these data streams, the system calculates changes in position and orientation over time. This internal estimation process is crucial for maintaining localization accuracy in environments where external sensor data may be unavailable, noisy, or computationally expensive to process, and serves as a foundational element for drift reduction techniques.

The system utilizes a [latex]ConstantVelocityPrior[/latex] as its primary process model for state propagation, meaning that between perception updates, the robot’s state – position and orientation – is predicted assuming constant velocity. This prior provides an initial estimate of movement based on the previously known state and an assumption of continued motion at the same speed and direction. Specifically, the predicted state [latex]x_{t|t-1}[/latex] at time t given the state at t-1 is calculated using a simple kinematic model: [latex]x_{t|t-1} = Fx_{t-1} + Bu[/latex], where F is the state transition matrix (representing constant velocity), B is the control input matrix, and u represents the control inputs derived from motor commands. This predictive step is crucial for bridging the gaps between sensor readings and providing a continuous estimate of the robot’s pose, forming the foundation for subsequent refinement through sensor fusion and optimization.

InverseKinematicsVelocityEstimation directly calculates the linear and angular velocities of the robot’s feet by utilizing the robot’s joint angles and the known kinematic structure. This method bypasses the need for forward kinematics to determine foot positions in world coordinates, instead focusing on the change in foot position over time. Specifically, the Jacobian matrix, derived from the robot’s kinematic model, is used to relate joint velocities to foot velocities. This provides a direct measurement of foot-end velocities, independent of wheel slippage or external disturbances, and is then integrated into the odometry pipeline to refine pose estimates and minimize accumulated error.

Proprioceptive odometry, leveraging data from inertial measurement units and motor encoders, serves as the primary localization method within our system. This internal estimation process provides an initial pose estimate independent of potentially noisy or unreliable external sensors. By continuously propagating the robot’s state based on motor commands and IMU readings, and incorporating [latex] \text{InverseKinematicsVelocityEstimation} [/latex] to derive foot-end velocities, we establish a robust foundation for pose tracking. The resulting continuous pose estimates are critical for mitigating cumulative errors, effectively reducing closed-loop drift that would otherwise occur with reliance on intermittent external corrections. This approach enables accurate, long-term localization essential for autonomous navigation and mapping applications.

Anchoring to Reality: Correcting Drift with Footfalls

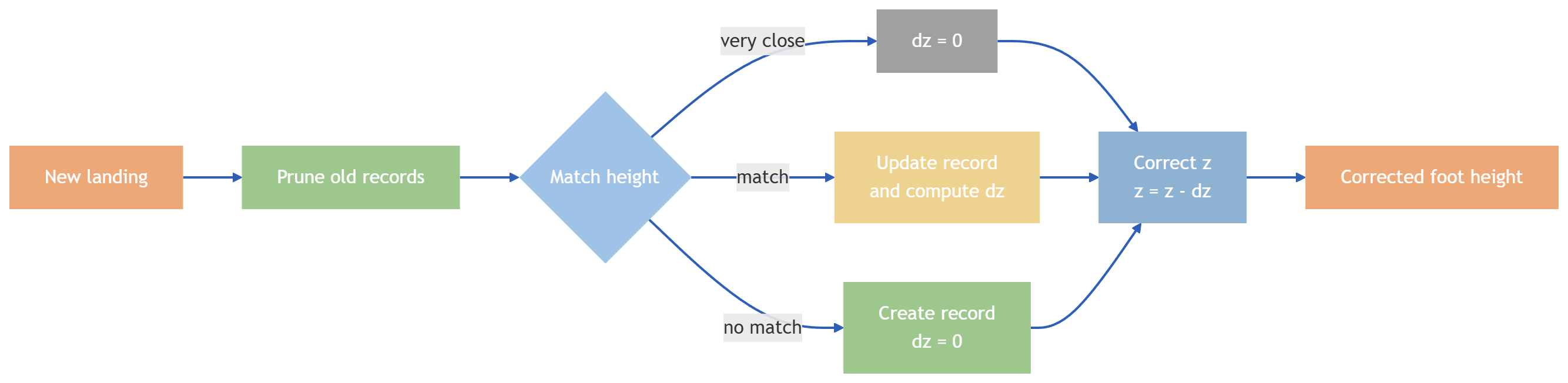

ContactAnchoredEstimation functions as a drift correction mechanism by intermittently constraining the estimated body state to detected footfall events occurring during the stance phase of locomotion. This process leverages the 3D positions recorded at the moment of ground contact as external constraints, effectively resetting accumulated errors in the body state estimation. By anchoring the estimated pose to these discrete, real-world measurements during periods of stable support, the system minimizes long-term drift and maintains localization accuracy. The intermittent nature of this correction – only during stance – avoids introducing constraints that would interfere with dynamic movement and allows for natural locomotion.

FootfallRecording establishes intermittent constraints within the state estimation process by capturing the three-dimensional position of each foot at the precise moment of ground contact. This data acquisition relies on identifying ground contact events during the stance phase of locomotion. The recorded 3D positions are then utilized as discrete, time-stamped constraints, effectively anchoring the estimated body state to known external points in space. This approach allows for the correction of accumulated drift in the estimated pose, as the system periodically realigns its internal representation with the observed foot positions.

Stance detection within the system is accomplished by the `StanceSelection` module, which utilizes estimations of end-effector force. These force estimations are derived from `JointTorqueEstimation`; specifically, joint torques are used to calculate the forces exerted at the robot’s end effectors – its feet. By monitoring these forces, the system identifies periods of stable ground contact, reliably determining the stance phase of the gait cycle and enabling subsequent drift correction mechanisms. This approach allows for robust stance detection even with imperfect sensor data or dynamic movements.

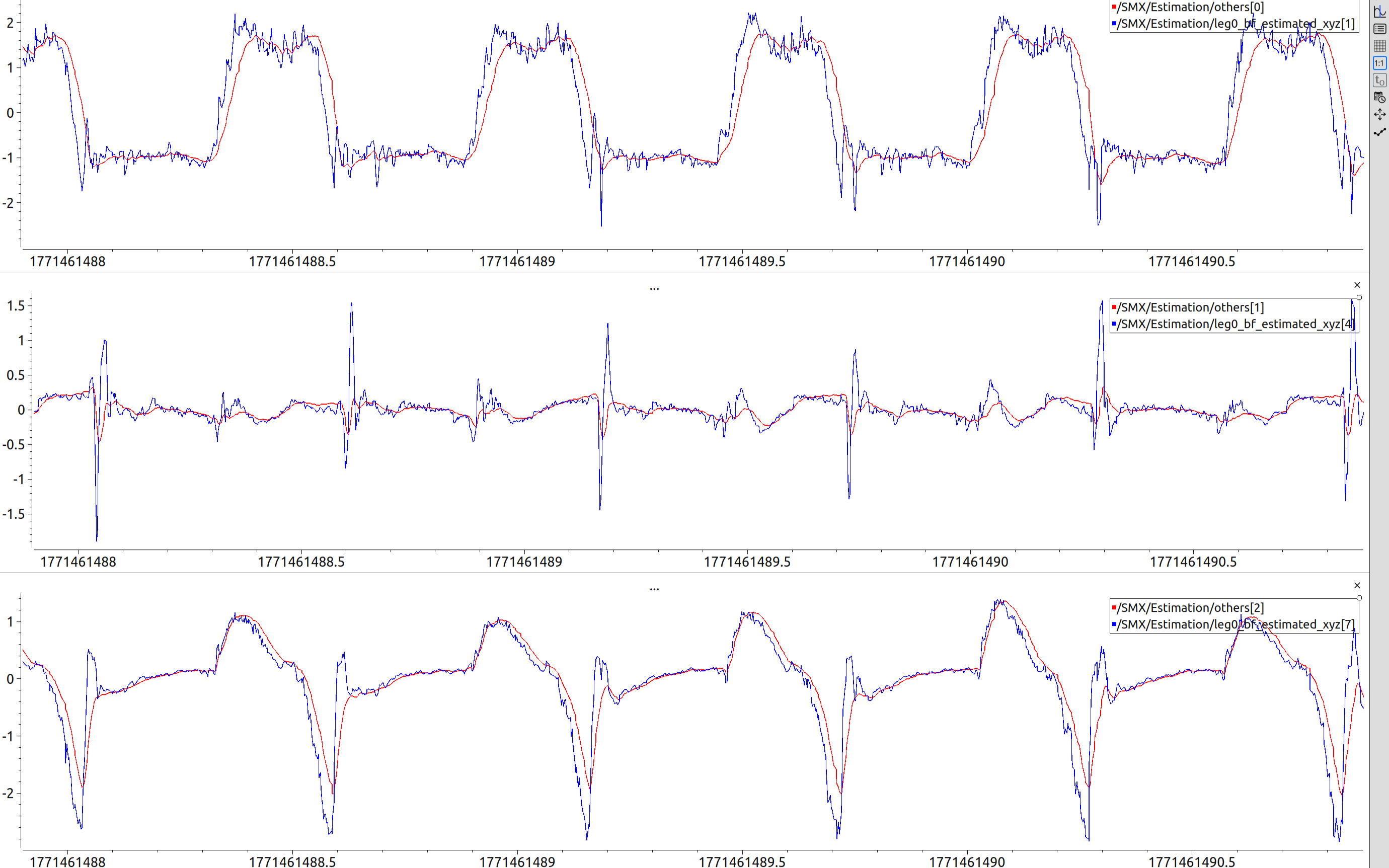

The `SupportPlaneHeightCorrection` module addresses vertical drift by refining the accuracy of recorded footfall heights. This is achieved through a clustering process that identifies and corrects inconsistencies in newly recorded footfall positions. Evaluation demonstrates a vertical loop error of 0.219 meters, a performance level comparable to that of the Unitree Go2 EDU robot. This correction mechanism effectively mitigates long-term elevation drift, maintaining a consistent and accurate estimate of the robot’s height relative to the ground plane during operation.

Stabilizing the Heading: The Illusion of Perfect Direction

A prevalent challenge in robot localization, yaw drift – the accumulation of error in heading estimation – is actively mitigated through a system called `YawCorrection`. This technique enforces geometric consistency by directly comparing the robot’s internally measured movements – its body-frame kinematics – with the positions of ground contact as observed in the world frame. By cross-validating these two data sources, discrepancies indicative of yaw drift are identified and corrected, ensuring a more accurate and stable heading estimate over time. This approach fundamentally improves odometry by grounding the robot’s perceived orientation in real-world observations, rather than relying solely on potentially noisy internal sensors.

Robust heading estimation relies on the principle of `MultiContactGeometricConsistency`, which intelligently integrates data from multiple simultaneous contact points to establish a reliable directional reference. Rather than depending on a single sensor or contact, this method triangulates the robot’s orientation by analyzing the geometric relationships between these points, effectively minimizing the impact of individual sensor noise or momentary inaccuracies. By considering the collective spatial information provided by multiple contacts, the system builds a more resilient and accurate understanding of the robot’s heading, even in dynamic or challenging environments where wheel slippage or uneven terrain might otherwise induce drift. This approach ensures that the estimated heading remains consistent with the physical constraints imposed by the robot’s interactions with the world, bolstering the overall accuracy of pose estimation.

Pose estimation in robotics often relies on filtering techniques to fuse sensor data and maintain an accurate state estimate, but robot kinematics are inherently nonlinear – a challenge for traditional Kalman filters. The system utilizes a [latex]CubatureKalmanFilter[/latex] (CKF) to address this issue; unlike extended Kalman filters which linearize the system around an operating point, the CKF uses a third-order Gauss-Hermite quadrature to accurately propagate the probability distribution through nonlinear transformations. This approach avoids the approximations inherent in linearization, leading to a more robust and accurate estimate, particularly when dealing with significant robot motion or complex kinematic models. By effectively capturing the nonlinear dynamics, the [latex]CubatureKalmanFilter[/latex] significantly contributes to the overall stability and precision of the pose estimation system, even in challenging environments.

The integration of this novel approach with the `IKVelCKF` filter demonstrably enhances pose estimation accuracy and stability, particularly when operating in complex and unpredictable environments. Rigorous testing on the Astrall Robot C yielded a horizontal loop error of just 7.68 meters, a significant reduction in closed-loop drift. This performance indicates a substantial improvement over previous methodologies, allowing for more reliable localization and navigation even amidst challenging conditions and prolonged operation where accumulated error traditionally presents a considerable obstacle.

![Maintaining a constant inter-foot geometry during multi-contact locomotion provides a kinematics-based heading reference that allows for yaw correction, as illustrated by the transition from the initial [latex]blue[/latex] model to the twisted [latex]yellow[/latex] trunk pose.](https://arxiv.org/html/2602.17393v1/fig/fig_Rotation2.png)

The pursuit of perfect state estimation, as demonstrated by this contact-anchored proprioceptive odometry, inevitably runs headfirst into the chaos of physical reality. This framework, meticulously designed to anchor estimations to footfall events, represents another layer of abstraction built atop the already complex problem of legged locomotion. It’s a clever refinement, certainly, but one destined to become tomorrow’s tech debt as robots encounter unforeseen terrains and dynamic forces. As Andrey Kolmogorov observed, “The most important things are not visible.” This holds true here; the elegance of the algorithm will ultimately be judged not by its theoretical accuracy, but by its failure modes in production. The ambition to eliminate reliance on external sensors is admirable, yet the system still operates under the illusion of complete information – a dangerous assumption when dealing with inherently noisy physical systems.

The Road Ahead

This contact-anchored approach, while elegantly sidestepping the perennial sensor fusion headache, merely shifts the burden. The footfall events, so neatly exploited for state estimation, will inevitably become the new single points of failure. Production robots don’t experience discrete events; they experience smudges, scrapes, and the slow accumulation of error. The cubature Kalman filter offers a clean theoretical framework, but one suspects reality will demand a far messier implementation.

The real challenge isn’t accuracy, it’s graceful degradation. Current work focuses on minimizing error during the stance phase. A more pressing concern is what happens between those anchors. The framework’s ability to bridge those gaps, to tolerate increasingly large uncertainties without catastrophic drift, will determine its longevity. One anticipates a future filled with increasingly sophisticated methods for predicting, and ultimately accepting, the inevitability of failure.

Ultimately, this is another step towards autonomous locomotion, but not a destination. The pursuit of purely proprioceptive odometry is a valuable exercise, a reminder that elegant theory rarely survives contact with the world. Legacy systems are a memory of better times; bugs are merely proof of life. The next iteration won’t solve the problem, it will simply prolong the suffering.

Original article: https://arxiv.org/pdf/2602.17393.pdf

Contact the author: https://www.linkedin.com/in/avetisyan/

See also:

- The Division Resurgence Best Weapon Guide: Tier List, Gear Breakdown, and Farming Guide

- Last Furry: Survival redeem codes and how to use them (April 2026)

- GearPaw Defenders redeem codes and how to use them (April 2026)

- Gold Rate Forecast

- eFootball 2026 “Countdown to 1 Billion Downloads” Campaign arrives with new Epics and player packs

- Guild of Monster Girls redeem codes and how to use them (April 2026)

- After THAT A Woman of Substance cliffhanger, here’s what will happen in a second season

- Wuthering Waves Hiyuki Build Guide: Why should you pull, pre-farm, best build, and more

- Total Football free codes and how to redeem them (March 2026)

- Clash of Clans Sound of Clash Event for April 2026: Details, How to Progress, Rewards and more

2026-02-23 04:21