Author: Denis Avetisyan

A new study examines how well we can understand the reasoning behind artificial intelligence systems when they analyze and interpret works of art.

This review assesses the effectiveness of explainable AI techniques for localizing iconographic elements in artworks using vision-language models, revealing limitations stemming from model biases and the subjective nature of art historical interpretation.

While Vision-Language Models (VLMs) demonstrate impressive capabilities in multimodal reasoning, the legibility of their visual interpretations remains a critical challenge. This paper, ‘On the Explainability of Vision-Language Models in Art History’, investigates the effectiveness of seven Explainable AI (XAI) methods in localizing iconographic elements within artworks using the CLIP model. Our results reveal that, although these methods capture aspects of human interpretation, their success is contingent on the conceptual clarity and representational stability of the categories being analyzed. Ultimately, this raises the question of how we can best align machine ‘understanding’ with the nuanced and often ambiguous nature of art historical analysis.

The Illusion of Understanding: Why We Ask AI to Explain Itself

Deep neural networks have achieved remarkable success in areas like image recognition and natural language processing, yet their internal workings often remain opaque. This lack of transparency presents significant challenges; while a network might accurately identify objects in an image, understanding why it arrived at that conclusion is frequently impossible. This ‘black box’ behavior hinders trust, particularly when these models are deployed in critical applications where accountability is paramount. Debugging also becomes extraordinarily difficult; when a network fails, pinpointing the source of the error – a faulty parameter, biased training data, or an inherent limitation of the architecture – can be a laborious and often fruitless endeavor. Consequently, researchers are increasingly focused on developing methods to illuminate the decision-making processes within these powerful, yet enigmatic, systems.

The ability to discern the reasoning behind a model’s conclusions is paramount, especially when applying artificial intelligence to nuanced fields such as art history. Unlike tasks with clearly defined correct answers, art historical analysis frequently involves subjective interpretation and contextual understanding; a model simply identifying a painting as “Baroque” offers little value without revealing why that classification was made. Was it the dramatic use of light and shadow, the emotional intensity of the figures, or specific stylistic elements compared to known works? Without this explanatory power, it becomes difficult to validate the model’s insights, identify potential biases reflecting skewed training data, or even learn anything new about the artwork itself. Consequently, understanding the ‘why’ fosters trust in the technology, facilitates meaningful collaboration between humans and machines, and ensures responsible application of AI in the humanities.

The opaqueness of deep neural networks has spurred significant development in Explainable AI (XAI) techniques specifically tailored for visual reasoning. These methods aim to move beyond simply what a model predicts, and instead illuminate how it arrives at a conclusion based on visual input. XAI for vision doesn’t just offer a prediction; it provides visual explanations – such as saliency maps highlighting important image regions or attribution methods revealing feature importance – allowing researchers and practitioners to understand the model’s decision-making process. This is particularly vital in fields where trust and accountability are paramount, as the ability to interpret a model’s reasoning fosters confidence and enables effective debugging, refinement, and ultimately, responsible deployment of these powerful technologies.

Heatmaps and Half-Truths: Visualizing What the Network ‘Sees’

Class Activation Maps (CAM) provide a technique for generating heatmaps that highlight the image regions influencing a convolutional neural network’s (CNN) prediction. These maps are produced by utilizing the weights of the final convolutional layer, effectively showing which areas of the input image contribute most strongly to the classification result. Specifically, a weighted sum of the feature maps from this layer is computed, and then upsampled to match the original image resolution, resulting in a visual representation of the network’s focus. This allows for a degree of interpretability, enabling users to understand why a network made a specific prediction rather than simply what the prediction was.

Early implementations of Gradient-weighted Class Activation Mapping (Grad-CAM) exhibited inherent limitations regarding the granularity and clarity of resulting heatmaps. Specifically, the coarse spatial resolution, a consequence of utilizing gradient information averaged across entire feature maps, often resulted in blurry or ill-defined activation regions. Furthermore, these visualizations were susceptible to noise and the presence of visual artifacts, stemming from gradient saturation and the accumulation of irrelevant gradients during backpropagation. This reduced the interpretability of the maps and hindered precise localization of the image regions driving the network’s classification decision.

Following the initial development of Grad-CAM, several techniques were introduced to address limitations in visualization quality. Grad-CAM++ builds on the original method by incorporating a weighted average of positive gradients, improving localization of important features. ScoreCAM distinguishes itself by utilizing the increase in class score as the criterion for determining feature importance, which reduces reliance on gradient saturation. LayerCAM further refines visualization by averaging activation maps across multiple convolutional layers, resulting in more detailed and less noisy heatmaps. These advancements collectively aim to provide more accurate and interpretable visualizations of neural network attention mechanisms, enhancing model understanding and debugging capabilities.

Bridging the Gap: Explainability for Vision-Language Models

Contrastive Language-Image Pre-training (CLIP) enables vision-language models to develop robust multimodal understanding by learning a shared embedding space for images and text. This process involves training the model to predict which images correspond to given text descriptions and vice-versa, forcing the visual and textual representations to align. Specifically, CLIP utilizes a contrastive loss function that maximizes the similarity between embeddings of matching image-text pairs while minimizing the similarity between non-matching pairs. This pre-training strategy allows the model to transfer knowledge effectively to downstream tasks such as image classification, image captioning, and visual question answering, without requiring task-specific labeled data.

Standard Class Activation Mapping (CAM) techniques, while effective for visualizing features in earlier convolutional neural networks, frequently produce low-quality visualizations when applied to Vision-Language Models. These models, trained with contrastive learning objectives, distribute representations differently, resulting in CAMs that lack sharp localization and often highlight broad, uninformative regions of the input image. This occurs because the feature maps used to generate CAMs are not directly predictive of the final classification decision in these architectures, leading to diluted activation signals and a failure to accurately pinpoint the salient image regions driving the model’s prediction.

CLIP Surgery and LeGrad represent improvements in Class Activation Mapping (CAM) techniques tailored for Vision-Language Models (VLMs) like CLIP. Standard CAM methods often fail to produce focused visualizations with VLMs due to the models’ training objectives and architectural differences. These newer methods address these limitations by modifying the gradient calculation process to generate more precise and high-fidelity CAMs, highlighting the specific image regions most relevant to the model’s prediction. This enhanced visualization capability facilitates improved interpretability, allowing researchers to better understand the model’s reasoning process and identify potential biases or failure modes.

Quantitative evaluation of visual explanation techniques utilizes metrics such as BoxAcc, which assesses the overlap between predicted saliency maps and ground truth object bounding boxes. Benchmarking with datasets like IconArt and ArtDL provides standardized comparisons; on the ArtDL test set, the CLIP Surgery method currently achieves a BoxAcc of 52.28%. This represents a significant performance advantage, demonstrating an absolute improvement of approximately 9 percentage points over the next best performing method, LeGrad, under the same evaluation conditions.

The Illusion of Alignment: Measuring Trust in AI Explanations

Determining the effectiveness of Class Activation Map (CAM) visualizations necessitates rigorous evaluation through user studies. These investigations move beyond purely quantitative metrics by directly assessing how humans perceive and interpret the highlighted regions within an image. Participants are typically asked to judge the relevance of these visualizations to the identified concept, or to utilize the maps to perform a specific task, such as object detection or image classification. The perceived quality and usefulness, as reported by users, become crucial indicators of whether the CAM accurately reflects the model’s reasoning process and provides genuinely helpful insights. Such subjective assessments are invaluable, as they highlight potential discrepancies between what the algorithm ‘sees’ and what a human understands, ultimately guiding improvements in both visualization techniques and the underlying AI models.

Accurate assessment of how well humans perceive and interpret visualizations necessitates careful handling of incomplete data, a common challenge in user studies. When multiple annotators evaluate the same content, discrepancies or missing annotations are inevitable. To address this, researchers employ statistical techniques like Multivariate Imputation by Chained Equations (MICE). MICE doesn’t simply discard incomplete data; instead, it leverages the relationships between variables to predict and impute missing values, creating a complete dataset for analysis. This sophisticated approach ensures that the final assessment of inter-rater agreement isn’t skewed by incomplete information, bolstering the reliability of findings and providing a more nuanced understanding of human perception in relation to AI-generated visualizations.

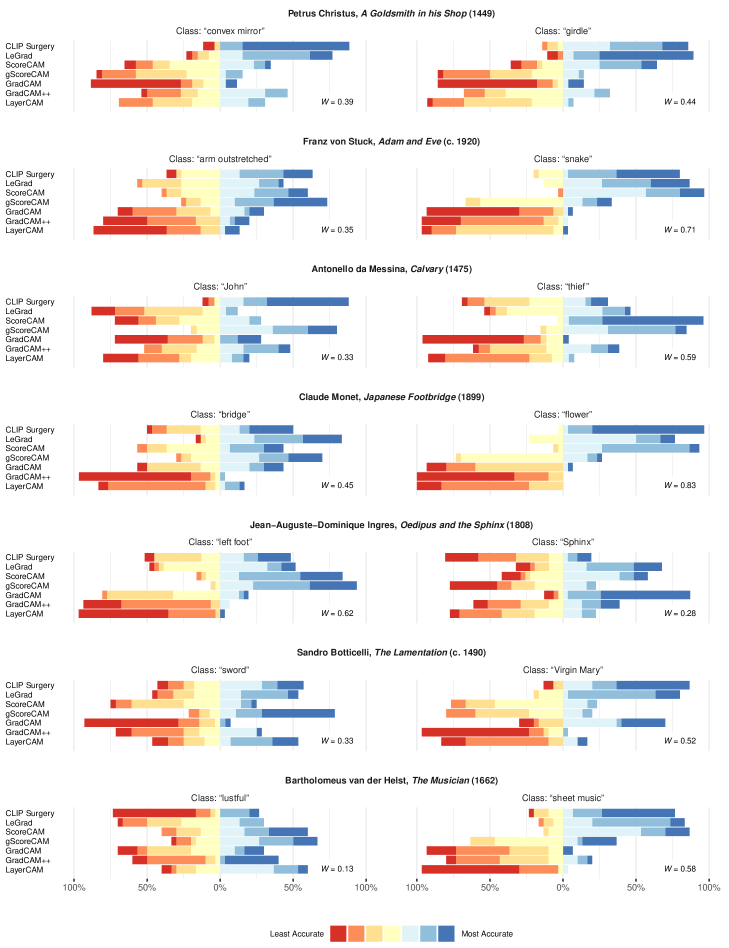

Statistical analysis reveals a compelling degree of consistency between human perception and artificial intelligence in identifying salient image features. Utilizing Kendall’s W, researchers quantified the agreement between human annotations – detailing which areas of an image draw the most attention – and the corresponding saliency maps generated by the AI model. Observed values of Kendall’s W consistently ranged from 0.71 to 0.83 for images possessing clearly defined visual concepts, demonstrating a robust level of alignment. This strong correlation suggests the model effectively mimics human visual attention, bolstering confidence in its ability to accurately interpret and prioritize information within complex imagery – a critical factor for applications demanding reliable image understanding.

The ability to quantify alignment between artificial intelligence and human perception is not merely an academic exercise, but a foundational requirement for widespread adoption, particularly within domains demanding high reliability. Strong statistical validation of AI-generated explanations, such as saliency maps, directly translates into increased user confidence and a greater willingness to integrate these systems into critical applications like medical diagnosis, autonomous vehicle operation, and financial modeling. When AI can demonstrably ‘show its work’ and that work consistently aligns with human understanding, it fosters a sense of transparency and control, mitigating concerns about ‘black box’ decision-making and ultimately accelerating the responsible deployment of these powerful technologies. This quantifiable trust is therefore paramount, shifting AI from a perceived risk to a valued and dependable asset.

The pursuit of explainability in vision-language models, as demonstrated by this study of art historical iconography, feels less like revealing truth and more like charting the topography of compromise. These models, while capable of localizing objects within artworks, expose the inherent biases baked into their representational layers – a predictable outcome. As Andrew Ng once stated, “Simplicity depends on the assumptions you make.” The paper subtly confirms this; these XAI methods, attempting to map model attention onto human perception, inevitably stumble where art historical concepts lack definitive boundaries. It isn’t a failure of the technique, but a testament to the fact that every optimization will one day be optimized back, revealing the initial constraints imposed upon the system. Architecture isn’t a diagram; it’s a compromise that survived deployment, and in this case, deployment involves interpreting the subjective realm of artistic meaning.

What’s Next?

The enthusiasm for applying Vision-Language Models to art history appears, predictably, to have outstripped a clear understanding of what these models are actually showing. Localization via saliency maps offers the illusion of insight, but the paper rightly points out that aligning with human perception doesn’t equate to genuine interpretability. It’s a comforting feeling when the machine ‘sees’ what a human does, until production reveals that ‘seeing’ is often a matter of convenient correlation, not causal understanding. One suspects these models are remarkably adept at finding what they were trained to find, and not necessarily what the art means.

Future work will, no doubt, involve more sophisticated XAI techniques, perhaps attempting to disentangle the layers of representational bias inherent in these pre-trained models. But a more fruitful approach might involve accepting the inherent limitations. Better one painstakingly curated dataset reflecting established art historical scholarship, than a hundred lightly annotated image sets fed to an algorithm hoping for serendipity. The truly challenging task isn’t making the model explain itself, but making the explanations reliable.

It’s a safe prediction that the next generation of ‘explainable’ methods will simply generate more plausible-sounding justifications for the same underlying ambiguities. The field will chase the mirage of algorithmic transparency, while the actual complexities of art historical interpretation remain stubbornly resistant to reduction. Anything called ‘scalable’ hasn’t been tested properly, and any claim of ‘understanding’ should be viewed with appropriate skepticism.

Original article: https://arxiv.org/pdf/2602.20853.pdf

Contact the author: https://www.linkedin.com/in/avetisyan/

See also:

- Limbus Company 2026 Roadmap Revealed

- After THAT A Woman of Substance cliffhanger, here’s what will happen in a second season

- Gold Rate Forecast

- Wuthering Waves Hiyuki Build Guide: Why should you pull, pre-farm, best build, and more

- XO, Kitty season 3 soundtrack: The songs you may recognise from the Netflix show

- Guild of Monster Girls redeem codes and how to use them (April 2026)

- GearPaw Defenders redeem codes and how to use them (April 2026)

- Genshin Impact Version 6.5 Leaks: List of Upcoming banners, Maps, Endgame updates and more

- Total Football free codes and how to redeem them (March 2026)

- The Division Resurgence Specializations Guide: Best Specialization for Beginners

2026-02-26 06:35