Author: Denis Avetisyan

A new framework empowers robots to manipulate objects and answer questions about their environment, even when vision is limited, by combining language understanding with enhanced visual perception.

![The system iteratively refines its understanding of a scene through interactive perception, where a robot evaluates observations and queries-leveraging a memory [latex]\mathcal{M}_{t}[/latex] to avoid repetition-and, when necessary, annotates images with segmented elements like push lines, keypoints, or grid patterns to inform subsequent actions [latex]a_{t}=\pi(\mathcal{M}_{t},\textbf{x}_{t},z_{t},\tilde{o}_{t})[/latex], ultimately building a contextual history [latex]S_{t}[/latex] of images and scene descriptions to enhance task efficiency.](https://arxiv.org/html/2602.18374v1/Figures/rss25/InterPer2.png)

This review details ZS-IP, a system leveraging vision-language models, spatial reasoning, and memory to achieve zero-shot interactive perception and robust object manipulation in the face of occlusion.

Resolving ambiguity and occlusions remains a core challenge for robotic manipulation in complex environments. This paper introduces Zero-shot Interactive Perception (ZS-IP), a novel framework that empowers robots to actively seek information through physical interaction and semantic reasoning. ZS-IP integrates a memory-driven vision-language model with enhanced visual affordances – specifically, ‘pushlines’ capturing contact-rich action possibilities – to guide manipulation and resolve queries without task-specific training. Could this approach unlock more robust and adaptable robotic systems capable of navigating and manipulating objects in truly unstructured scenes?

The Illusion of Control: Reactive Systems and the Need for True Interaction

Conventional robotic systems often operate on a foundation of pre-programmed responses to anticipated stimuli, a methodology that inherently restricts their performance in genuinely dynamic environments. These robots excel in highly structured settings-like factory assembly lines-where conditions remain predictable; however, when confronted with the unpredictable nature of the real world, their rigid programming becomes a significant limitation. A robot designed to grasp a specific object, for instance, may fail utterly if that object is slightly displaced, obscured, or if another object unexpectedly enters its workspace. This reliance on pre-defined actions prevents adaptation to novel situations, hindering a robot’s ability to operate effectively outside of carefully controlled parameters and limiting its potential for truly autonomous behavior.

Truly versatile robotic manipulation hinges on a shift from reactive control to proactive information gathering. Traditional systems, designed to respond to pre-defined stimuli, falter when confronted with novelty or ambiguity; a robot simply reacting to a scene cannot effectively address unforeseen obstacles or subtle changes in object properties. Instead, effective manipulation demands that a robot actively explore its environment – employing sensors not just to detect, but to query the world around it. This involves formulating hypotheses about an object’s characteristics – its weight, texture, or fragility – and then conducting physical interactions, like gentle prods or careful lifts, to validate or refine those initial assumptions. By intelligently seeking out specific information through interaction, robots can build a more robust and nuanced understanding of their surroundings, enabling them to adapt to dynamic situations and perform complex tasks with greater reliability and dexterity.

Robotic perception often falters not because of technical limitations in sensing, but due to the inherent ambiguity present in real-world scenes. Current systems, while adept at identifying objects under controlled conditions, struggle when faced with occlusion, varying lighting, or unfamiliar contexts. A simple pile of blocks, for example, can represent a construction project, a haphazard mess, or even a deliberate barrier – interpretations that necessitate understanding the surrounding environment and the likely intentions of any human actors present. This demand for contextual understanding extends beyond simple object recognition; it requires robots to infer relationships, predict outcomes, and resolve uncertainty, capabilities that remain a significant challenge for existing approaches focused primarily on passive observation and pre-programmed responses.

A fundamental shift in robotic design necessitates moving beyond simple reaction to embracing active inquiry. Instead of passively receiving sensory data and executing pre-defined actions, future robots will require the capacity to intelligently question their environment. This involves formulating relevant queries – not merely detecting an object, but asking what it is, what it’s used for, or how it relates to other objects. Crucially, these systems must then be capable of interpreting the responses – whether visual, auditory, or tactile – with a degree of contextual understanding. Such a paradigm enables robots to resolve ambiguities inherent in real-world scenes and build a richer, more nuanced representation of their surroundings, ultimately fostering truly adaptive and collaborative interaction.

ZS-IP: A Framework for Embracing the Unknown

ZS-IP is a newly developed robotic framework designed to facilitate interaction with previously unseen environments and objects without requiring task-specific training datasets. This is achieved by moving beyond traditional, pre-programmed robotic behaviors and enabling robots to dynamically understand and respond to novel situations. The framework’s core functionality centers on its ability to generalize learned knowledge from large-scale datasets to new contexts, allowing for zero-shot transfer of capabilities. Unlike systems reliant on extensive fine-tuning for each new task or object, ZS-IP aims to provide a generalized perception and interaction capability applicable across a wide range of scenarios, reducing the need for costly and time-consuming retraining processes.

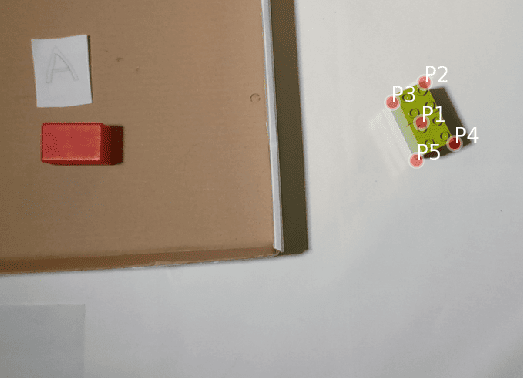

ZS-IP constructs scene understanding by integrating data from vision-language models (VLMs) with enhanced visual affordances. Specifically, the framework utilizes VLMs to process visual information and associate it with natural language descriptions. Complementing this, ZS-IP incorporates precise geometric data representing potential object interactions, including 2D grasp keypoints indicating feasible grasping locations and pushlines defining effective pushing trajectories. These affordances, derived from visual processing, provide the VLM with crucial spatial information, enabling the robot to not only recognize objects but also to understand how it can physically interact with them. This fusion of semantic and geometric data results in a more comprehensive and actionable representation of the environment.

The Perception Analyser functions as the central decision-making module within the ZS-IP framework, continuously evaluating the completeness of the robot’s environmental understanding. It operates by identifying information gaps – discrepancies between the robot’s current knowledge and the requirements for successful interaction – and subsequently generating requests for specific perceptual data. These requests specify the type of information needed, such as the location of grasp keypoints or the identification of pushable surfaces, and direct the robot’s active sensing strategies. The analyser then processes incoming data, updates its internal representation of the environment, and iteratively refines its information needs until a sufficient level of understanding is achieved to facilitate task execution.

The ZS-IP framework facilitates robotic understanding of novel environments via an iterative process of query formulation, response interpretation, and subsequent refinement of internal scene representations. Robots utilizing ZS-IP do not passively receive information; instead, they actively generate questions regarding observed objects and their potential interactions. These queries are processed, and the resulting responses – typically in natural language or structured data – are analyzed to update the robot’s internal model. This cycle of questioning and interpretation allows the robot to actively explore the environment, focusing information gathering on areas where uncertainty remains high, and progressively building a more complete and accurate understanding without requiring pre-programmed knowledge of the specific environment or objects encountered.

Memory as a Foundation: The Echo of Past Interactions

The Zero-Shot Interactive Planner (ZS-IP) incorporates a Memory Block as a dedicated storage component for retaining data related to previous interactions with the environment and descriptions of observed scenes. This Memory Block functions as a contextual repository, enabling the system to access and utilize past experiences when formulating current action plans. Stored data includes details of robot states, visual observations, and associated outcomes, effectively providing a historical record for improved decision-making. The Memory Block’s contents are dynamically updated with each new interaction, allowing ZS-IP to adapt to changing environments and refine its behavior over time. Access to this historical data is a core component of the system’s ability to perform context-aware planning and respond effectively to novel situations.

The Action Module within the ZS-IP system formulates action plans utilizing a multi-faceted input stream. This includes the robot’s current internal state – encompassing parameters like battery level, motor positions, and operational mode – alongside data from visual observations processed by the perception system. Critically, the module also incorporates information retrieved from the Memory Block, which provides contextual awareness derived from past interactions and scene descriptions. This retrieved memory is not simply appended to the input, but actively integrated to inform the planning process, enabling the generation of contextually relevant and feasible action sequences.

Memory-Driven Action Planning operates by condensing sequential observations into a summarized temporal representation, enabling the system to understand the progression of events and anticipate future states. This summary isn’t merely a static record; it captures the order and duration of interactions, allowing the robot to differentiate between recently completed actions and those performed further in the past. Consequently, the system’s decision-making process is informed by this contextual history, allowing it to select actions appropriate to the current situation and avoid repeating actions unnecessarily or executing them out of sequence. This temporal awareness is critical for complex tasks requiring sustained interaction with dynamic environments, as it facilitates more robust and efficient plan generation.

Retrieval-Augmented In-Context Generation (RAG) improves the Zero-Shot Interactive Perception (ZS-IP) system’s performance by supplementing the large language model (LLM) with relevant information retrieved from the Memory Block. This process mitigates the LLM’s reliance on solely its pre-trained knowledge, addressing limitations in adapting to novel situations or specific environmental details. By incorporating retrieved contextual data during plan generation, RAG enhances the semantic understanding of the current state and observed scene. This leads to more robust and accurate action planning, particularly in dynamic environments or when dealing with ambiguous inputs, as the LLM can ground its decisions in concrete, previously observed interactions and scene descriptions.

Beyond Benchmarks: The Illusion of Mastery and the Path Forward

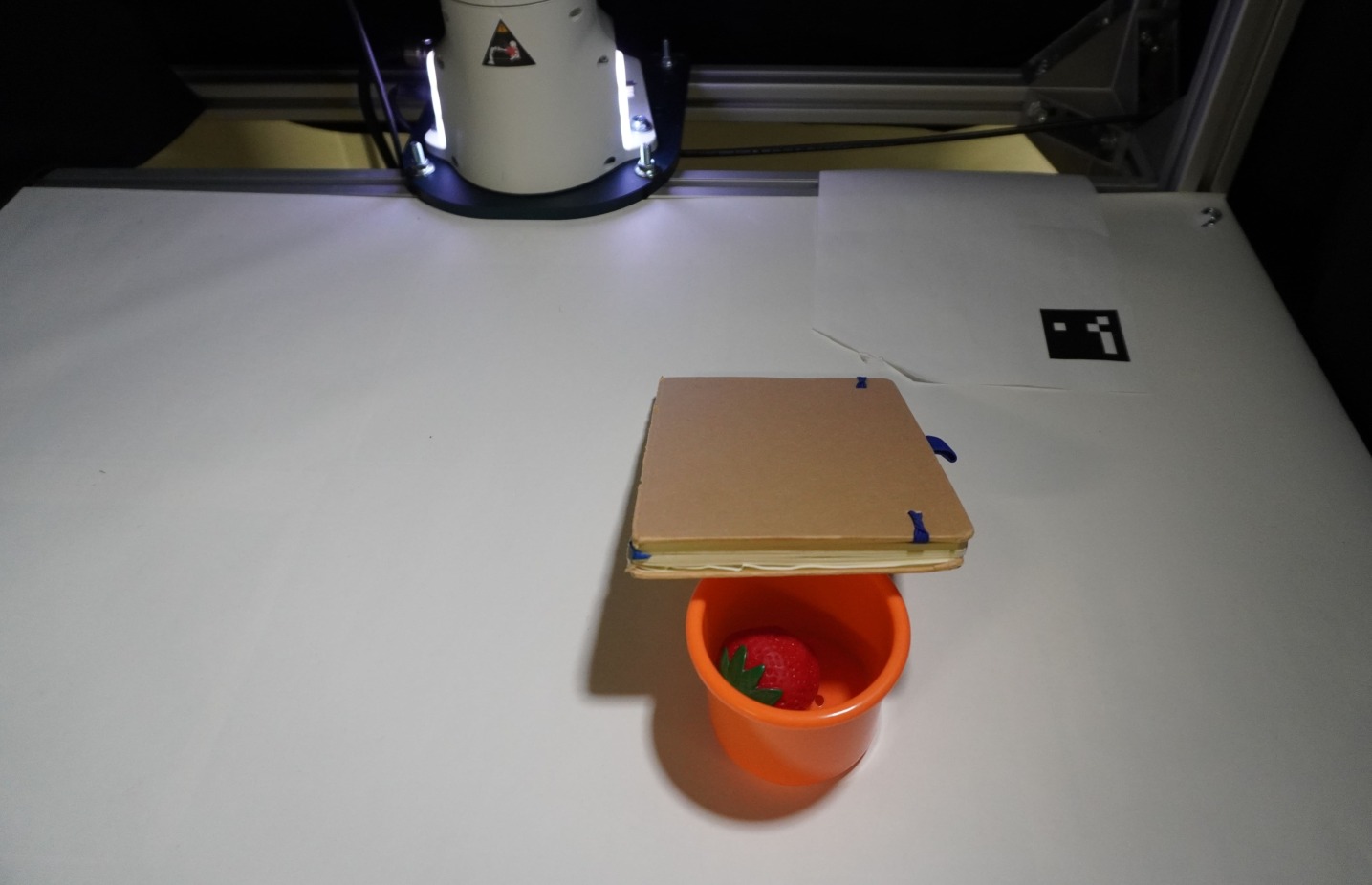

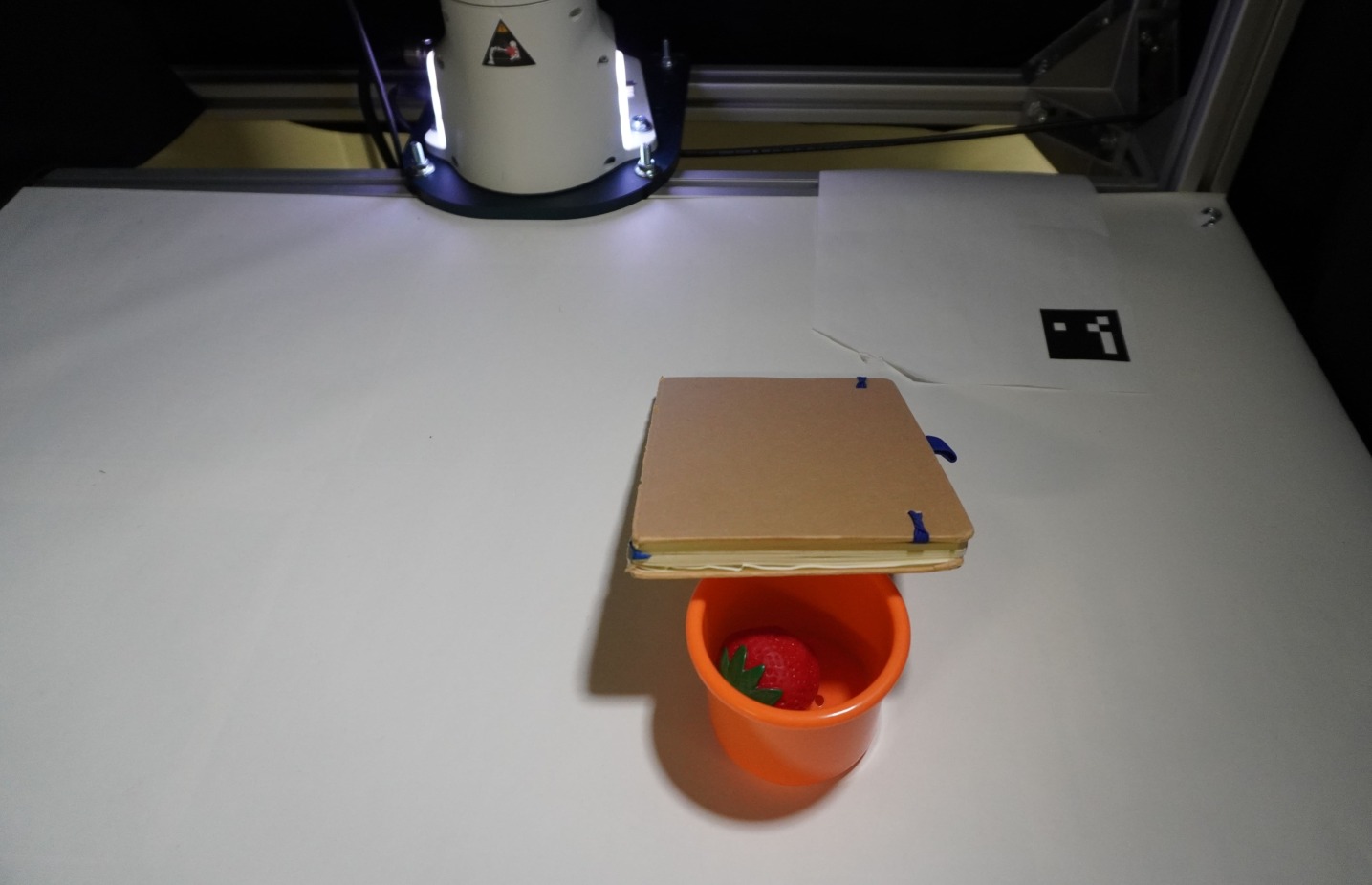

Rigorous testing of the ZS-IP framework utilized the widely recognized YCB Object Set – a diverse collection of household items – alongside ArUco markers to establish a ground truth for precise robot localization. This methodical approach wasn’t merely about achieving accuracy in a controlled environment; it was designed to evaluate the system’s capacity to generalize its understanding to previously unseen objects and arrangements. The successful navigation and manipulation of these varied items, coupled with accurate localization using the markers, indicates ZS-IP’s potential for robust performance in dynamic, real-world scenarios where perfect prior knowledge is unrealistic. This ability to adapt and perform reliably in novel situations is a crucial step towards creating truly versatile and intelligent robotic systems.

A detailed comparative study positioned the ZS-IP framework as outperforming the PIVOT system in interactive robotic tasks that demand contextual understanding. This advantage isn’t simply about completing actions, but about how those actions are performed – ZS-IP demonstrated a greater ability to interpret the surrounding environment and adjust its behavior accordingly. The analysis revealed that ZS-IP consistently made more informed decisions when faced with ambiguity, leading to smoother and more effective interactions. This suggests that the framework’s architecture excels at integrating perceptual information with task goals, enabling it to navigate complex scenarios with a level of adaptability that surpasses existing methods and opens new avenues for truly intelligent robotic systems.

The integration of GPT-4o as the perceptual core of the framework signifies a substantial leap towards more intuitive and adaptable robotic systems. Traditionally, robots have relied on painstakingly programmed responses to specific stimuli; however, this approach limits their ability to handle the inherent ambiguity of real-world environments. By leveraging the advanced natural language understanding and reasoning capabilities of a large language model, the system can interpret sensory data – visual input, object recognition, and scene understanding – with a level of sophistication previously unattainable. This allows for dynamic task planning and execution based not just on what is perceived, but also on why it matters, effectively bridging the gap between raw data and intelligent action and opening avenues for robots to respond to nuanced instructions and unforeseen circumstances with greater flexibility.

The ZS-IP framework demonstrated a noteworthy capacity for handling intricate robotic challenges, achieving an 0.8 success rate on Task VI. This benchmark involved a complex scenario requiring not just object recognition and manipulation, but also an understanding of spatial relationships and sequential actions. The high success rate indicates ZS-IP’s robustness in navigating these difficulties, showcasing its ability to reliably perform tasks that demand more than simple pre-programmed responses. This performance suggests a significant step toward creating robotic systems capable of adapting to unpredictable, real-world environments and completing complex objectives with a high degree of consistency.

The ZS-IP framework demonstrated a notable capacity for contextual reasoning during the execution of Task VII, achieving a success rate of 0.7. This performance indicates the system’s ability to not merely identify objects and actions, but to interpret them within a broader understanding of the task’s demands. By successfully navigating the complexities of Task VII, ZS-IP In-Context highlights how integrating contextual awareness significantly enhances robotic problem-solving capabilities, moving beyond rote execution toward more flexible and adaptable interaction with dynamic environments. This result suggests a pathway for future robotic systems to better anticipate needs and respond appropriately in real-world scenarios, offering a crucial step towards truly intelligent and helpful robotic companions.

Beyond its performance in intricate challenges, the ZS-IP framework demonstrated consistent reliability across foundational interactive tasks. Specifically, high success rates were achieved on Tasks I, II, and IV, indicating a robust capacity for basic object manipulation and scene understanding. These simpler tasks served not only as validation of the system’s core functionalities – such as accurate localization and motion planning – but also established a baseline for evaluating performance gains on more complex scenarios. The consistent success in these fundamental areas suggests the framework possesses a strong foundation for scaling to increasingly sophisticated robotic applications, ensuring dependability alongside its advanced contextual reasoning capabilities.

The pursuit of zero-shot interactive perception, as demonstrated in this work, echoes a fundamental truth about complex systems. The framework’s ability to reason about spatial relationships and object affordances, even amidst occlusion, isn’t about achieving perfect foresight, but about building resilience into the interaction itself. As Robert Tarjan once observed, “The most effective programs are the ones that handle the most exceptions.” This aligns with the core idea that robots must navigate uncertainty and incomplete information-a temporary cache between failures, if one will-to successfully manipulate their environment. The system doesn’t solve occlusion; it adapts to it, revealing that robust interaction is less about eliminating chaos and more about gracefully absorbing it.

What Lies Ahead?

The pursuit of zero-shot interaction, as exemplified by this work, reveals less a path to generalized intelligence and more a deepening appreciation for the inherent messiness of the physical world. The framework addresses occlusion, a perennial frustration, yet merely shifts the burden to the quality of its memory and the precision of its affordance reasoning. Dependencies remain. A robot that ‘understands’ affordances is still vulnerable to the unpredictable chaos of novel object arrangements and unforeseen environmental disturbances. It is a compromise frozen in time.

The true challenge isn’t building systems that react to what is visible, but anticipating what might be. Future efforts will likely focus on probabilistic models of occlusion – not simply ‘object is hidden’, but ‘object is 80% likely to be present, given…’. This requires a shift from feature detection to belief propagation, from rigid affordances to fluid estimations of possibility. The architecture isn’t structure – it’s a fragile forecast.

Ultimately, this line of inquiry reminds one that robotics isn’t about conquering the environment, but about coexisting within it. The robot, like any organism, must learn to navigate uncertainty, to infer the hidden, and to accept the inevitability of failure. Technologies change, but the fundamental laws of entropy do not.

Original article: https://arxiv.org/pdf/2602.18374.pdf

Contact the author: https://www.linkedin.com/in/avetisyan/

See also:

- ‘Project Hail Mary’s Unexpected Post-Credits Scene Is Worth Sticking Around

- Total Football free codes and how to redeem them (March 2026)

- Limbus Company 2026 Roadmap Revealed

- The Division Resurgence Specializations Guide: Best Specialization for Beginners

- After THAT A Woman of Substance cliffhanger, here’s what will happen in a second season

- Brawl Stars Sands of Time Brawl Pass brings Sandstalker Lily and Sultan Cordelius sets, along with chromas and more

- Brawl Stars Brawl Cup Pro Pass arrives with the Dragon Crow skin and Chroma, unique cosmetics, and more rewards

- Clash of Clans April 2026 Gold Pass Season introduces a Archer Queen skin

- XO, Kitty season 3 soundtrack: The songs you may recognise from the Netflix show

- Gold Rate Forecast

2026-02-23 16:34