Author: Denis Avetisyan

A new technical curriculum aims to equip translators and communicators with the skills needed to navigate the rapidly evolving landscape of language-oriented artificial intelligence.

This paper details the development and evaluation of a curriculum focused on enhancing AI literacy and computational thinking among language and translation professionals, leveraging tools like Jupyter Notebooks and transformer networks.

Despite growing reliance on language-oriented artificial intelligence in professional contexts, a significant gap persists in the technical understanding of these technologies among language and translation (L&T) specialists. This paper details the development and evaluation of ‘A technical curriculum on language-oriented artificial intelligence in translation and specialised communication’, designed to bridge this gap by fostering AI literacy through hands-on engagement with core concepts like vector embeddings and transformer networks. Results from implementation in an MA course demonstrate the curriculum’s didactic effectiveness in enhancing computational thinking and algorithmic awareness. However, optimal learning appears contingent on robust didactic scaffolding – raising the question of how best to integrate such technical curricula into existing L&T educational frameworks to ensure sustained digital resilience.

The Evolving Landscape: LLMs and the Need for Informed Engagement

The emergence of Large Language Models (LLMs) signals a dramatic reshaping of language and translation technologies, driven by their capacity for unprecedented automation. These models, trained on massive datasets, can now generate human-quality text, translate languages with increasing accuracy, and even perform complex linguistic tasks like summarization and content creation with minimal human intervention. This isn’t simply an incremental improvement; LLMs offer the potential to automate workflows previously requiring significant human effort, impacting fields from customer service and content marketing to scientific research and education. The speed and scale at which these models operate promise substantial gains in efficiency and productivity, while simultaneously opening new avenues for innovation in how humans interact with and process information. However, realizing these benefits requires careful consideration of implementation strategies and a proactive approach to addressing potential challenges related to bias, accuracy, and ethical considerations.

The transformative power of Large Language Models extends only as far as the human capacity to harness them; simply possessing the technology is insufficient. Realizing the full benefits of LLMs demands a workforce proficient not only in utilizing these tools, but also in comprehending their underlying mechanics and limitations. This necessitates cultivating skills in prompt engineering, data analysis, and model evaluation, enabling professionals to effectively integrate LLMs into existing workflows and critically assess the accuracy and biases of generated outputs. Without this foundational understanding, organizations risk misinterpreting results, overlooking potential errors, and ultimately failing to maximize the return on investment in these increasingly sophisticated technologies. A workforce adept at both implementation and critical evaluation is, therefore, paramount to unlocking the true potential of LLMs and ensuring their responsible deployment.

The language technology industry is undergoing a rapid evolution, and sustained success increasingly depends on a workforce possessing technical AI literacy. This isn’t merely about understanding what Large Language Models can do, but grasping how they function, allowing for effective implementation, customization, and critical assessment of outputs. Recent training programs demonstrate a clear impact; stakeholders who receive targeted education in areas like prompt engineering, model evaluation metrics, and data curation consistently report improved project outcomes and increased efficiency. This suggests a fundamental shift is necessary – one where continuous learning and the cultivation of AI-specific technical skills are prioritized, enabling professionals to move beyond being users of the technology and become active shapers of its future within the language industry.

The Inner Workings: Neural Networks and Subword Tokenization

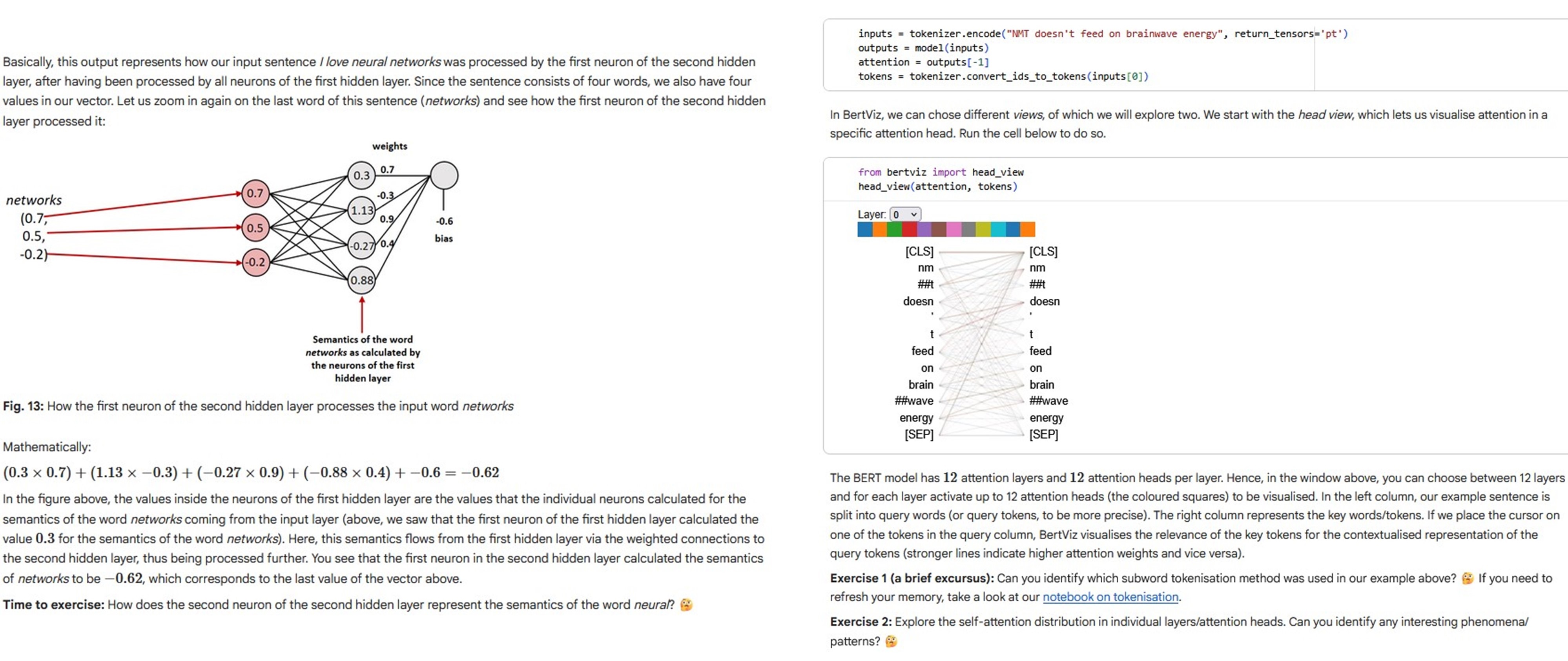

Large Language Models (LLMs) fundamentally rely on neural networks – interconnected layers of algorithms modeled after the human brain – to process and understand language. These networks learn by identifying statistical patterns within massive datasets of text and code. Input data is converted into numerical representations, or vectors, which are then propagated through the network’s layers. Each connection between nodes, or neurons, has an associated weight; these weights are adjusted during a training process to minimize prediction errors and improve the network’s ability to accurately model the relationships between words and concepts. The resulting network captures complex dependencies, enabling LLMs to perform tasks such as text generation, translation, and question answering based on the patterns learned from the data.

Subword tokenization addresses the challenges of representing large vocabularies in natural language processing models. Traditional word-based tokenization often results in excessively large vocabulary sizes, increasing computational costs and data sparsity. Subword techniques decompose words into smaller, frequently occurring units – such as morphemes or character n-grams – allowing models to represent a wider range of words with a smaller, more manageable vocabulary. This reduction in vocabulary size directly improves model performance by reducing the number of parameters, enhancing generalization to unseen words, and mitigating the impact of rare words, ultimately leading to more efficient training and inference.

Subword tokenization algorithms address the challenge of managing large vocabularies in natural language processing. Byte-Pair Encoding (BPE) iteratively merges the most frequent pairs of characters or tokens until a desired vocabulary size is reached, prioritizing compression. WordPiece, utilized in models like BERT, selects the token pair that maximizes the language model likelihood, focusing on statistical significance. Unigram, employed in models such as SentencePiece, uses a probabilistic approach, training a unigram language model and iteratively removing tokens that least affect the likelihood, enabling control over vocabulary size and offering flexibility in handling rare words. Each algorithm presents trade-offs; BPE is relatively simple but can create overly long tokens, WordPiece balances compression and model performance, and Unigram provides greater control but demands more computational resources during training.

Practical Tools: Jupyter Notebooks for Hands-on Learning

Jupyter Notebooks facilitate interactive learning and experimentation with Large Language Models (LLMs) and associated technologies through a browser-based interface. These notebooks combine live code, explanatory text utilizing Markdown, and optional visualizations into a single document. The environment supports multiple programming languages, most notably Python, which is prevalent in the AI/ML landscape. Hosting platforms such as Google Colaboratory eliminate the need for local environment setup, providing access to computational resources and pre-installed libraries. This accessibility lowers the barrier to entry for individuals seeking to understand and implement LLM-based solutions, enabling iterative development and immediate feedback on code execution.

Jupyter Notebooks facilitate a learning methodology integrating executable code, explanatory documentation, and data visualizations within a single environment. This combined approach enables users to not only implement AI and LLM techniques but also to simultaneously observe the process and understand the underlying principles. A recent study quantitatively supports this method, demonstrating that 80% of participants expressed strong agreement regarding the suitability of Jupyter Notebooks as an effective didactic instrument for technical learning.

Several initiatives – LT-LiDER, MultiTraiNMT, DataLitMT, and adaptMLLM – are actively providing resources and training materials designed to enhance technical AI literacy within the Learning and Teaching (L&T) sector. Evaluation of these programs indicates a statistically significant increase in participants’ self-assessed technical knowledge; pre-test results averaged 3.72 on an 11-point Likert scale, rising to a post-test mean of 6.76 (p < 0.001). This data suggests a demonstrable impact of these initiatives in upskilling professionals in the application of AI technologies within educational contexts.

Beyond Skill Acquisition: Agency, Resilience, and Interpretability

The pursuit of technical AI literacy extends beyond mere skill acquisition; it fundamentally centers on cultivating algorithmic agency – the empowering capacity to not only comprehend how algorithmic systems function, but also to actively influence them. This isn’t simply about understanding code, but about developing a critical awareness of the biases, assumptions, and potential impacts embedded within these systems. Individuals with algorithmic agency can move beyond being passive recipients of algorithmic outputs and instead become informed participants, capable of questioning, interpreting, and even reshaping these technologies to better align with their values and needs. This proactive engagement is crucial for ensuring that AI serves as a tool for empowerment, rather than a source of opaque control, and it necessitates fostering a skillset that blends technical knowledge with critical thinking and ethical considerations.

The capacity for stakeholders to meaningfully interact with and shape artificial intelligence hinges not only on technical skills, but also on a robust foundation of computational thinking and digital resilience. These interwoven capabilities enable a critical evaluation of AI solutions, moving beyond passive acceptance to informed questioning of algorithmic logic and potential biases. When stakeholders possess this skillset, they are better equipped to responsibly deploy AI, understanding its limitations and anticipating unintended consequences. This proactive approach fosters innovation grounded in ethical considerations and ensures AI serves as a tool for empowerment rather than a source of opaque control, ultimately driving more effective and trustworthy implementations across diverse applications.

Explainable AI is paramount to building reliable and responsible technological systems, and recent studies demonstrate a substantial capacity for improvement in public understanding. Participants undergoing training exhibited a marked increase in knowledge – shifting from a pre-test average of 2.93 to a post-test average of 6.76 – indicating a significant gain in comprehension and confidence regarding these complex algorithms. This change is not merely incremental; the observed effect size of 1.60 represents a very large impact, suggesting that focused initiatives to demystify AI can effectively foster trust and accountability by ensuring greater transparency in how these systems operate and make decisions.

The curriculum detailed herein prioritizes a focused understanding of Large Language Models and their application to specialized communication. It seeks not to overwhelm with extraneous detail, but to distill core principles for practical application. This approach echoes Alan Turing’s sentiment: “The question is not whether a machine can think, but whether a machine can do.” The emphasis isn’t on replicating human intelligence in its entirety, but on leveraging computational tools to achieve specific, measurable outcomes – in this case, enhanced AI literacy and improved competency in language-oriented AI technologies for L&T professionals. The removal of unnecessary complexity allows for a clearer grasp of the underlying mechanisms and fosters genuine digital resilience.

Where To Next?

This curriculum addresses a symptom, not the disease. Digital resilience isn’t built with Jupyter Notebooks, but with critical thought. The field fixates on how these models function, while neglecting why they are deployed. Abstractions age, principles don’t. A deeper understanding of computational thinking – of algorithmic bias, of data provenance – remains paramount.

Evaluation focused on demonstrable skill. But true literacy isn’t a checklist. It’s a questioning stance. Future work must move beyond technical proficiency and explore ethical implications. Every complexity needs an alibi. The current emphasis on ‘AI literacy’ risks becoming mere tool usage, obscuring the larger questions of agency and responsibility in automated communication.

The focus now shifts to longitudinal studies. Can this curriculum foster sustained critical engagement? Or will these skills atrophy, becoming another forgotten layer in the rapidly evolving tech stack? The task isn’t to teach professionals about AI, but to equip them to critically assess its role in their fields, long after the current large language models are obsolete.

Original article: https://arxiv.org/pdf/2602.12251.pdf

Contact the author: https://www.linkedin.com/in/avetisyan/

See also:

- Invincible Season 4 Episode 4 Release Date, Time, Where to Watch

- Physics Proved by AI: A New Era for Automated Reasoning

- How Martin Clunes has been supported by TV power player wife Philippa Braithwaite and their anti-nepo baby daughter after escaping a ‘rotten marriage’

- CookieRun: OvenSmash coupon codes and how to use them (March 2026)

- Goddess of Victory: NIKKE 2×2 LOVE Mini Game: How to Play, Rewards, and other details

- Total Football free codes and how to redeem them (March 2026)

- American Idol vet Caleb Flynn in solitary confinement after being charged for allegedly murdering wife

- Nicole Kidman and Jamie Lee Curtis elevate new crime drama Scarpetta, which is streaming now

- “Wild, brilliant, emotional”: 10 best dynasty drama series to watch on BBC, ITV, Netflix and more

- Roco Kingdom: World China beta turns chaotic for unexpected semi-nudity as players run around undressed

2026-02-14 18:32