Author: Denis Avetisyan

Researchers have developed an AI agent capable of autonomously formulating and solving multi-physics problems by reasoning about known physical laws, leading to more accurate and reliable simulations.

![A fully coupled simulation, in which an autonomous mechanism activates when low Deborah numbers (De) are detected, stabilized the effective stress path and avoided a falsely predicted rock fracture, arresting the stress reduction at [latex] p^{\prime}=8.9 \text{ MPa} [/latex]. A model constrained to undrained conditions instead drove the path directly into the failure zone, demonstrating the essential role of diffusive dissipation in preserving qualitative physical correctness.](https://arxiv.org/html/2603.09756v1/phase3_stress_path_comparison.png)

This work introduces a neuro-symbolic framework for autonomous mechanism completion, enabling physically consistent multi-physics simulation by extracting and applying constitutive models.

Current approaches to integrating large language models into scientific discovery struggle with the unstated assumptions embedded within retrieved physical laws, often leading to physically unrealistic simulations. In ‘Epistemic Closure: Autonomous Mechanism Completion for Physically Consistent Simulation’, we present a neuro-symbolic generative agent that autonomously validates and completes physical mechanisms by reasoning about these underlying assumptions, preventing ‘physical hallucinations’. This agent achieves improved accuracy by encapsulating physical laws into modular skills and employing dimensionless scaling analysis, as demonstrated in the context of thermal pressurization in porous media. Could this paradigm shift enable AI to function not merely as a coding assistant, but as a true epistemic partner in scientific investigation?

The Inevitable Complexity of Multi-Physics Modeling

Historically, creating simulations that combine multiple physical phenomena – such as fluid dynamics with heat transfer or electromagnetism with structural mechanics – has been a painstakingly manual process. Engineers and scientists must individually define the governing equations for each physics domain, then meticulously couple them, specifying how information is exchanged between each model. This requires not only deep expertise in each individual field, but also a significant investment of time and effort in parameterization – tuning the models to accurately reflect real-world conditions. Consequently, exploring a wide range of design options, or rapidly iterating on a prototype, is often severely limited by the sheer computational and human resources required to formulate and validate each new simulation. The demand for increasingly complex, multi-faceted designs highlights the critical need for automated and streamlined approaches to multi-physics modeling.

Simulating systems governed by multiple interacting physical phenomena, such as fluid dynamics coupled with structural mechanics or electromagnetics with heat transfer, presents a significant computational hurdle. Accurately representing these interactions requires solving complex sets of coupled equations, often demanding highly specialized numerical methods and considerable expertise in each individual physics domain. This intricacy isn’t merely theoretical; the computational cost escalates rapidly with model fidelity and complexity, frequently necessitating high-performance computing resources and lengthy simulation times. Consequently, detailed multi-physics modeling can become prohibitively expensive, limiting the scope of investigations and hindering the rapid exploration of potential designs or operational scenarios. The need for efficient and robust coupling algorithms remains a central challenge in advancing predictive capabilities across diverse scientific and engineering disciplines.

Current multi-physics modeling techniques often falter when confronted with real-world complexity, particularly when the governing physical laws are not fully understood or are subject to change. Traditional approaches assume a fixed and known physical model, making it difficult to accommodate scenarios requiring adaptive refinement based on incoming data. This limitation is critical in fields like materials science, where material properties evolve during processing, or in climate modeling, where feedback loops and incomplete knowledge of atmospheric processes necessitate continuous calibration. Consequently, simulations may diverge from reality, demanding substantial manual intervention and hindering predictive accuracy. Innovative strategies are needed that enable models to learn and adapt from observational data, effectively bridging the gap between theoretical predictions and complex, evolving systems.

A Pragmatic Approach: Neuro-Symbolic Generative Agents

The Neuro-Symbolic Generative Agent is a computational framework designed for the autonomous formulation and solution of complex multi-physics problems. It utilizes Large Language Models (LLMs) as the core reasoning engine, enabling the agent to translate problem descriptions into executable simulation models without requiring explicit programming. This is achieved by integrating the LLM with a knowledge base of pre-defined physics principles and numerical methods. The agent’s functionality encompasses problem decomposition, model selection, parameter identification, simulation execution, and result analysis, all performed through LLM-driven reasoning and knowledge retrieval. The system aims to reduce the need for manual intervention in the simulation pipeline and facilitate rapid prototyping of solutions for complex scientific and engineering challenges.

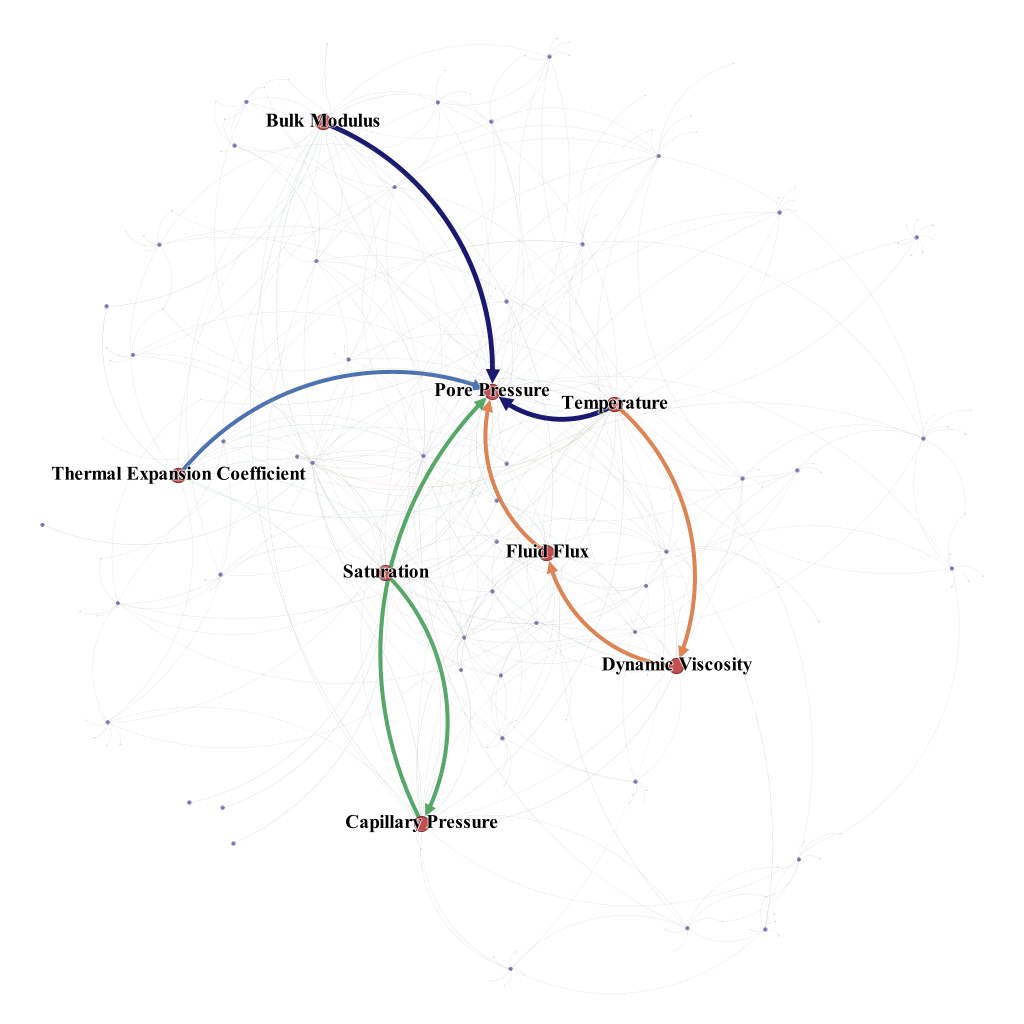

The Neuro-Symbolic Generative Agent utilizes ‘Constitutive Skills’ – formalized representations of scientific principles and relationships – to provide a structured knowledge base for the Large Language Model (LLM). These skills are not simply data points, but rather executable components that define physical laws, material properties, and boundary conditions. By integrating these skills with the LLM’s reasoning capabilities, the agent can autonomously construct simulation models, starting from a problem description. The LLM leverages the constitutive skills to determine appropriate model parameters, select relevant equations, and define the simulation setup. Furthermore, the agent can refine these models iteratively, using simulation results to adjust parameters or modify the model structure based on the encoded scientific knowledge within the constitutive skills.
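One way to picture a constitutive skill is as a law bundled with the assumption it silently depends on, so that the assumption can be checked before the law is applied. The sketch below is illustrative only: the class `ConstitutiveSkill`, its fields, the undrained pressurization relation `dp = Lambda * dT`, the numerical values, and the threshold `De > 10` are all assumptions made for this example and are not taken from the paper.

```python
from dataclasses import dataclass
from typing import Callable, Dict

State = Dict[str, float]


@dataclass(frozen=True)
class ConstitutiveSkill:
    """Hypothetical container: a physical law plus the assumption it relies on."""
    name: str
    law: Callable[[State], float]
    assumption: str
    is_valid: Callable[[State], bool]

    def apply(self, state: State) -> float:
        # Refuse to evaluate the law outside its stated validity range.
        if not self.is_valid(state):
            raise ValueError(f"{self.name}: assumption violated ({self.assumption})")
        return self.law(state)


# Illustrative skill: undrained thermal pressurization, dp = Lambda * dT.
# The validity threshold De > 10 is an assumed stand-in for "De >> 1".
undrained_pressurization = ConstitutiveSkill(
    name="undrained_thermal_pressurization",
    law=lambda s: s["Lambda_MPa_per_K"] * s["dT_K"],
    assumption="no fluid drainage during heating (De >> 1)",
    is_valid=lambda s: s["De"] > 10.0,
)

# Made-up inputs: the law applies when De is large...
fast_heating = {"Lambda_MPa_per_K": 0.5, "dT_K": 20.0, "De": 100.0}
pressure_rise = undrained_pressurization.apply(fast_heating)

# ...and is rejected when De is small, instead of returning a wrong number.
slow_heating = {"Lambda_MPa_per_K": 0.5, "dT_K": 20.0, "De": 0.01}
try:
    undrained_pressurization.apply(slow_heating)
    rejected = False
except ValueError:
    rejected = True
```

Keeping the validity check next to the law means a retrieved equation cannot be reused quietly in a regime where its assumption fails.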

Retrieval-Augmented Generation (RAG) is implemented to provide the Neuro-Symbolic Generative Agent with access to a knowledge base of physics principles, material properties, and simulation techniques. This process involves retrieving relevant information from the knowledge base based on the current problem context and incorporating it into the Large Language Model’s prompt. By grounding the LLM in established scientific knowledge, RAG reduces the likelihood of hallucinated or inaccurate simulation setups. The system dynamically queries the knowledge base throughout the simulation process, allowing it to adapt and refine the model without requiring explicit, manual input, thereby minimizing the need for human intervention in both model creation and problem-solving.

![This neuro-symbolic generative agent improves physical simulation by extracting governing equations from scientific literature and employing deductive pruning and inductive completion, guided by dimensionless scaling analysis using the Deborah number [latex]De[/latex], to avoid physically implausible outcomes, as demonstrated by its ability to correctly predict stable rock behavior under heat where a literature-only model hallucinates fracture.](https://arxiv.org/html/2603.09756v1/fig1_workflow.png)

Validation Through Rigorous Analysis: Beyond Simple Accuracy

Dimensionless scaling analysis serves as a crucial step in model validation by reducing the number of required simulations and ensuring computational efficiency. This technique involves identifying the relevant physical parameters and formulating dimensionless groups – ratios of competing physical effects or time scales – which characterize the system’s behavior. By analyzing these dimensionless numbers, researchers can determine the dominant regimes and confirm the appropriateness of simplifying assumptions made within the simulation. This process effectively narrows the scope of necessary calculations, eliminating scenarios where assumptions are invalid or where certain physical effects are negligible, thereby reducing computational cost and improving the accuracy of results. The process also helps to ensure that the simulation accurately represents the physical system being modeled, preventing spurious results arising from incorrect assumptions or irrelevant calculations.

The agent’s dimensionless analysis centers on the Deborah number, the ratio of a characteristic relaxation time of the pore fluid to the characteristic time scale of the loading. The agent determined a Deborah number in the range 10⁻³ to 10⁻². Because [latex]De \ll 1[/latex], the system operates in a ‘Drained’ regime: pore pressure relaxes by diffusion much faster than the loading can build it up, so diffusive (Darcy) dissipation dominates. Consequently, the undrained simplification does not hold in this parameter space, and the diffusive dissipation term has to be retained in the model to keep the prediction physically consistent.
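The regime check can be sketched in a few lines. This is an illustration under stated assumptions, not the paper's implementation: the relaxation time is taken to be a pore-pressure diffusion time `L**2 / c`, the function names are hypothetical, the regime thresholds (0.1 and 10) are arbitrary stand-ins for "much less than" and "much greater than" one, and the input values are made up to land near the reported range.

```python
def deborah_number(length_m: float, diffusivity_m2_s: float, loading_time_s: float) -> float:
    """De = (relaxation time) / (loading time), with relaxation time assumed to be L^2 / c."""
    diffusion_time_s = length_m ** 2 / diffusivity_m2_s
    return diffusion_time_s / loading_time_s


def classify_regime(de: float, lower: float = 0.1, upper: float = 10.0) -> str:
    """Map De to a drainage regime; the thresholds are assumed, not from the paper."""
    if de < lower:
        return "drained"       # diffusion outpaces loading
    if de > upper:
        return "undrained"     # loading outpaces diffusion
    return "transitional"


# Made-up inputs: 1 cm drainage length, diffusivity 1e-5 m^2/s, 1000 s of heating.
de = deborah_number(length_m=0.01, diffusivity_m2_s=1e-5, loading_time_s=1000.0)
regime = classify_regime(de)
```

With these inputs `de` comes out at roughly 10⁻² and the regime is classified as drained.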

The implemented analysis framework incorporates diagnostic criteria to identify conditions where the Undrained Condition, a common simplification in which the pore fluid is assumed not to drain during loading, is no longer valid. This is achieved by monitoring key parameters and comparing them against established thresholds; deviations indicate the presence of significant dissipation or other effects that necessitate a fully coupled, time-dependent solution. Specifically, the framework assesses the relative magnitude of transient and steady-state terms in the governing equations; if transient terms become dominant, the Undrained Condition is flagged as invalid, and the simulation proceeds with a more comprehensive, computationally intensive model to accurately represent the fluid’s behavior. This adaptive approach reserves the more expensive coupled model for cases where the simplification would compromise accuracy.

The simulation utilizes the Finite Element Method (FEM) as its primary numerical solver due to its capacity to handle complex geometries and material properties. To address the computational demands arising from coupled equations – specifically those governing fluid flow and structural mechanics – the system implements Operator Splitting. This technique decomposes the problem into smaller, more manageable steps, solving each individually before recombining the results. This approach improves computational efficiency and stability by avoiding the simultaneous solution of a large, coupled system of equations, thereby reducing both processing time and memory requirements. The FEM, combined with Operator Splitting, allows for a robust and scalable solution to the modeled physical phenomena.
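The splitting idea can be illustrated on a toy one-dimensional pore-pressure equation, `dp/dt = c * d2p/dx2 + s`, where `s` stands in for heating-driven pressurization. This sketch makes several assumptions that are not in the source: it uses explicit finite differences rather than FEM, sequential (Lie) splitting, nondimensional made-up parameters, and zero-pressure boundaries. Setting the diffusivity to zero mimics the undrained limit.

```python
import numpy as np


def split_step(p, dt, dx, diffusivity, source_rate):
    """Advance pore pressure one step with sequential (Lie) operator splitting."""
    # Sub-step 1: source only (pressurization from heating, no drainage).
    p = p + source_rate * dt
    # Sub-step 2: diffusion only (drainage), explicit central differences.
    laplacian = np.zeros_like(p)
    laplacian[1:-1] = (p[2:] - 2.0 * p[1:-1] + p[:-2]) / dx ** 2
    p = p + diffusivity * dt * laplacian
    # Drained boundaries: pore pressure held at zero on both ends.
    p[0] = 0.0
    p[-1] = 0.0
    return p


def peak_pressure(diffusivity, n=21, length=1.0, dt=1e-3, steps=1000, source_rate=1.0):
    """Run the split scheme and return the maximum pore pressure at the end."""
    dx = length / (n - 1)
    # The explicit diffusion sub-step is only stable for c * dt / dx^2 <= 1/2.
    if diffusivity * dt / dx ** 2 > 0.5:
        raise ValueError("time step too large for the explicit diffusion sub-step")
    p = np.zeros(n)
    for _ in range(steps):
        p = split_step(p, dt, dx, diffusivity, source_rate)
    return float(p.max())


undrained_peak = peak_pressure(diffusivity=0.0)  # pressure grows without bound in time
coupled_peak = peak_pressure(diffusivity=1.0)    # drainage caps the pressure build-up
```

In this toy setting the run without diffusion accumulates pressure linearly in time, while the coupled run levels off at a much lower peak, which is the qualitative contrast the article draws between the undrained and fully coupled models.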

![Analysis of the Deborah number reveals the system operates in a drained regime ([latex]De \ll 1[/latex]) due to fluid diffusion dominance, prompting the agent to incorporate intrinsic dissipation and dynamically modulate Darcy flow based on competing effects of viscosity reduction and storage capacity changes.](https://arxiv.org/html/2603.09756v1/phase3_deborah_number.png)

Beyond Porous Media: A Platform for Broad Applicability

The Neuro-Symbolic Generative Agent showcases a novel capacity for simulating fluid flow within complex porous materials, effectively merging data-driven learning with established physics principles. This framework doesn’t merely approximate behavior; it explicitly incorporates both Darcy’s Law, which governs fluid transport, and the influence of thermal effects on that flow. By learning the underlying relationships between these physical laws and observed data, the Agent accurately predicts how fluids move through materials like soil, rock, or even biological tissues, accounting for variations in permeability, viscosity, and temperature gradients. This integration of symbolic knowledge and neural networks enables robust and physically consistent simulations, offering a powerful tool for applications ranging from groundwater modeling to optimizing enhanced oil recovery and understanding heat dissipation in geological formations.

The Neuro-Symbolic Generative Agent’s architecture is fundamentally designed for broad applicability, stemming from its core reliance on ‘Constitutive Skills’ – modular representations of physical laws. These skills are not hardcoded, but rather learned and combined, allowing the agent to reason about physics in a flexible manner. Crucially, this framework can be extended to model more complex phenomena; for instance, incorporating Jurin’s Law enables accurate simulation of capillary effects within porous media, significantly enhancing its predictive power in scenarios like groundwater flow or oil recovery. This extensibility isn’t limited to fluid dynamics; the modular nature of these constitutive skills paves the way for adaptation to diverse physical systems, encompassing areas like heat transfer, structural mechanics, and electromagnetics, suggesting a unified approach to multi-physics problem-solving.

Traditionally, constructing accurate simulations of complex physical phenomena demands substantial time and specialized knowledge in both the governing physics and numerical methods. However, this new framework offers a pathway to drastically reduce that burden. By automating model development and parameterization, the process is streamlined, allowing researchers to focus on interpreting results rather than meticulously crafting the underlying simulation. This acceleration isn’t merely about speed; it unlocks the potential for broader exploration of parameter spaces and more rapid iteration on scientific hypotheses. Consequently, discovery cycles are shortened, and the framework empowers a wider range of scientists to tackle previously inaccessible multi-physics problems, ultimately fostering innovation across diverse fields.

The Neuro-Symbolic Generative Agent demonstrated a crucial advancement in predicting the mechanical stability of porous media by accurately modeling Darcy dissipation – the energy loss due to fluid flow within the material. This capability allowed the Agent to calculate an effective stress at equilibrium of 8.9 MPa, a result significantly diverging from the 0 MPa prediction of a simpler, naive model. This discrepancy highlights the importance of accounting for fluid-solid interactions; the naive model predicted material failure, while the Agent’s accurate dissipation calculation indicated structural integrity. The successful prediction isn’t merely a numerical refinement; it represents a fundamental improvement in understanding how fluids contribute to the overall stress state within porous materials, paving the way for more reliable simulations and designs in fields like subsurface engineering and materials science.

The Neuro-Symbolic Generative Agent’s architecture isn’t limited to the intricacies of fluid flow within porous materials; its foundation in composable, physics-informed ‘Constitutive Skills’ unlocks a surprisingly broad applicability. This framework can be readily adapted to model a diverse range of multi-physics problems, moving beyond Darcy’s Law and thermal effects to encompass heat transfer phenomena, the stresses and strains of structural mechanics, and even the interactions governing electromagnetics. By learning and integrating fundamental physical principles, the Agent transcends the boundaries of a single application, offering a unified platform for automated model development and predictive simulation across traditionally disparate scientific domains. This adaptability promises to significantly accelerate research in fields requiring complex, multi-faceted modeling, paving the way for rapid prototyping and insightful discoveries.

![The agent accurately replicates both capillary-driven fluid rise, validated against Jurin’s Law [latex]H=29.68[/latex] cm, and experimental pore pressure evolution under undrained heating, confirming the correct instantiation of key constitutive relationships before autonomous pruning and intrinsic completion.](https://arxiv.org/html/2603.09756v1/fig3_page-0001.jpg)
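For readers unfamiliar with Jurin’s Law, the capillary rise in a narrow tube is `h = 2 * gamma * cos(theta) / (rho * g * r)`. The sketch below evaluates it for one set of inputs. The inputs are assumptions chosen for illustration (water-like surface tension of 0.0728 N/m, a perfectly wetting contact angle, density 1000 kg/m³, and a 50 µm tube radius); the article does not state which values the agent used, only the resulting height.

```python
import math


def jurin_height(surface_tension_n_m: float, contact_angle_rad: float,
                 density_kg_m3: float, radius_m: float, g: float = 9.81) -> float:
    """Capillary rise height in metres from Jurin's Law."""
    return (2.0 * surface_tension_n_m * math.cos(contact_angle_rad)
            / (density_kg_m3 * g * radius_m))


# Assumed inputs (not given in the article): water-like fluid in a 50 micron tube.
height_m = jurin_height(
    surface_tension_n_m=0.0728,
    contact_angle_rad=0.0,
    density_kg_m3=1000.0,
    radius_m=50e-6,
)
height_cm = height_m * 100.0
```

With these assumed inputs the height comes out at about 29.68 cm, matching the value quoted in the caption, although that agreement does not confirm the agent used the same parameters.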

The pursuit of autonomous problem solving, as detailed in this paper, feels… familiar. It reminds one of David Hilbert’s assertion: “We must be able to answer every well-defined mathematical question by a finite number of operations.” Of course, the devil’s in the ‘well-defined’ part, isn’t it? This ‘Neuro-Symbolic Generative Agent’ attempts to sidestep ‘physical hallucinations’ by grounding simulations in extracted physical laws. Laudable, certainly. But the system will inevitably encounter edge cases, novel scenarios the literature didn’t anticipate. It’ll start patching, approximating, and before anyone notices, what began as elegant reasoning about multi-physics will resemble a sprawling kludge. They’ll call it AI and raise funding, naturally.

The Long Tail of Consistency

The pursuit of ‘physical hallucination’ mitigation feels less like an engineering problem solved, and more like pushing the boundaries of what constitutes a tolerable error. This work correctly identifies the brittle core of many simulations: the implicit assumptions baked into constitutive models. Yet, each elegantly closed equation is merely a local minimum in a vast, multi-dimensional space of potential failure modes. Production environments will inevitably reveal corner cases where even ‘dimensionless scaling’ proves insufficient to mask fundamental inconsistencies. The bug tracker, already overflowing, will soon house a new taxonomy of physical implausibility.

The framing of this as ‘autonomous mechanism completion’ carries a particular irony. Agency, in this context, isn’t creation; it’s the diligent application of pre-existing knowledge. The real challenge isn’t building agents that can solve physics problems, but agents that can gracefully degrade when faced with incomplete or contradictory information. The current focus on literature-derived laws seems optimistic; the volume of undocumented, tacit knowledge embedded within engineering practice is orders of magnitude larger.

It is tempting to believe that better simulation equates to better understanding. The reality, however, is often the inverse. Each increase in fidelity simply reveals new layers of complexity, and new opportunities for things to go wrong. The system doesn’t deploy; it lets go.

Original article: https://arxiv.org/pdf/2603.09756.pdf

Contact the author: https://www.linkedin.com/in/avetisyan/

2026-03-11 17:42